universe

Everything you ever wanted to know about the Universe, explained by physicist Sean Carroll.

A new model addresses a longstanding problem: where do quasars get the fuel they need to outshine entire galaxies?

What was the universe like one-trillionth of a second after the Big Bang? Science has an answer.

The eastern inner core located beneath Indonesia’s Banda Sea is growing faster than the western side beneath Brazil.

The great theoretical physicist Steven Weinberg passed away on July 23. This is our tribute.

Scientists do not know what is causing the overabundance of the gas.

A new artificial intelligence method removes the effect of gravity on cosmic images, showing the real shapes of distant galaxies.

If the laws of physics are symmetrical as we think they are, then the Big Bang should have created matter and antimatter in the same amount.

Astronomers find a third type of supernova and explain a mystery from 1054 AD.

An analysis of the gravitational wave data from black hole mergers shows that the event horizon area, and entropy, always increases.

Researchers discovered a galactic wind from a supermassive black hole that sheds light on the evolution of galaxies.

Every star we can see, including our sun, was born in one of these violent clouds.

Asking science to determine what happened before time began is like asking, “Who were you before you were born?”

Astronomers possibly solve the mystery of how the enormous Oort cloud, with over 100 billion comet-like objects, was formed.

Can one equation unite all of physics?

Determining if the universe is infinite pushes the limits of our knowledge.

A new paper reveals that the Voyager 1 spacecraft detected a constant hum coming from outside our Solar System.

The research suggests that roughly 1 percent of galaxy clusters look atypical and can be easily misidentified.

Differences in the way the Hubble constant, which measures the rate of cosmic expansion, is measured have profound implications for the future of cosmology.

New studies find the interstellar comet 2I/Borisov is the most “pristine” ever discovered.

Researchers propose a new method that could definitively prove the existence of dark matter.

Can spacekime help us make headway on some of the most pernicious inconsistencies in physics?

Results from an experiment using the Large Hadron Collider challenge the accepted model of physics.

Two new studies examine ways we could engineer human wormhole travel.

Some scientists believe the lightning-produced frequencies may be connected to our brain waves, meditation, and hypnosis.

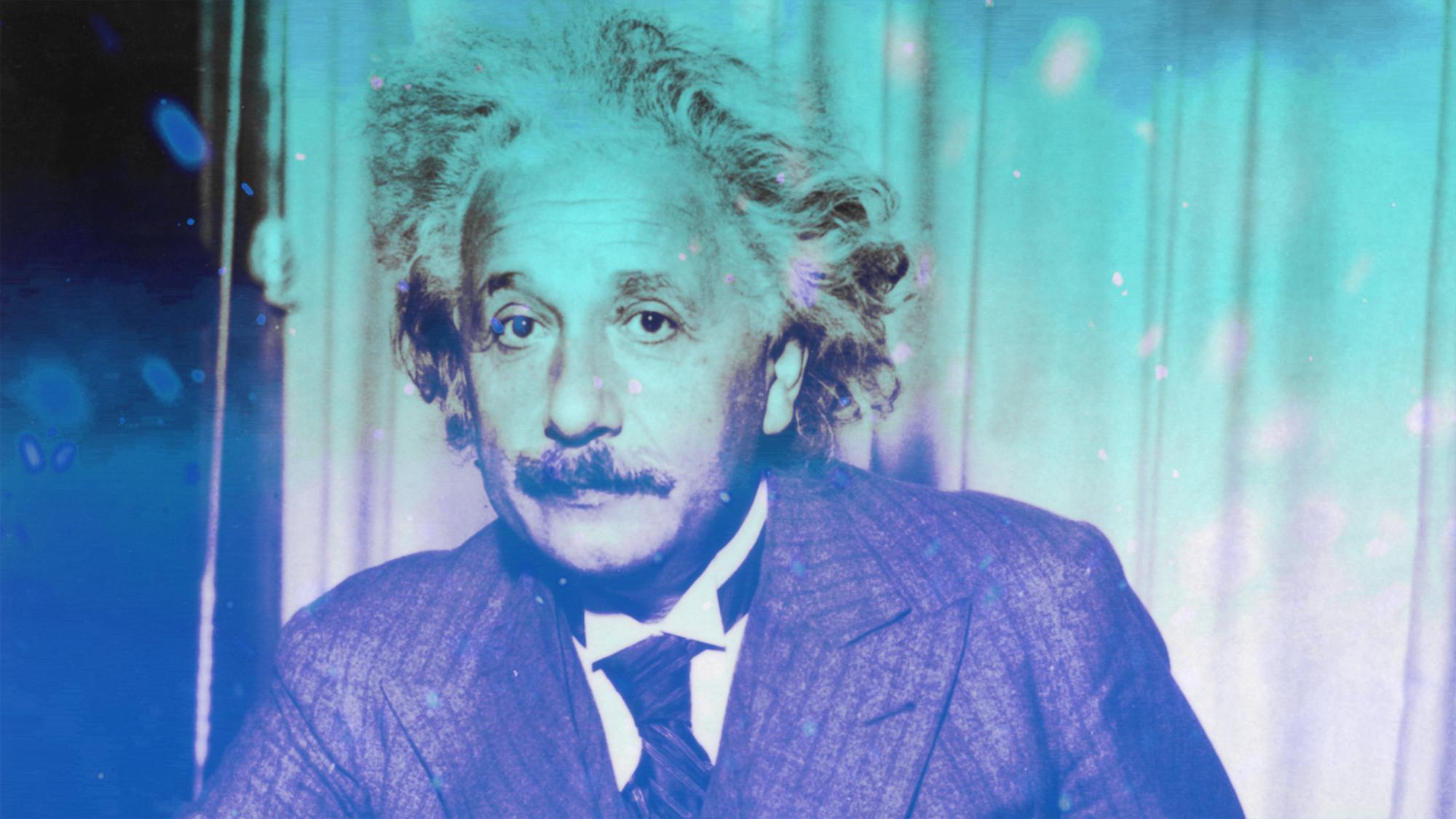

Here’s what Einstein meant when he spoke of cosmic dice and the “secrets of the Ancient One.”

NASA astronomer Michelle Thaller is coming back to Big Think’s studio soon to answer YOUR questions! Here’s all you need to know to submit your science-related inquiries.

The controversy over the universe’s expansion rate continues with a new, faster estimate.

The rock, found in the Sahara, likely comes from a long-lost baby protoplanet.

A physicist creates an AI algorithm that predicts natural events and may prove the simulation hypothesis.