sight

Nearly 90% of the world’s blind live in low-income countries.

People who go ballistic over other people’s eating sounds aren’t just cranky — they have misophonia.

It uses radio waves to pinpoint items, even when they’re hidden from view.

We can’t ask them, so scientists have devised an experiment.

Scientists observe how the halves of the brain keep us informed about everything everywhere.

People of all ages, even small children, notice fractal patterns, which have significant calming effects.

Certain colors are linked to certain feelings across the globe, a study reveals.

The area of the brain that recognizes letters and words is ready for action right from the start.

A large-scale study from King’s College London explores the link between genetics and sun-seeking behaviors.

A study uses sugar water experiments to show that hummingbirds can see colors invisible to us.

A new study enhanced color vision for individuals with the most common type of red-green color blindness.

Recent studies suggest virtual reality porn can produce a more positive experience than viewing from a monitor or screen.

Why finding joy is easier than pursuing happiness.

Take the circumstances in your life seriously, but not literally. Here’s why.

They’re made from stretchy, electroactive polymer films.

Few could match the famous physicist in his ability to communicate difficult-to-understand concepts in a simple and warm fashion.

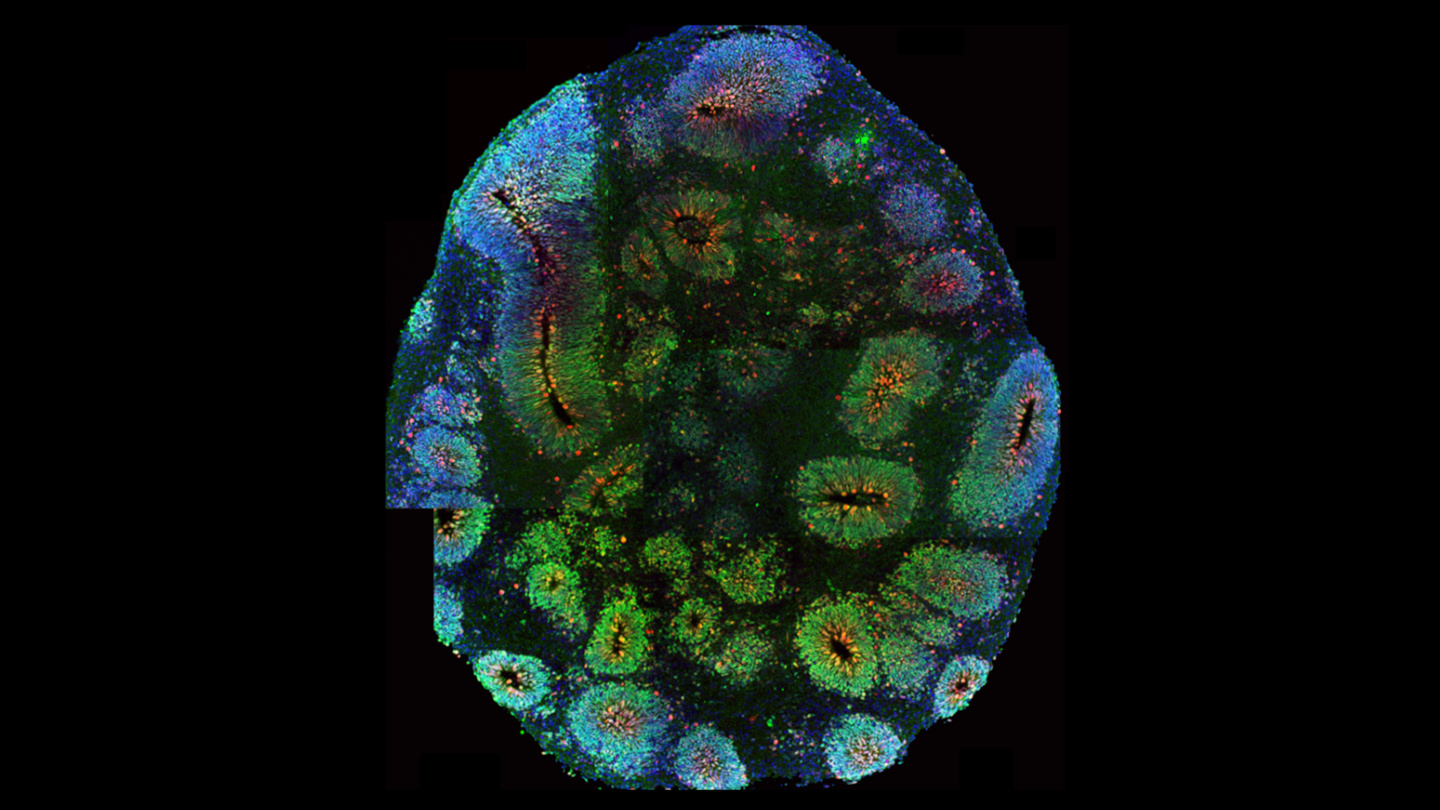

A mini-brain grown using a new process develops retinal cells.

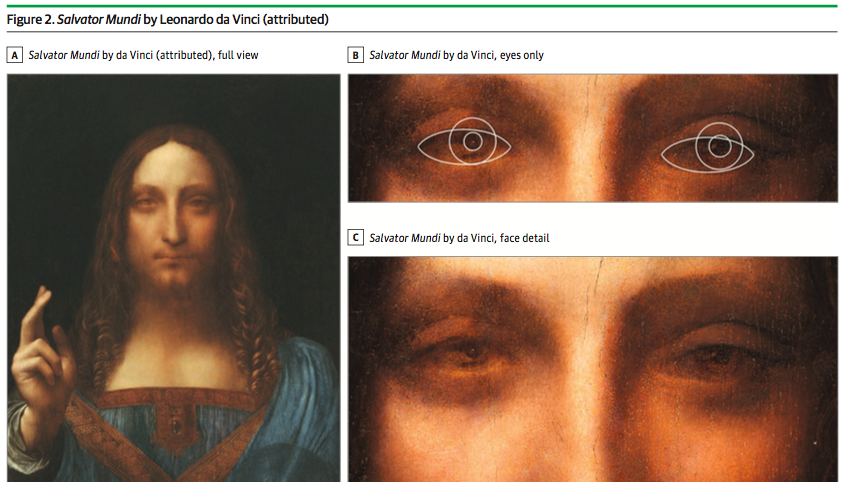

A neuroscientist argues that da Vinci shared a disorder with Picasso and Rembrandt.

A joint study from U.S. and U.K. universities shows promising results in reducing the rate of cognitive decline.

America’s most popular conspiracy theories and the science behind them.