prediction

The way we imagine and listen to melodies sheds light on imagination.

New machine-learning algorithms from Columbia University detect cognitive impairment in older drivers.

Can spacekime help us make headway on some of the most pernicious inconsistencies in physics?

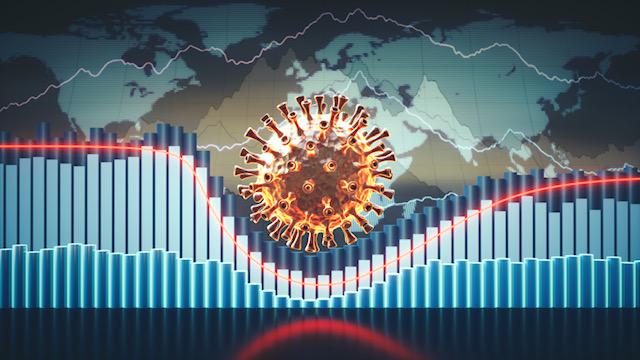

Northwell Health is using insights from website traffic to forecast COVID-19 hospitalizations two weeks in the future.

What lies in store for humanity? Theoretical physicist Michio Kaku explains how different life will be for your descendants—and maybe your future self, if the timing works out.

It’s hard to stop looking back and forth between these faces and the busts they came from.

Math doesn’t suck. It is one of humanity’s greatest and most mysterious journeys.

Astrophysicists calculate the likely number of civilizations out there capable of communicating with us.

It looks like a busy hurricane season ahead. Probably.

Researchers devise an effective new predictive tool for maritime first-responders.

Should humans fear artificial intelligence or welcome it into our lives?

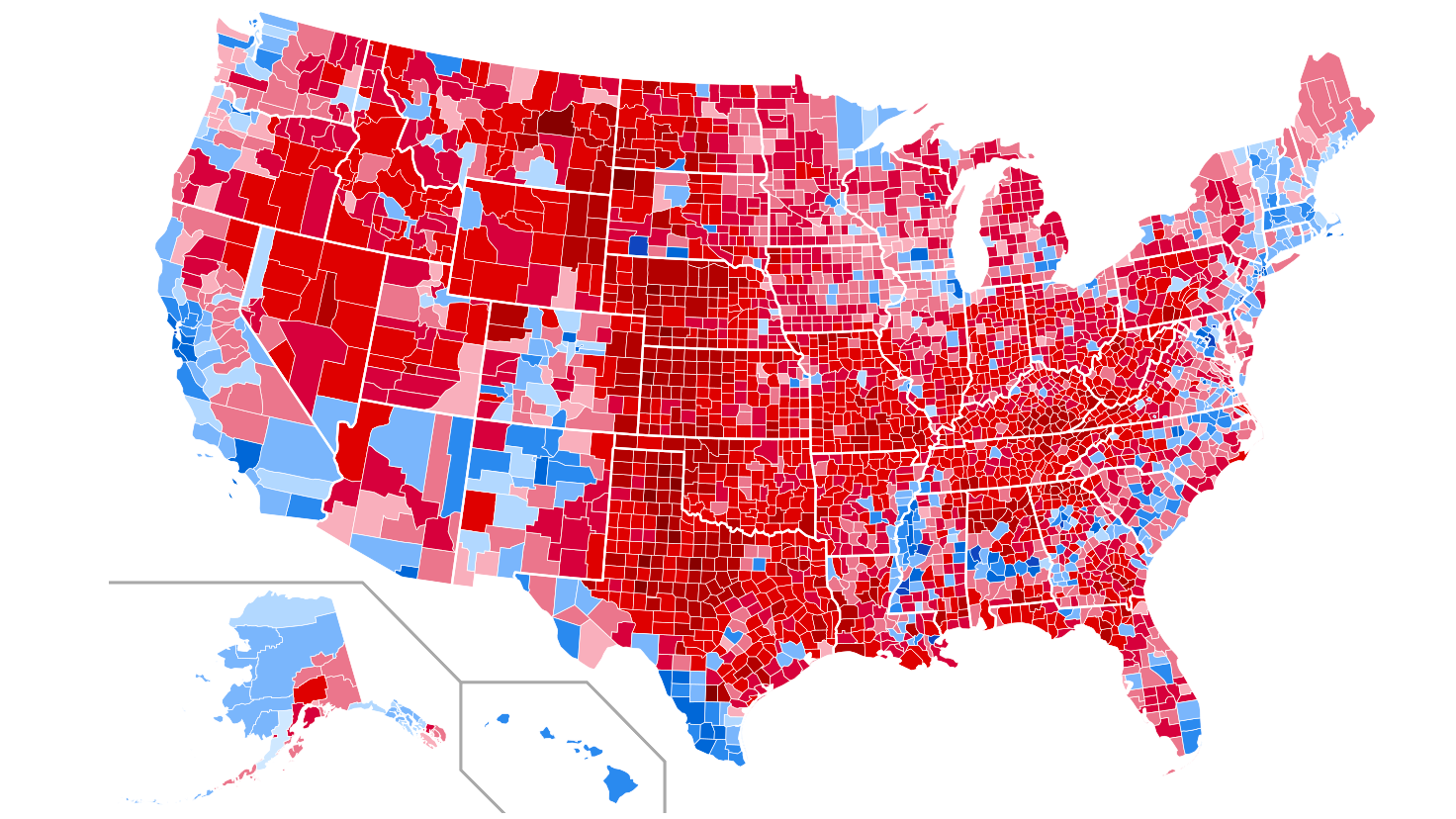

Everyone wants to predict who will win the 2020 presidential election. Here are 2 misconceptions to bust so people don’t proclaim the death of data like they did in 2016.

These are the top advances in technology that will impact the world in the coming decade.

The power to predict the next revolution keeps companies on top.

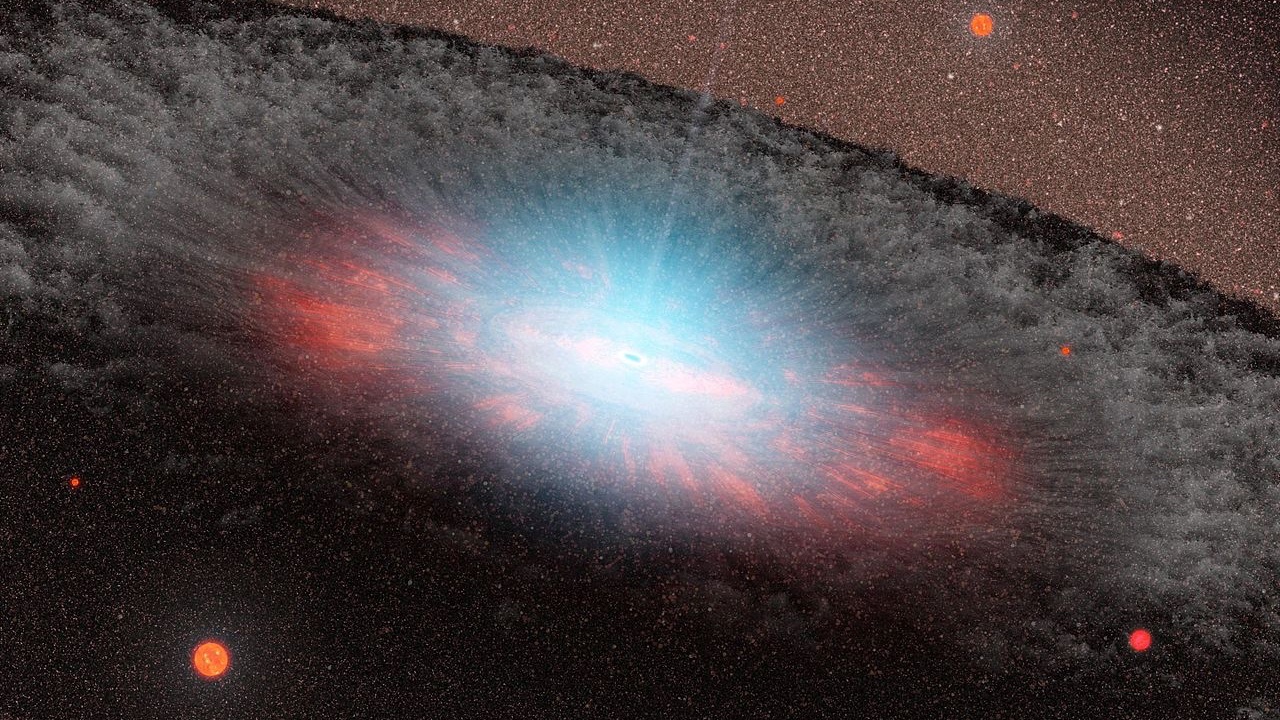

A new paper suggests a primordial black hole may be making things weird at the edge of our solar system.

A new report sees a major disruption in where we get our food.

Someday we’ll beam to the moon for afternoon tea, and be back in New York for dinner.

Soon we’ll be able to blink and instantly go online via computer chips attached to our eyes.

One of Stephen Hawking’s predictions seems to have been borne out in a man-made “black hole.”

Some have suggested that there is no hidden giant out there.

Lasers could cut the lifespan of nuclear waste from “a million years to 30 minutes,” says a Nobel laureate.

Physicist plans to karate-chop them with super-fast blasts of light.

At high tide each night, bright lights predict the underwater future.

Why are soda and ice cream each linked to violence? This article delivers the final word on what people mean by “correlation does not imply causation.”

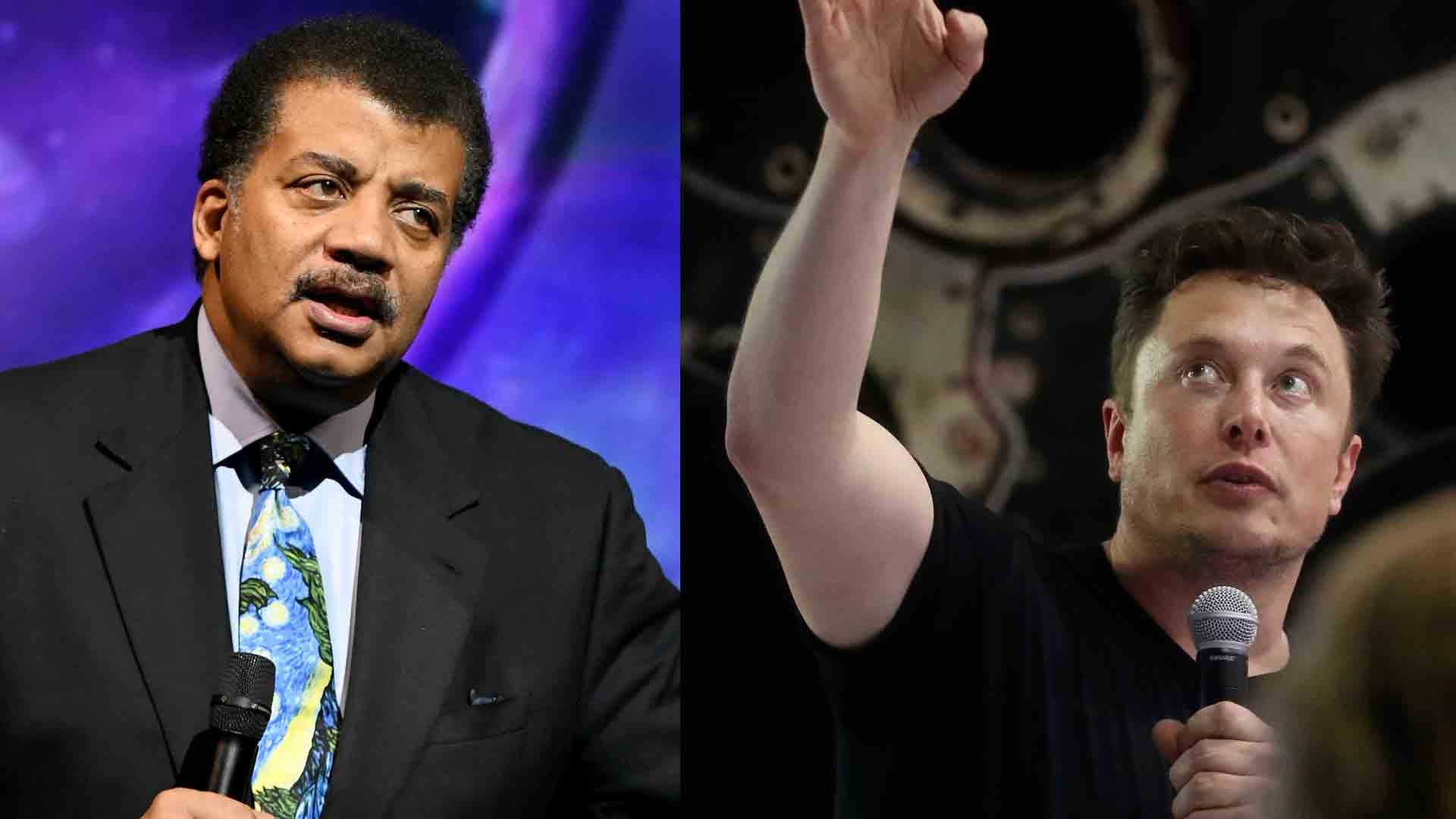

When he first became a multi-millionaire, Elon Musk shared how his vision led to success.

The sharp drop-off in world trade growth is a major risk in the coming year.

The famous astrophysicist explains why Elon Musk is more important than Jeff Bezos, Steve Jobs and Mark Zuckerberg.

These seven presidents had a window into the future—or were really good guessers.

Future vacationing could be pretty different.

Are we sure this isn’t alien technology?