sound

The way we imagine and listen to melodies sheds light on imagination.

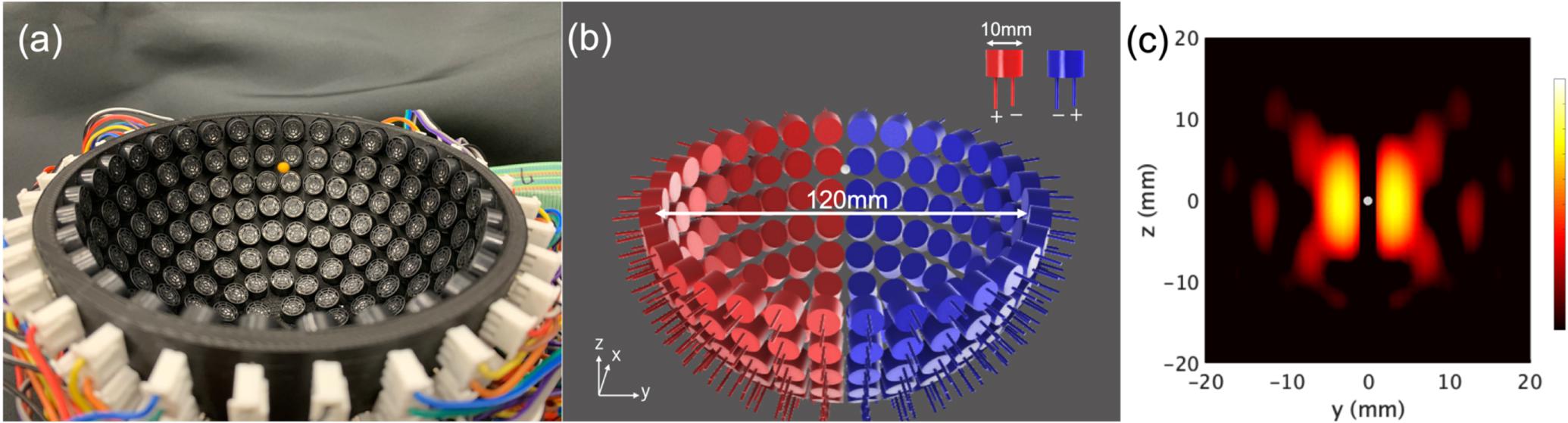

The non-contact technique could someday be used to lift much heavier objects — maybe even humans.

Ernst Chladni proved that sound can be seen, and developed a technique of visualizing vibrations on a metal plate.

People who go ballistic over other people’s eating sounds aren’t just cranky — they have misophonia.

The way you speak might reveal a lot about you, such as your willingness to engage in casual sex.

Noise causes stress. For our ancestors, it meant danger: thunder, animal roars, and war cries all triggered a ‘fight or flight’ reaction.

Their ear structures were not that different from ours.

A new study looks at why mysterious voices are sometimes taken as spirits and other times as symptoms of mental health issues.

Research finds that our sense of self can be manipulated by certain smells and sounds.

These tiny fish are helping scientists understand how the human brain processes sound.

A study from McGill University reveals the secret of musicians who have excellent time.

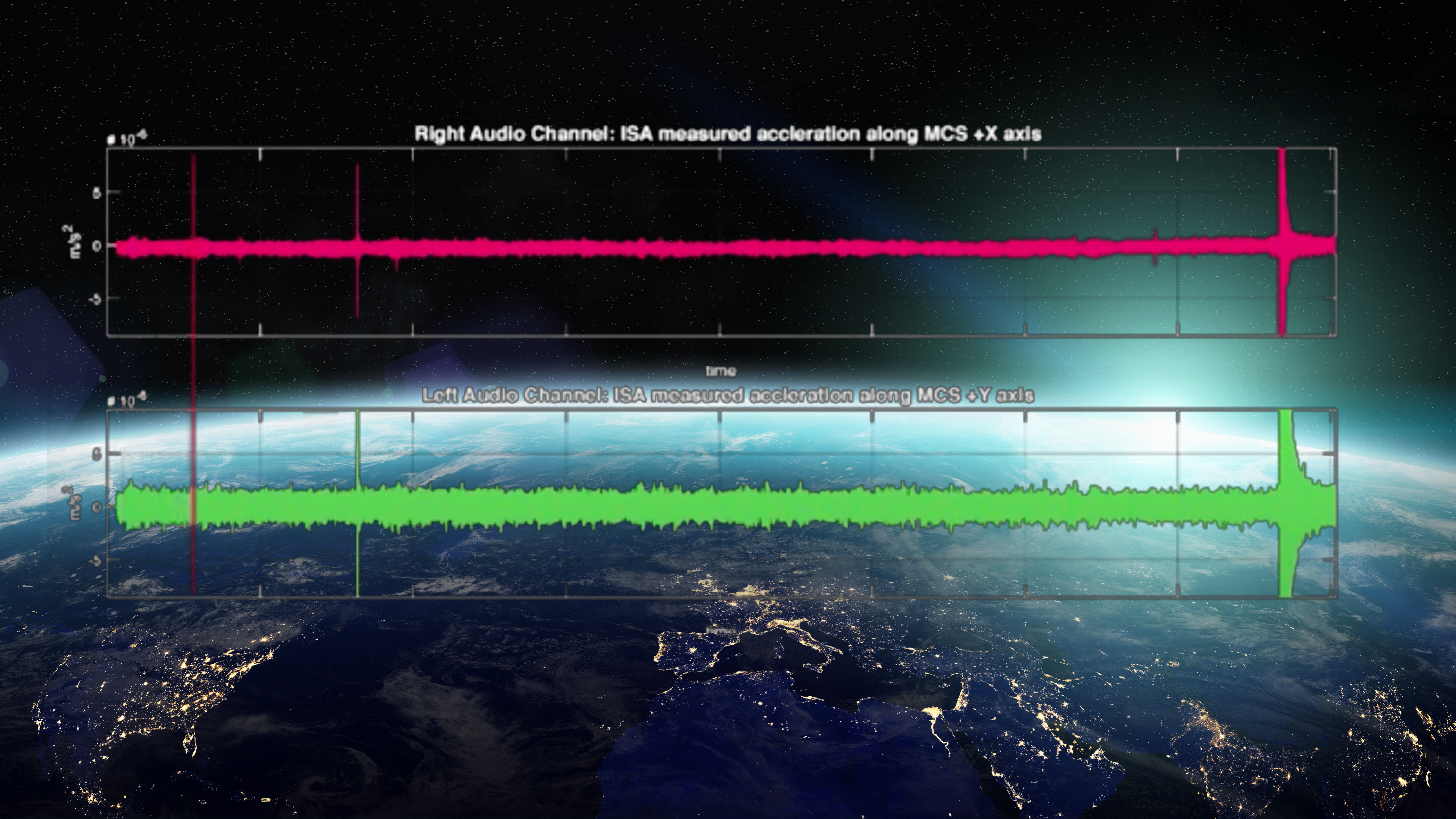

A Mercury-bound spacecraft’s noisy flyby of our home planet.

Why finding joy is more attainable than pursuing happiness.

Audio recordings reveal cows have unique voices and share emotions with each other.

Your cat thinks your taste stinks. Also that you’re mingy with the laser pointer.

Among the variety of human screams, it is screams of terror that stand out most vividly.

Scientists may have seen a way to cure a maddening symptom of hearing loss.

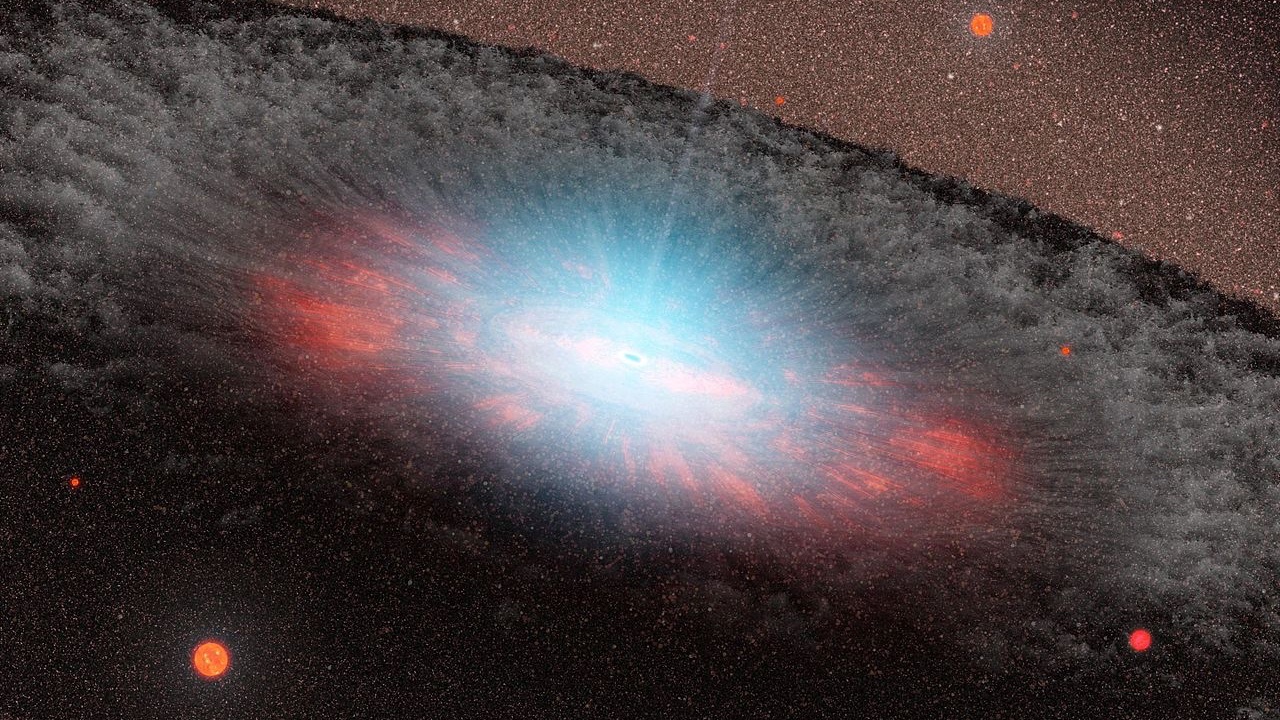

One of Stephen Hawking’s predictions seems to have been borne out in a man-made “black hole.”

Between the noise and frustration, we’re suffering more than ever.

A breakthrough app for ultrasonic squeak analysis.

Moving from HOT to HAT, a dazzling new acoustic technology.

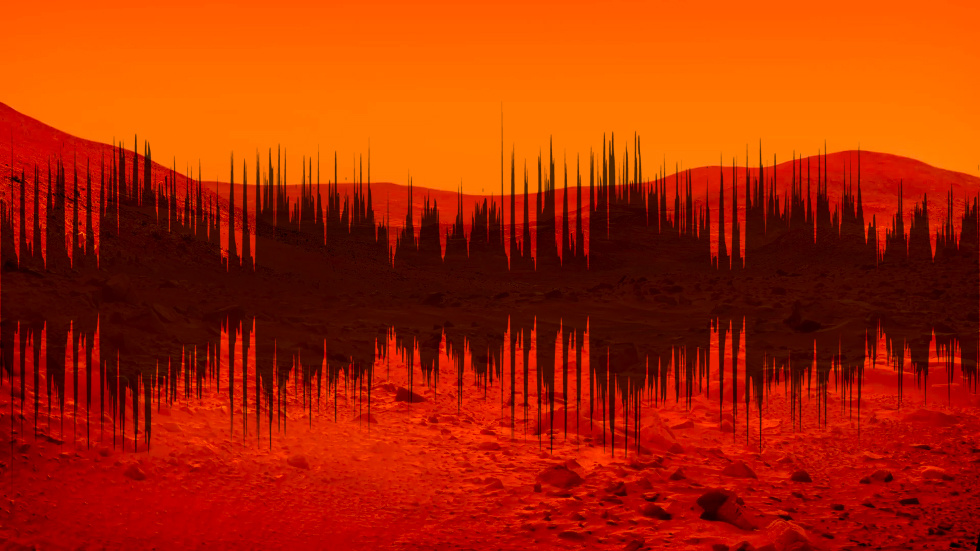

On Friday, NASA’s InSight Mars lander captured and transmitted historic audio from the red planet.

Tiny bubbles talk photosynthesis.

Over 67,000 trials by the Color Guard can’t be wrong.

It has experts baffled.

Neuroscientists are now starting to put TMR to work.