Engineering

The design could help restore motor function after stroke and enhance virtual gaming experiences.

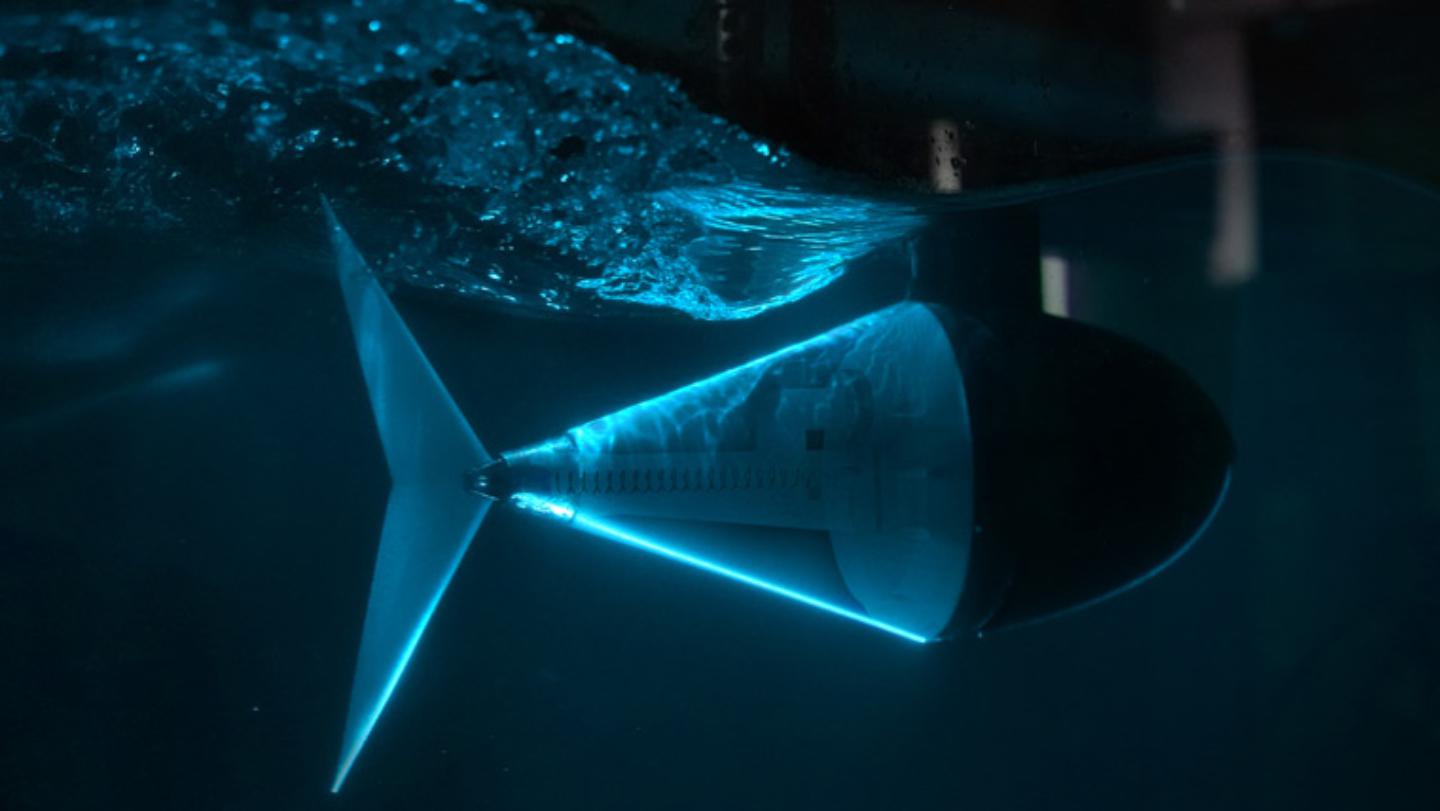

A new tuna robot leads the way to more agile underwater robots and drones.

Israeli food-tech company DouxMatok (Hebrew for “double sweet”) has created a sugary product that uses 40 percent less actual sugar yet still tastes sweet.

Researchers were even able to store and read a 767-kilobit full-color short movie file in the fabric.

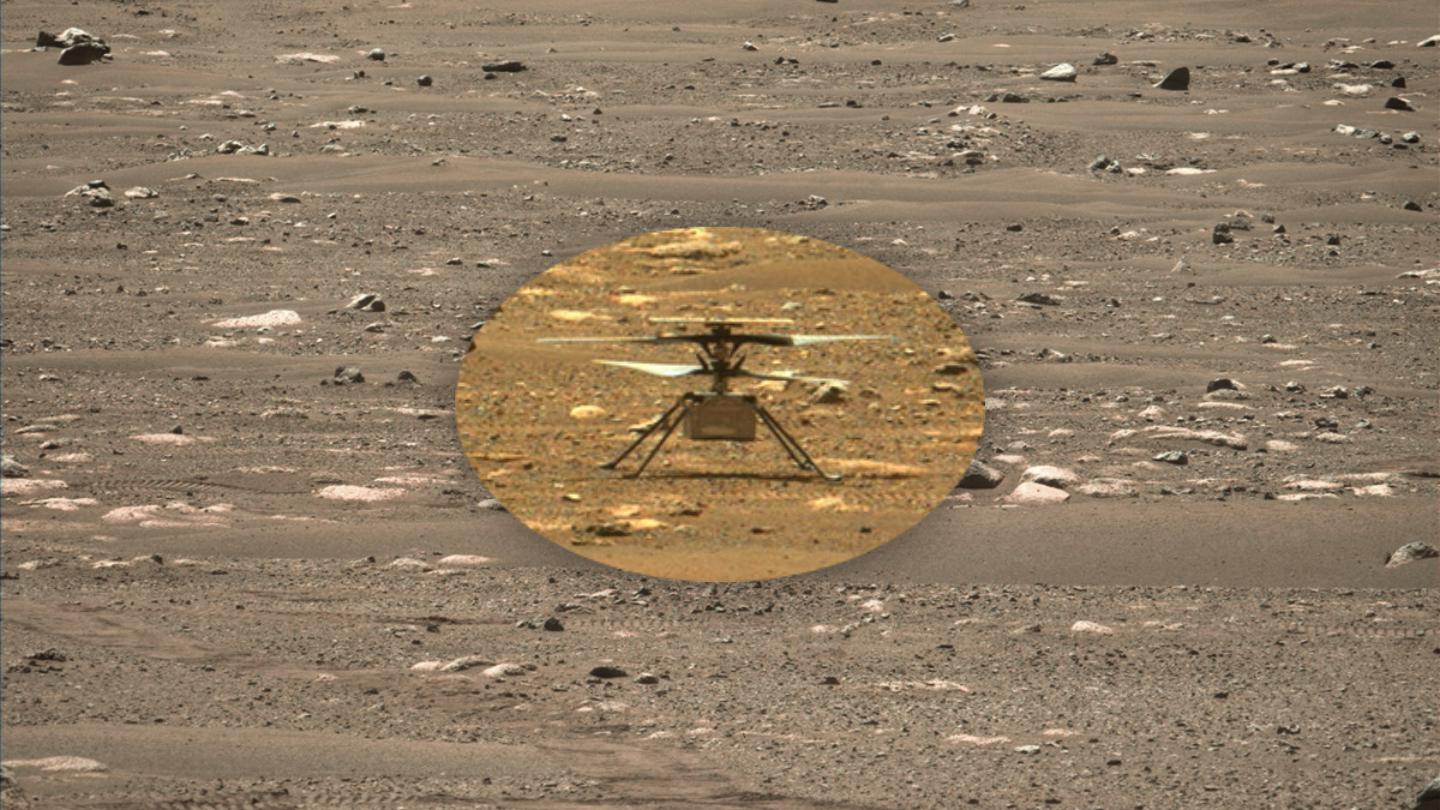

The helicopter’s sixth mission almost went down in disaster.

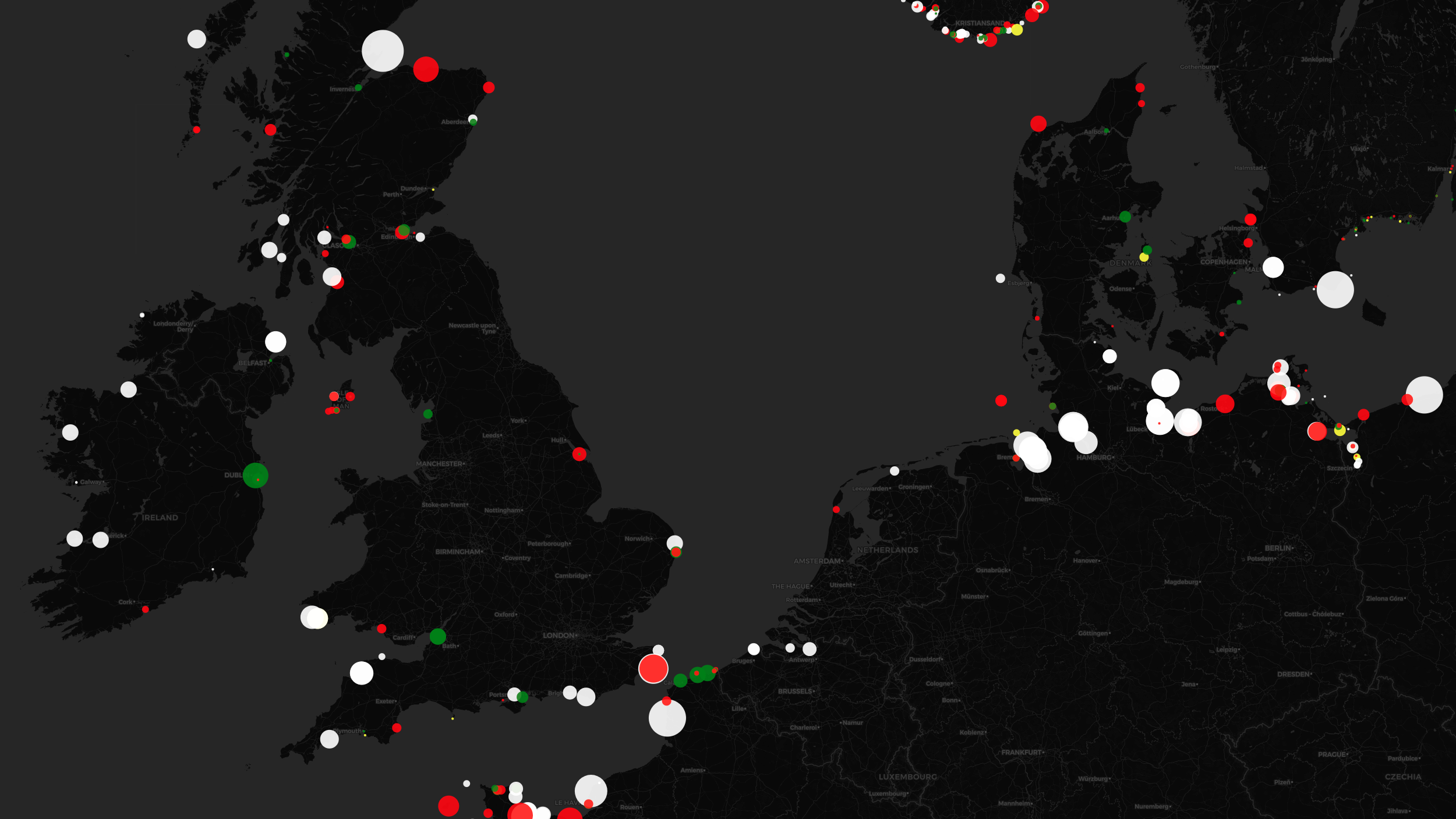

The unique light signatures of nautical beacons translate into hypnotic cartography.

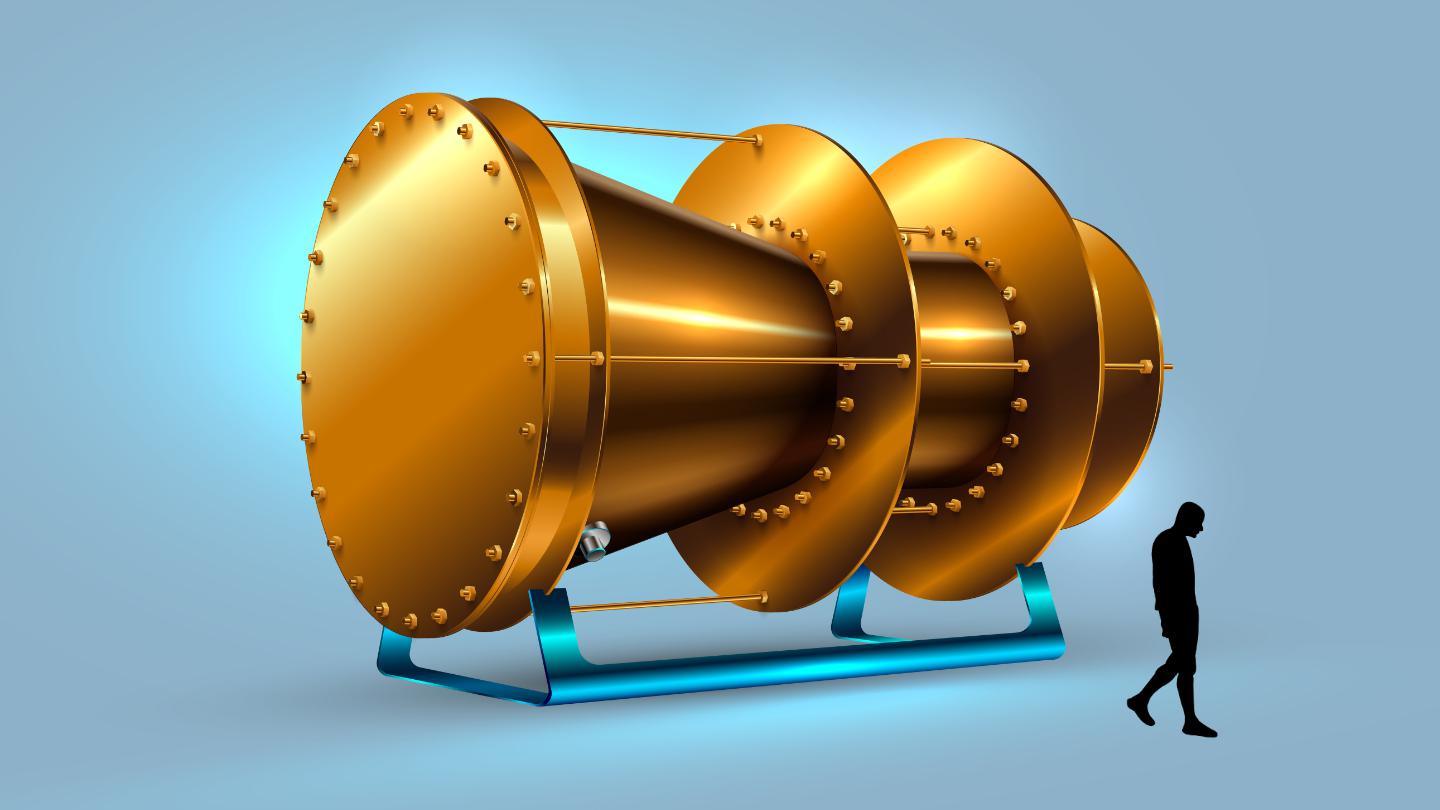

The EmDrive turns out to be the “um…” drive after all, as a new study dubs any previous encouraging EmDrive results “false positives.”

The bird demonstrates cutting-edge technology for devising self-folding nanoscale robots.

Learn all about mechanical design, product development, material selection, manufacturing, and so much more.

The satellite would burn instead of becoming more space debris.

Boston Dynamics’ notorious robot goes on an interplanetary mission.

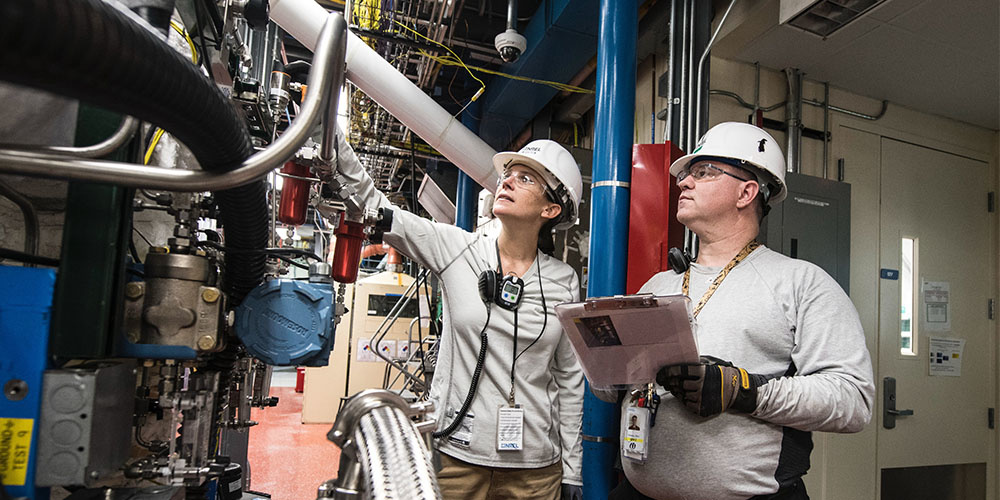

Scientists at Washington University are patenting a new electrolyzer designed for frigid Martian water.

Researchers make the case for “deep evidential regression.”

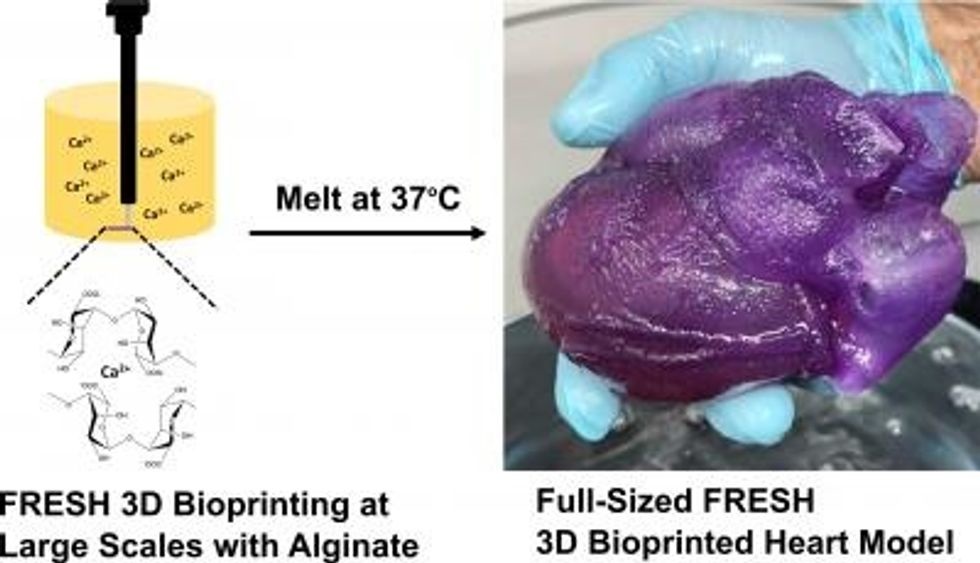

A new method can create realistic models of the human heart, which could vastly improve how surgeons train for complex procedures.

The new tool may someday be used in work that needs a light touch.

Miso Robotics has already served up over 12,000 hamburgers.

This wide-ranging, 13-course electrical engineering training is your next power move.

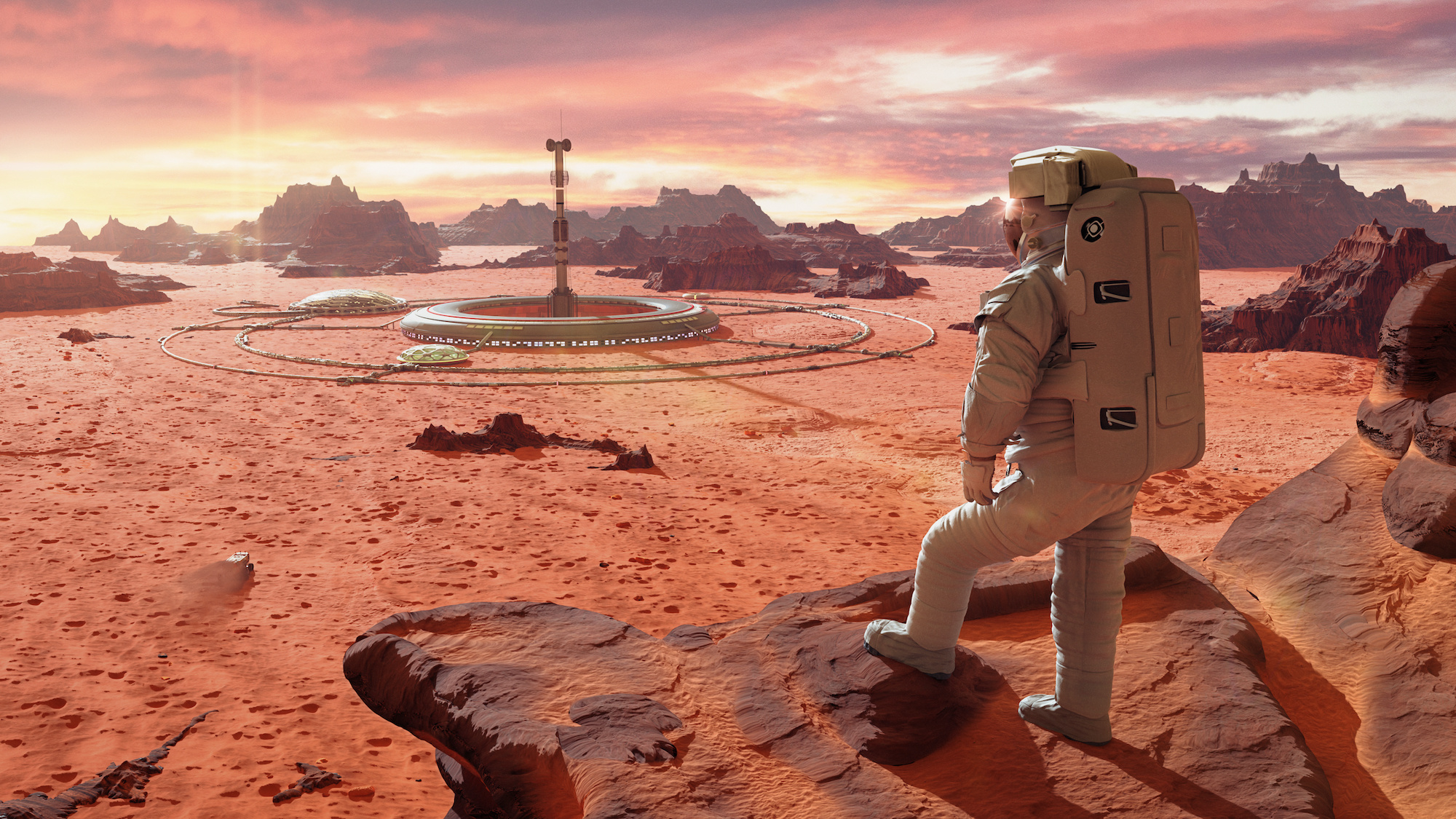

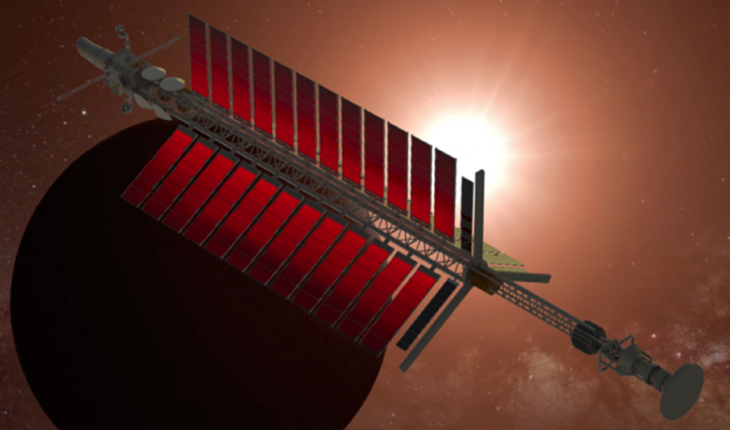

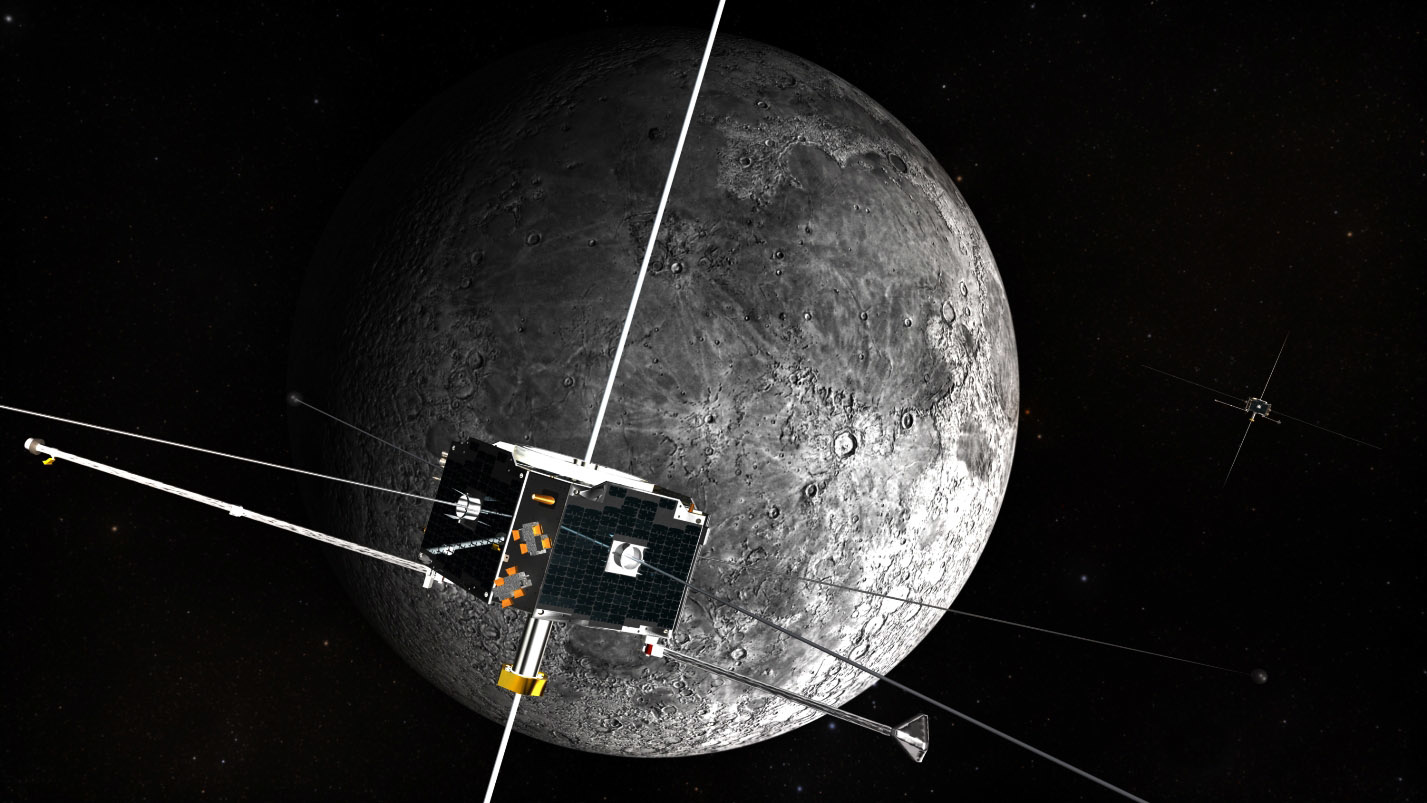

The future of cities on the Moon, Mars and orbital habitats.

Turns out chitin is quite useful when you need a wrench.

A mile-high tower would not just be a new structure, but a new technology.

The drive would provide enough thrust for a spacecraft to travel near the speed of light using only electricity, says physicist Jim Woodward.

DNA molecules are highly programmable.

It doesn’t help that Hollywood has cast the ‘coder’ as a socially challenged, type-first-think-later hacker, inevitably white and male.

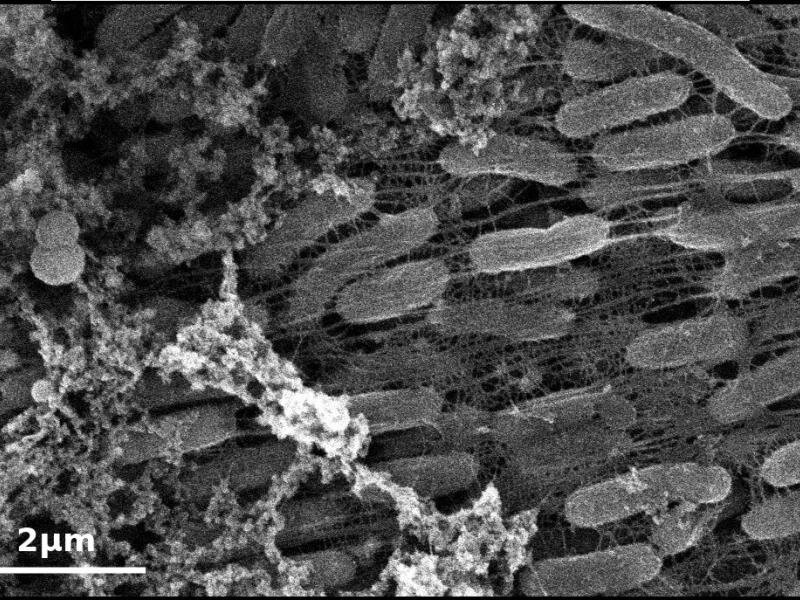

Researchers find an unusual property of a bacterium that can breathe in metal.

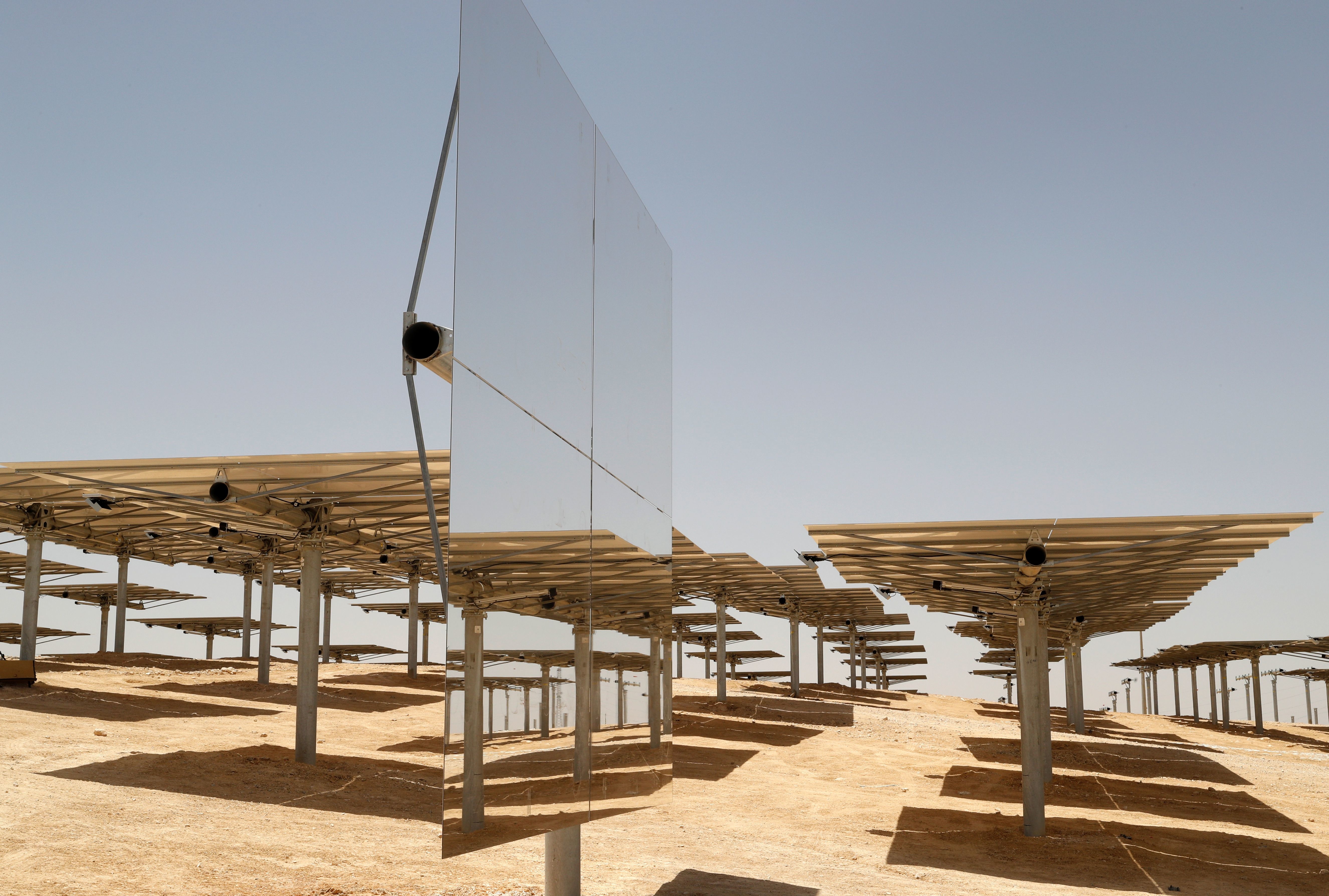

Solar geoengineering ideas could weaken storms in both hemispheres, scientists find.

SpaceX’s momentous Crew Dragon launch is a sign of things to come for the space industry, and humanity’s future.

An MIT system uses wireless signals to measure in-home appliance usage to better understand health tendencies.

A NASA-sponsored competition asks participants to improve the design of a bucket drum for moon excavation.

Despite the hype, these technologies aren’t relevant right now. But they could be in the future.

The BYP Network is shining light on overlooked talent in certain industries.