Both social media companies plan to implement special protocols on Tuesday as election results begin rolling in.

Why virtue signaling does nothing.

We’ve got the biggest comments. The best comments. You’re going to have such good comments that you’re going to be sick of how good these comments are. Believe me.

The internet and social media have made persuasive appeals more powerful than ever before.

The social media behemoth wants you to use its platform less than before, not more.

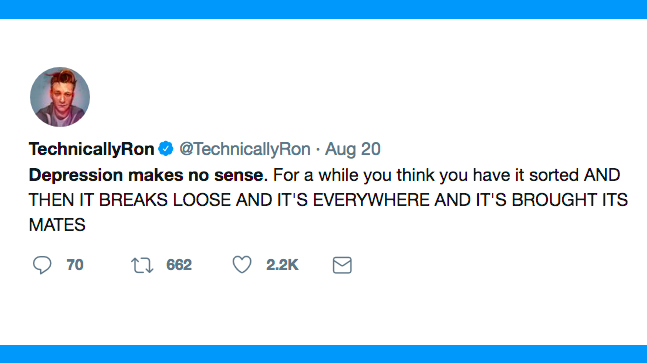

When a ‘Rick and Morty’ fan recently tweeted at Dan Harmon asking how to deal with depression, it didn’t take him long to reply.

A supervised learning algorithm can predict clinical depression much earlier and more accurately than trained health professionals.

You mad, bro? The way that Facebook (and Twitter) manipulates your brain should be the very thing that outrages you the most.

Neil deGrasse Tyson, famous in part for using his scientific literacy to point out flaws in TV and movies, recently critiqued the good and bad science behind HBO’s Game of Thrones.

In the US Army, taking a knee has a special connotation.

The Washington Post created a Twitter account that automatically retweets all the tweets from the people whom President Donald Trump follows.

Many people were upset when NPR tweeted out the Declaration of Independence.

A recent study shows that NBA players performed worse in games played the day after they had stayed up late tweeting.

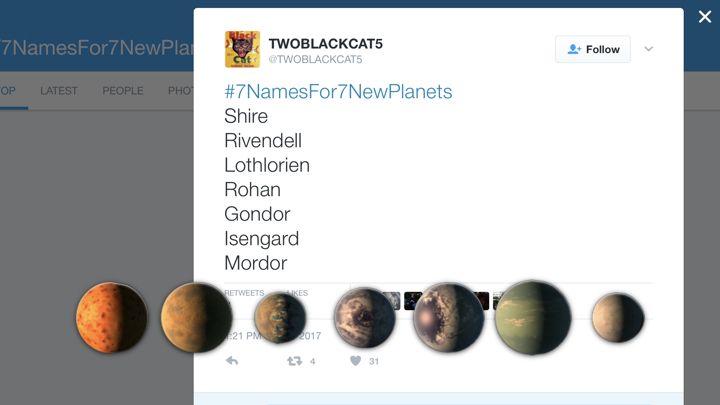

NASA has turned to the internet for help in naming the newly discovered Trappist-1 exoplanets.

Elon Musk’s cryptic messages about a mysterious tunneling project in California are getting more substantive.

Go fearlessly into the Internet, but not blindly, says Virginia Heffernan – each corner of digital culture has its best practices. Not learning them is a disrespect.

Researchers find more evidence of the link between social media use by young adults and depression.

A recent tweet from Donald Trump sent the value of Lockheed Martin’s stock plummeting. What does this imply about the economic influence of social media?

Despite our romanticized vision of social media as a global town square overflowing with diversity, the reality is that each user’s experience is hyper-filtered.

If hate is a virus, the U.S. has got it bad. Oliver Luckett presents a fascinating perspective on how the 2016 election divided America, how social media mimics biology, and how the U.S. can start to rebuild.
