bioethics

Medical science can save lives, but should it do so at the cost of quality of life?

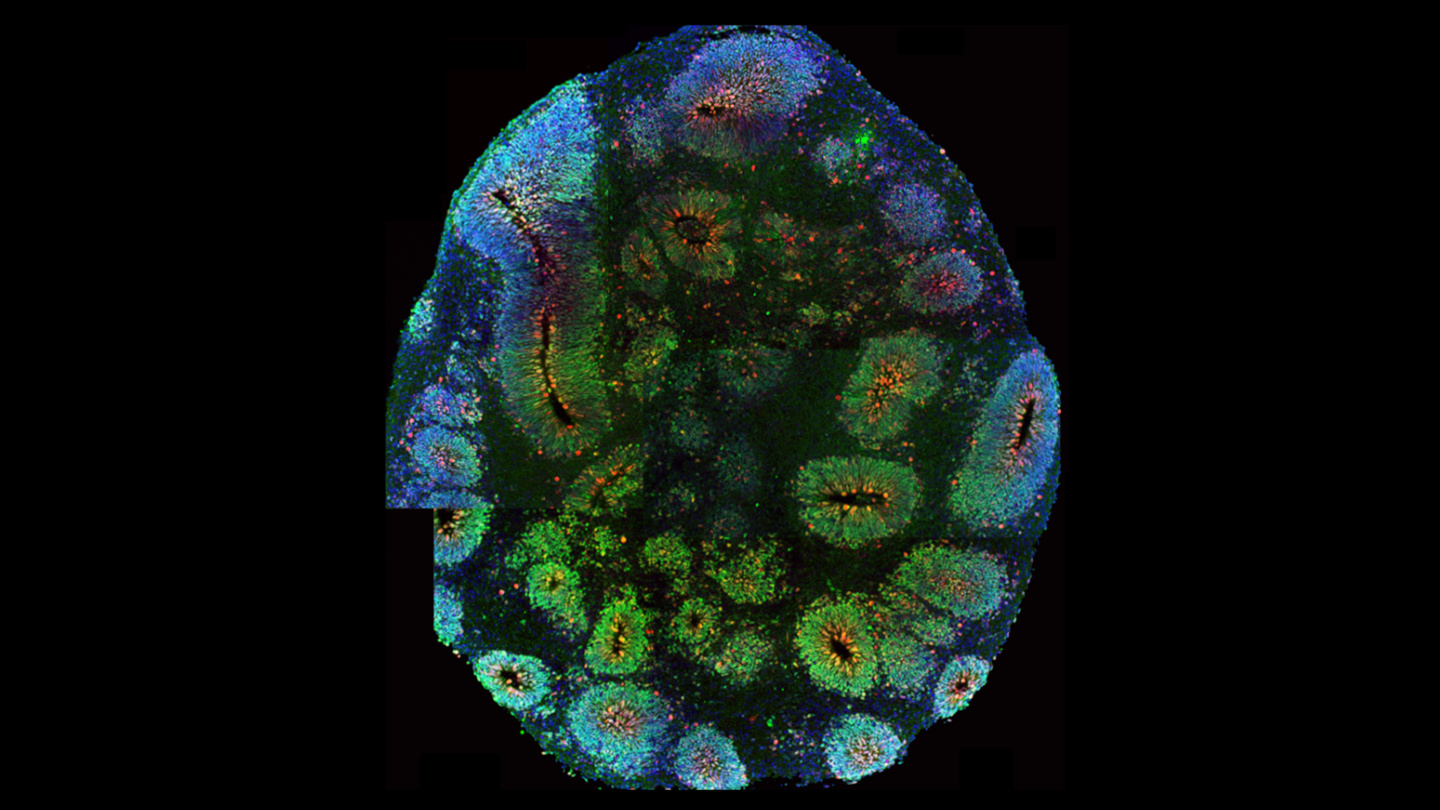

Some neurology experiments — such as growing miniature human brains and reanimating the brains of dead pigs — are getting weird. It’s time to discuss ethics.

“The question is which are okay, which are not okay.”

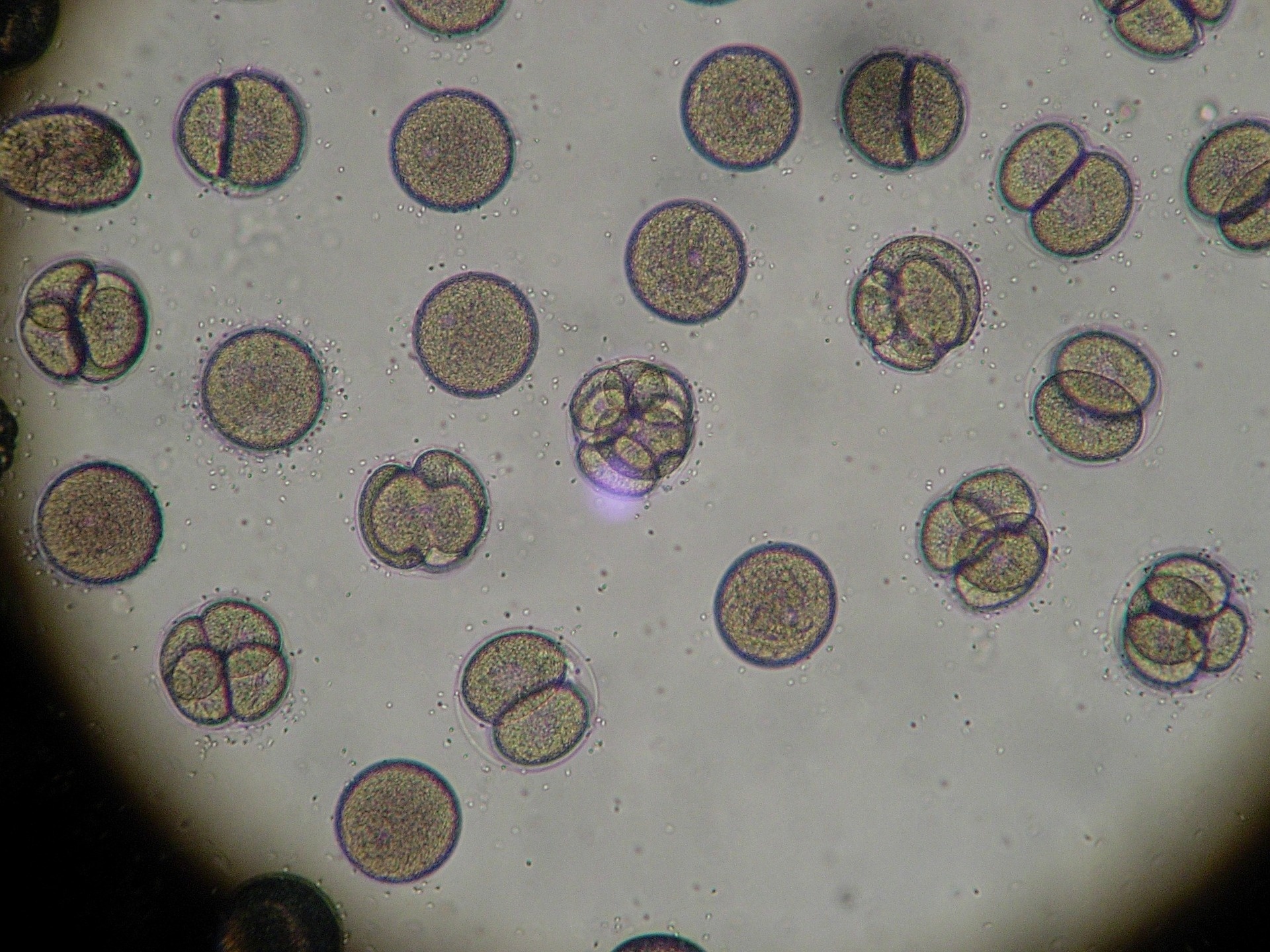

This spring, a U.S. and Chinese team announced that it had successfully grown, for the first time, embryos that included both human and monkey cells.

Could a pill make you more moral? Should you take it if it could?

How would the ability to genetically customize children change society? Sci-fi author Eugene Clark explores the future on our horizon in Volume I of the “Genetic Pressure” series.

Thought experiments are great tools, but do they always do what we want them to?

If machines develop consciousness, or if we manage to give it to them, the human-robot dynamic will forever be different.

Exploring how a small change in your DNA sequence can make you a natural blonde.

Should pharmaceutical companies pay people for their plasma? Here’s why paid plasma is a hot ethical issue.

Those who have experienced amputations often wonder what happened to their limb after surgery.

New research shows how Americans feel about genetic engineering, human enhancement and automation.

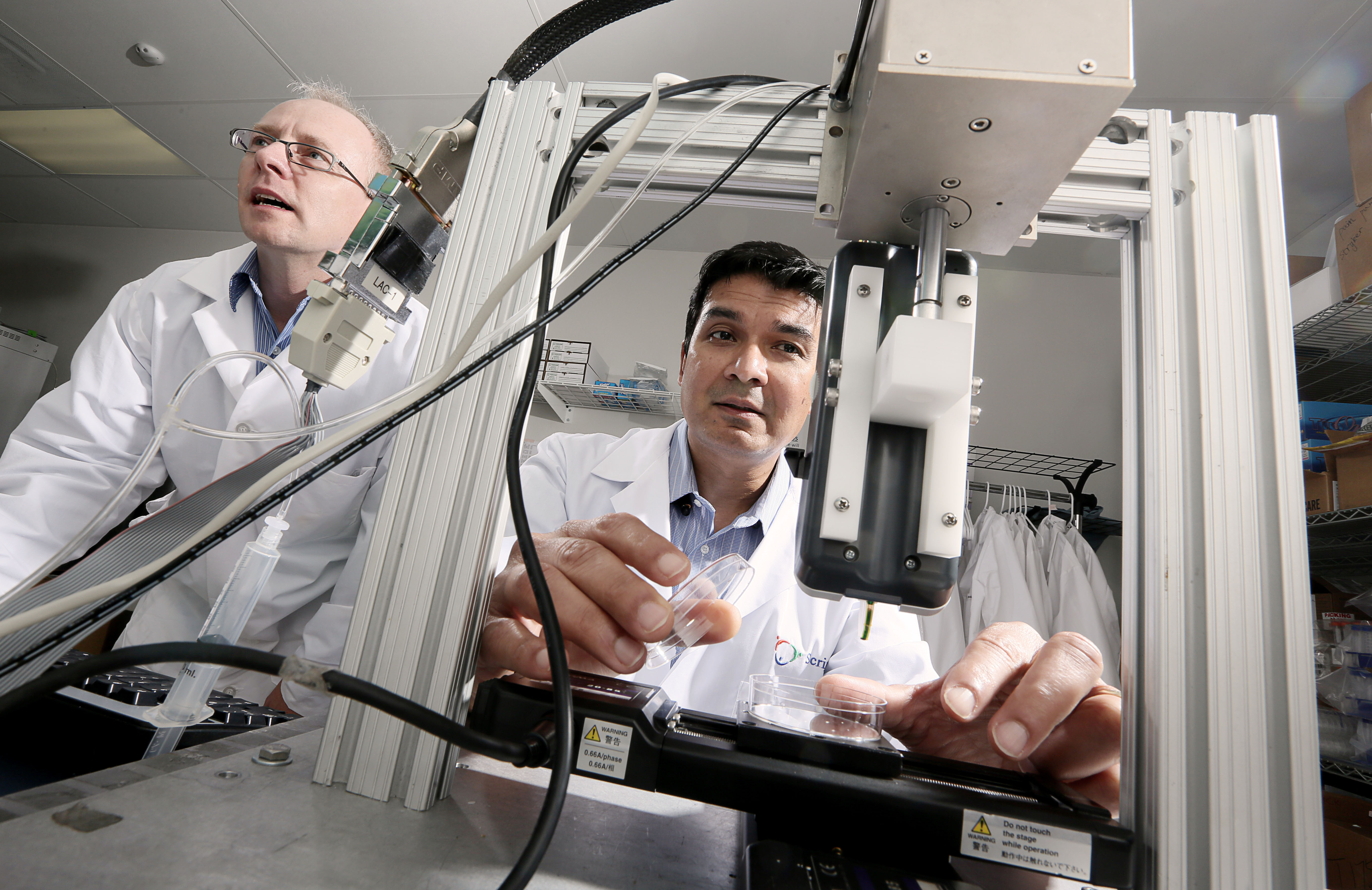

Today, a quickly emerging set of technologies known as bioprinting is poised to push the boundaries further.

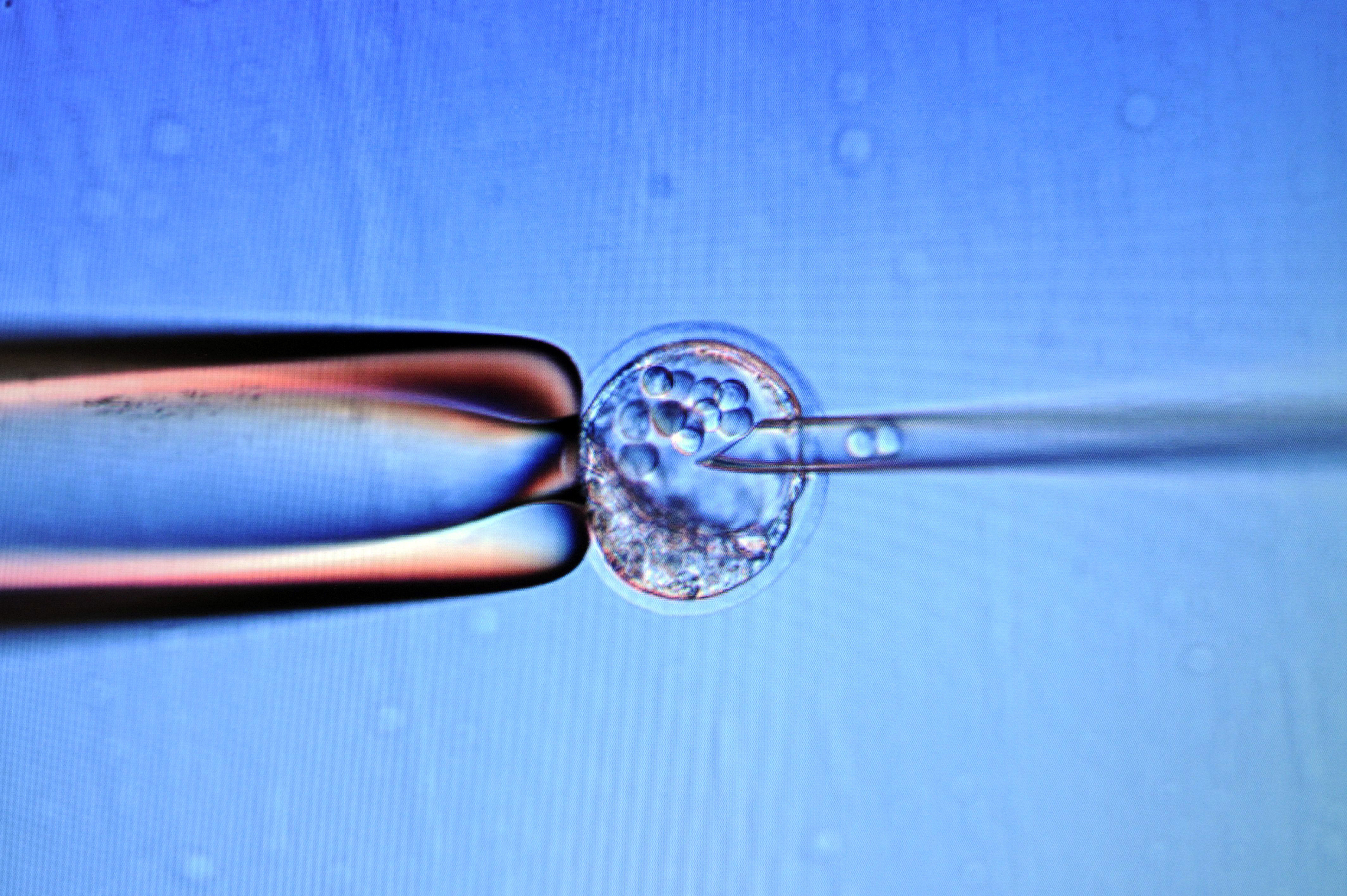

A punishment is handed down for performing shocking research on human embryos.

Thinning forests in the Western United States can save billions of gallons of water per year and improve conservation efforts.

While the blockbuster franchise might have given us a distorted view of science’s capabilities to address species extinction, new research might come close to “resurrecting” lost species’ DNA.

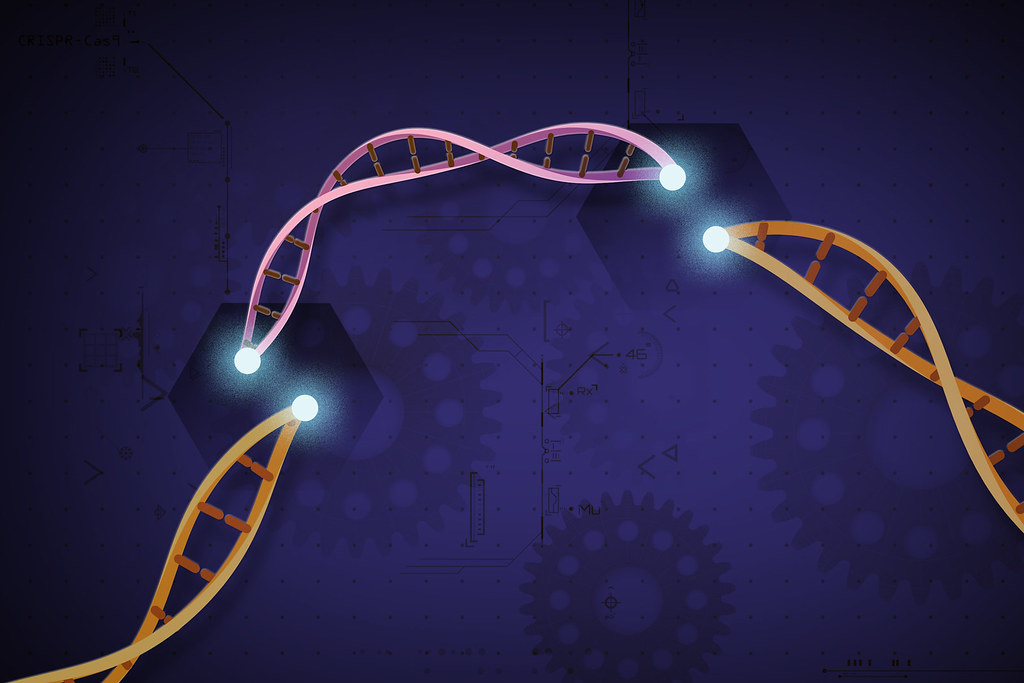

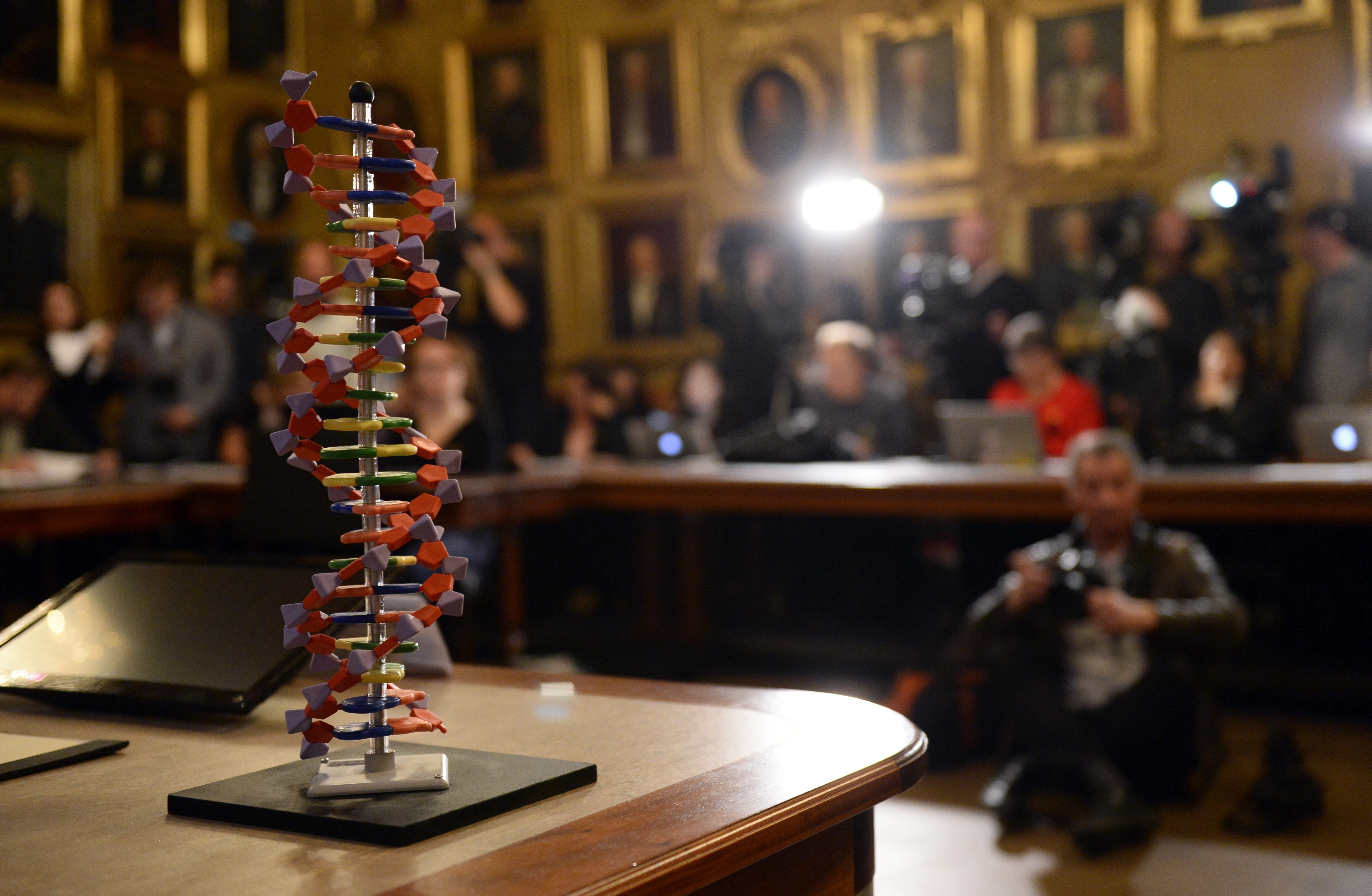

A transformational tool for the future of the world.

The question is no longer “can we” but “should we” edit human embryos.

These tests report on more than just your risk for cilantro aversion and your ice-cream flavor preference.

The goal is to eventually grow a human pancreas in a larger animal – such as a pig – which can be transplanted.

The ultimate goal is to grow organs inside animals that could be transplanted into humans.

Should doctors allow their expertise to trump a patient’s personal goals, or should they yield to them?

A comprehensive interdisciplinary paper removes any doubt that orcas don’t belong in marine parks and zoos.

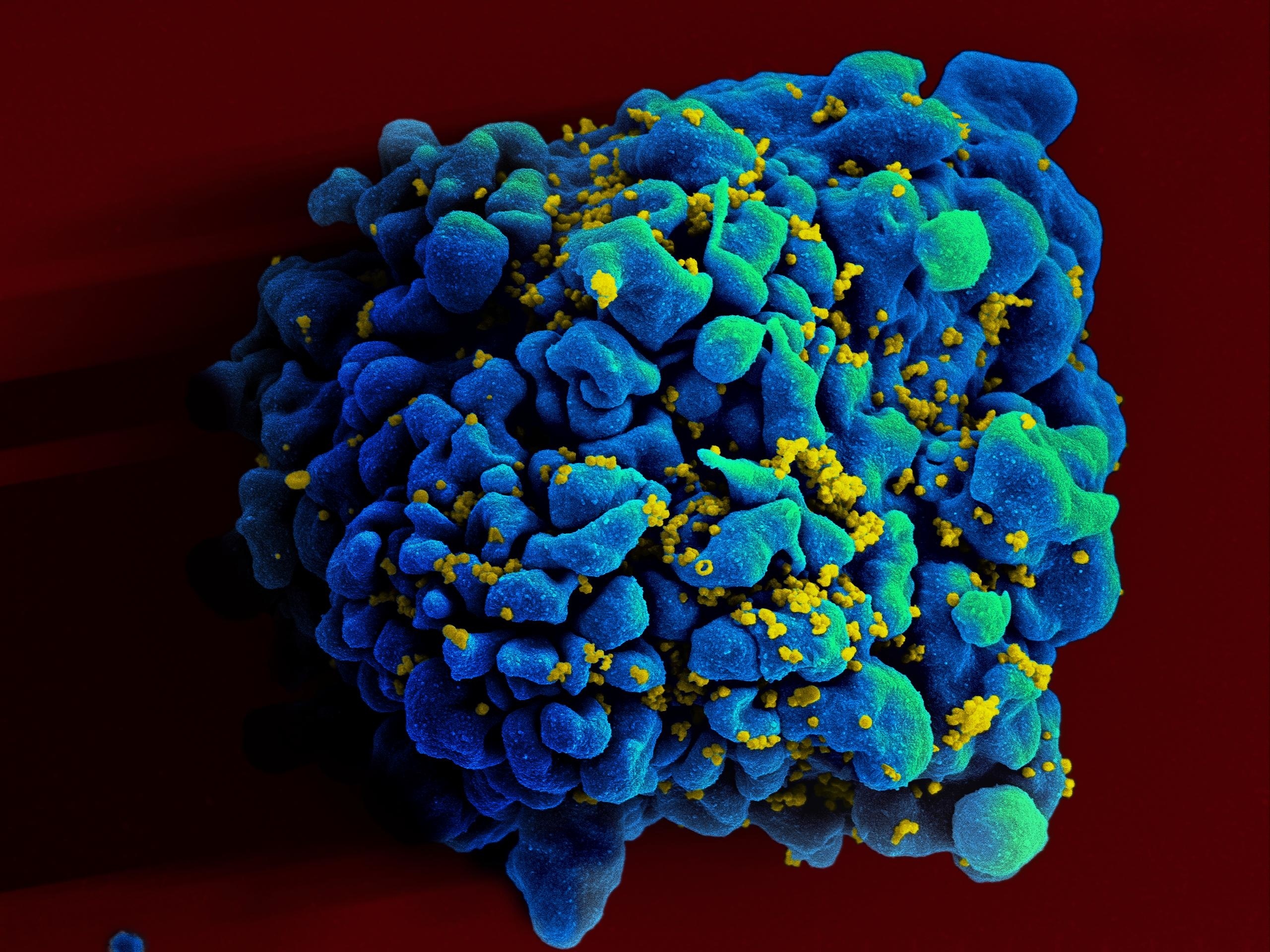

Chinese scientist He Jiankui edited the genes of two babies to be resistant to HIV, provoking outrage. Now, a new genetic analysis shows why this was reckless.

A DNA test promises to reveal your hidden history — but is it all smoke and mirrors?

Hearing-related problems are on the rise.

If A.I.s are as smart as mice or dogs, do they deserve the same rights?

Using a new process, a mini-brain develops retinal cells.

A Chinese researcher has sparked controversy after claiming to have used gene-editing technology known as CRISPR to help make the world’s first genetically modified babies.