software

Understanding “why” may be the key to unlocking an AI’s imagination.

A clever new design introduces a way to image the vast ocean floor.

A small proof-of-concept study shows smartphones could help detect drunkenness based on the way you walk.

The Silicon Valley titan has promised scholarships for its tech-focused certificate courses alongside $10 million in job training grants.

The programming giant exits the space due to ethical concerns.

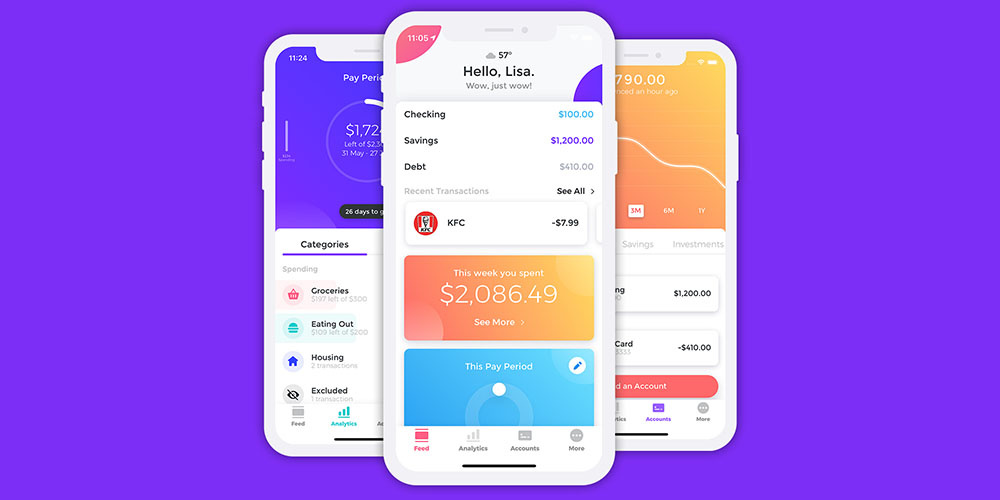

Get your finances in shape with this powerful money manager.

Interpreter, Google’s language-translation tool, is coming to mobile, and it’s poised to change our everyday conversations.

No harm done this time, but it’s an ominous occurrence.

In the next two to three years we’ll see passwords go away in a way that’s long overdue.

Recent years have seen countries across the African continent investing heavily in the tech industry. Rwanda is angling to get ahead of the pack.

Hackers look for open doors. If your personal data isn’t protected, it’s that much easier to compromise your identity.

The recent discovery highlights an alarming cybersecurity vulnerability in the health care industry.

The history of Silicon Valley: The rise of a technological unicorn.

A new study highlights a fascinating application of AI, though other uses are more troubling.

Half of Americans do not trust the federal government or social media sites to protect their data.

What does your phone know about you?

When he first became a multi-millionaire, Elon Musk shared how his vision led to success.

Back with another one of those block(chain)-rockin’ reads.

Inconceivable wealth. And a few lessons in how not to get rich, too.

The Seattle tech magnate died from complications of non-Hodgkin’s lymphoma.