robots

The AI remembers that you are 32 years old and like to eat sushi, except on Thursdays.

Is there actually anything deserving of the term AI?

You don’t need to completely automate a job to fundamentally change it.

While a squirrel’s life may look simple to human observers – climb, eat, sleep, repeat – it involves finely tuned cognitive skills.

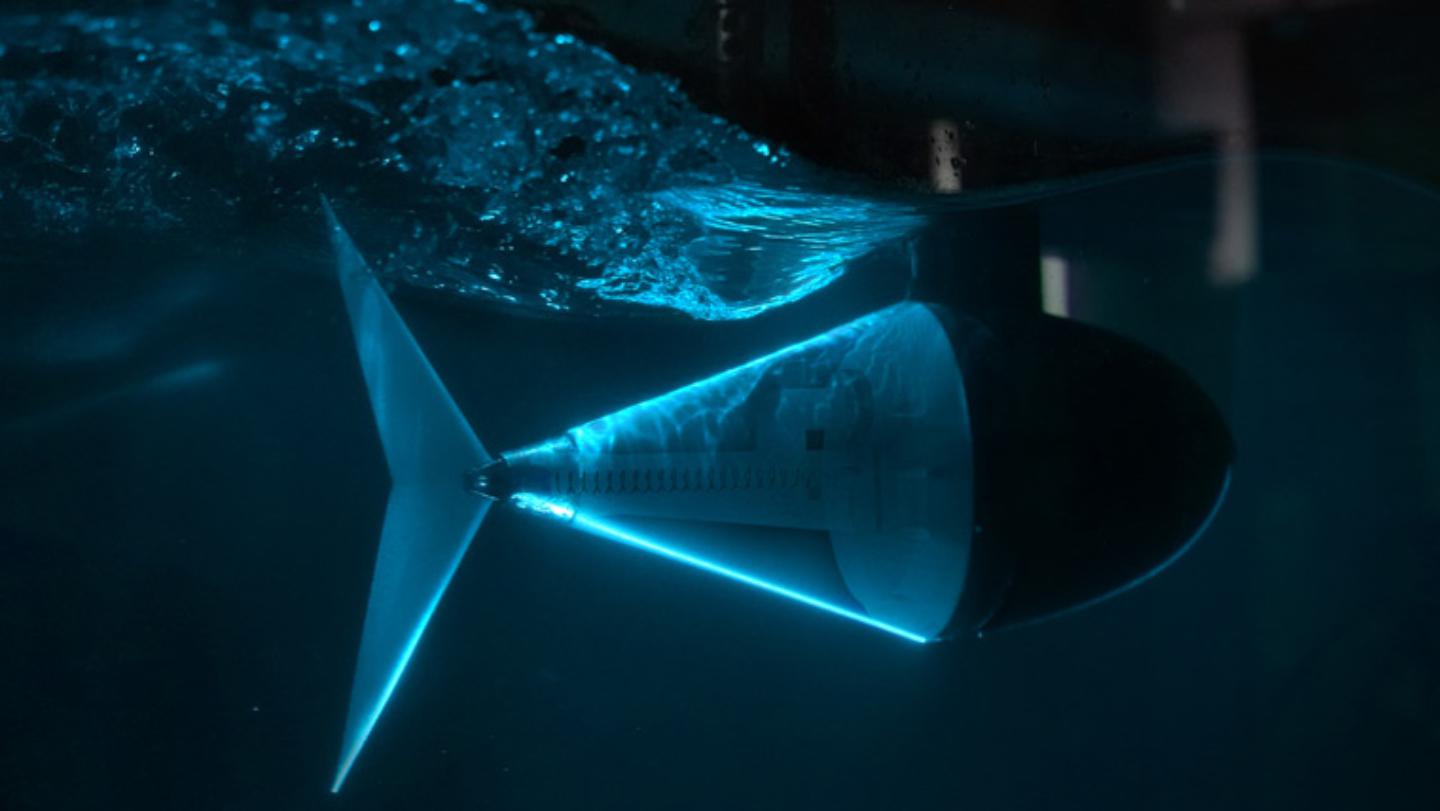

A new tuna robot leads the way to more agile underwater robots and drones.

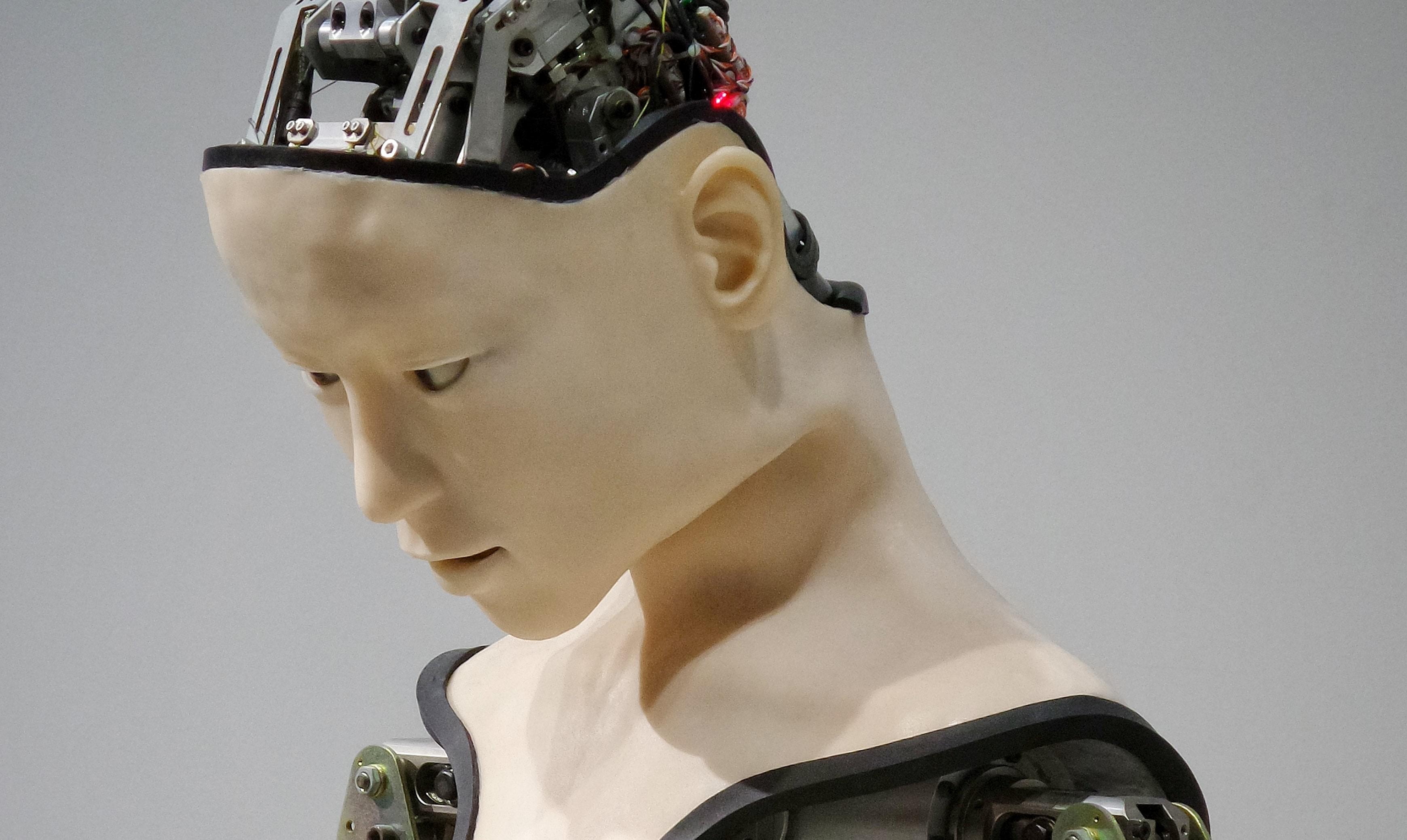

Meet MIT’s Kate Darling, a robot ethicist who says that we should rethink our relationship with robots.

That’s as fast as a bullet train in Japan.

It uses radio waves to pinpoint items, even when they’re hidden from view.

The bird demonstrates cutting-edge technology for devising self-folding nanoscale robots.

Creating an afterlife—or a simulation of one—would take vast amounts of energy. Some scientists think the best way to capture that energy is by building megastructures around stars.

These DIY learning kits focus on topics like coding, robotics, and AI, and are on sale for as low as $41.99.

Boston Dynamics’ notorious robot goes on an interplanetary mission.

Is the quest to upload human consciousness and ditch our meat puppets the future—or is it fool’s gold?

A new MIT report proposes how humans should prepare for the age of automation and artificial intelligence.

New book explores a future populated with robot helpers.

Miso Robotics has already served up over 12,000 hamburgers.

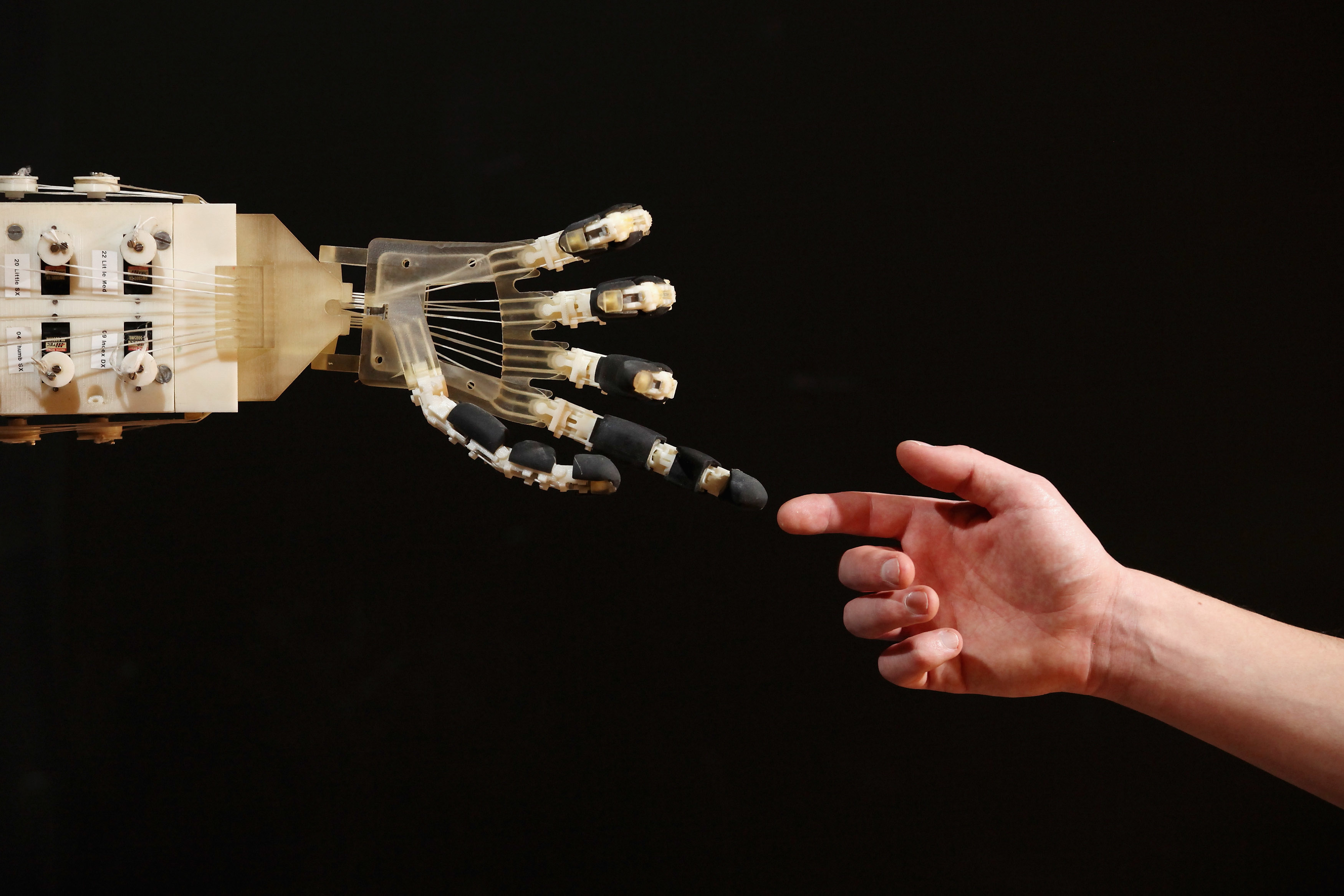

An accident left this musician with one arm. Now he is helping create future tech for others with disabilities.

It’s a very human behavior—arguably one of the fundamentals that makes us us.

COVID-19 may strengthen the case for universal basic income, or an idea like it.

Coronavirus layoffs are a glimpse into our automated future. We need to build better education opportunities now so Americans can find work in the economy of tomorrow.

If machines develop consciousness, or if we manage to give it to them, the human-robot dynamic will forever be different.

Some of the world’s top minds weigh in on one of the most divisive questions in tech.

The smart skin can “sweat” like human skin and also self-heal.

Researchers are making progress in the effort to develop safe and practical supernumerary robotic limbs.

Should humans fear artificial intelligence or welcome it into our lives?

A team of scientists created a new type of robot inspired by an octopus, and it could be a major breakthrough in the field.

A NASA-sponsored competition asks participants to improve the design of a bucket drum for moon excavation.

By experiencing time in a nonlinear way, can artificial intelligence give us more perspective?

Our social emotions are now being hijacked by robots.

Ezra Klein offers good reasons to take a skeptical look at automation apocalypse theories.
