astronomy

NASA’s Michelle Thaller explains what happens when the densest stars in the galaxy collide.

From time-traveling billiard balls to information-destroying black holes, the world’s got plenty of puzzles that are hard to wrap your head around.

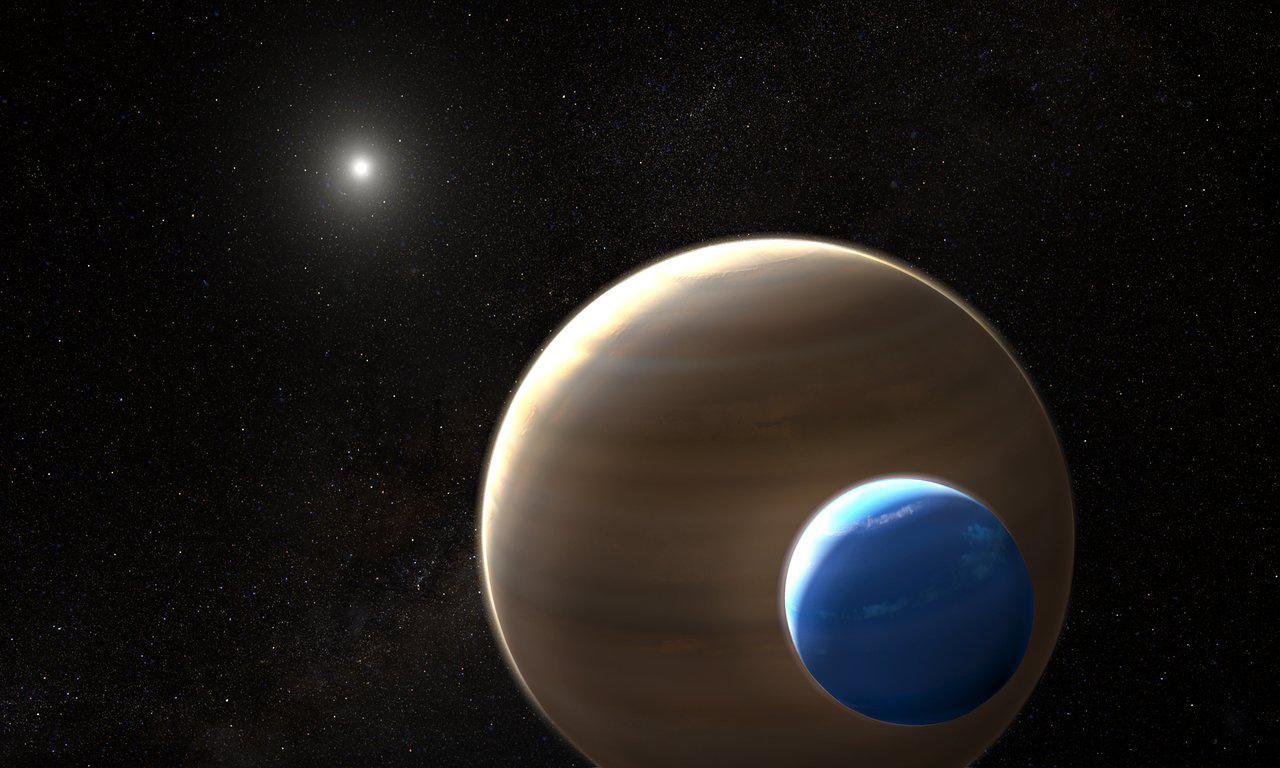

Life finds a way — particularly if it has a moon.

This short story is a fictional account of two very real people — Anaximander and Anaximenes, two ancient Greeks who tried to make sense of the universe.

Water is vital for life. Luckily for spacefaring humans, the solar system is full of it.

How did the troughs form?

A new model addresses a longstanding problem: where do quasars get the fuel they need to outshine entire galaxies?

Galaxies can die if their star-making stuff is lost. But now it can find its way back.

Surrounding Earth is a powerful magnetic field created by swirling liquid iron in the planet’s core. Earth’s magnetic field may be nearly as old as the Earth itself – and […]

Betelgeuse, the tenth brightest star in the night sky, mysteriously dimmed last year. Now researchers know why.

Jupiter’s atmosphere is hotter than it should be, and now we know why.

For the first time, light that comes from behind a black hole has been spotted.

When Olympic athletes perform dazzling feats of athletic prowess, they are using the same principles of physics that gave birth to stars and planets.

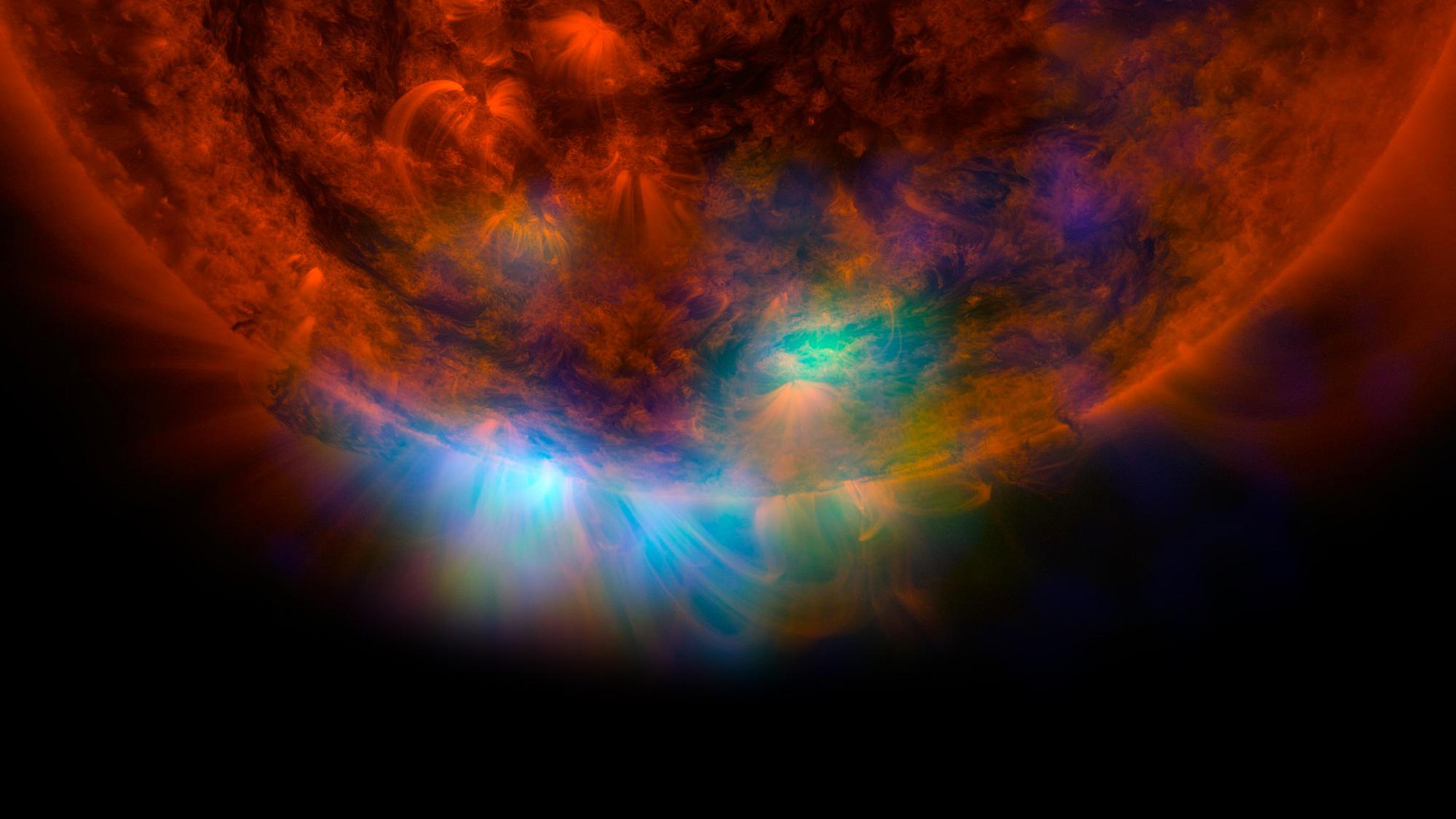

While we can see many solar storms coming, some are “stealthy.” A new study shows how to detect them.

A new government report describes 144 sightings of unidentified aerial phenomena.

Using image analysis tools developed for astronomy, researchers are predicting cancer therapy responses.

If you truly want to understand modern astrophysics, knowing how to read this graph is essential.

A new artificial intelligence method removes the effect of gravity on cosmic images, showing the real shapes of distant galaxies.

If the laws of physics are as symmetrical as we think they are, then the Big Bang should have created matter and antimatter in equal amounts.

Astronomers find a third type of supernova and explain a mystery from 1054 AD.

An analysis of gravitational wave data from black hole mergers shows that the event horizon area and entropy always increase.

Researchers discovered a galactic wind from a supermassive black hole that sheds light on the evolution of galaxies.

Astronomers may have solved the mystery of how the enormous Oort cloud, with over 100 billion comet-like objects, was formed.

Today, it’s common knowledge, but it took scientists centuries to figure out.

Determining if the universe is infinite pushes the limits of our knowledge.

A new AI-generated map of dark matter shows previously undiscovered filamentary structures connecting galaxies.

Science is an ongoing flirtation with the unknown.

The eclipse season is starting with a bang.

A new paper reveals that the Voyager 1 spacecraft detected a constant hum coming from outside our Solar System.