a.i.

Some say the proliferation of sex robots could reduce demand for prostitution, but not all agree.

The capabilities of this thing are both impressive and worrisome.

Poachers trade on a black market estimated to total $40 billion. It’s impossible to stop every poacher, but new technology could bolster the efforts of conservationists by putting a set of eyes in the sky.

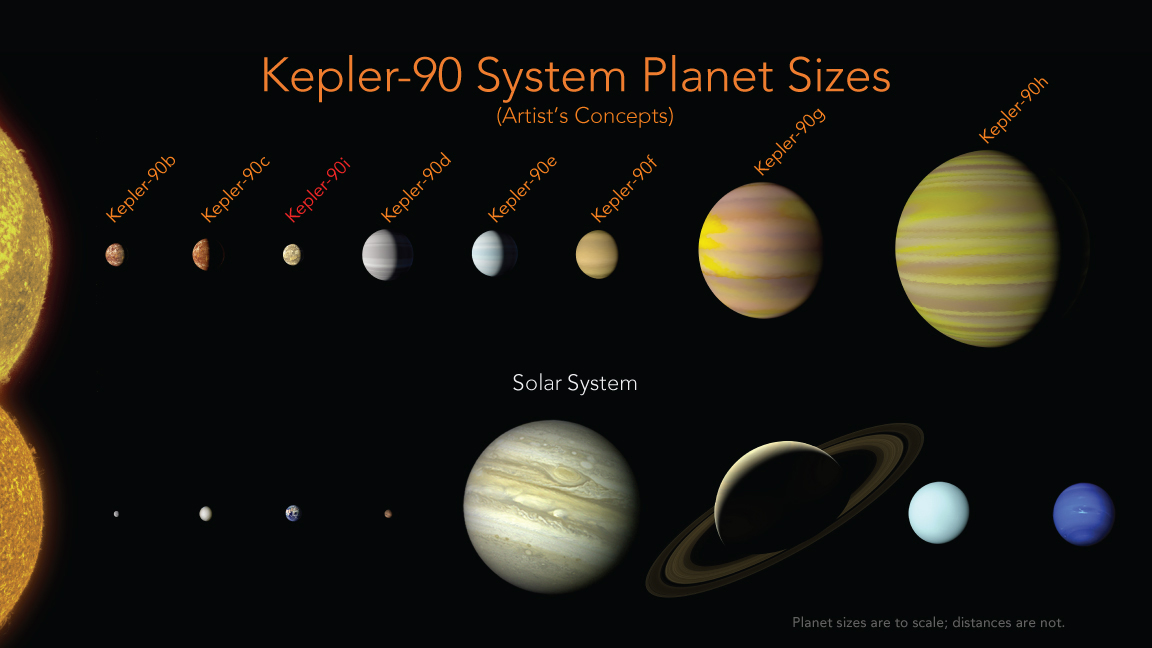

A machine learning algorithm has shown it can discover planets from weak signals overlooked in the Kepler spacecraft’s database.

Researchers at Human Longevity have developed technology that can generate images of individuals' faces using only their genetic information. But not all are convinced.

Scientists have developed an algorithm that reliably detects the signs of Alzheimer’s dementia before its onset.

What if your car were an extension of yourself? Neuroscience, art, and engineering combine to give us a glimpse of that future.

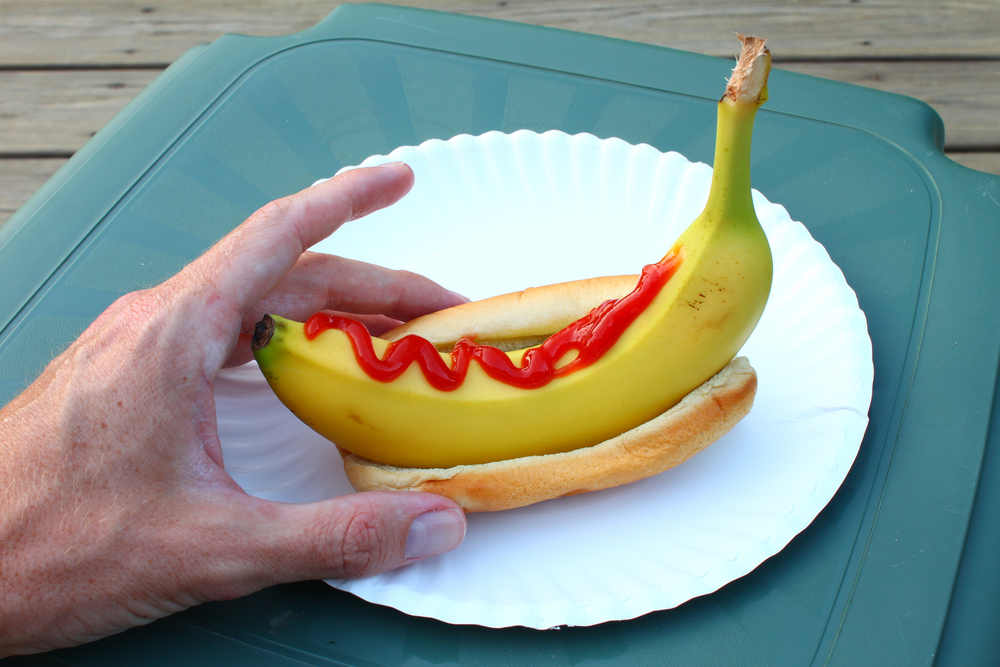

A neural network was trained to create its own cookbook recipes, resulting in some strange and unappetizing concoctions.