surveillance

Companies can identify you from your music preferences, as well as influence and profit from your behavior.

Do you sound friendly? Hostile? And which voice would be more likely to buy something?

And is anyone protecting children’s data?

The attack on the Capitol forces us to confront an existential question about privacy.

A heated debate is occurring at the University of Miami.

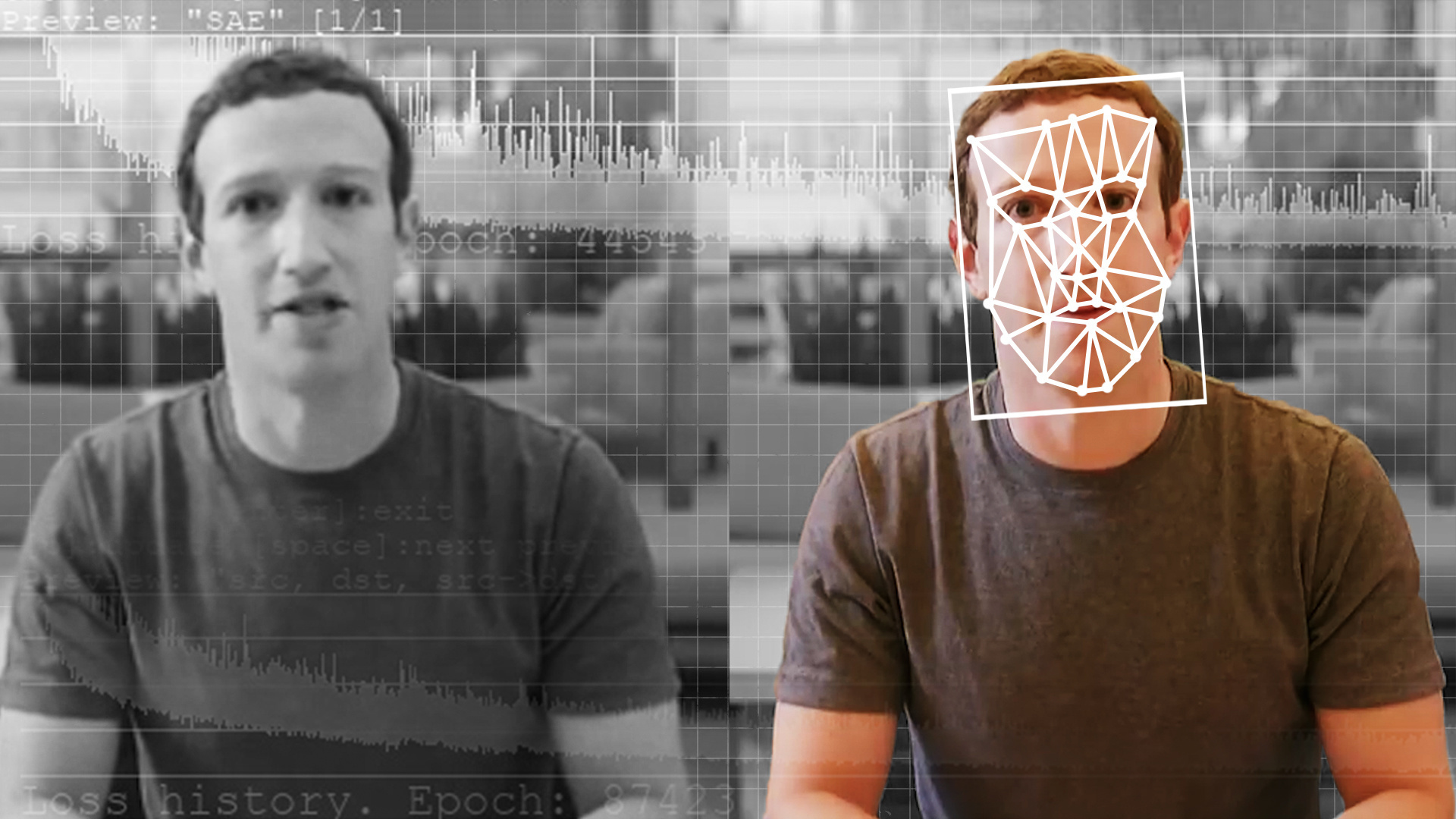

A new interactive documentary “How Normal Am I?” helps reveal the shortcomings of facial recognition technology.

The system is basically facial recognition technology, but for cars.

New documents confirm that the government agency—one of many—has been using a tracking company.

A new study explores how wearing a face mask affects the error rates of popular facial recognition algorithms.

Innovations abound, from blockchain tech and data trusts to algorithm assessments and cultural shifts.

The computing giant exits the space, citing ethical concerns.

The system can even be designed to send alerts to employees when they’ve come too close to a coworker.

If you surreptitiously pick your nose, chances are that everyone can see you doing it.

Amid the panic of the COVID-19 pandemic, are we building the surveillance states of tomorrow?

Despite potential good intentions, interventionist policies are often viewed by classical liberals as violations of individual freedoms.

Proponents of using drones in foreign conflicts argue that they reduce harm to civilians and U.S. military personnel alike. Here’s why that might be wrong.

When it comes to foreign intervention, we often overlook the practices that creep into life back home.

Scholars often weigh whether to risk their livelihoods and personal safety to conduct research in certain areas.

The semiautonomous could help to protect officers, but some are concerned about how exactly police plan to use it.

Deepfakes are “neither intrinsically good nor evil.”

The Response Act calls on schools to increase monitoring of students’ online activity.

From LED-equipped visors to transparent masks, these inventions aim to thwart facial recognition cameras.

Virtual borders have also been subtly dividing the world.

Wide Angle Motion Imagery (WAMI) is a surveillance game-changer. And it’s here.

Researchers discover government agencies use facial recognition software on photos from local DMVs.

It could be the largest data leak of a Russian intelligence agency.

Fingerprinting and facial recognition may lead the way in air travel.

China has long spied on its own citizens, but a new report shows how foreigners are increasingly falling under the nation’s watchful eye.

Radio-frequency signals can be used to track people’s movements in their own homes.

China is testing electronic monitoring of students’ attention levels.