Mind & Brain

All Stories

What doesn’t kill you makes you stronger. That old adage roughly sums up the idea of antifragility, a term coined by the statistician and writer Nassim Taleb. The term refers […]

A bat and a ball cost $1.10 in total. The bat costs $1.00 more than the ball. How much does the ball cost?

Scientists use tripping rats to show that LSD disrupts communication between two key brain regions.

From “shell shock” to “combat fatigue,” the wars of the past century have violently illuminated the power trauma can wield over the mind and body.

Conventional psychiatric practices tell us that if we feel bad, take this drug and it will go away. But after years of research with some of the top psychiatric practitioners […]

Trauma happens to everyone, but the way you respond to it determines its impact on your brain and body.

Here’s a letter from our co-founders on ways to help the world get smarter, faster, through engaging, actionable content.

The age-old question, finally answered. Kind of.

These modern-day hermits can sometimes spend decades without ever leaving their apartments.

Riddle me this: what do brain teasers tell you during a job interview? A lot, but not about the applicant.

These five main food groups are important for your brain’s health and likely to boost the production of feel-good chemicals.

Although initial contact with outsiders is stressful, over time we figure out how to fit them into our lives.

A new Stanford study delves into whether passions are fixed or developed.

Can neuroscience provide an alternative hypothesis for the Mandela effect, without invoking quantum physics?

Climate change poses a threat to our mental health. Building connected communities is one way to combat a rise in suicide rates as global temperatures increase.

Bushier eyebrows are associated with higher levels of narcissism, according to new research.

We all make mistakes. However, acknowledging them can help you overcome the “sunk cost fallacy” and move on.

A new study seeks to identify, classify, and categorize the entire range of human feelings. It’s very tricky.

Daydreaming is good. But if you’re doing too much of it, you might have a totally different brain.

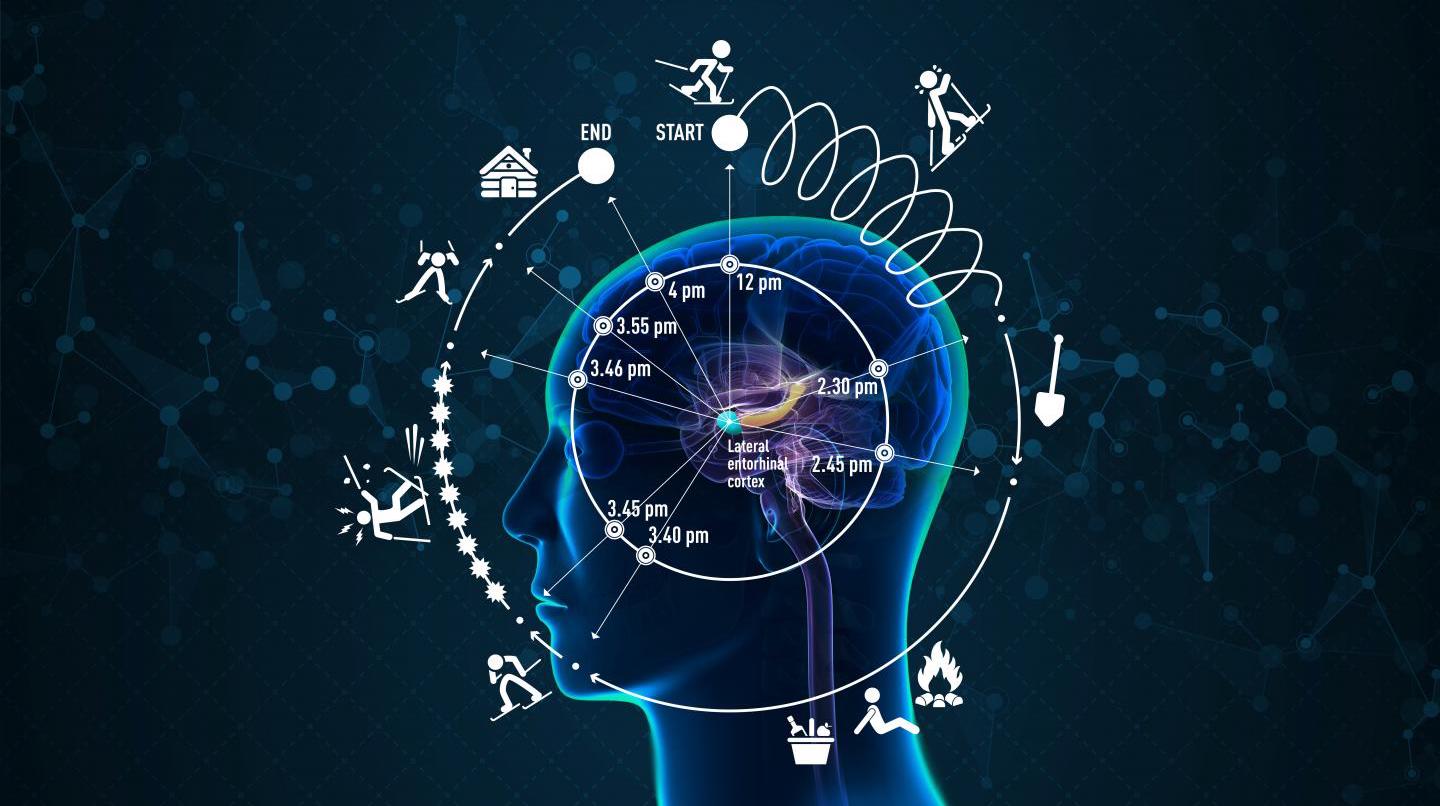

Researchers find the “neural clock” that orders and timestamps experiences and memories.

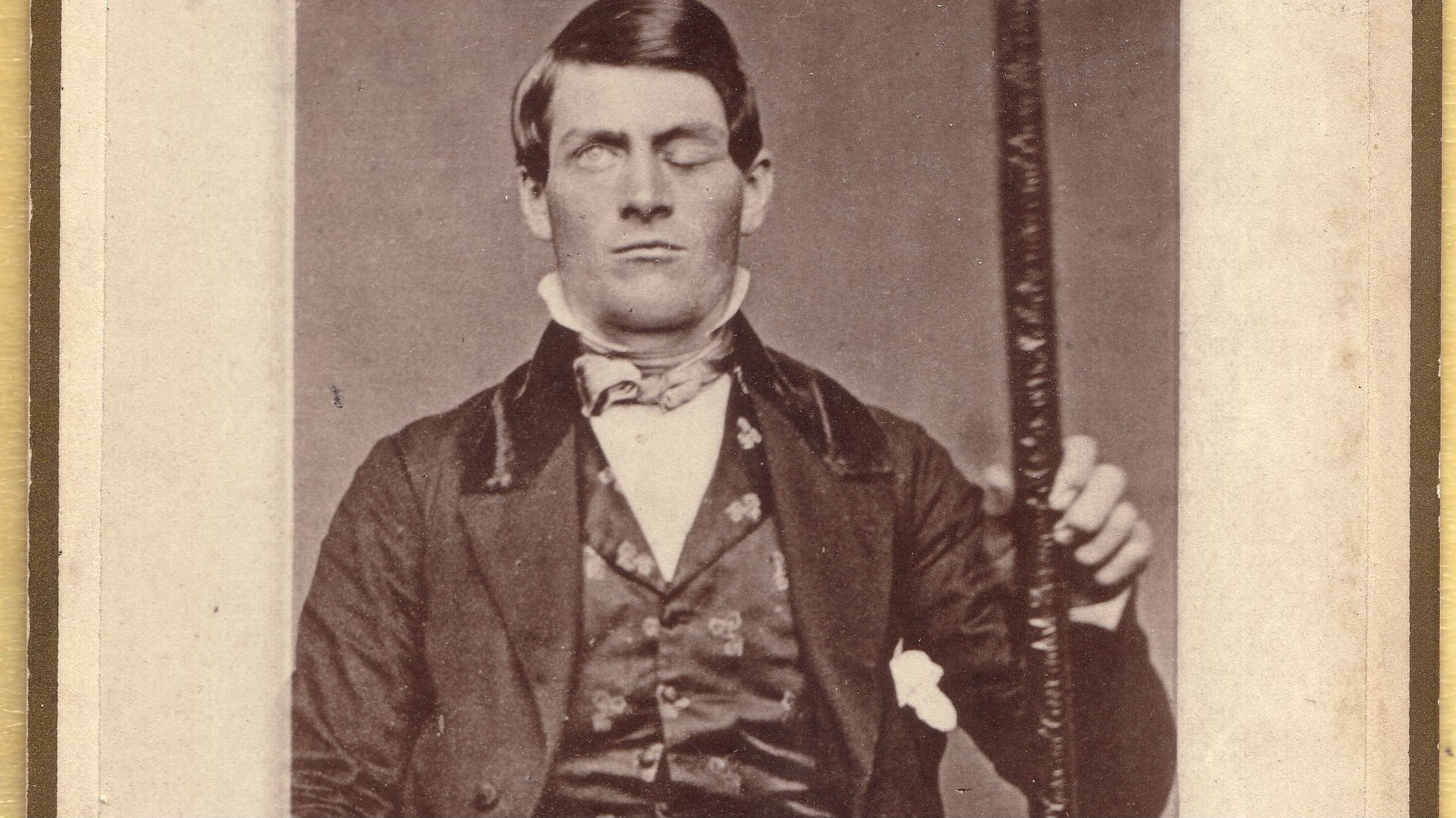

These ten characters have all had a huge influence on psychology. Their stories continue to intrigue those interested in personality and identity, nature and nurture, and the links between mind and body.

When Adele sings “It felt like a movie…”, there’s a scientific reason that it did. Your brain is technically unconscious about 240 times a minute.

A large longitudinal study finds a correlation between a higher IQ, subjective age, and biological age.

Researchers at MIT believe they might have located the neural regions responsible for pessimism.

A new study finds that even minutes of meditation or mindfulness increases your cognitive capabilities.

In his new book, Nick Chater writes that what we see is what we get.

Your brain organizes your memories in your sleep thanks to some incredible neuroscience.

Acetyl-L-carnitine has long been recognized as important for metabolism of fatty acids in mitochondria. Its newly discovered link to depression could one day change the lives of millions.

Depression, post-traumatic stress, workplace stress and fatigue are only some of the mental health problems that crafting can help relieve.

The study also showed that students who didn’t use electronic devices but attended lectures where their use was allowed also performed worse on tests.