truth

Philosopher and logician Kurt Gödel upended our understanding of mathematics and truth.

Scientists believe they have the answer, but philosophers prove them wrong.

It is impossible for science to arrive at ultimate truths, but functional truths are good enough.

A philosopher’s guide to detecting nonsense and getting around it.

Researchers at Cornell found through new experiments that people will overlook dishonesty if it benefits them and the group they identify with.

The truth is a messy business, but an information revolution is coming. Danny Hillis and Peter Hopkins discuss knowledge, fake news and disruption at NeueHouse in Manhattan.

Do we really believe everything we say? Are you always trying to establish the truth when you argue? This thought experiment will help answer these questions.

Middle America is tired of those latte-sipping liberals and their “elite media” hanging out in New York City, but Ariel Levy makes the case that Americans aren’t as different from one another as they’d like to think.

Capitalism has hijacked our emotions and rewired us for instant gratification—but we can reclaim our lives by practicing deep hope.

Why does America confuse fantasy for reality, in pop culture and in politics? Kurt Andersen can pinpoint the moment it happened.

The Internet is all shadows and mirrors, but what if it were the central source of truth? Thanks to blockchain technology, it’s a future that’s possible.

Is your Facebook wall more of a façade? Data shows that people are brutally honest with Google, but that Facebook is a pack of shameless lies.

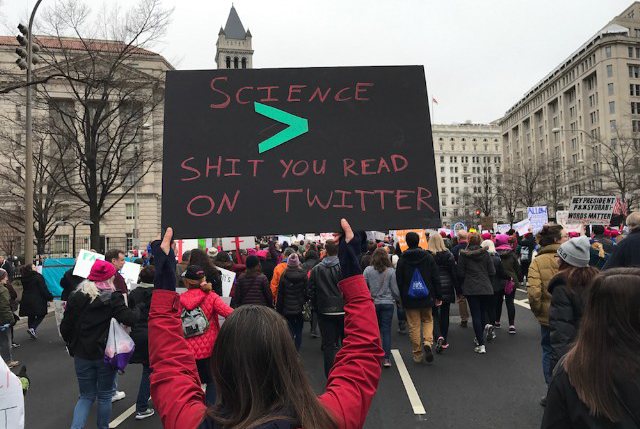

From Abraham Lincoln’s founding of the National Academy of Sciences in 1863, to the US currently leading the world in the Nobel Prize count (a third of which we owe to immigrants), America was built on science. What happens when we doubt and defund it?

When the president gets his primary information from talking heads on cable TV rather than intelligence briefings, we have a problem.

Director Ezra Edelman just won the Academy Award for Best Documentary Feature for ‘O.J. Simpson: Made in America’. By deconstructing one scene, he gives insight into how truth and art must co-exist in documentary filmmaking.

Scientists are planning a Scientists’ March on Washington on April 22 to protest the Trump administration’s anti-science policies.

Nowhere is anti-intellectualism more warmly incubated or does misinformation spread faster than in the online community, which is why Facebook – the third most-visited website in the world – has such a weighty responsibility.

“We love, as a culture, to attack messengers when the message is something that makes us feel uncomfortable,” says journalist Wesley Lowery.
