technology

With the right prompts, large language models can produce quality writing — and make us question the limits of human creativity.

“When you feel the isolation setting in at times, you have to reframe your mindset.”

“We should be informed and educated about the risks of AI, but we can’t be afraid,” Khan Academy founder Sal Khan told Big Think.

These scrolls are the only remaining intact library of ancient Rome — and they will crumble at a touch.

Can AI and animals coexist? Philosopher Peter Singer gives us a nuanced take on the issue.

“You’re not punished for failing, you’re punished for not trying.” Former Uber exec Emil Michael on how to truly achieve success.

It’s like combining Google Translate with a time machine.

Nagomi helps us find balance in discord by unifying the elements of life while staying true to ourselves.

Jimena Canales shares the “demons” that shaped computer science.

The first human who isn't an Earthling could be born in our lifetime.

What lies in store for humanity? Theoretical physicist Michio Kaku explains how different life will be for your descendants, and maybe for your future self, if the timing works out.

Theoretical physicist Brian Greene explores the potential particles of time and why we could, in theory, travel forward in time but not back.

Social media isn’t the majority – it’s the vocal fringe.

Dr. Sara Walker, an astrobiologist and theoretical physicist, is questioning the very nature of life and how we're attempting to find it elsewhere.

Arguments on social media are notorious. Can practicing intellectual humility make us smarter and happier? Science says yes.

We're wrong about what other people think, and that harms the next generation.

Is social media changing your memory? Here’s what the science actually says.

To understand the edges of our universe, we’ll need to explore the edges of our own philosophies.

Poachers drove the Northern White Rhino to extinction. One scientist and her “frozen zoo” are on a mission to bring them back.

Humanity’s most advanced tech still hasn’t unraveled the mysteries of the human mind. Can brain scans show us how we store memories?

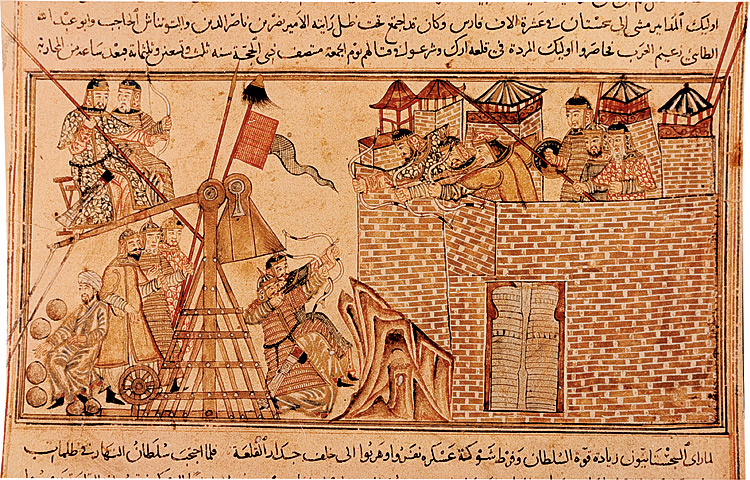

Historians know how military technologies evolved, but the reasons why remain poorly understood.

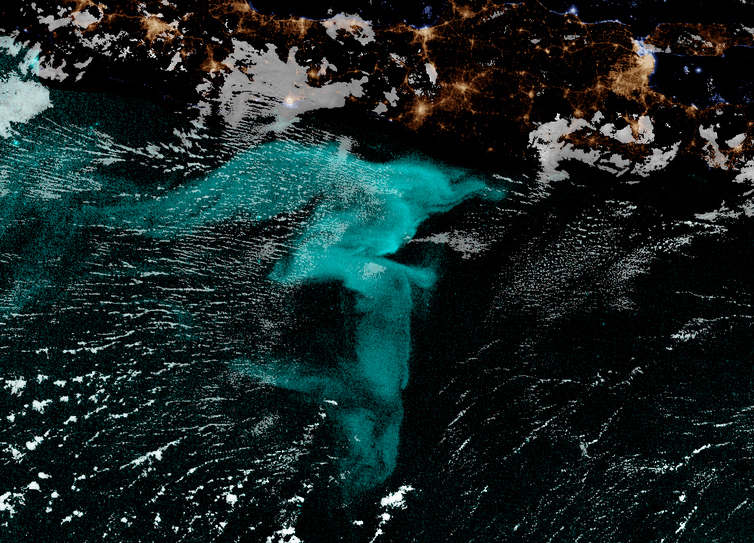

To date, only one research vessel has ever encountered a milky sea.

Scientists are solving the problem of costly energy storage.

A black swan event is rare but disruptive — and might be predictable.

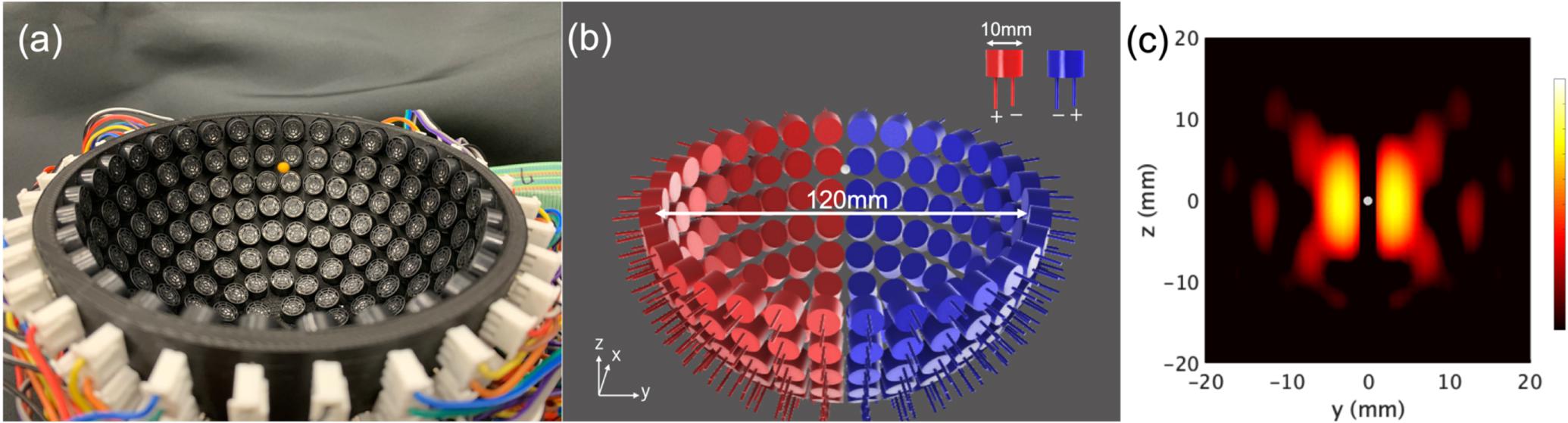

The design could help restore motor function after stroke and enhance virtual gaming experiences.

Prosthetic arms can cost amputees $80,000. A startup called Unlimited Tomorrow is aiming to change that by making customized 3D-printed bionic arms for just $8,000.

Think of a combination of immersive virtual reality, an online role-playing game, and the internet.

Three cutting-edge techniques – the gene-editing tool CRISPR, fluorescent proteins and optogenetics – were all inspired by nature.

Fear that new technologies are addictive isn’t a modern phenomenon.

The non-contact technique could someday be used to lift much heavier objects — maybe even humans.