What was it like when the cosmic dark ages ended?

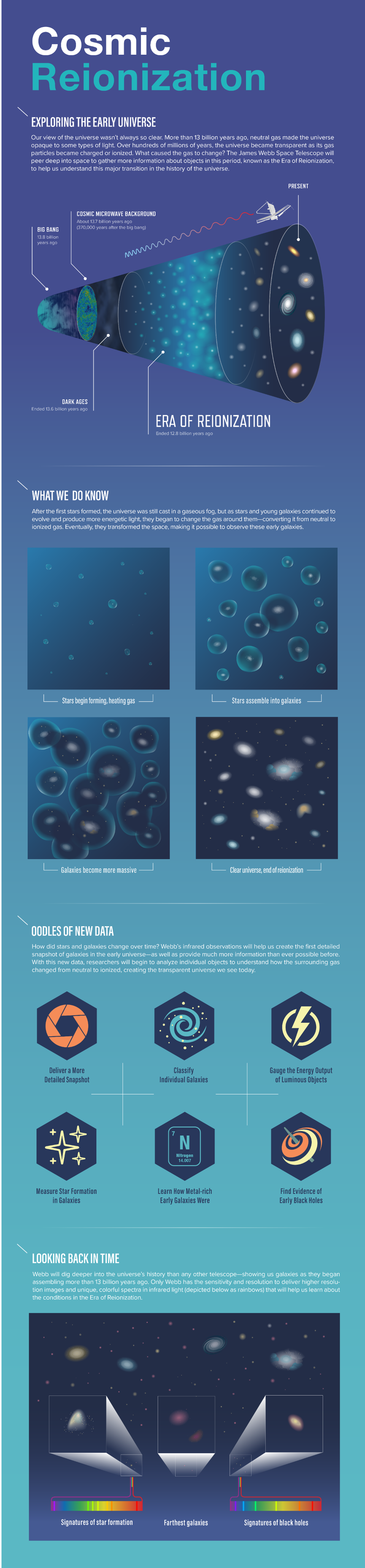

- Even though the very first stars and galaxies formed relatively early on in the Universe’s history, it took a full 550 million years for the last of the light-blocking neutral atoms to become reionized.

- Until that event occurred, the Universe was experiencing what’s known both as the cosmic dark ages and as the epoch of reionization, depending on whether you value the extinction or the starlight more.

- Once the cosmic dark ages came to an end, the Universe finally took on a much more modern look compared to how it was before. Here’s what that critical stage of cosmic growth was like.

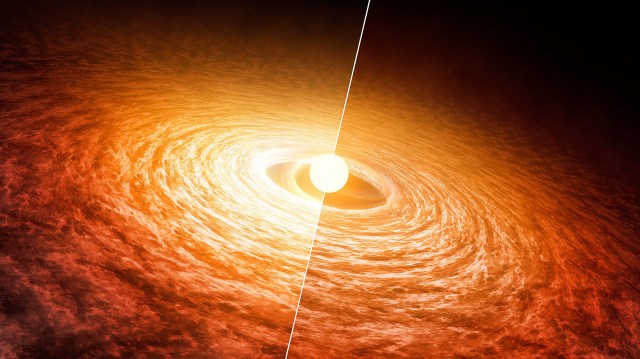

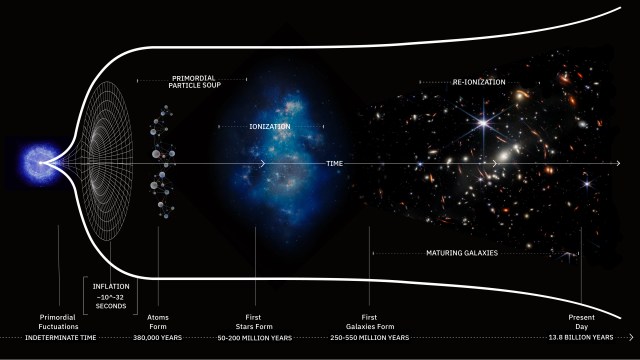

Forming stars sounds like the easiest thing in the Universe to do, given enough time. However, making stars that are actually visible to an observer is, perhaps surprisingly, a lot more challenging. Once you get a sufficiently large amount of mass together, so long as you give it enough time to gravitate, you’ll be able to watch it collapse down into small, dense clumps. If enough mass comes together in those clumps under the right conditions, stars will no doubt ensue. This is how you form stars today, and it’s how we’ve formed stars all throughout our cosmic history, going back to the very first ones some 50-100 million years after the Big Bang.

But even with the first stars burning, as they go about fusing hydrogen into heavier elements and converting that energy into a form that results in the emission of tremendous amounts of light, those stars aren’t necessarily visible to anyone around to observe them. The Universe is simply too good at absorbing and blocking that light. The reason? All of the atoms in the Universe, during the time that the first stars exist, are neutral, and there are simply too many of them for the starlight to penetrate. It took hundreds of millions of years for the Universe to allow that light to freely pass through it: a time known (from the perspective of light) as the cosmic dark ages, but known (from the perspective of atoms) as the epoch of reionization. It’s a vital part of the cosmic story of us whose importance is greatly underappreciated.

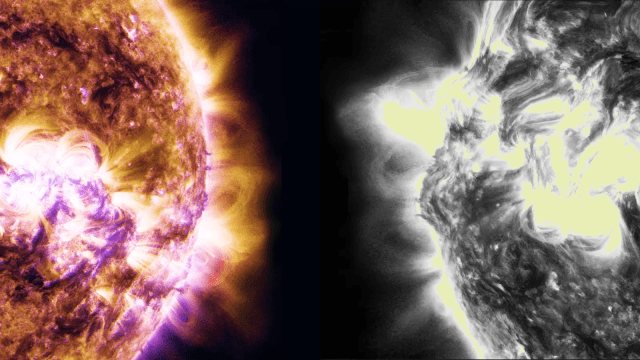

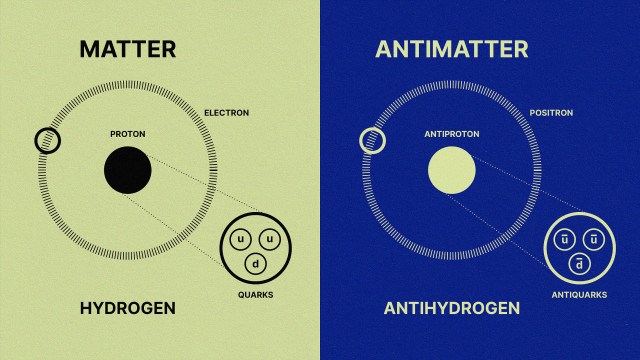

If you want to know what lights up the Universe, the answer always depends on which wavelengths of light you’re looking at it in. The Universe is always illuminated by the cosmic microwave background: the leftover radiation from the Big Bang itself. Early on, this radiation was coupled to the plasma of ionized nuclei and free electrons, prior to the formation of stable, neutral atoms. Less than half-a-million years after the Big Bang, neutral atoms formed and this radiation simply streamed, freely, amidst the sea of atoms.

But why wasn’t this radiation blocked by the now-intervening neutral atoms that populate the abyss of empty space? The reason is wavelength: the cosmic radiation streams freely through the atoms because it is much lower in energy than neutral (mostly hydrogen) atoms are capable of absorbing, at wavelengths too long for absorption to take place. If the radiation were higher in energy, atoms would not only absorb it, they would re-scatter it in all directions, where it would be further absorbed by additional atoms. It’s only because the radiation is so low in energy (it’s primarily infrared light) that it can freely pass through the space that neutral atoms occupy.
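To put rough numbers on that energy gap, here is a minimal sketch in Python. It assumes the radiation behaved as a blackbody at about 3000 K when neutral atoms formed (a commonly quoted figure that does not appear in the text above), and it compares the typical photon energy to the 10.2 eV needed to lift ground-state hydrogen to its first excited level. The function names are illustrative only.

```python
# Physical constants (rounded CODATA values).
H_PLANCK_EV_S = 4.135667696e-15   # Planck constant, eV*s
C_M_PER_S = 2.99792458e8          # speed of light, m/s
WIEN_B_M_K = 2.897771955e-3       # Wien displacement constant, m*K


def photon_energy_ev(wavelength_m: float) -> float:
    """Energy of a single photon of the given wavelength, in eV."""
    return H_PLANCK_EV_S * C_M_PER_S / wavelength_m


def wien_peak_wavelength_m(temperature_k: float) -> float:
    """Wavelength at which a blackbody of this temperature peaks."""
    return WIEN_B_M_K / temperature_k


# Assumption: ~3000 K blackbody at the time neutral atoms formed.
T_RELEASE_K = 3000.0
# Lowest-energy absorption available to ground-state hydrogen.
LYMAN_ALPHA_EV = 10.2

peak_wavelength_m = wien_peak_wavelength_m(T_RELEASE_K)
peak_energy_ev = photon_energy_ev(peak_wavelength_m)

print(f"peak wavelength: {peak_wavelength_m * 1e9:.0f} nm (near-infrared)")
print(f"peak photon energy: {peak_energy_ev:.2f} eV vs {LYMAN_ALPHA_EV} eV needed")
```

Under these assumptions the typical photon falls short of the absorption threshold by nearly a factor of ten, which is the sense in which the radiation is "too long in wavelength" to be absorbed.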

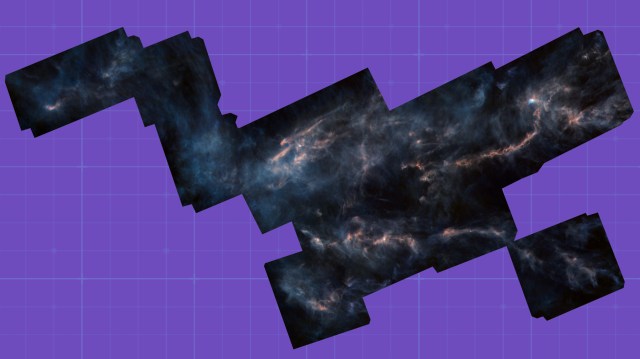

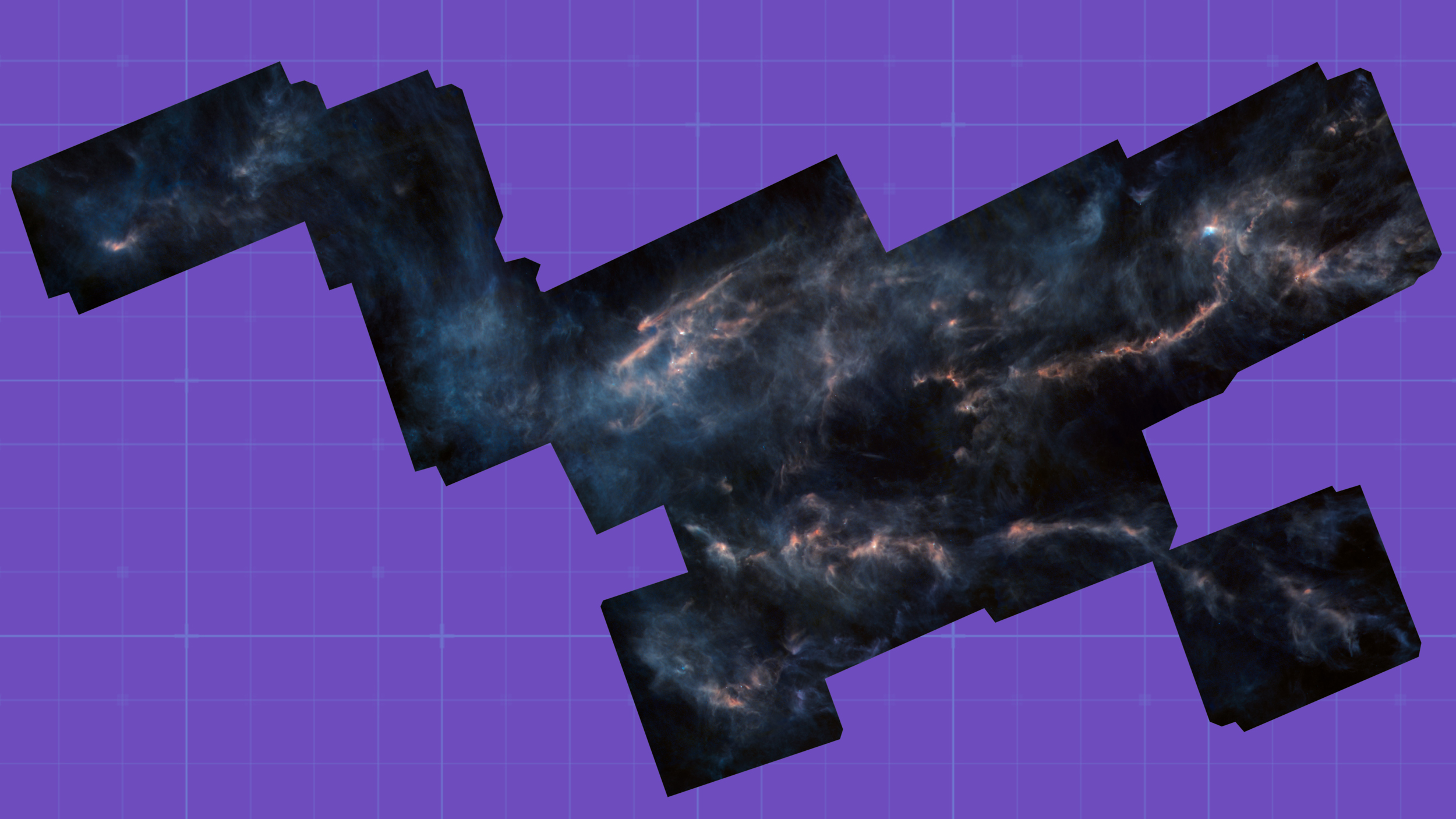

And the light-blocking effects of neutral atoms, so strong at visible and ultraviolet wavelengths but so weak at infrared wavelengths, play out not only in the very early Universe, but in modern times as well. We see this even in our own galaxy: the stars and objects that persist in the Milky Way’s galactic center cannot be seen in visible light. The dust and gas that are present block it, just as they efficiently block visible light all throughout the galactic plane. However, at longer wavelengths, infrared light goes clear through those neutral atoms. This explains why the cosmic microwave background doesn’t get absorbed, but starlight does.

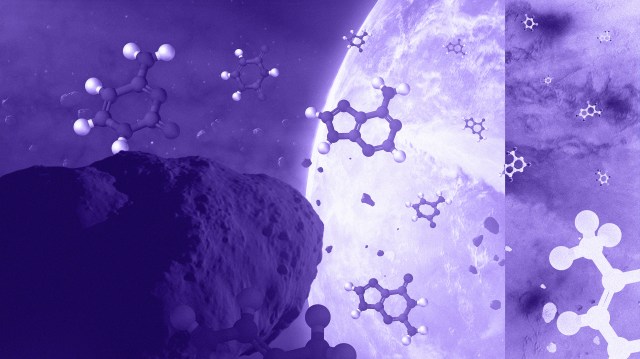

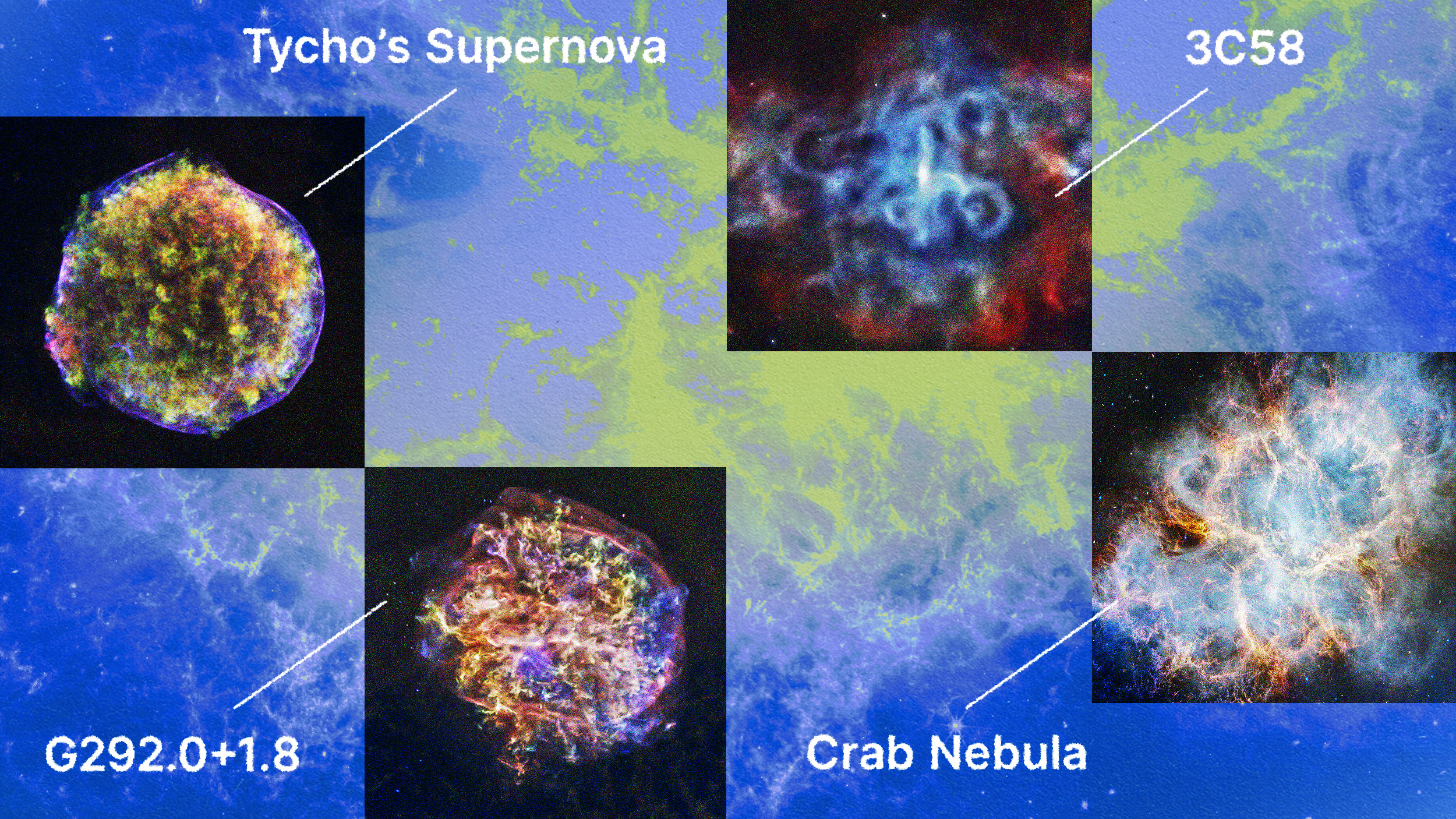

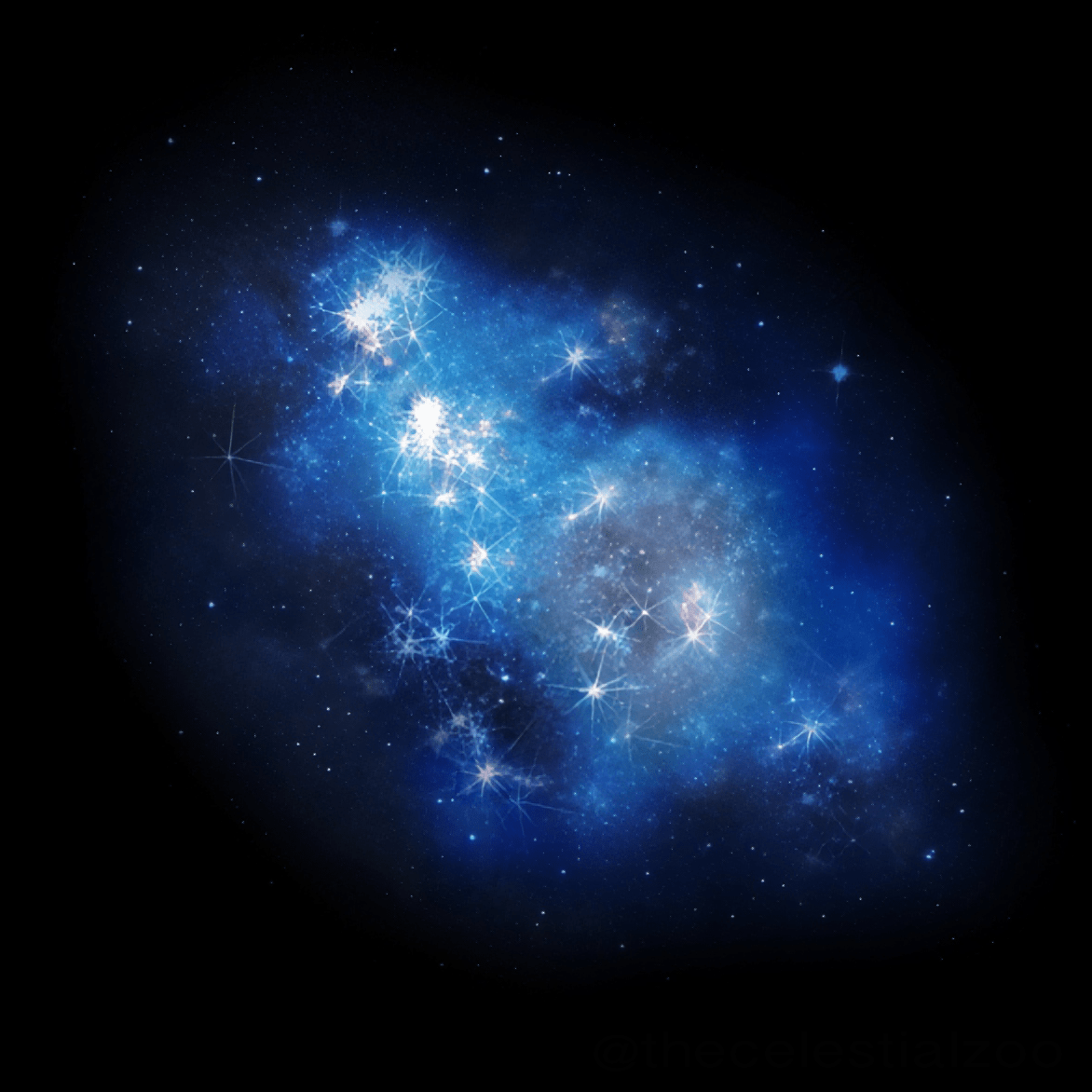

Thankfully, the stars that form in the Universe, particularly early on in cosmic history, can be massive and hot, with the most massive ones far hotter and more luminous than our Sun. Early stars can be tens, hundreds, or even (for Population III stars) thousands of times as massive as our own Sun, meaning they can reach surface temperatures of tens or even hundreds of thousands of degrees and luminosities millions of times that of our Sun. These behemoths are the biggest threat to the continued existence of any neutral atoms that happen to be spread throughout the Universe.

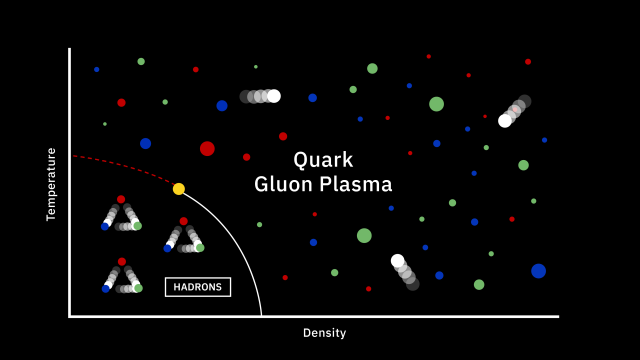

What determines whether atoms remain neutral, and capable of absorbing (visible) light, or whether they become ionized, and transparent to the light that our eyes typically perceive? It’s all about the energy of the radiation that strikes them. The key to whether an atom becomes ionized by nearby stars is temperature: stars above a certain temperature emit some fraction of their light in the ultraviolet portion of the spectrum, energetic enough to ionize a neutral atom. And remember, at all moments in cosmic history, even today, the most common atom in the Universe by number is hydrogen: more than 90% of the atoms present are still simply hydrogen.

For a hydrogen atom in its lowest-energy state, it takes a photon of 13.6 eV (or more) to ionize it, which very few photons emitted from most stars possess. That’s because most stars have their energy peak in either the visible or infrared portion of the spectrum, with few high-energy photons above that ultraviolet threshold. However, the hotter and more massive a star is, the more ionizing photons it produces. Because these are the shortest-lived stars, it’s only within a few million years of forming a new burst of stars that you get an abundance of ionizing photons, which coincides with large numbers of hydrogen atoms being ionized.
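How steeply does the supply of ionizing photons climb with temperature? The sketch below estimates the fraction of photons above 13.6 eV for an idealized blackbody. That idealization is an assumption: real stellar spectra deviate from a blackbody, especially in the ultraviolet, so the outputs are order-of-magnitude guides only. The 5772 K value is the Sun’s effective temperature; the 50,000 K value is an illustrative pick from the "tens of thousands of degrees" range, and the function name is hypothetical.

```python
import math

K_BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant, eV/K
HYDROGEN_IONIZATION_EV = 13.6          # threshold quoted in the text
ZETA_3 = 1.2020569031595943            # Riemann zeta(3), normalizes the photon count


def ionizing_photon_fraction(
    temperature_k: float,
    threshold_ev: float = HYDROGEN_IONIZATION_EV,
    terms: int = 200,
) -> float:
    """Fraction of blackbody photons with energy above threshold_ev."""
    x0 = threshold_ev / (K_BOLTZMANN_EV_PER_K * temperature_k)
    # The photon number spectrum goes as x^2 / (e^x - 1), with x = E / kT.
    # Its integral from x0 to infinity expands into a sum of exponentials:
    #   sum over n of e^(-n*x0) * (x0^2/n + 2*x0/n^2 + 2/n^3)
    tail = 0.0
    for n in range(1, terms + 1):
        tail += math.exp(-n * x0) * (x0**2 / n + 2 * x0 / n**2 + 2 / n**3)
    # The same integral from 0 to infinity equals 2 * zeta(3).
    return tail / (2 * ZETA_3)


sun_like = ionizing_photon_fraction(5772.0)     # Sun's effective temperature
hot_star = ionizing_photon_fraction(50_000.0)   # illustrative hot, massive star

print(f"Sun-like star: {sun_like:.1e} of photons can ionize hydrogen")
print(f"50,000 K star: {hot_star:.1%} of photons can ionize hydrogen")
```

In this idealized picture, a Sun-like star emits almost no ionizing photons, while a hot, massive star puts a sizable share of its output above the threshold.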

If you were to imagine a scenario in which all atoms inhabiting the Universe became ionized, the depths of star-free space would be clear for light to travel through, meaning we could see the distant Universe without a problem. All the starlight that was emitted would freely propagate through space, and none of it would be extinguished unless and until it arrived at the proverbial eyes of an observer. But so long as even a small percentage of the atoms remains neutral, that emitted starlight could be effectively absorbed, making it extraordinarily challenging to detect anything from the era of the first stars and galaxies.

It’s true that a smaller percentage of neutral atoms means that starlight must travel a greater distance before it is fully absorbed, as the “extinction” of light in astronomy is cumulative. What we need to happen, if we want the Universe to become truly transparent to starlight, is for enough star formation to occur that it floods the Universe with a sufficient number of ultraviolet photons to ionize the neutral matter in the intergalactic medium so that starlight can travel unimpeded. This requires a large amount of star formation, and requires it to occur quickly enough that the freed protons and electrons don’t find one another and recombine again.
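The cumulative nature of extinction can be sketched with simple exponential attenuation: each stretch of neutral gas removes the same fraction of whatever light reaches it. The snippet below is a toy illustration in arbitrary units. The `absorption_per_unit_length` parameter and both function names are hypothetical, and no real cross-sections or densities are implied.

```python
import math


def transmitted_fraction(
    neutral_fraction: float,
    path_length: float,
    absorption_per_unit_length: float = 1.0,
) -> float:
    """Fraction of light surviving a path through partly neutral gas."""
    # Optical depth grows with both the neutral fraction and the distance.
    optical_depth = neutral_fraction * absorption_per_unit_length * path_length
    return math.exp(-optical_depth)


def path_length_for_transmission(
    neutral_fraction: float,
    target_fraction: float,
    absorption_per_unit_length: float = 1.0,
) -> float:
    """Distance at which only target_fraction of the light survives."""
    return -math.log(target_fraction) / (
        neutral_fraction * absorption_per_unit_length
    )


# Distance needed to dim the light by half, fully neutral vs. 1% neutral.
fully_neutral = path_length_for_transmission(1.0, 0.5)
mostly_ionized = path_length_for_transmission(0.01, 0.5)

print(f"fully neutral gas: half the light is lost after {fully_neutral:.2f} units")
print(f"1% neutral gas:    half the light is lost after {mostly_ionized:.2f} units")
```

In this toy picture, cutting the neutral fraction by a factor of 100 lets light travel 100 times as far before suffering the same dimming, but given a long enough path, even a small neutral remnant still adds up.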

The very first stars in the Universe make a small dent in the population of neutral atoms surrounding them, but the earliest of the star clusters are small and short-lived. The Universe will remain largely neutral with them alone, especially once the most massive of those stars die and neutral atoms re-form. The second generation of stars, formed in the aftermath of the first generation’s death, fares little better.

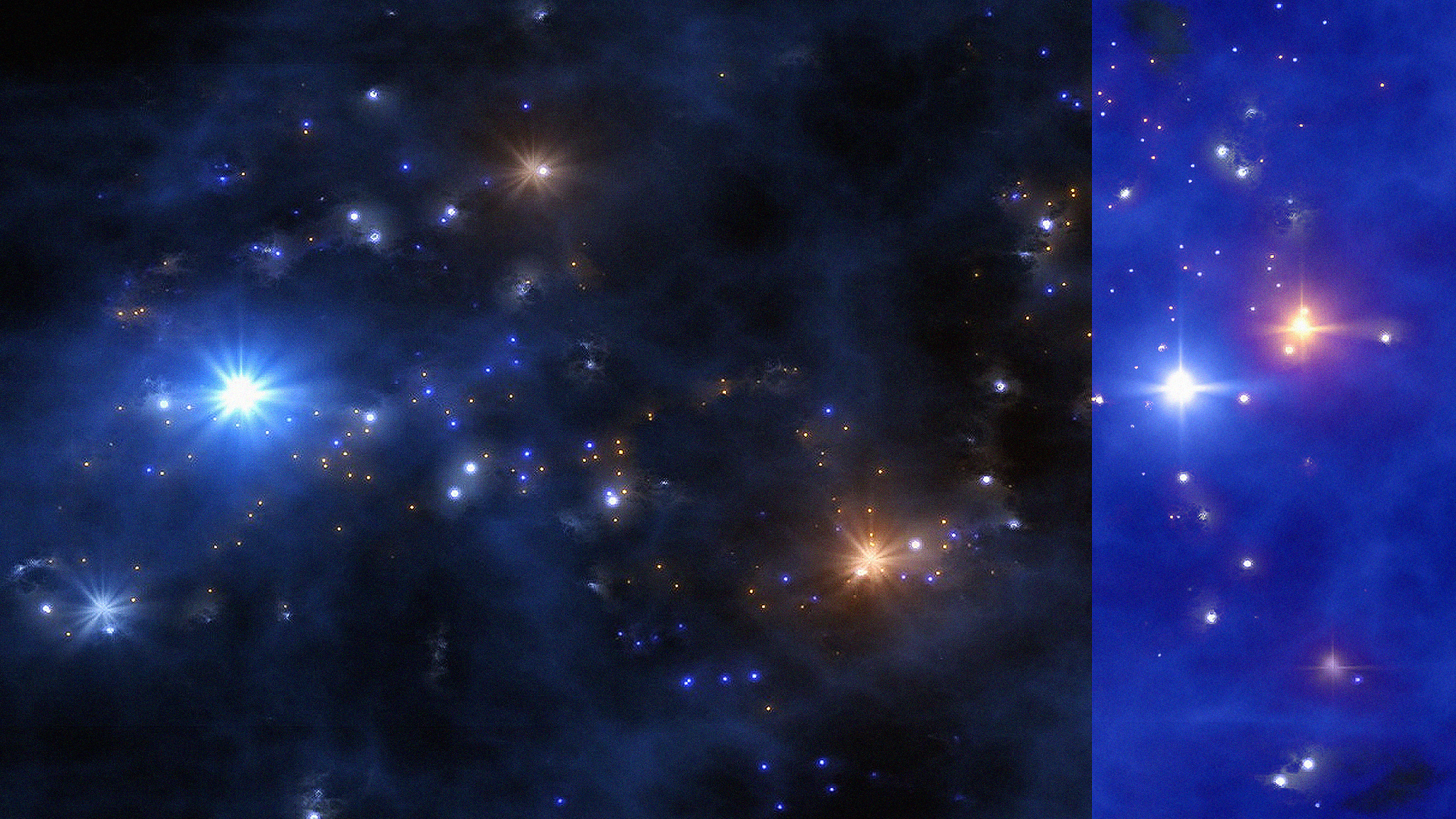

The big problem is that these newly formed stars form in clumps and clusters of perhaps a few million solar masses at most. These early star clusters only have about 0.001% of the masses (and numbers) of stars found in the Milky Way, meaning that for the first few hundred million years of our Universe, the stars within it are barely enough to make a difference in the neutral matter permeating all of space.

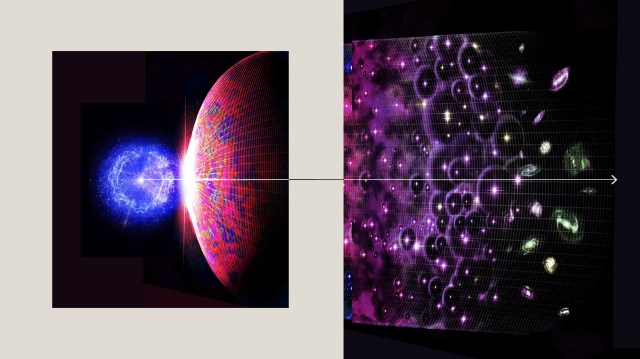

But that begins to change when star clusters merge together, forming the first galaxies. As large clumps of gas, stars, and other matter merge together, they trigger a tremendous burst of star formation, lighting up the Universe as never before. As time goes on, a slew of phenomena take place all at once:

- the regions with the largest collections of matter attract even more early stars and star clusters toward them,

- the regions that haven’t yet formed stars can begin to,

- and the regions where the first galaxies are made attract other young galaxies,

all of which serves to increase the overall star formation rate.

In other words, there are three things going on all at once that interplay with one another.

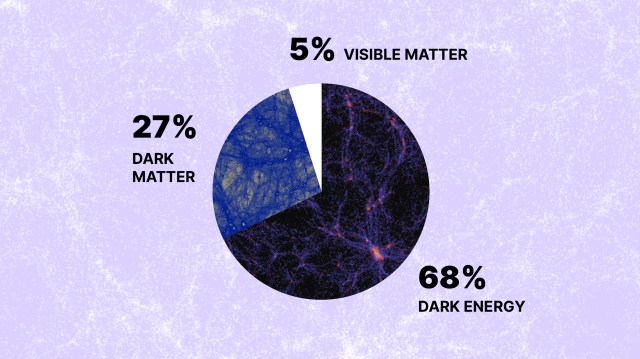

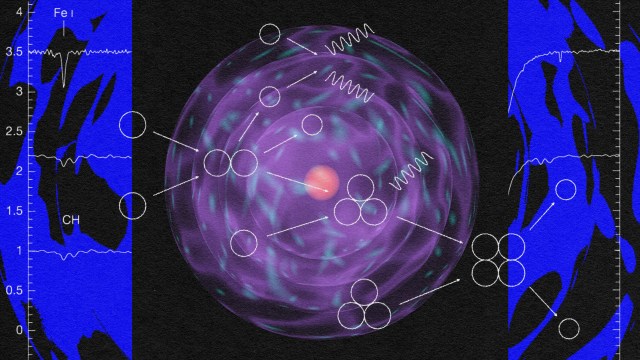

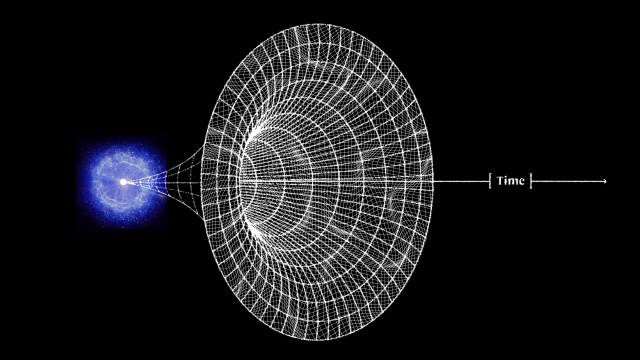

- There are neutral atoms, populating all of space everywhere, clumping and clustering together across the cosmic web.

- There are stars and star-forming regions, found forming within the densest of clumps, and which produce radiation that ionizes these neutral atoms.

- And there are the free electrons and bare atomic nuclei, created by those ionizing photons, but that are capable of finding one another and re-forming neutral atoms once again.
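The tug-of-war among these three ingredients can be caricatured with a toy rate equation: neutral atoms get ionized at a rate proportional to how many remain, while electrons and nuclei recombine at a rate proportional to the square of the ionized fraction (since each recombination needs one of each). The sketch below is only an illustration under those assumptions. The rates are in arbitrary units, the function name is hypothetical, and nothing here is calibrated to the real Universe.

```python
def evolve_ionized_fraction(
    ionization_rate: float,
    recombination_rate: float,
    steps: int = 1000,
    dt: float = 0.01,
    initial_fraction: float = 0.0,
) -> float:
    """Step a toy ionization/recombination balance forward in time.

    Returns the ionized fraction (0 = fully neutral, 1 = fully ionized)
    after `steps` forward-Euler steps of size `dt`.
    """
    x = initial_fraction
    for _ in range(steps):
        ionizing = ionization_rate * (1.0 - x)     # acts on neutral atoms
        recombining = recombination_rate * x * x   # needs electron + nucleus
        x += dt * (ionizing - recombining)
        x = min(1.0, max(0.0, x))                  # keep the fraction physical
    return x


# Weak starlight: recombination keeps much of the gas neutral.
few_stars = evolve_ionized_fraction(ionization_rate=1.0, recombination_rate=1.0)
# Ten times the ionizing output: the balance shifts toward ionized gas.
many_stars = evolve_ionized_fraction(ionization_rate=10.0, recombination_rate=1.0)

print(f"ionized fraction with few stars:  {few_stars:.2f}")
print(f"ionized fraction with many stars: {many_stars:.2f}")
```

The point of the toy is qualitative: as long as recombination is active, a modest supply of ionizing photons stalls at a partially ionized state, and it takes a sustained, much larger supply to push the gas toward full ionization.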

If we were to map out these various features within the Universe at this time, what we’d see is that the star formation rate increases at a relatively steady rate for the first few billion years of the Universe’s existence. In some favorable regions, enough of the matter gets ionized early enough that we can see through the Universe before most regions are reionized; in other, more unfavorable regions, it may take as long as two or three billion years for the last populations of pristine, neutral matter to be blown away.

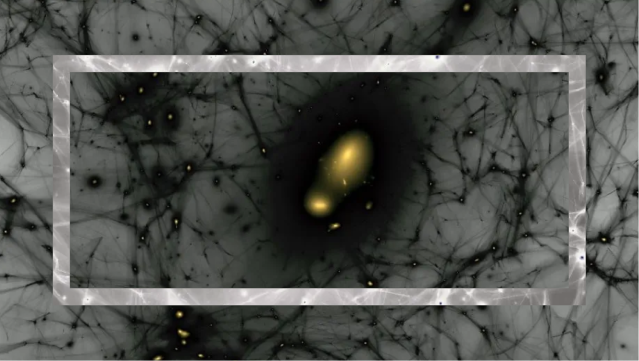

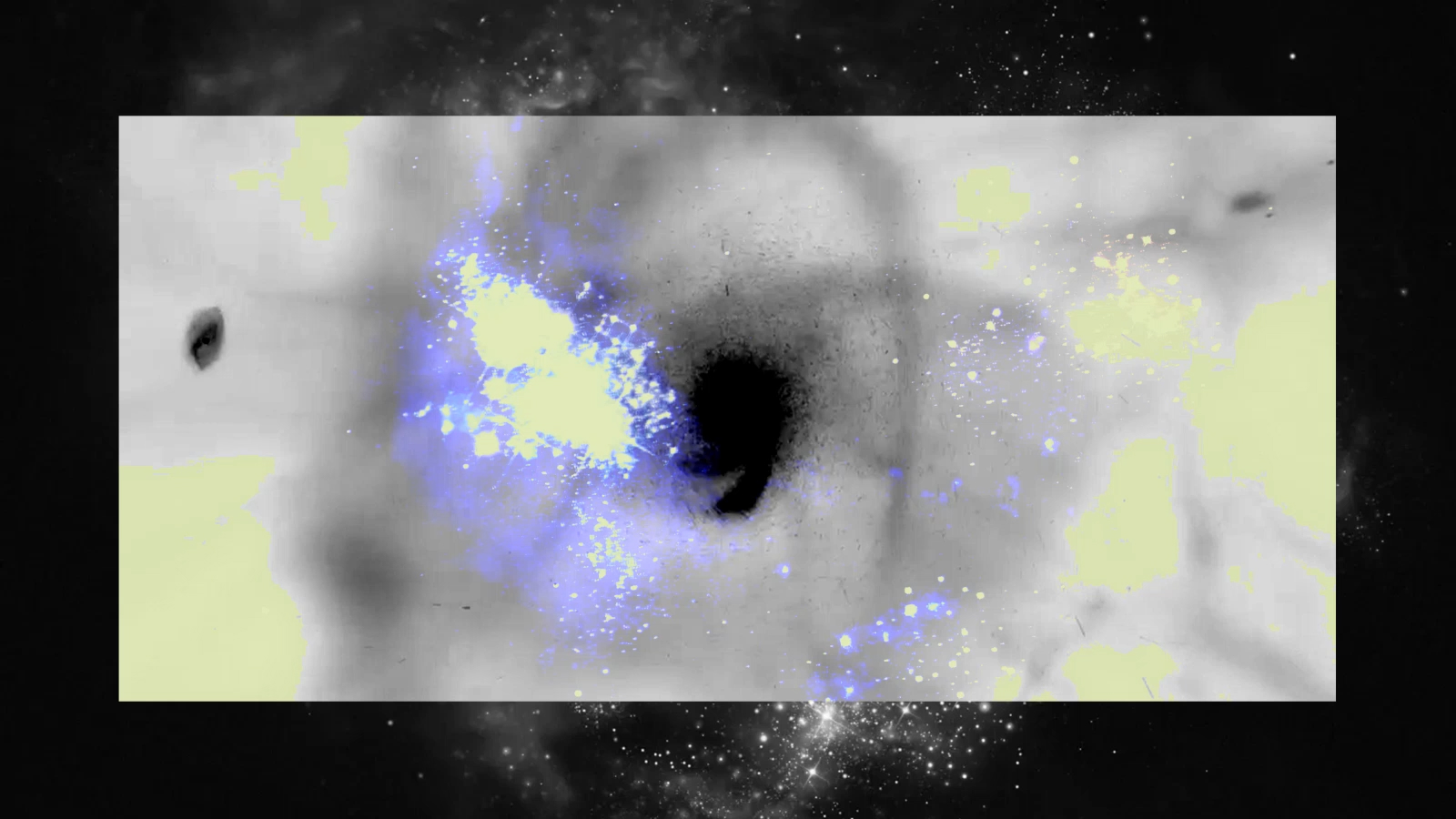

If you were to map out the Universe’s neutral matter from the start of the Big Bang, you would find that it starts to transition to ionized matter in clumps, but you’d also find that it took hundreds of millions of years to mostly disappear. It does so unevenly, and preferentially along the locations of the densest parts of the cosmic web.

Some lines of sight become transparent to visible light relatively quickly: in just a few hundred million years, where star formation is the most active and energetic, and where the greatest number of ultraviolet photons are produced at early times. Other lines of sight will still have pristine, neutral matter found within them as many as a couple of billion years after the Big Bang. The process of reionization is uneven, and doesn’t “complete” at the same rate in all locations and directions.

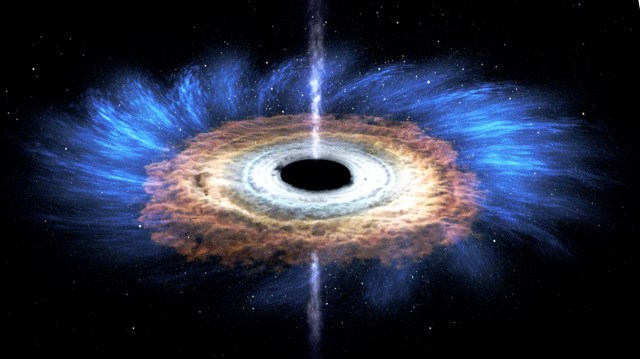

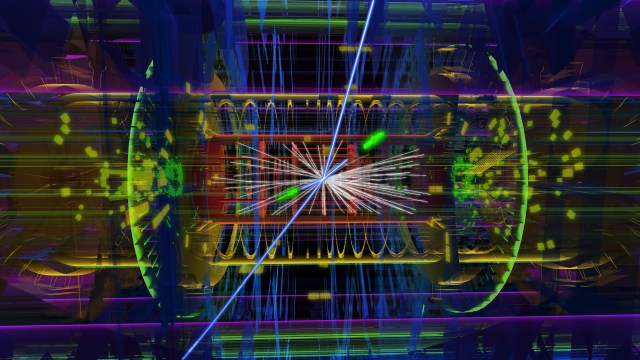

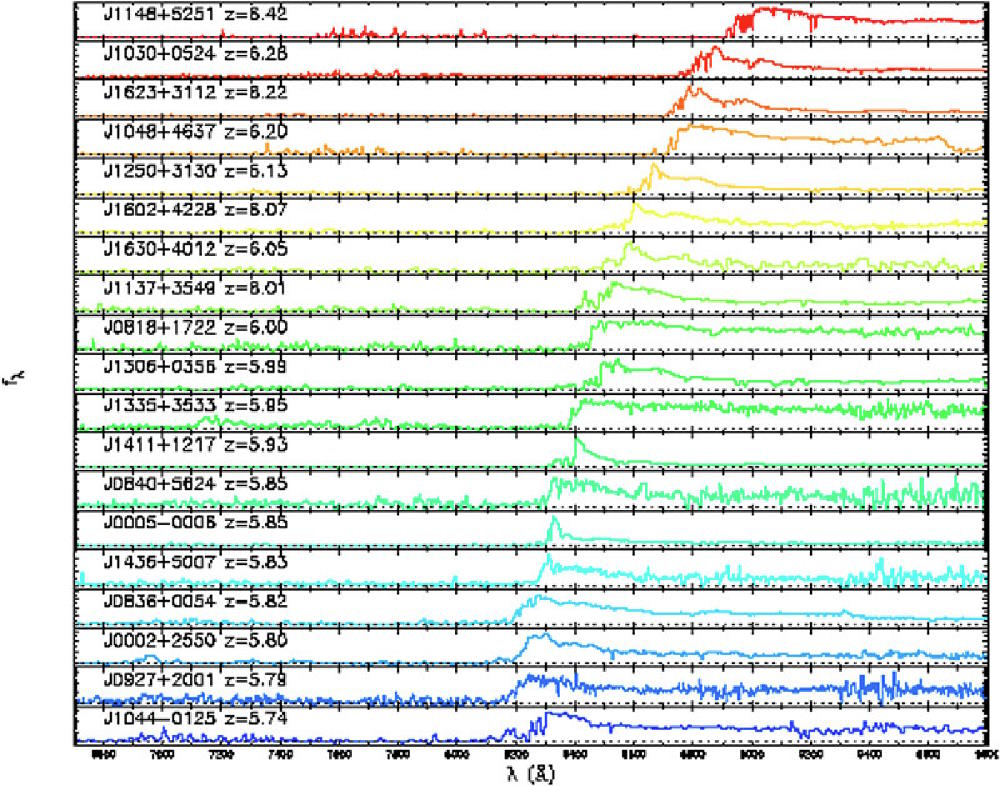

On average, it takes 550 million years from the inception of the Big Bang for the Universe to become reionized and transparent to starlight. We see this from observing ultra-distant quasars, which continue to display the absorption features that only neutral, intervening matter causes. We can also see this from ultra-distant galaxies, by looking at which features they display and which ones are effectively absorbed by the neutral matter within the intergalactic medium.

The fact that there are a few directions where the matter is reionized much earlier than average indicates to us that structure formation is uneven, and gives us hope of finding early galaxies even before that 550 million year limit. Hubble uncovered one such galaxy, GN-z11, whose light comes from an earlier time than the completion of reionization: just 407 million years after the Big Bang.

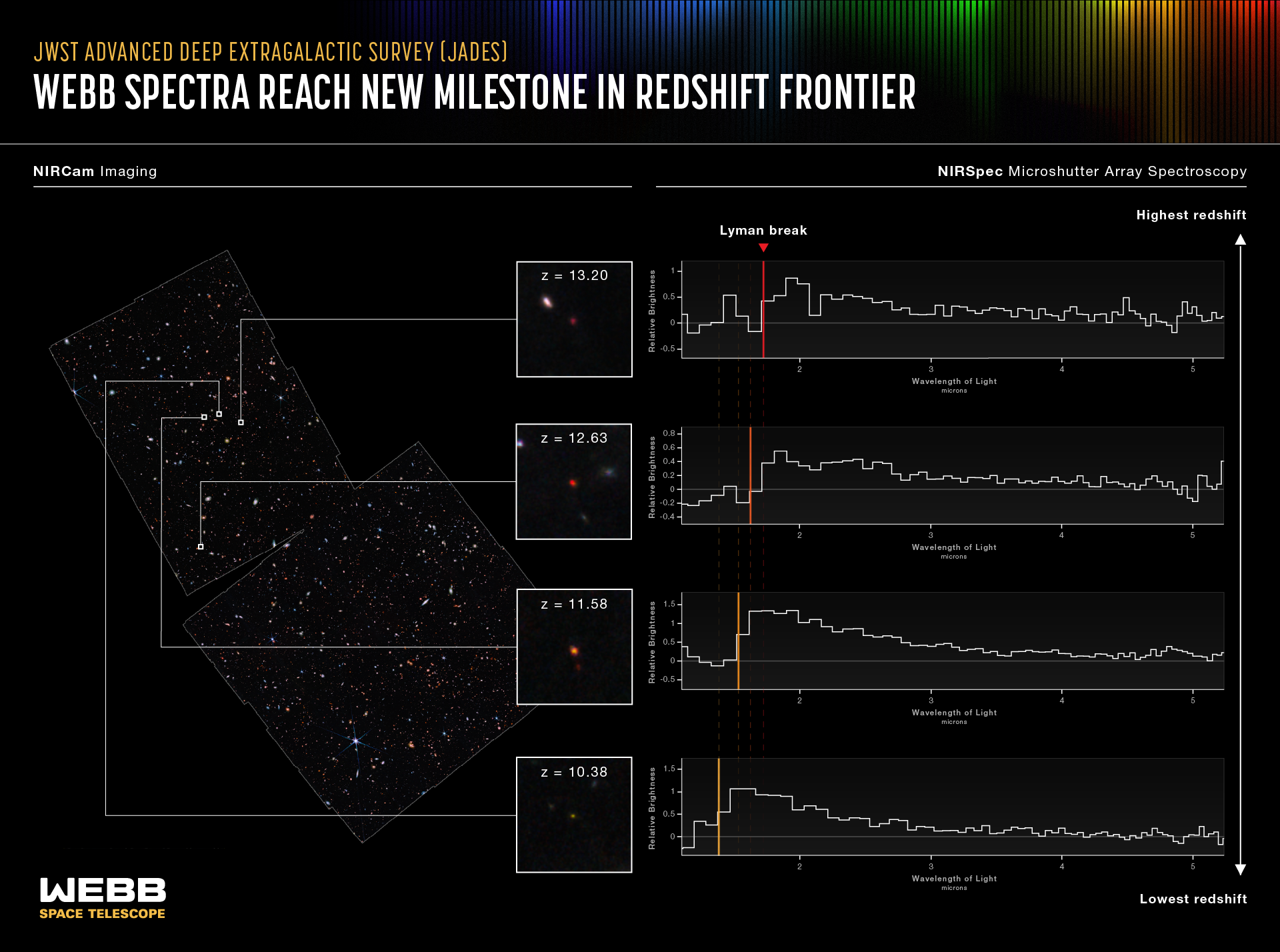

However, other, more distant galaxies have since been discovered by JWST that are invisible to Hubble’s capabilities, as JWST’s sensitivity to longer wavelength, infrared light allows it to see features that are more difficult for neutral atoms to absorb; the Universe becomes effectively transparent earlier at longer wavelengths, when less reionization has occurred. Infrared eyes are what it takes to probe the epoch of reionization itself.

At the most extreme cosmic distances ever probed, there are not yet galaxy clusters in the Universe, and the first galaxies, which are largely thought to have taken shape between 200 and 250 million years after the Big Bang, cannot be revealed by visible light observations alone. But through the eyes of an infrared observatory, where the light is long enough in wavelength to not be absorbed by these neutral atoms, this starlight might shine through after all.

It’s no coincidence, then, that the James Webb Space Telescope was designed to look in the near-and-mid-infrared portion of the spectrum, all the way out to wavelengths of 30 microns: some 40 times as long as the longest-wavelength light that human eyes can see. In fact, of the 10 most distant galaxies known at the end of 2023, 9 are JWST galaxies, with GN-z11 the sole confirmed galaxy not requiring either discovery or confirmation with JWST.

The light created in the earliest era of stars and galaxies plays a cosmic role whose importance cannot be overstated: the role of making the Universe transparent to all wavelengths of starlight. The ultraviolet light works to ionize the matter around it, enabling visible light to travel progressively farther and farther as the ionization fraction increases. The visible light gets scattered in all directions until reionization has gotten far enough to enable our best telescopes today to see it. But the infrared light, also created by the stars, passes through even the neutral matter, giving our 2020s-era telescopes a chance to find those stars, even where no visible light is available.

Once starlight breaks through the sea of neutral atoms, even before reionization completes, it gives us a chance to detect the earliest objects we’ll ever have seen. It’s no surprise that, in just its first ~18 months of science operations (to date), JWST has broken a slew of cosmic records, including records for:

- most distant galaxy,

- most distant proto-cluster of galaxies,

- most distant black hole,

- most distant red supergiant star,

- most distant gravitational lens,

- and most distant quasar.

The most distant reaches of the Universe are slowly succumbing to our improved instruments and observatories, bringing the previously unseen, at last, into view. We just have to keep looking, and eventually, we’ll find out what’s truly out there.