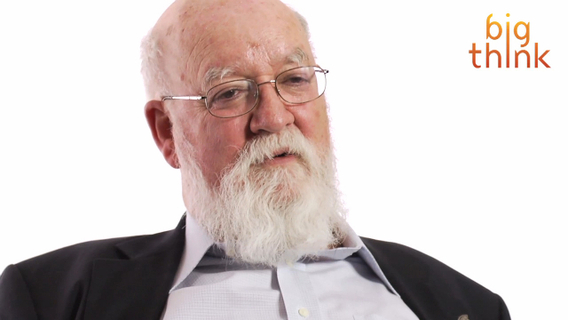

With his new book “Intuition Pumps and Other Tools for Thinking,” philosopher Daniel Dennett offers a kind of self-help book for deep thinkers: a series of thought experiments designed as a workout for the deliberative mind. Here he discusses reductio ad absurdum, “the workhorse of philosophical argumentation,” in which thinkers test an opponent’s argument by deducing an absurd consequence from its premises.

Daniel Dennett: One of the reasons I wrote this book is that, oddly enough, philosophers are famous -- notorious -- for being navel-gazers, for being reflective. I think, in fact, philosophers are often remarkably unreflective about their own methodology. I wanted to draw attention to how philosophers actually go about their business and get them thinking more self-consciously about the tools they use and how they use them.

A tool that everybody should be familiar with and, in fact, that people use all the time is the reductio ad absurdum argument. It's the sort of general-purpose crowbar of rational argument where you take your opponent's premises and deduce something absurd from them. That is, officially, you deduce a contradiction. We use it all the time without paying much attention to it. You might say something like, "If he gets here in time for supper, he'll have to fly like Superman."

Which is absurd -- nobody can fly that fast. You don't bother spelling it out; you just point out that something that somebody imagined or proposed has a ridiculous consequence. Well, let's look at one of the great granddaddy reductio ad absurdum arguments of all time. And that's Galileo's proof that heavy things don't fall faster than light things, leaving friction aside. He argued as follows.

Okay, suppose you take the premise that you're gonna show is false. Suppose heavier things do fall faster than light things. Now, take a stone A, which is heavier than another stone B. That means if we tied B to A with a string, B should act as a drag on A when we drop it, because A will fall faster and B will fall slower, and so A tied to B should fall slower than A by itself. But A and B tied together are heavier than A by itself, so they should fall faster. The pair should fall both faster and slower than A by itself. That's a manifest contradiction. So we know that the premise with which we began has to be false. That's a classic reductio ad absurdum. It's been known and named for several millennia, I guess. And, as I say, it's the workhorse of philosophical argumentation.
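For readers who like to see the steps laid out, the argument above can be sketched symbolically. The notation is not Dennett's and is assumed here only for illustration: $w(X)$ stands for the weight of a body $X$, $v(X)$ for the speed at which it falls, and $A{+}B$ for the two stones tied together and treated as a single body.

```latex
\begin{align*}
&\text{(P) Premise to be refuted:} && w(X) > w(Y) \;\Rightarrow\; v(X) > v(Y) \\
&\text{(1) Given:} && w(A) > w(B) \\
&\text{(2) From (P) and (1):} && v(A) > v(B) \\
&\text{(3) Drag: slower } B \text{ holds back faster } A\text{:} && v(A{+}B) < v(A) \\
&\text{(4) The tied pair is heavier than } A \text{ alone:} && w(A{+}B) = w(A) + w(B) > w(A) \\
&\text{(5) From (P) and (4):} && v(A{+}B) > v(A) \\
&\text{(6) (3) and (5) contradict each other, so:} && \neg\text{(P)}
\end{align*}
```

Steps (3) and (4) carry the two background assumptions the spoken version relies on: that a slower body retards a faster one it is tied to, and that the tied pair counts as one body whose weight is the sum of the two.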

Directed / Produced by Jonathan Fowler and Elizabeth Rodd