- What might be wrong with mixing human and animal parts? There are changes we could make to human beings by mixing in animal DNA that might make them better and there are changes we can make to human beings that might make them worse.

- You're made out of DNA from thousands and millions of ancestors and it's the collaboration between DNA from all your ancestors that keeps you alive.

- We are experiencing right now a remarkable deluge of discovery in terms of the causes of disease, much of it coming out of genomics.

BRYAN SYKES: Genetics and DNA do get to the central issue of what makes us tick. It's perhaps too deterministic to say that your genes determine everything you do. They don't. But, if you like, they're like the deck of cards you're dealt at birth. What you do with that deck, as in any card game, depends a lot on your choices, but it is influenced by those cards, the genes you got when you were born.

What I've enjoyed about genetics is looking to see what it tells us about where we've come from, because those pieces of DNA came from somewhere. They weren't just plucked out of the air. They came from ancestors. And it's a very good way of finding out about your ancestors, not only who they were, but also imagining their lives. You're made up of DNA from thousands and millions of ancestors who lived in the past, most of them now dead, but they survived, they got through, they passed their DNA on to their children, and it's come down to you. It doesn't matter who you are. You could be the President. You could be the Prime Minister. You could be the head of a big corporation. You could be a taxi driver. You could be someone who lives on the street. The same is true of everybody. I can see a time, long after I've gone, when everyone will know their relationship to everybody else. If anybody wanted to do it, or could afford it, I think you could actually draw the family tree of the entire world by linking up the segments of DNA. So you could find out how everyone is related to everybody else.

No doubt, most of the funding for the advances in genetics, for example the complete sequencing of the human genome, has come from the ambition to learn more about health issues. The technology for exploring that, which is advancing in leaps and bounds, has been driven by the healthcare benefits. So those are the two main things people are learning about themselves: their health, and who they're related to and where they've come from. And I know from experience that this does add a lot to people's sense of identity. It's not for everybody, and not everyone's very interested in it, but a lot of people are, and I think that's a very good thing.

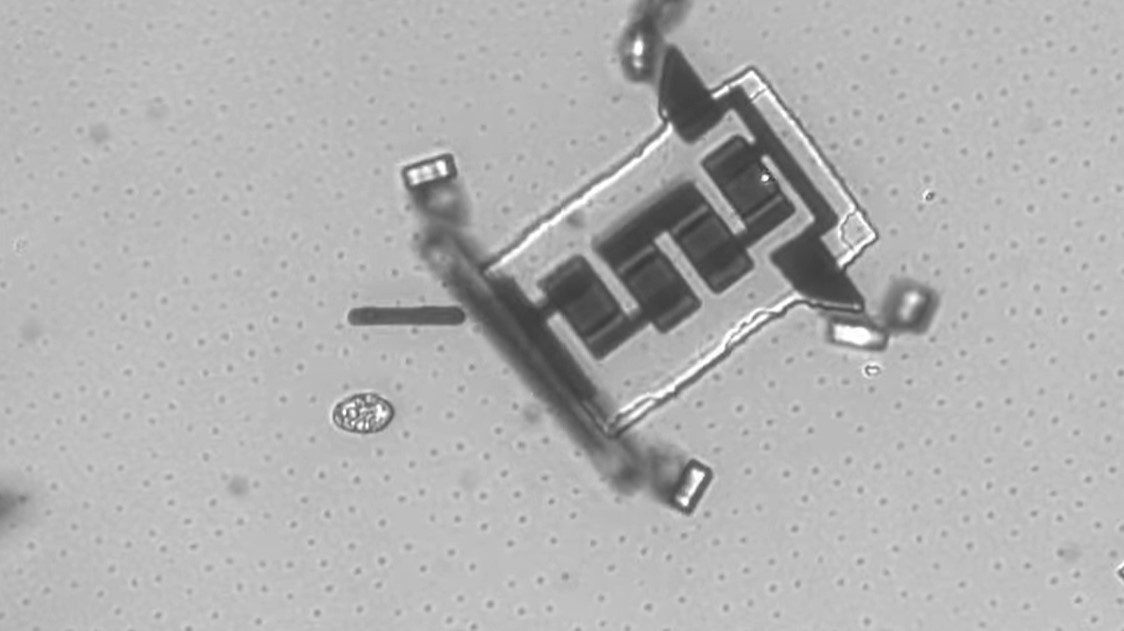

FRANCIS COLLINS: It's too bad that you can't actually see DNA easily under a microscope, scan across the double helix, and read out the sequence of bases that amounts to its information content, because it would then be easier, I think, to explain how a geneticist goes about tracking down the molecular basis of a disease at the DNA level. Our methods are indirect. They're very powerful and highly accurate, but they're not as visual as you might like. We do now have methods, though, that allow you to read out with high accuracy all 3 billion letters of the DNA instruction book. Those letters are actually chemical bases. The chemical language of DNA is a simple one: there are only four letters in the alphabet, the bases we abbreviate A, C, G, and T. And we have methods for comparing the DNA sequence of people who have a disease with that of people who don't, looking for the critical differences in order to nail down something that might be the cause. However, since we all differ in our DNA sequence by about half of 1%, you wouldn't get very far if you simply sequenced my DNA and the DNA of somebody with Parkinson's disease and tried to figure out what the differences were, because there would be way too many of them. But if you're willing to do that for a large number of people, you average out all the noise, and the difference that matters becomes more and more clear. That's an oversimplified description of how a geneticist goes about zeroing in on the actual molecular cause of a complex or a simple disease. This has worked most readily for diseases that are highly heritable, such as cystic fibrosis and Huntington's disease. Those are conditions where a single mutation very reproducibly results in the disease. It's been a lot tougher for diseases where the inheritance is muddy.
If you take diabetes, for instance, which is what my lab primarily works on, or asthma or high blood pressure, those are not conditions where a single gene determines risk. There are dozens of genes involved, and no single one of them contributes very much, but when you put them all together, the consequences for an individual may tip them over the threshold into having the illness.
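
Collins's two points, that comparing one patient with one healthy person drowns in roughly 15 million differences (3 billion bases times half of 1%), and that common diseases arise when many small genetic effects push someone over a threshold, can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not how any real study is analyzed: it assumes a simple liability-threshold model, and the parameters (40 variants, a 0.3 allele frequency, a 0.1 effect per allele, the noise level, and the threshold) as well as all function names are hypothetical, chosen only for illustration.

```python
import random

# Collins's arithmetic: about 3 billion bases, two people differing at about half of 1%.
GENOME_LENGTH = 3_000_000_000
DIFFERENCES_PER_PAIR = GENOME_LENGTH // 200  # 1/200 of the genome = 15,000,000 positions

# Hypothetical toy parameters (assumptions for illustration, not real disease data).
N_VARIANTS = 40           # "dozens of genes", every one of them causal
RISK_ALLELE_FREQ = 0.3    # same population frequency for every variant
EFFECT_PER_ALLELE = 0.1   # each risk copy adds only a little liability
NOISE_SD = 1.0            # environment, chance, and everything else
THRESHOLD = 3.5           # liability above this counts as having the illness


def simulate_person(rng):
    """Return (genotype, is_ill) under a liability-threshold model.

    genotype[i] is the number of risk copies (0, 1 or 2) at variant i.
    """
    genotype = [
        int(rng.random() < RISK_ALLELE_FREQ) + int(rng.random() < RISK_ALLELE_FREQ)
        for _ in range(N_VARIANTS)
    ]
    # Many small additive effects plus noise; illness only past the threshold.
    liability = EFFECT_PER_ALLELE * sum(genotype) + rng.gauss(0.0, NOISE_SD)
    return genotype, liability > THRESHOLD


def risk_allele_frequency(people, variant, ill):
    """Fraction of risk copies at one variant among cases (ill=True) or controls."""
    group = [genotype for genotype, status in people if status == ill]
    if not group:
        return float("nan")  # e.g. a tiny sample that happens to contain no cases
    return sum(g[variant] for g in group) / (2 * len(group))


def case_control_gap(n_people, seed=0, variant=0):
    """Case minus control risk-allele frequency at one causal variant."""
    rng = random.Random(seed)
    people = [simulate_person(rng) for _ in range(n_people)]
    return (risk_allele_frequency(people, variant, True)
            - risk_allele_frequency(people, variant, False))


if __name__ == "__main__":
    print(f"Positions where two genomes differ: about {DIFFERENCES_PER_PAIR:,}")
    for n in (20, 200, 2_000, 20_000):
        print(f"{n:>6} people: gap at variant 0 = {case_control_gap(n, seed=1):+.3f}")
```

Running it prints the case-control gap in risk-allele frequency at one causal variant for growing sample sizes. In small samples the gap can swing widely and even change sign; in large samples it settles on a small positive value, which is the sense in which studying many people "averages out the noise." Real association studies test millions of variants at once and must correct for multiple testing, which this toy ignores.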

We are experiencing right now a remarkable deluge of discovery in terms of the causes of disease, much of it coming out of genomics: the ability to pinpoint, at the molecular level, what pathway has gone awry in causing a particular medical condition. That in itself is exciting because it's new information, but what you really want to do is take that and push it forward into clinical benefit. Some of that can be in prevention, by identifying the people at highest risk and making sure they're following the right preventive strategy. But people are still going to get sick, so you also want to come up with better treatments than what we have now. And some of it is the ability, through personalized medicine, to begin to identify individual risks for future illness, to get us beyond the one-size-fits-all approach to prevention, which has not been that effective. People haven't necessarily warmed to recommendations about diet, exercise, colonoscopies, mammograms, and so on, because it all sounds very generic. But if you could provide people with information about their personal risks and therefore allow them to come up with a personalized plan for maintaining health, that seems to inspire a lot more interest.

Personalized medicine is a term that gets used differently by different people. In my view, it is an effort to take diagnosis, prevention, and treatment and, when possible, factor in individual information about that person in order to optimize the outcome. In some instances, I think, we're not very far along with that. In others, we're making real progress. Take, for instance, the effort to choose the right drug at the right dose for the right person, what we'd call pharmacogenomics. More than 10% of FDA-approved drugs now have some mention in their label of the importance of paying attention to genetic differences in order to optimize the outcome. Take the drug abacavir, which is used to treat HIV/AIDS: a very powerful antiretroviral, but one that caused a pretty serious hypersensitivity reaction in about 6% or 7% of those who took it. We now know exactly how to predict that on the basis of a genetic test, and so there is now what's called a black box warning on the FDA label for this drug, saying you must do that genetic test before you prescribe the drug in order to avoid that outcome. That was unimaginable a few years ago, that you would have that kind of precision in choosing a drug.

GLENN COHEN: A recent set of controversies has to do with federal government funding for research that mixes human and animal genetic material, sometimes called chimeras, though there's actually a broader group. So again, the method is to think about a large number of cases, and it's helpful to think about very different cases. So, to use some real cases, imagine you put human brain cells, human neural stem cells at the embryonic stage, into a mouse to create a mouse with a humanized brain. Now, it wouldn't be a human brain. It's not exactly the same; it's much smaller, for example, but it has humanized elements. Another example is a humanized immune system. Take a mouse (and we do this; we have these at Harvard, for example) and create a humanized immune system in order to test drugs, drugs for HIV, for example. So not the brain, but just the immune system, is very human-like. And a last example is heart valve replacements. Jesse Helms, the Senator, had a pig valve replacement years ago, so there's a piece of an animal in him. So these are some real cases of different kinds of mixing, and the question is which are okay, which are not okay, and why. Can we generate some principles?

So what might be wrong with mixing human and animal parts? One thing that might be wrong is that it will confuse the boundaries between humans and animals. Right now we have a pretty clear distinction. While many people love their dogs and cats like members of the family, they are able to say, this is not a member of my family; this is not someone with the same rights as a family member. In a world where there was much more of a continuum between animals and human beings, those distinctions would become difficult. Now, just because they become difficult doesn't mean that it's wrong. It would just pose a new problem for us, and maybe it would illustrate a problem we should have been thinking about all along. So I'm not particularly sympathetic to that argument. A different argument, though, is to say that human beings are particular kinds of beings with particular kinds of capacities, and there's a dignity to being a human being. If we were to mix enough animal material into a human being, what we would have would not be something new; it would be a human being that could not flourish as a human being. It would be an undignified human being, a kind of entity that is unable to really experience what it is to be human. Now, again, you might push on this and say, well, yes, it's true that they would not be human beings and would not necessarily have all the capacities of human beings. Imagine having some of the capacities of a human being but being stuck in a rat body, for example. Sure, there'd be ways in which you would not flourish as a human being, but why not think of you as flourishing as a new kind of entity? In particular, you might even think there could be an obligation to create some kinds of chimeras. Think, for example, of Big Bird from Sesame Street. It sounds like a silly example, but it's a good one.
Big Bird talks, Big Bird has friends, Big Bird goes to school (he's been in school a long time on Sesame Street, I guess), and he seems to have a pretty good life. Imagine we could take regular birds and turn them into Big Birds by doing something to them. Would we think of that as improving a little bird's life, or would we think of it as hurting a human being's life through this mixture? Hard questions, but it's at least possible that we'd think we were doing animals a favor. Another answer might be that it depends a lot on the specifics of the case. There are changes we could make to human beings by mixing in animal DNA that might make them better, and there are changes that might make them worse, worse from a moral perspective. For example, to use an example from literature, if we could give human beings night vision, so they could see at night like some animals, by mixing in a little animal DNA, you might think that would be great. We could do more search and rescue. We'd be better drivers. There'd be fewer fatalities. On the other hand, if the result was to produce human beings with much stronger aggression or violence, or claws, or something like that, you might think that's worse, because we're going to do more harm. That would suggest that the question of whether we ought to have chimeras, and what kind, can only be answered in a particularistic way, by thinking about particular cases.

I will say, and this is kind of referencing some work by my friend Hank Greely at Stanford, that there are particular kinds of changes which, from a sociological perspective, seem to bother us more. He describes them as brains, balls, and faces. So brains: it turns out we're very disturbed by the idea of human brains, or humanized brains, in animals. We're much more disturbed by the humanized-brain mice than by the humanized-immune-system mice, for example. The next is balls. We tend to be very nervous about the idea, and this is kind of crazy and out there, of an animal whose ability to reproduce, its gonads, its reproductive system, was human, so that you'd have animals mating and producing human beings and animals. That's the kind of thing that I think disturbs a lot of people as an idea. And the last is faces. The idea of animals with human faces, for example, just disturbs a lot of people, even though you might say a face is just a face. But it's a marker of human beings and the way we relate to each other, and I think there's a strong sociological pushback against that.