Richard Feynman

Few could match the famous physicist in his ability to communicate difficult-to-understand concepts in a simple and warm fashion.

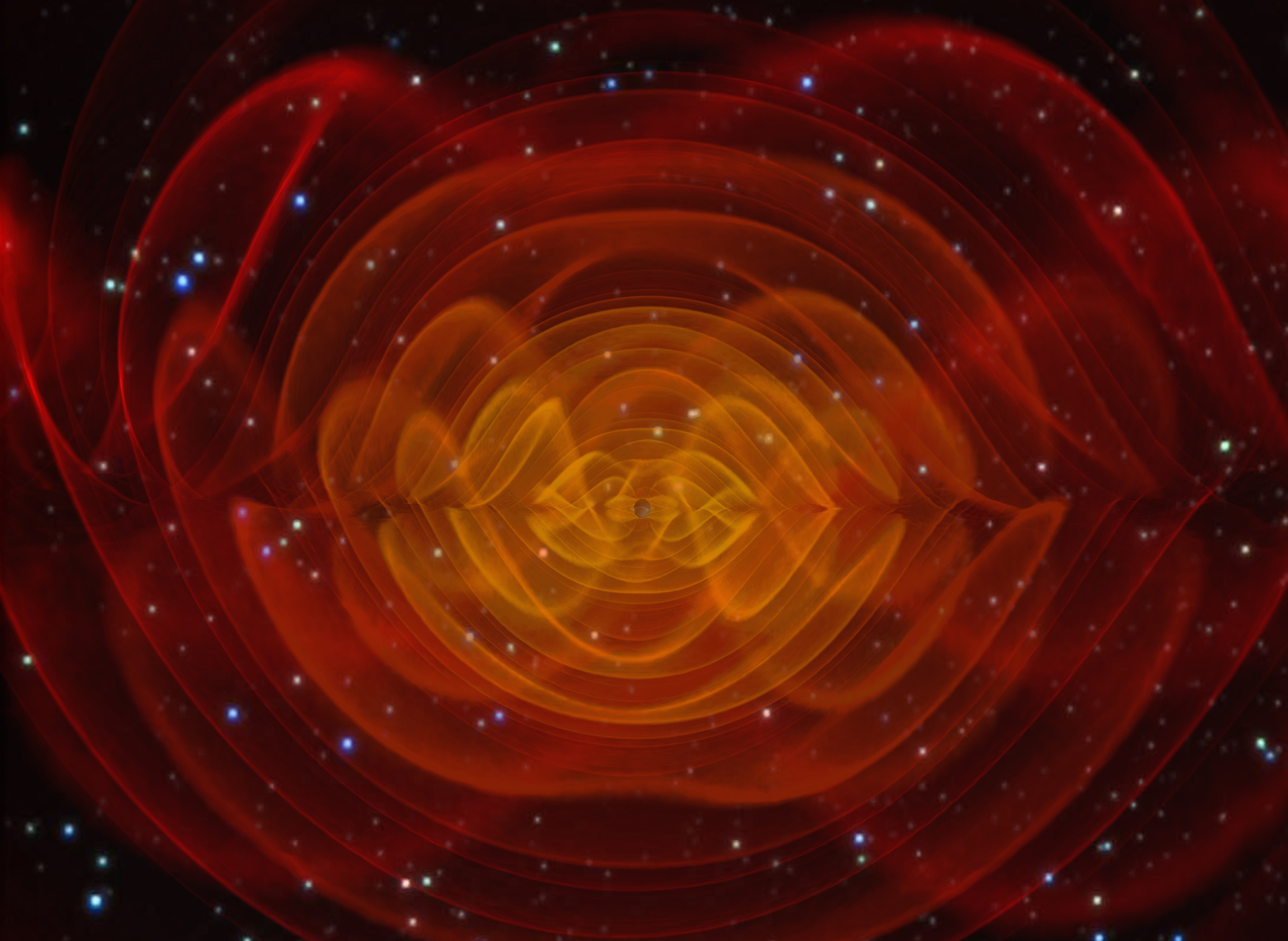

Measuring quantum gravity has proven extremely challenging, stymying some of the greatest minds in physics for generations.

Will we ever have a Theory of Everything? Theoretical physicist Lawrence Krauss isn’t sure that’s the right question to be asking.

Don’t believe every science study you read, because sometimes not even its authors believe it. Here are the issues corrupting good, honest science – and how to fix them.