energy

An introduction to “The Engine of Progress” from Jason Crawford, founder of the Roots of Progress Institute.

Barriers to energy abundance — and how to overcome them — were front and center at Progress Conference 2025.

The case that a bipartisan movement structured around progress and reform may be reaching critical mass.

Nuclear chemist Tim Gregory joins Big Think to make the case that nuclear energy can still transform the world for the better.

There are real concerns with long-term power generation on the Moon; nuclear could be the answer. But for NASA, will the cost be too high?

The cofounders of the think tank RethinkX are convinced that humanity is undergoing a civilizational phase change.

Around the world, biofuels, so-called green energy sources, are raising major red flags.

Electric vehicle sales are rising but public charging in cities is still lacking.

The US needs 28 million EV chargers by 2030. Here’s how it can get there.

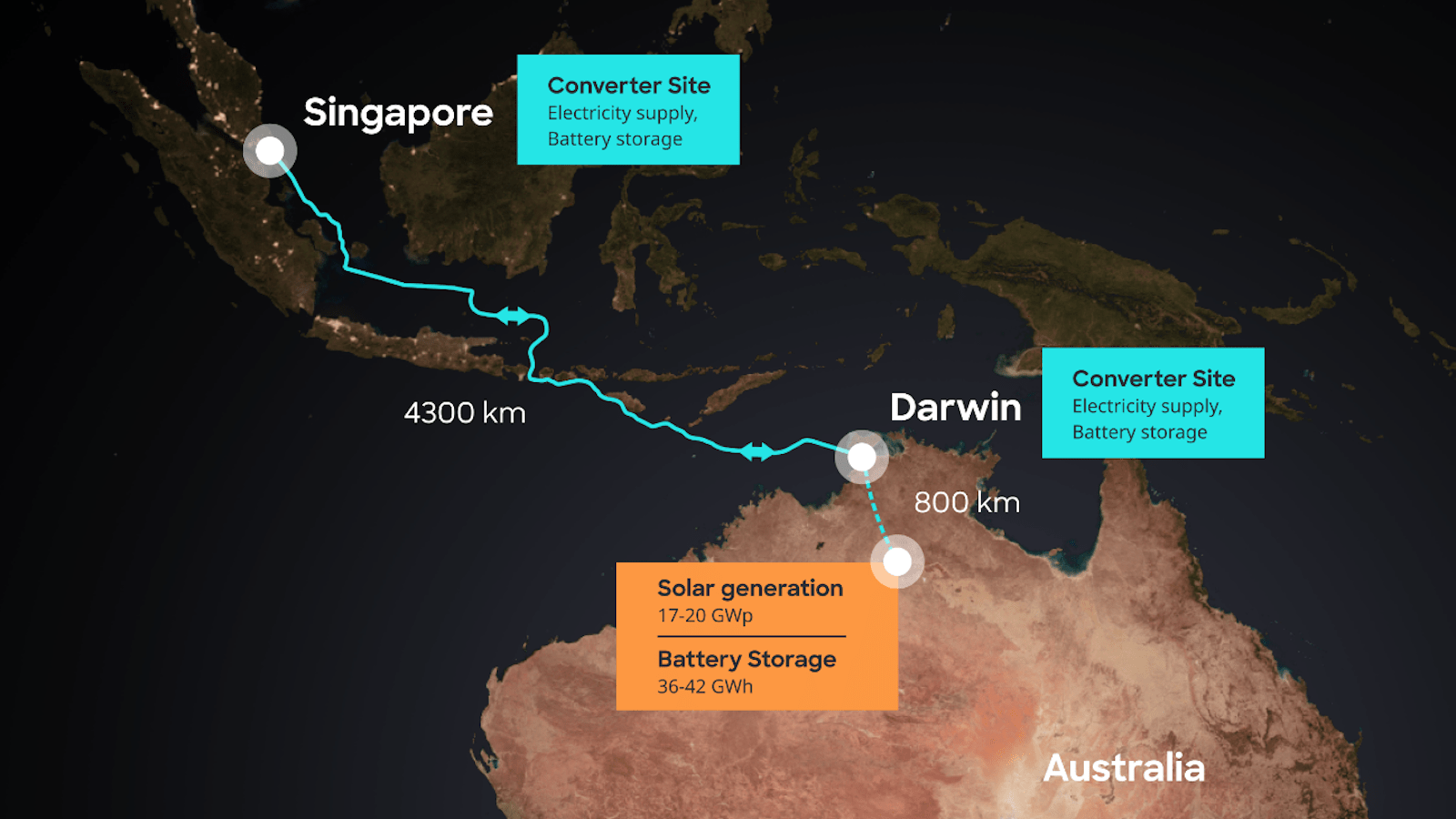

Australia’s AAPowerLink boasts three global superlatives: largest solar farm, largest battery, and longest power cable.

We need more data centers for AI. Developers are getting creative about where to build them.

The evolution of quantum technology is far from over.

For well over a century, engineers have proposed harnessing the ocean’s tides for energy. But in most places, the idea has yet to catch on.

Explore data on electric car sales and stocks worldwide.

A $30,000 electric vehicle with 400 miles of range that charges in under 10 minutes will remain a pipe dream for the near future.

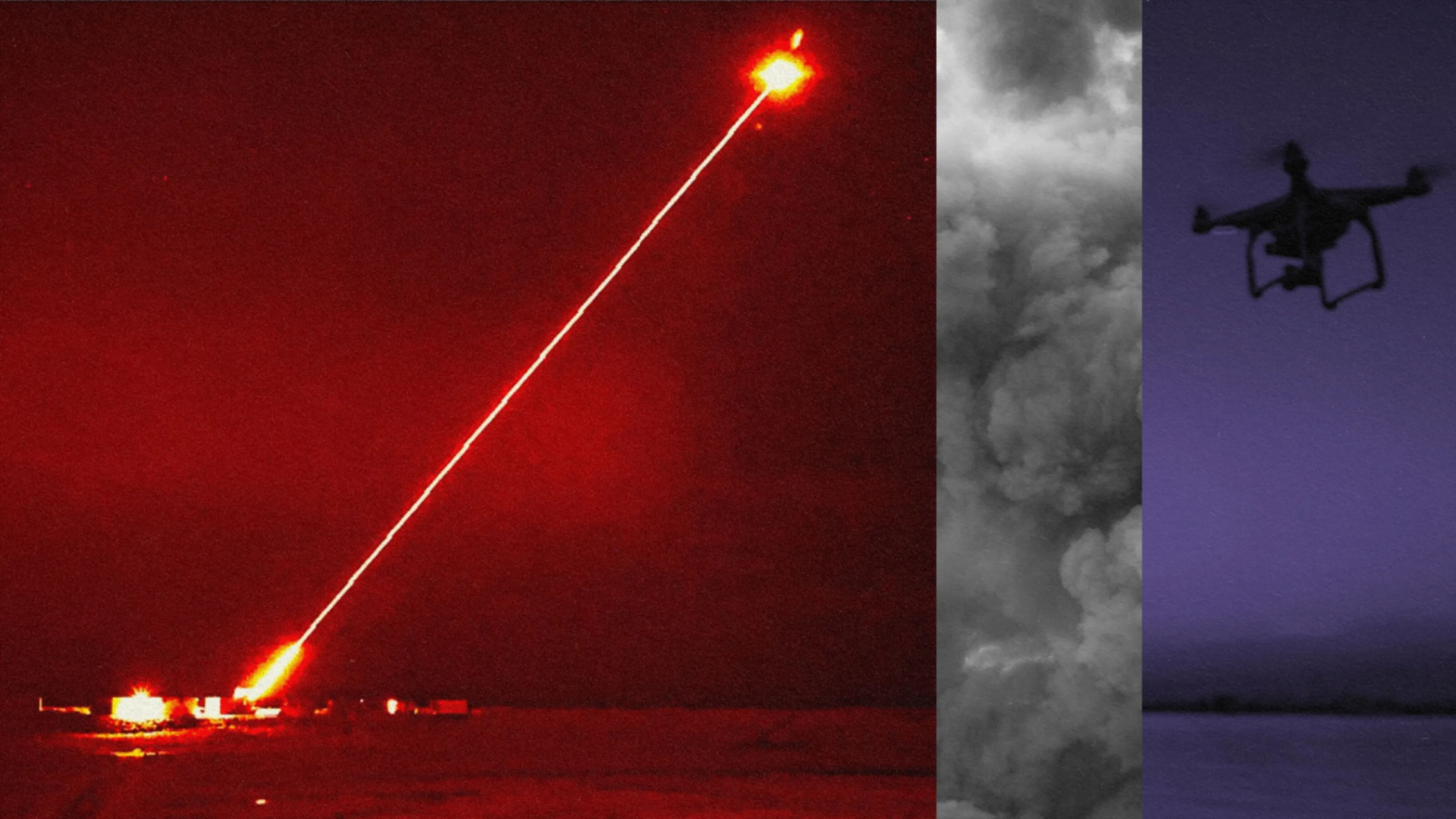

The futuristic weapon could be ready for the battlefield in 5 years.

As wind power grows around the world, so does the threat the turbines pose to wildlife. From simple fixes to high-tech solutions, new approaches can help.

Decades ago, a disaster left three million acres of land uninhabitable and killed between 85,600 and 240,000 people. Chernobyl? No. Banqiao dam in China.

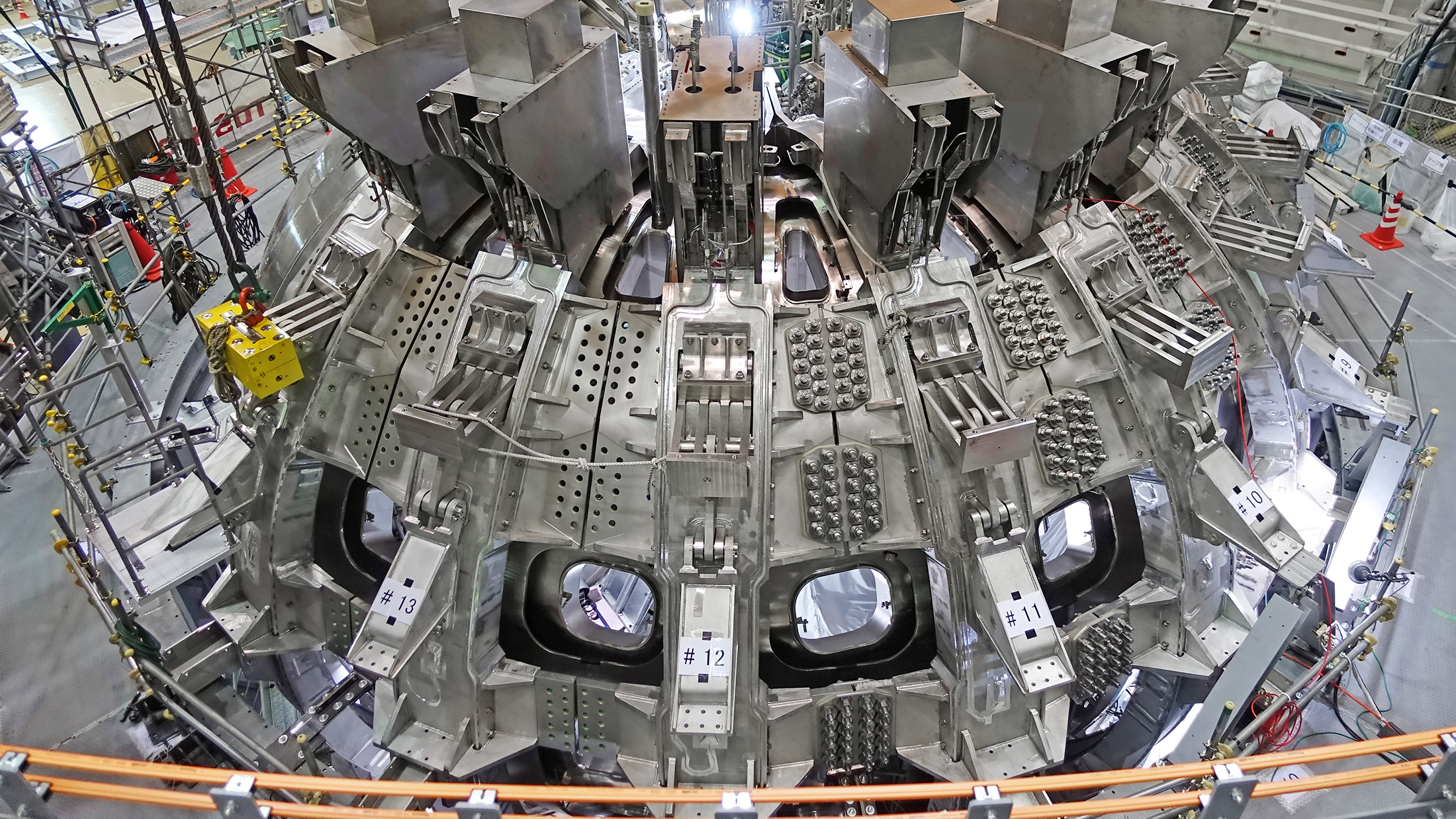

Scientists have been chasing the dream of harnessing the reactions that power the Sun since the dawn of the atomic era. Interest, and investment, in the carbon-free energy source is heating up.

The term “zero-point energy” has at least two meanings, one that is innocuous and one that is a great deal sexier (and scammier).

A massive nuclear fusion experiment just hit a major milestone, potentially putting us a little closer to a future of limitless clean energy.

McDermitt Caldera, the site of an ancient volcanic eruption, straddles the border of Oregon and Nevada.

Experiments on suborbital rockets are revealing how to make a better iron furnace.

In many ways, it was worse than Chernobyl.

The National Ignition Facility just repeated, and improved upon, its earlier demonstration of nuclear fusion. Now, the true race begins.

Ironically, the company did so using technology perfected by the oil industry.

Science fiction met nuclear fission when Hungarian physicist Leó Szilárd pondered the explosive potential of nuclear energy.

The biggest nuclear blast in history came courtesy of Tsar Bomba. We could make something at least 100 times more powerful.

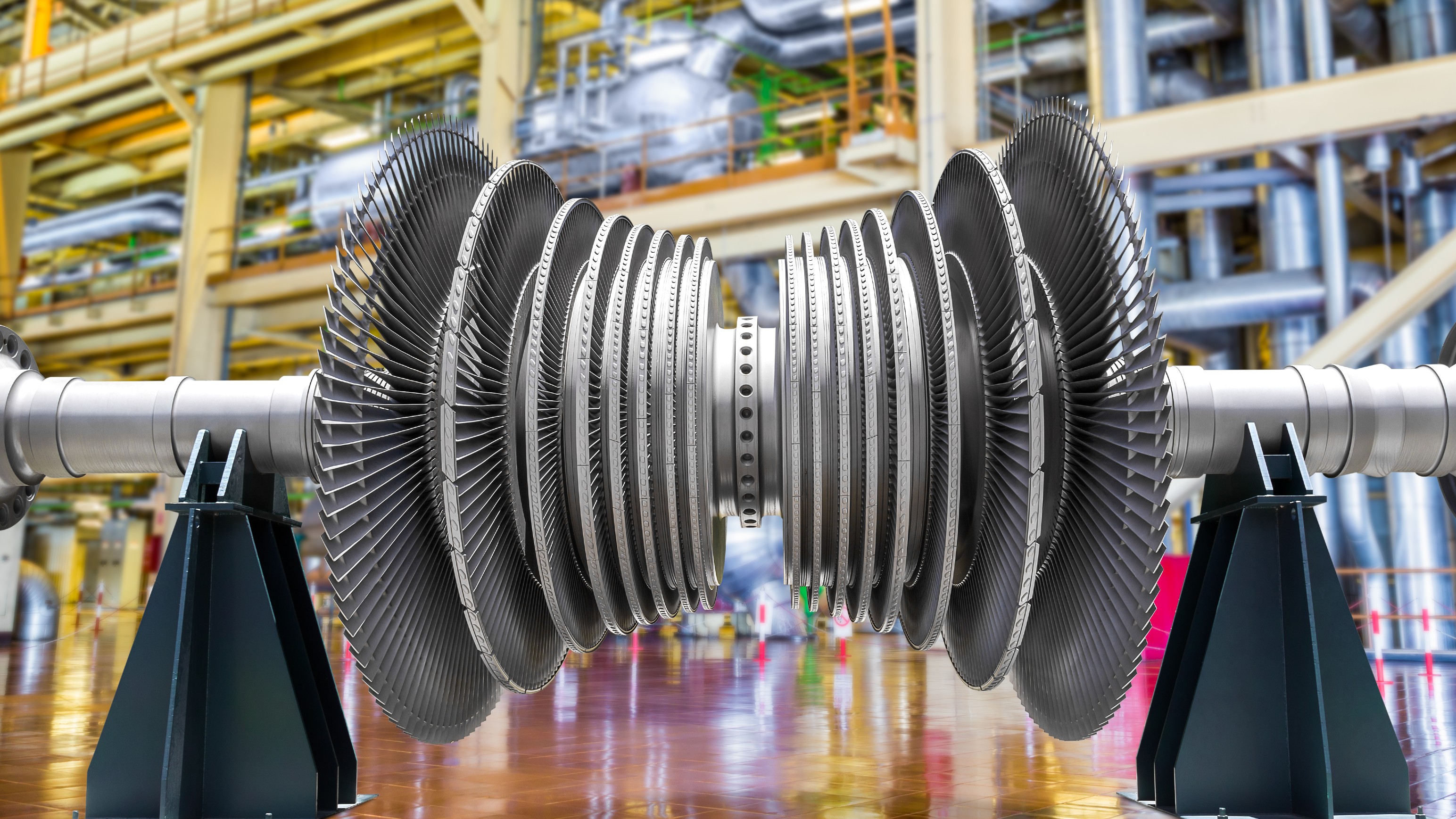

The material is both stronger and lighter than the materials used to make conventional power plant turbines.

There may be more energy in methane hydrates than in all the world’s oil, coal, and gas combined. It could be the perfect “bridge fuel” to a clean energy future.