bias

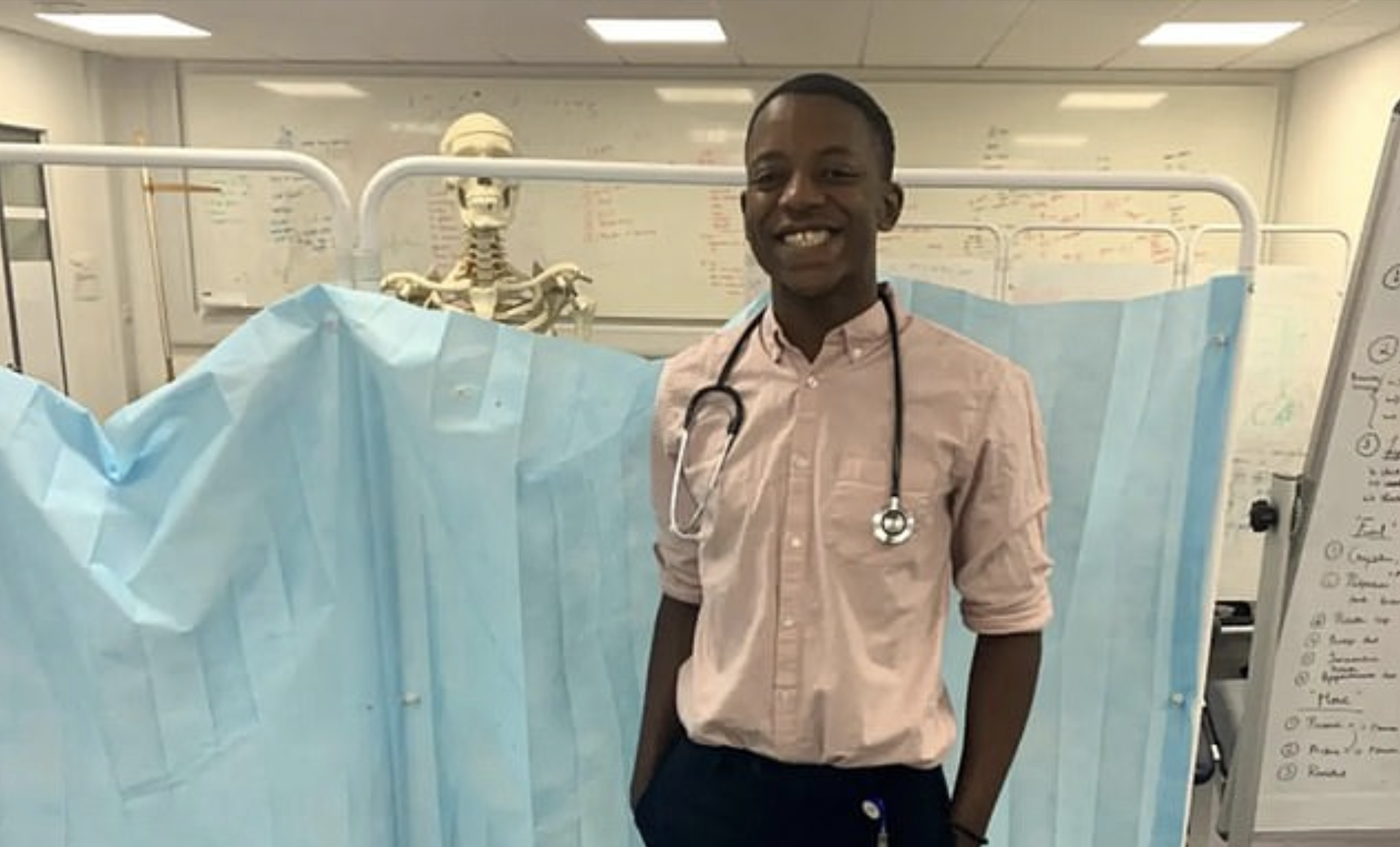

Doctors may be missing fatal illnesses because medical textbooks are biased toward white skin.

Monuments are under attack in America. How far should we go in re-examining our history?

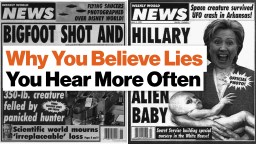

Studies show that repeating lies makes people believe they are true.

Implicit biases begin to develop at a young age and are then reinforced over time.

Do you know the implicit biases you have? Here are some ways to find them out.

How much does cognitive bias change people’s perception? Well, the history of computing would be a lot different.

Some people naturally believe they’re thinner than they really are. Here’s how to tell if you’re susceptible.

Here’s why your brain’s biases are a win for fake news, and a payday for Facebook.

Stereotyping isn’t about “bad people doing bad things.” It’s about our subconscious biases, and how they sneak into organizational structures.

In an analysis of more than 140 million of its U.S. members, LinkedIn identified a key difference between how men and women present themselves in their profiles.

The most revelatory answers in life come from complex, diverse populations. Technology can open our eyes to what we’re missing and destroy our subconscious biases in one fell swoop.

It’s illegal, yet usually a subconscious act. So how can we scrub bias from the hiring process?

How did earlier records get it so wrong, and why do scientists believe they’re right this time?

“We love, as a culture, to attack messengers when the message is something that makes us feel uncomfortable,” says journalist Wesley Lowery.