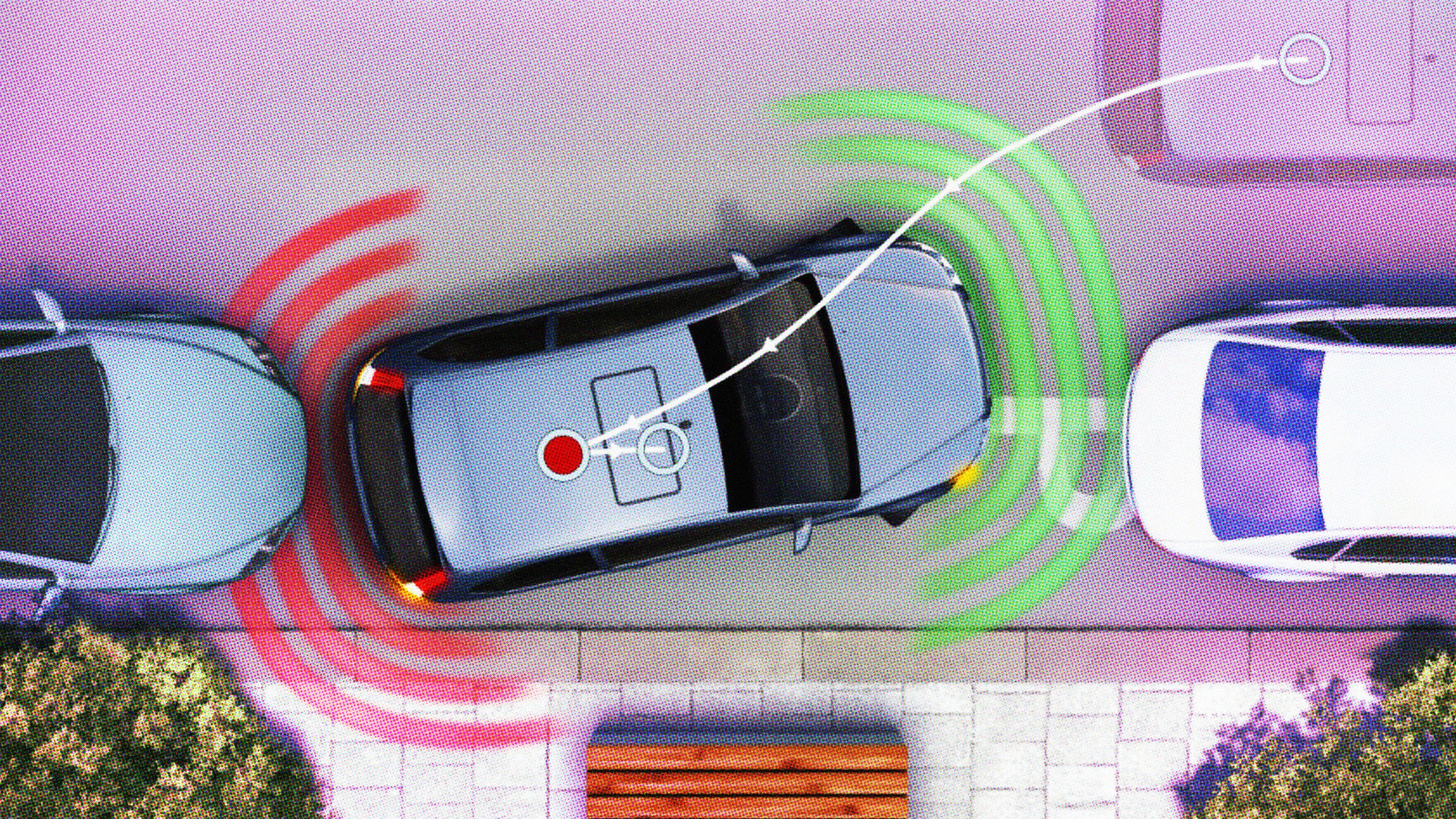

A Google Self-Driving Car Is at Fault for a Crash

Google’s self-driving cars have been in a number of accidents, but after 1.5 million miles of driving, this appears to be the first time one of them has been at fault.

According to a report filed with the California DMV, a Google autonomous car ran into the side of a bus at low speed. The full report reads:

“A Google Lexus-model autonomous vehicle (“Google AV”) was traveling in autonomous mode eastbound on El Camino Real in Mountain View in the far right-hand lane approaching the Castro St. intersection. As the Google AV approached the intersection, it signaled its intent to make a right turn on red onto Castro St. The Google AV then moved to the right-hand side of the lane to pass traffic in the same lane that was stopped at the intersection and proceeding straight. However, the Google AV had to come to a stop and go around sandbags positioned around a storm drain that were blocking its path. When the light turned green, traffic in the lane continued past the Google AV. After a few cars had passed, the Google AV began to proceed back into the center of the lane to pass the sand bags. A public transit bus was approaching from behind. The Google AV test driver saw the bus approaching in the left side mirror but believed the bus would stop or slow to allow the Google AV to continue. Approximately three seconds later, as the Google AV was reentering the center of the lane it made contact with the side of the bus. The Google AV was operating in autonomous mode and traveling at less than 2 mph, and the bus was travelling at about 15 mph at the time of contact.

“The Google AV sustained body damage to the left front fender, the left front wheel and one of its driver’s-side sensors. There were no injuries reported at the scene.”

The incident stemmed from a faulty assumption in the car’s software: like its test driver, the car expected the bus to yield. Google noted the accident in its monthly self-driving car report, where it indicated that the vehicle’s software had been modified. From now on, the car will understand that buses and other large vehicles are less likely to yield.

“Nobody wants accidents — and some will play this accident as more than it is — but neither do we want so much caution that we never learn these lessons,” Brad Templeton wrote in his blog.

The autonomous car isn’t a perfect system, which is why it continues to require a human supervisor. It may also be some time before it can thrive in the environment it has been born into. Full autonomy depends on an ecosystem that supports it, and right now that ecosystem doesn’t exist. Self-driving cars don’t drive the way we expect them to: they don’t cut corners or edge forward slowly into junctions as a human driver would. They give corners a wide berth and stop abruptly, which can indirectly cause accidents.

One could argue that had this bus also been an autonomous vehicle, the accident would not have occurred. Because autonomous cars have to drive alongside humans, a certain degree of guesswork is unavoidable.

Google has already had to modify the software in its cars to drive more like a human. So long as human drivers dominate the roads, self-driving cars will need to conform to their etiquette.

“We’re going to need new kinds of laws that deal with the consequences of well-intentioned autonomous actions that robots take,” says Jerry Kaplan, who teaches Impact of Artificial Intelligence in the Computer Science Department at Stanford University.

***

Photo Credit: GLENN CHAPMAN/AFP/Getty Images

Natalie has been writing professionally for about 6 years. Her work has appeared in Wall St. Cheat Sheet, Geek, GDGT, and PCMag. She focuses her time on researching topics about the environment and technology, and how these issues impact our lives. Follow her on Twitter: @nat_schumaker