Guest Thinkers

All Stories

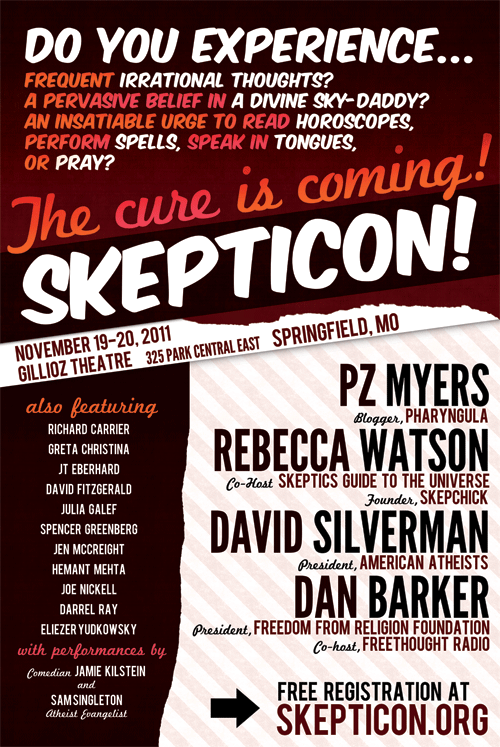

I’m back! As you may know, I’ve spent the last three days in Springfield, Missouri, having a blast at Skepticon IV. The convention was a weekend of great talks that […]

It’s the video that everyone seems to be talking about, or at least a lot of people on YouTube. This video depicts a University of California, Davis police officer pepper-spraying […]

If you were marooned on a desert island and could only bring a handful of books with you–let’s say five–which ones would you pick? Big Think asks Stephen Greenblatt, the bestselling author of Will in the World, a biography of Shakespeare.

Ask me to build a Mount Rushmore of Abstract Expressionism, and I’ll put the faces of Jackson Pollock, Willem de Kooning, Mark Rothko, and Barnett Newman up there. From Hollywood […]

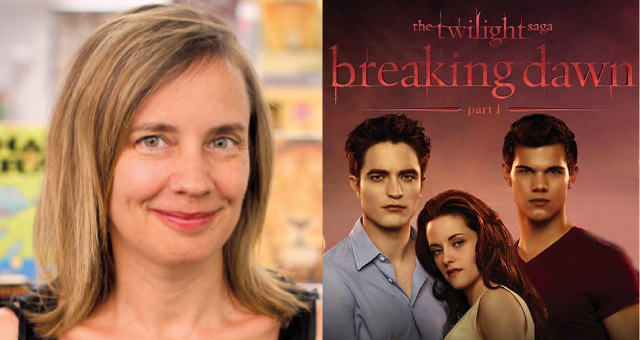

There is certainly value in any book that will make a young person shift his or her gaze from the iPhone screen long enough to read it.

Breaking Dawn Part 1, the latest film version of Stephenie Meyer’s bestselling Twilight saga, sank a stake into the weekend box office, pulling in an estimated $283.5 million worldwide. A […]

Rumor has it that 80% of Newt Gingrich’s Twitter followers were purchased and as a result are fake and/or useless. This may be an exaggeration, but the evidence is clear […]

Research on gender difference must have enough courage to ask important questions but be thoughtful enough not to jump to conclusions. Here are some guidelines for reading research.

A lot has been and will be written about Salman Khan. Though he has already arrived in the spotlight of mainstream media, he is clearly just at the beginning of his […]

So we really do have an aging society. The good news, as I’ve said before, is that we’re living longer, on average, than ever before. The bad (some say) is […]

Obamacare is going to get its day in the Supreme Court. The court granted certiorari in—literally, informed the lower courts that it would hear—three cases challenging the Affordable Care Act […]

Deep in the heart of the cell, your DNA may be undergoing subtle changes that could lead to a devastating disease several years down the line. Scientists want to detect those changes.

You are on a date with a wonderful man/woman. He/she is speaking, but you are gazing lovingly into his/her eyes, thinking how lucky you are to have finally met your perfect […]

Impulsive and addictive behaviors are genetically linked in men, but not in women, says a new study from the University of Nebraska, Lincoln. The gene in question is called NRXN3.

“What is so distasteful about the Homeric gods,” W. H. Auden complains in his essay “The Frivolous & the Earnest,” is that they are well aware of human suffering but […]

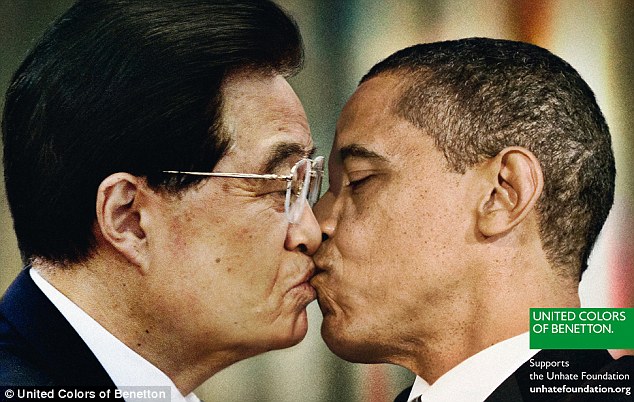

Benetton’s controversial new “Unhate” ad campaign, which features pictures of world leaders like Barack Obama and China’s Hu Jintao caught in a liplock, actually raises a thought-provoking question: Is it […]

This week Big Think decided to give Twitter a big bear hug. Why? We realized the Twitosphere had (undeservedly) become the neglected stepchild of our various social media profiles. To […]

Every Wednesday, Michio Kaku will be answering reader questions about physics and futuristic science. If you have a question for Dr. Kaku, just post it in the comments section below […]

Get your company going in just 54 hours. That’s what many businesses have accomplished by condensing their ideas and pitching to local entrepreneurs and investors.

Great thinkers are not much if their ideas never get noticed. And hey, it’s a jungle out there. Here is some advice about getting your thoughts into the marketplace—and getting noticed.

In my previous post in this series, I quoted a shockingly anti-atheist letter written by Rev. John Buehrens, former president of the Unitarian Universalist Association. This letter repeated all the […]

As I mentioned earlier, I’ll be at Skepticon IV this weekend in Springfield, Missouri, and I’m eagerly looking forward to it. Given the sheer number of fantastic speakers, we’re at […]

Using about 400 transistors, M.I.T. computer scientists have created a silicon chip that mimics one human synapse, removing a barrier to creating a machine that can learn like people.

If astronomers spot a big one headed our way, our risk perception will switch to “It COULD happen to me, and SOON” and we’ll take the threat more seriously.

Reading the daily news (probably on a PC or tablet device) one might have the notion that ebooks were on a killing spree, destroying every part of the old media […]

Advancements in 3D printing technology are revolutionizing consumption and manufacturing. Instead of throwing broken items away, fix them by printing a crowdsourced spare part!

–Guest post by American University graduate student Natalie Shuster. Since 2009, the Food and Drug Administration (FDA) has engaged in active conversation with national pharmaceutical and biotechnology companies regarding the […]

During his lifetime, Diego Rivera stood as one of the most important and controversial artists in the world. Today, thanks to the international feminist phenomenon of Frida Kahlo (who stood […]

Yesterday, Big Think Expert and renowned Shakespeare scholar Stephen Greenblatt won the National Book Award for nonfiction for bringing to life a 15th-century book-hunting expedition that changed the world. A true […]

Tim Harford, Britain’s answer to Malcolm Gladwell, explains how one of the biggest turnarounds in Broadway history, Movin’ Out, teaches us a fundamental lesson about our ability to adapt.