The Technological Singularity and Merging With Machines

The term “singularity,” which is often heard today, comes originally from my field, theoretical physics. It denotes a point in space and time where the gravitational field becomes infinite. At the center of a black hole, for example, we might find a singularity. In mathematics, the term also refers to a point where a function becomes infinite. But the type of singularity that you have probably been hearing about the most lately is called “The Technological Singularity,” and although it’s not a new concept, it’s definitely becoming more of a mainstream topic of conversation.

Books on the subject are being published at a steady pace, and Ray Kurzweil recently launched his documentary, “Transcendent Man,” which shares his vision of a world in which humans merge with machines. It is currently playing to sold-out screenings around the planet and is drawing attention on web forums, blogs and video sites.

Recently the singularity was part of a TIME Magazine cover story entitled “2045: The Year Man Becomes Immortal,” which includes a five-page narrative. There is also a growing number of institutes and dozens of annual singularity conferences, and in 2008 X-Prize’s Peter Diamandis and Ray Kurzweil founded the Singularity University, which is based at the NASA Ames campus in Silicon Valley. The Singularity University offers a variety of programs, including one called “The Exponential Technologies Executive Program,” whose main goal, they state, is to “educate, inform, and prepare executives to recognize the opportunities and disruptive influences of exponentially growing technologies and understand how these fields affect their future, business, and industry.”

My television series Sci Fi Science, on the Science Channel, aired an episode entitled “A.I. Uprising,” which focused on the coming technological singularity and on the fear that mankind will one day create a machine that could threaten our very existence. One cannot rule out a point in time when machine intelligence will surpass human intelligence. These superintelligent machines will become self-aware, have their own agenda, and may even one day be able to create copies of themselves that are more intelligent than they are.

Common questions I’m often asked are:

But the road to the singularity is not going to be a smooth one. As I mentioned in my Big Think interview, “How to Stop Robots from Killing Us,” Moore’s law states that computing power doubles about every 18 months, and it’s a curve that has held sway for about 50 years. But chip manufacturing will eventually hit a wall: transistors will become so small and so densely packed that they generate too much heat, resulting in a chip meltdown, and electrons will leak out of them due to the Heisenberg Uncertainty Principle.
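To get a feel for what that curve implies, here is a rough back-of-the-envelope sketch in Python. It assumes a perfectly steady doubling every 18 months, which is an idealization, and the function name `moores_law_growth` is just an illustrative choice.

```python
def moores_law_growth(years, doubling_period_years=1.5):
    """Return the growth factor after `years` of steady doubling.

    Assumes an idealized Moore's law: one doubling every
    `doubling_period_years` (1.5 years = 18 months by default).
    """
    doublings = years / doubling_period_years
    return 2.0 ** doublings


if __name__ == "__main__":
    # Print the idealized growth factor over a few time spans.
    for years in (10, 30, 50):
        factor = moores_law_growth(years)
        print(f"{years} years: about {factor:.2e} times more computing power")
```

Under these assumptions, 50 years of doubling works out to roughly a ten-billion-fold increase, which is why the question of what happens when the curve flattens matters so much.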

Needless to say, it’s time to find a replacement for silicon, and it’s my belief that the eventual replacement will take things to the next level. Graphene is a potential candidate and is far superior to silicon, but the technology for large-scale manufacturing of graphene (single-atom-thick sheets of carbon) is still up in the air. It’s not clear at all what will replace silicon, but a variety of technologies have been proposed, including molecular transistors, DNA computers, protein computers, quantum dot computers, and quantum computers. However, none of them is ready for prime time. Each has its own formidable technical problems which, at present, keep it on the drawing board.

Because of all these uncertainties, no one knows exactly when this tipping point will happen, although there are many predictions of when computing power will finally match and then tower above human intelligence. For example, Ray Kurzweil, whom I’ve interviewed several times on my radio programs, stated in his Big Think interview that he feels by 2020 we’ll have computers that are powerful enough to simulate the human brain, but we won’t be finished with the reverse engineering of the brain until about the year 2029. He also estimates that by the year 2045, we’ll have expanded the intelligence of our human-machine civilization a billionfold.

But in all fairness, we should also point out that there are many different points of view on this question. The New York Times asked a variety of experts at the recent Asilomar Conference on AI in California when machines might become as powerful as humans. The answers were quite surprising: they ranged from 20 years to 1,000 years. I once interviewed Marvin Minsky for my national science radio show and asked him the same question. He was very careful to say that he does not make predictions like that.

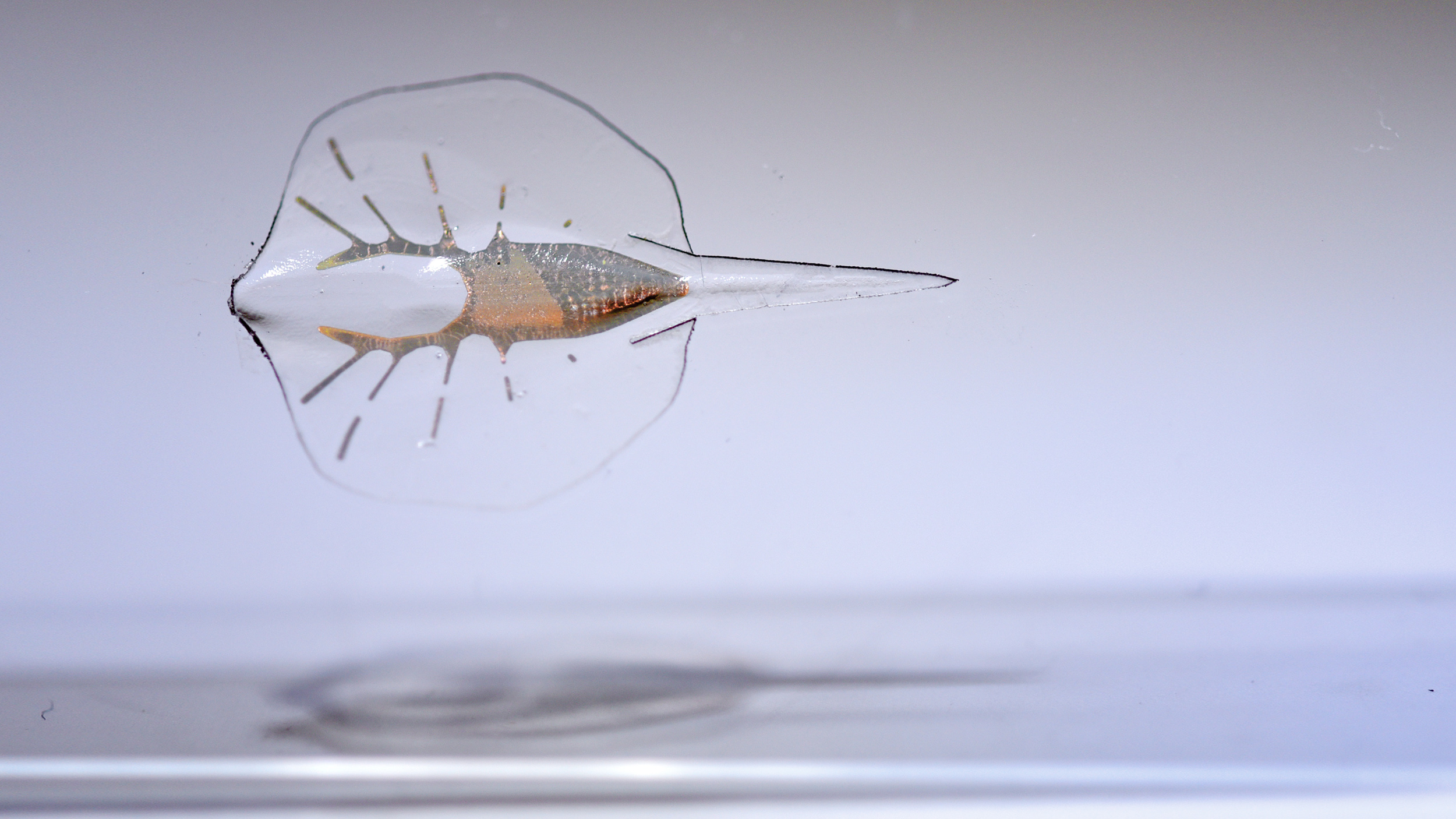

We should also point out that AI specialists have proposed a variety of measures for what to do about it. One simple proposal is to put a chip in the brains of our robots, which automatically shuts them off if they get murderous thoughts. Right now, our most advanced robots have the intellectual capability of a cockroach (a mentally challenged cockroach, at that). But over the years, they will become as intelligent as a mouse, rabbit, fox, dog, cat, and eventually a monkey. When they become that smart, they will be able to set their own goals and agendas, and could be dangerous. We might also put a fail-safe device in them so that any human could shut them off by a simple verbal command. Or, we might create an elite corps of robot fighters, like in Blade Runner, who have superior powers and can track and hunt down errant robots.

But the proposal that is getting the most traction is merging with our creations. Perhaps one day in the future, we might find ourselves waking up with a superior body and intellect, and living forever. For more, visit the Facebook Fanpage for my latest book, Physics of the Future.