The ACLU of Northern California has obtained documents showing that Amazon has been all but giving away facial recognition tools to law enforcement agencies in Washington County, Oregon, and Orlando, Florida, in an effort to essentially beta test the tools, which run in the cloud on Amazon Web Services. The package is called Rekognition and has been deployed in some capacity, including alpha and beta testing, since late 2016.

Today, a coalition of civil rights groups jointly signed a letter calling on Amazon to stop selling this technology. The letter opens by introducing its signatories:

“The undersigned coalition of organizations are dedicated to protecting civil rights and liberties and safeguarding communities.”

In a separate petition, the ACLU states: “Facial recognition is not a neutral technology, no matter how Amazon spins this. It automates mass surveillance, threatens people’s freedom to live their private lives outside the government’s gaze, and is primed to amplify bias and inequality in the criminal justice system.”

In response, an Amazon spokesperson pointed to beneficial uses of facial recognition technology, such as finding lost children at amusement parks and locating people who have been abducted.

The problem, of course, is that the same tools can also be used for nefarious purposes: tracking political protesters, members of activist groups such as Black Lives Matter, and immigrants, or simply monitoring entire neighborhoods without any established reasonable suspicion.

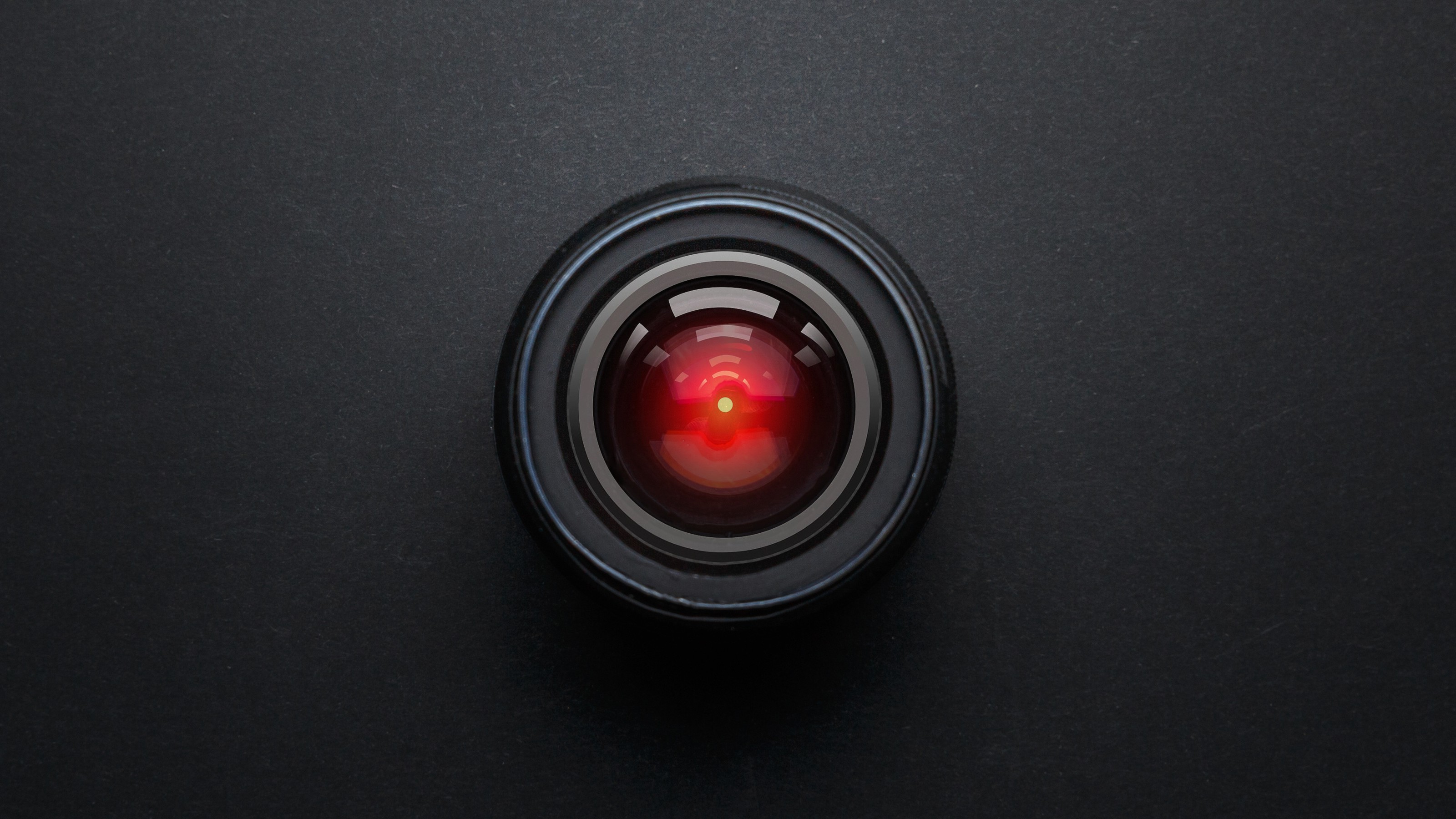

Banks of television monitors display a fraction of London’s CCTV camera network in the Metropolitan Police’s new Special Operations Room on April 20, 2007 in London, England. (Photo by Matt Cardy/Getty Images)

In the era of so-called predictive policing, technology like this is only as good as the impartiality of the people interpreting the data, and of the programming itself.

One agency currently using Rekognition is the sheriff's office in Washington County, Oregon, which uses it for tasks such as comparing jail booking photos against video footage or photos of suspects involved in crimes. With cameras now ubiquitous, it's all but guaranteed that images of anyone out in public will be captured. According to the ACLU, this kind of technology can recognize up to 100 people in a single image.

Civil rights concerns arise when, for example, law enforcement retains the booking photos of a person arrested on suspicion of a crime even when that person turns out to be innocent. More broadly, the technology amounts to always-on surveillance: as more cameras capture more images, the databases grow ever larger.

Edmond O’Brien (1915–1985) as Winston Smith and Jan Sterling (1921–2004) as Julia, during the filming of an adaptation of George Orwell’s novel ‘1984’. (Photo by Harry Todd/Fox Photos/Getty Images)

Matt Cagle of the ACLU of Northern California says he’s disturbed by what he sees as a lack of transparency and public engagement, as police and tech companies work together to bring this new tool to American streets.

“Amazon is handing governments a surveillance system primed for abuse,” Cagle says. “And that’s why we’re blowing the whistle right now.”

In the article announcing the joint letter to Amazon, the ACLU wrote:

“With Rekognition, a government can now build a system to automate the identification and tracking of anyone. If police body cameras, for example, were outfitted with facial recognition, devices intended for officer transparency and accountability would further transform into surveillance machines aimed at the public. With this technology, police would be able to determine who attends protests. ICE could seek to continuously monitor immigrants as they embark on new lives. Cities might routinely track their own residents, whether they have reason to suspect criminal activity or not. As with other surveillance technologies, these systems are certain to be disproportionately aimed at minority communities.”