Who’s More Likely to Be Right: A Century of Economics Or A Billion Years of Evolution?

Advocates of nuclear power have been busy this week, casting choices about reactors as a battle of head versus heart: Emotionally, we’re scared and impressed by the ongoing nuclear crisis in Japan, they say, but the rational choice for the future is to keep licensing those reactors.

As I mentioned the other day, behavioral economists, in describing human biases and blind spots, aren’t rebelling against this style of argument. In fact, they often implicitly endorse it. The point of Dan Ariely’s Predictably Irrational, like that of Nudge by Cass Sunstein and Richard Thaler, is that we make mistakes and fail to do the right thing—the rational thing—because of the innate biases of the mind.

The Japanese crisis has got me wondering about this rhetoric. Here’s why: When we say that people make “mistakes” and “fail to do the right thing” in their choices, we’re saying, in essence, that they’re failing to think like economists. And economists have been honing their disciplined and logical methods for more than a century, so they deserve respect. However, the sources of our “biases” and “errors” are strategies for dealing with the world that evolved over more than a billion years. That too deserves respect.

Take a form of illogic in choice that is easy to demonstrate in people: Imagine that you have a stark choice tonight about dinner. You can eat a fabulous and nutritionally virtuous meal in a loud, rather frightening dive of a restaurant. Or you can have a merely OK dinner in a much less stressful place down the road. To many, it feels like a toss-up.

However, if there is a third option that’s even less appealing (so-so ambiance, really lousy food), people’s decisions fall into a different pattern. With a worse alternative available, the merely OK option looks better, and most people will choose that one. This is not rational, because the objective value to you of the first two choices has not changed. But absolute value isn’t in our normal decision algorithm. Instead, we rate each option based on its relative value to the others.
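For readers who like to see the mechanics, here is a toy sketch of the difference between absolute and relative valuation. Everything in it is an assumption made up for illustration: the 0-to-10 attribute scores, the names `dive`, `calm` and `decoy`, and the rule that an option earns a bonus for each rival it beats on every attribute. It is not a model from the research literature, just one simple way a comparison-based chooser could behave.

```python
from typing import Dict, Tuple

# (food quality, ambiance), both on an invented 0-10 scale
Option = Tuple[float, float]


def absolute_value(option: Option) -> float:
    """Context-free score: the sum of the two attributes."""
    food, ambiance = option
    return food + ambiance


def dominates(a: Option, b: Option) -> bool:
    """True if a is at least as good as b on every attribute and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def relative_scores(menu: Dict[str, Option], bonus: float = 1.0) -> Dict[str, float]:
    """Context-dependent score: absolute value plus a bonus per rival the option dominates."""
    scores = {}
    for name, option in menu.items():
        wins = sum(
            dominates(option, other)
            for rival, other in menu.items()
            if rival != name
        )
        scores[name] = absolute_value(option) + bonus * wins
    return scores


# Hypothetical numbers chosen so the first two options tie in absolute terms.
dive = (9.0, 2.0)   # fabulous food, frightening room
calm = (5.0, 6.0)   # merely OK food, pleasant room
decoy = (2.0, 4.0)  # lousy food, so-so room

two_way = relative_scores({"dive": dive, "calm": calm})
three_way = relative_scores({"dive": dive, "calm": calm, "decoy": decoy})
print(two_way)
print(three_way)
```

With only two options, neither beats the other across the board, so the scores tie. Add the decoy and the calm restaurant pulls ahead, because it is the only one that is clearly better than the decoy on both counts. The absolute values of the first two options never move.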

Humans share this decision-making bias with insects, birds and monkeys, hinting that it arose in a common ancestor and served well enough to survive eons of natural selection. Last summer, in fact, Tanya Latty and Madeleine Beekman showed that even slime molds have this tendency to see value in comparative, not absolute, terms. (In their experiment, the richest food was bathed in bright light, which is dangerous to the species, Physarum polycephalum, while a less concentrated dollop of oatmeal, in a dark, mold-friendly place, was Option 2. With only two choices, the slime molds didn’t show a strong preference for either. But when a third, nutritionally poor choice was added, they greatly preferred Option 2.)

The ever-handy Timetree website tells me that the ancestors of Physarum and humans diverged 1.2 billion years ago. So if you argue that the “relativity heuristic” causes people to make errors, you’re arguing that the last century or so of economic theory is a better guide than the last billion or so years of evolution. And I think that argument is worth hearing. But I don’t see why I should assume it’s true. Isn’t it possible that sometimes our evolved heuristics are smarter than economists?

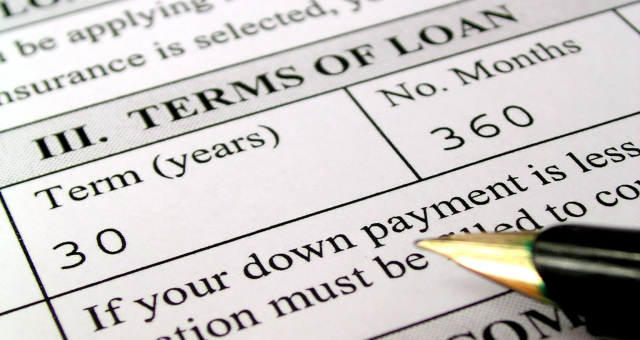

The other day I mentioned a frequently cited example of successful nudging, based on a post-rationalist understanding of the mind: Workers are more likely to participate in a 401(k) program if they’re automatically enrolled and have to opt out than if they have to opt in. So switching from opt-in to opt-out 401(k) plans seems like a worthy and sensible policy, and Congress changed the law to encourage this in 2006. Let’s help those irrational workers overcome their natural tendency to err, right?

Since 2006, though, stocks have plummeted and many companies have stopped matching employee contributions to these retirement plans. As David K. Randall explains here, many workers who went with their irrational biases in recent years might end up better off.

So I wonder, now, if people with an irrational fear of nukes—a fear that can’t be assuaged by the confident predictions of experts—might not be making a better choice than people who deliberately, maturely and rationally reason their way to accepting the absolute value of nuclear power. The rational argument for nuclear power is that it is hands-down the least destructive way we can generate energy in the amounts we demand. The irrational fears about it are based on the fact that something just went badly wrong with it; and that accidents, while rare, do a lot of damage; and that people, we know, tend to lie, cover up and slip up in their imperfect real lives. I think it’s worth considering whether those fears might not be a better guide.

Post-rationalist researchers are sometimes accused of devaluing reason, but the ones I have read do the opposite: They (ahem) irrationally overestimate reason’s powers. They think it can correct the mind’s tendency to “mistakes.” But reasoning doesn’t always lead us right.

The problem isn’t that logic is flawed. It’s that we easily attribute the soundness of logic to the assumptions on which that logic rests. And that’s a mistake.

We can reason our way out of that error with difficulty. Or we can listen to the “biases” evolution has bequeathed us. Biases that tell us to be very impressed by recent, rare, frightening events, whatever the credentialed experts say. Both paths may lead to the same end. But the latter path is faster and more convincing.

Maybe the goal of a post-rational model of the mind should not be to “nudge” ourselves into being more rational, but rather to find a better balance between the reasoning and unreasoning parts of the mind. If reason is good for correcting the errors of our innate heuristics, it may also be true that those innate biases are good for correcting the errors of reason.

Latty, T., & Beekman, M. (2010). Irrational decision-making in an amoeboid organism: transitivity and context-dependent preferences. Proceedings of the Royal Society B: Biological Sciences, 278(1703), 307-312. DOI: 10.1098/rspb.2010.1045