The mediocrity of the mediocrity principle (for life in the universe)

- The mediocrity principle states that if certain objects in a collection are more numerous than others, then the odds of drawing one of them are higher.

- The principle has been extended to apply to the existence of life in the universe: If life exists here and Earth is not a special place, then life is not special.

- However, applying the principle to life throughout the universe has no foundation in data and is more of a wish than a principle.

Two weeks ago, I wrote about the Copernican Principle, which states that Earth is an ordinary planet moving about the sun. After Copernicus published his book in 1543, it made a lot of sense to elevate this notion to a principle. (In truth, the real action began much later, with Galileo and Kepler in the first two decades of the 1600s.) The point was to displace Earth from its prime position at the center of the cosmos. That view combined bad astronomy with Judeo-Christian theology: Earth is important, and so are we, created in God’s image and given dominion over Earth and its creatures and lands. The Copernican displacement was central to the Scientific Revolution and to the Enlightenment (though the latter also spearheaded the notion of the moral and intellectual superiority of the Western white man).

If we constrain the Copernican Principle to a statement about Earth not being a special planet in terms of its location in the universe, all is well. Trouble starts when we extrapolate to statements about the ubiquity of life in the universe, following the faulty notion that if Earth is not special, then neither is life. This is a massive non sequitur. It becomes exponentially nonsensical when elevated to the so-called principle of mediocrity: Given that there is life on Earth and Earth is not a special place, life, including intelligent life, should be abundant on Earth-like planets across the universe. In other words, the principle holds that life is so common out there that it’s a mediocre property of the universe. This sort of thinking is not only bad science but also bad philosophy, and it has serious repercussions for our current project of civilization. If our planet and the abundant life on it are trivial to the point of mediocrity, why respect either?

But first, the good stuff about the principle. In general, suppose a collection holds objects of different kinds, some more numerous than others. Say you have a big box of balls of different colors, most of them red. You are then more likely to draw a red ball than a ball of any other color. In this example, red balls are “mediocre” because they are the most common. Sounds pretty obvious.
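The red-ball example is just counting. Here is a minimal Python sketch of it. The box contents (70 red, 20 blue, 10 green) are hypothetical numbers chosen only for illustration. The sketch computes the probability of each color in a single uniform random draw.

```python
from fractions import Fraction

# Hypothetical box contents: illustrative counts, not from the article.
box = {"red": 70, "blue": 20, "green": 10}

def draw_probabilities(counts):
    """Probability of drawing each color in one uniform random draw."""
    total = sum(counts.values())
    return {color: Fraction(n, total) for color, n in counts.items()}

probs = draw_probabilities(box)
# The most common color is the "mediocre" one: the likeliest draw.
most_common = max(probs, key=probs.get)
print(most_common, probs[most_common])  # red 7/10
```

The calculation works only because the counts in the box are known beforehand.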

In astronomy, the principle can be useful. For example, Isaac Newton and Christiaan Huygens applied it in the 17th century to estimate distances to stars, in particular Sirius. If one assumes that all stars are essentially identical (hence “mediocre” in this sense of all being equal), then their distances can be estimated from differences in their apparent brightness. The farther the star, the fainter it appears to us, with its brightness falling off as the inverse square of the distance. The assumption is clearly faulty (stars are most definitely not the same), but it was a good rough approximation to get the ball rolling.
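The inverse-square reasoning can be made concrete. The observed brightness (flux) of a star is F = L/(4πd²), where L is its intrinsic luminosity and d its distance. Comparing two stars then gives d_star/d_ref = √((L_star/L_ref)·(F_ref/F_star)). This minimal Python sketch uses the Sun at 1 AU as the reference. The brightness ratio and the 25× luminosity below are hypothetical round numbers, not Newton’s or Huygens’s actual measurements.

```python
import math

def distance_ratio(flux_reference, flux_star, luminosity_ratio=1.0):
    """Return d_star / d_reference from the inverse-square law F = L / (4*pi*d**2).

    flux_reference, flux_star: apparent brightnesses in the same (arbitrary) units.
    luminosity_ratio: L_star / L_reference. The "all stars are identical"
    assumption corresponds to the default of 1.0.
    """
    return math.sqrt(luminosity_ratio * flux_reference / flux_star)

# Hypothetical numbers: the Sun (at 1 AU) appears 1e10 times brighter than the star.
same_as_sun = distance_ratio(1e10, 1.0)                           # 100000.0 AU
brighter_star = distance_ratio(1e10, 1.0, luminosity_ratio=25.0)  # 500000.0 AU
print(same_as_sun, brighter_star)
```

Suppose the star is actually intrinsically brighter than the Sun, as in the second call, which assumes 25 times brighter. The equal-luminosity estimate then places it too close by a factor of √25 = 5.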

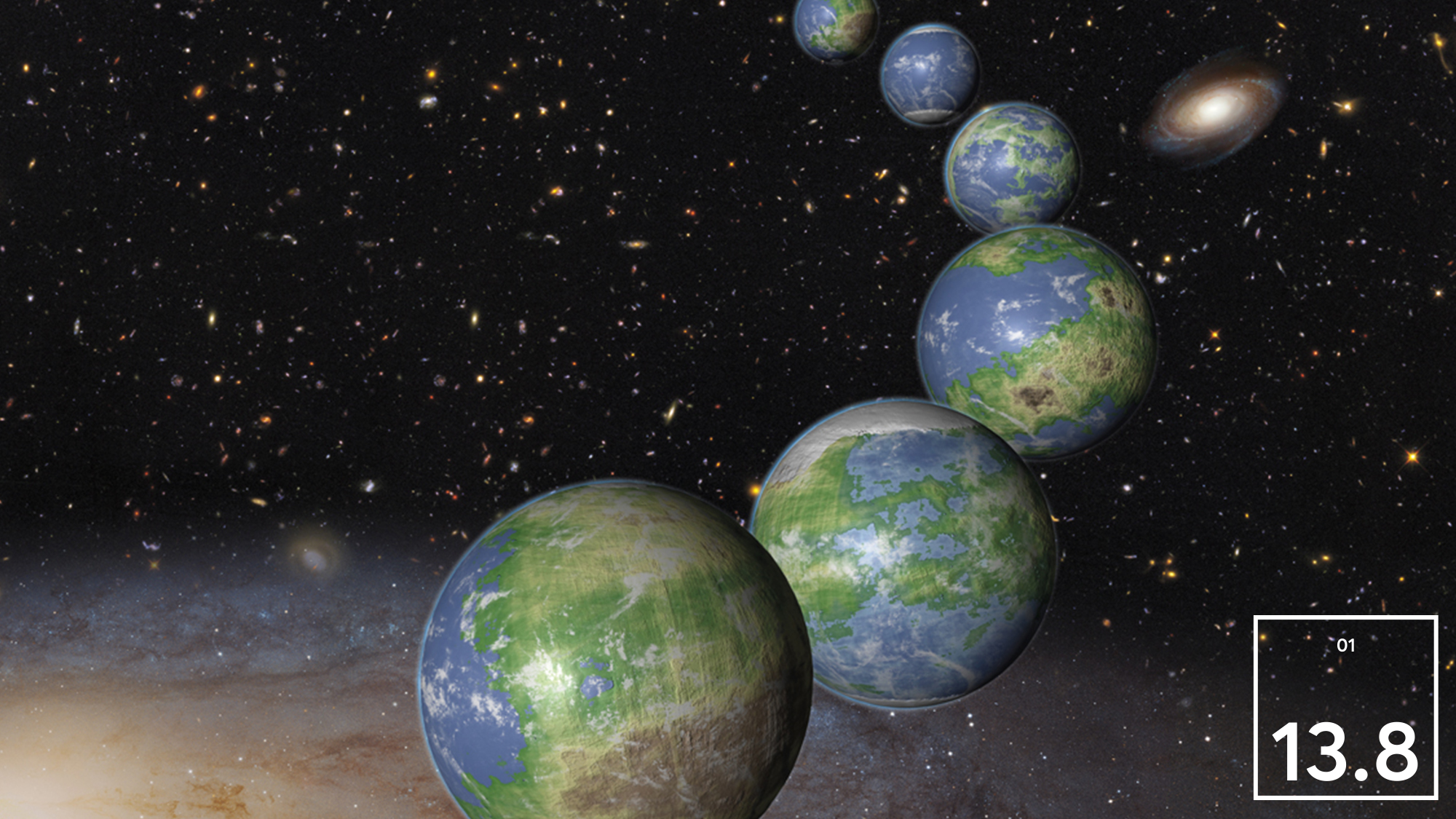

But stellar luminosity is very different from life. The mediocrity principle implies that Earth-like environments are common and, by extension, so is life. However, the steps from nonlife to life, still completely unknown, cannot be treated as a straightforward consequence of Earth-like environments. A planet may have the right properties for harboring life (the right chemical composition, distance from its host star, atmosphere, magnetic field, etc.), and there would still be no guarantee that life exists there. The fundamental mistake in applying the mediocrity principle to estimate the ubiquity of life in the universe lies in its starting point: the assumption that Earth and its properties, including the existence of life here, are typical.

Quite the opposite: A quick look at our solar system neighbors should dispel this notion. Mars is a frozen desert; if it had life in its early years, it didn’t offer enough stability to support it for very long. The same applies to Venus, now a hellish furnace. Farther away, there are many “Earth-like” exoplanets, but only in the sense that they have a mass similar to Earth’s and orbit their star within the habitable zone. That is the range of distances where water, if present on the surface, would be liquid. These preconditions for life are a far cry from life itself. It’s not enough that life is merely possible on another world. It must also persist long enough to alter the planet’s atmospheric composition in a way that is detectable from dozens, hundreds or thousands of light-years away. So a planet must be able not only to generate life but also to keep it viable for hundreds of millions or billions of years.

The same goes for expectations of intelligent life elsewhere. Going from unicellular creatures to intelligent ones takes an unfathomably long time. Natural selection is not a fast process, and it depends on a series of exogenous factors that vary from planet to planet. It requires a planet that offers climatic and geochemical stability and a parent star that is not a strong source of life-killing ultraviolet radiation. There is nothing mediocre about this set of properties. Applying the mediocrity principle to the study of life in the universe is a mediocre move based on faulty reasoning.