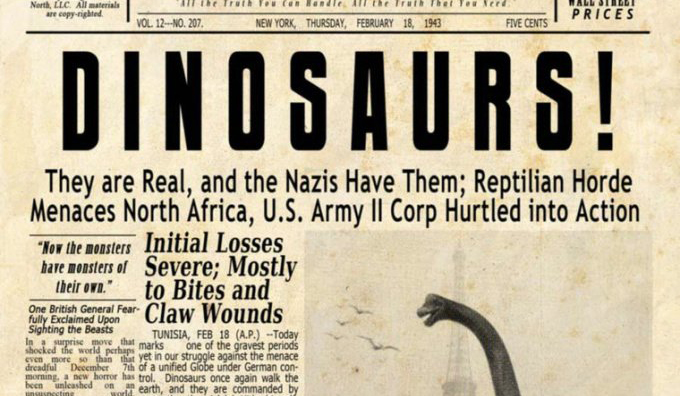

A recent BuzzFeed investigation found that fake news stories were more popular on Facebook in the three-month run-up to the 2016 presidential election than real news stories from credible sources. The analysis showed the public’s voracious appetite for sensational falsehoods. Stories like the Pope endorsing Donald Trump, or Hillary Clinton selling arms to ISIS, drew enormous attention from Facebook users even though the events they described never happened.

Such stories are frequently featured on bogus websites, masquerading as legitimate news sources, that seek simply to convert user clicks into advertising revenue. Enter the question of legal versus moral responsibility. Under US law, online platforms are not legally responsible for content their users post, but companies as large and as powerful as Facebook do have a responsibility to the public interest, says Washington Post reporter Wesley Lowery.

Over the last few years, Lowery has crisscrossed America, covering stories of police violence that often find a sympathetic audience on social platforms like Facebook. And as an increasing number of individuals get their news from social networks, Lowery compares Facebook to traditional newspapers, which make editorial decisions about what to publish. If a letter to the paper is full of misinformation, or is simply a rant, the editors will decline to publish it. Facebook did have a team of editors who decided which stories should be featured in the trending news category, but those editors were laid off after a controversy over what bias they may have had in selecting stories.

Of course, one bias they did not have was toward promoting stories that were flagrantly false. Now, Facebook and Google say they will exclude fraudulent media outlets from their advertising networks, attempting to remove those outlets' incentive to create fake news in the first place.

Lowery’s book is “They Can’t Kill Us All: Ferguson, Baltimore, and a New Era in America’s Racial Justice Movement.”

Wesley Lowery: I think Facebook is responsible for what exists and what happens on its platform, and I think that Facebook has been negligent in its responsibility to safeguard and provide a forum in which sane and reasonable interaction happens and hysterical and untrue interaction does not happen. What we know is that Facebook has the ability to deal with fake news. These are fake sites that pop up. They’re being spread by specific pages and specific accounts, and rather than address that, Facebook has allowed millions of people to become deeply ill-informed. Now there’s a question: how democratic should a platform like Facebook be? If everyone wants to share a fake news article, should that be allowed? Or does Facebook have some editorial control of what it allows to be propagated via its own mechanisms and its own channels? I mean, I think that Facebook needs to take greater steps in these spaces because, again, Facebook itself has become a media publisher, and it is now the platform and the canvas for chaos to be created by all of this fake information spreading so quickly, without any check and balance and without any responsibility being taken by the platform for sorting through what is true and what is not.

I mean, there has to be some editorial infrastructure. And they’ve had in the past some editorial infrastructure. They at one time had a staff that was figuring out what should be in the trending news and what should not. And immediately after they got rid of that staff, all of a sudden there was a bunch of fake news in the trending, right? And that would be one obvious step. But I also think that there has to be – Facebook has remarkable power through its algorithms and through its media partnerships to make decisions about what becomes prominent on its site and what does not. There’s certainly an organic, democratic role to this, but it’s not as if they are just completely sitting back and playing no role over what we see and what we do not. And as soon as they begin playing that role at all, they now take on, I believe, a responsibility to curate this content. Like I said, I just think it’s extremely damaging. You think about newspapers. Newspapers don’t run every letter they get. There’s a decision. There’s a curation, right? We decide this one is just a rambling that has nothing to do with anything. This is just full of falsehoods, right? When you choose to publish something on your platform, on your canvas, you are making an editorial decision to allow it to exist in a space. Facebook takes down threatening and abusive things all the time. It takes down personal attacks or nudity or pornography, right? When you have specific publishers that are constantly spreading misinformation, it has some ability to undercut those publishers from further spreading that.