vision

The case of a 7-year-old Australian boy who was expected to lose his sight at two weeks old but can still see has stunned scientists.

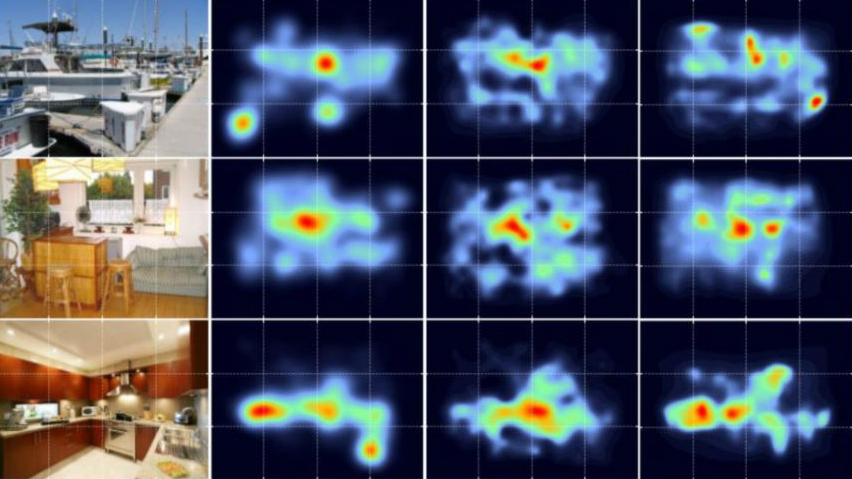

A new study overturns the conventional thinking about how we focus our visual attention.

That’s a big yes, as an incredible new study from University of Melbourne researchers found.

These glycerin “smart glasses” may be the only specs you’ll need – although they do need a design intervention at some point.