self-driving cars

Here’s why coding skills alone won’t save you from job automation.

If we could jump 50 years into the future, what would our world look like? Flying cars? Hologram phones? Bill Nye sees two technological paths ahead, and we're standing at the fork between them right now.

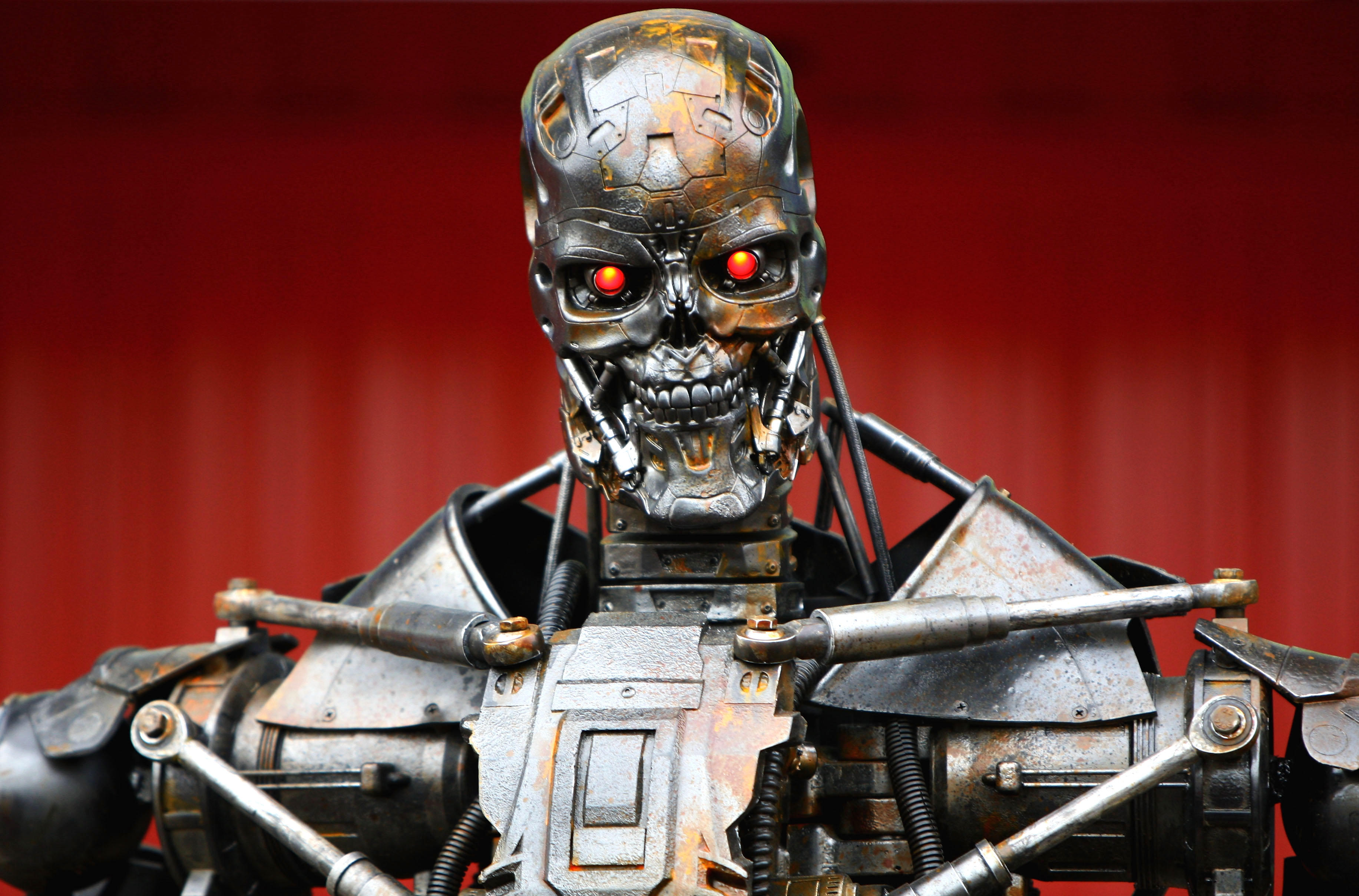

Tesla’s Elon Musk gives a grave warning to those trying to hold back self-driving car technology. According to him, we have it all backwards.

A new study highlights the ethical dilemmas raised by the rise of robotic and autonomous technology, such as self-driving cars.