Sam Harris

Is life worse or better than non-existence? And either way, who is judging? Welcome to anti-natalism, a small but lively corner of philosophy.

The US is arguably the most scientifically and technologically advanced society in the world, and yet at the same time the most religious of Western societies.

There are many people who preach the supposed benefits of psychedelics, but none do it as well, nor as reliably, as these philosophers and scientists.

Where are the four “horsewomen” of new atheism? Well, here are two of them, secular scholars Rebecca Goldstein and Susan Jacoby.

New research claims religious terrorism is on the rise, and it appears likely to get worse before such horrendous acts decline.

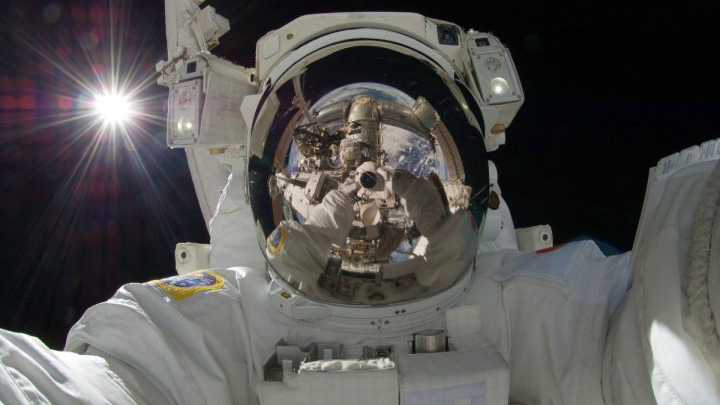

Sam Harris talks with David Deutsch about how modern people are already living like astronauts.

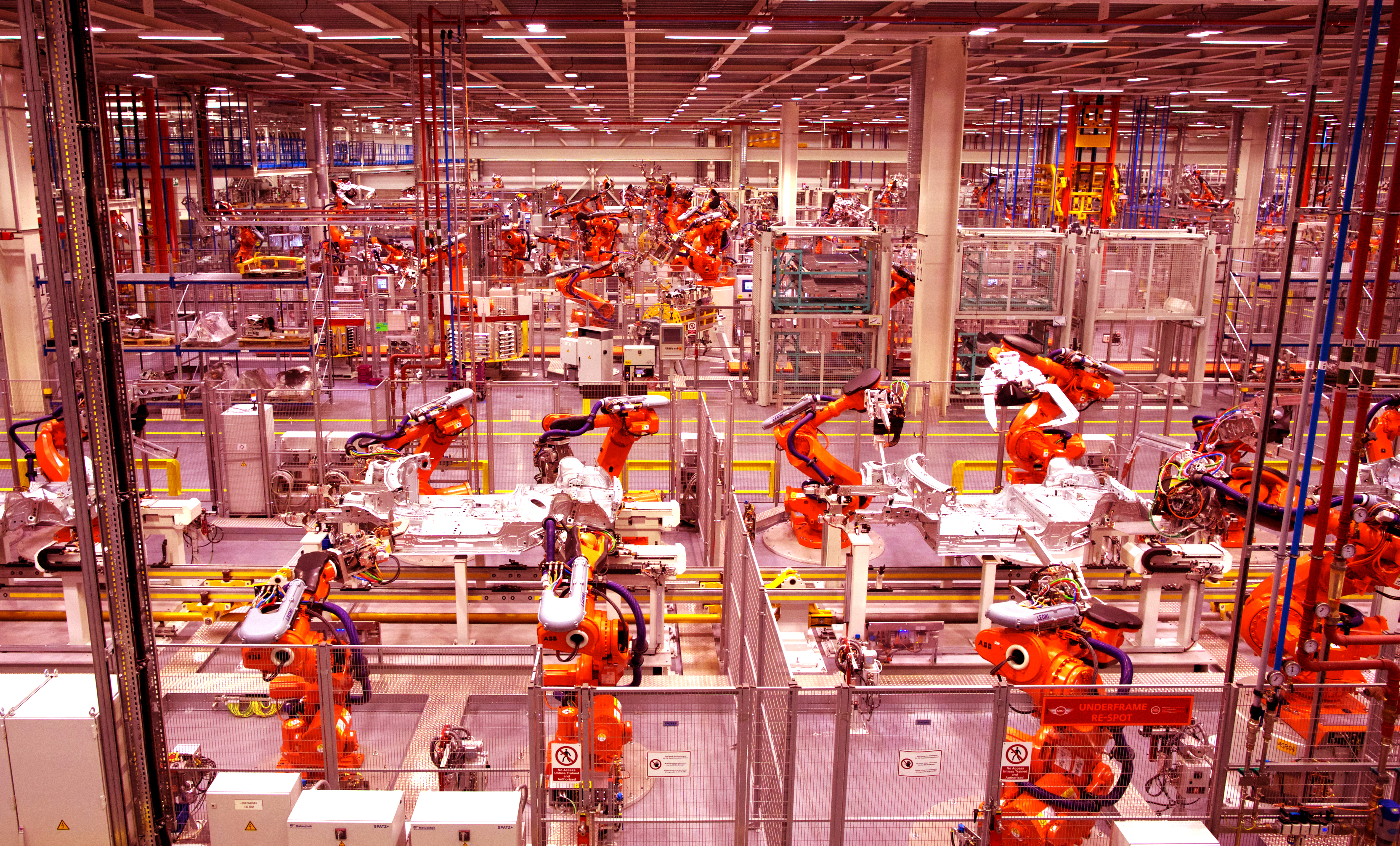

Elon Musk, Sam Harris, Ray Kurzweil and other visionaries discuss AI superintelligence at a recent conference.

Philosopher and cognitive scientist David Chalmers warns about an AI-dominated future world without consciousness at a recent conference on artificial intelligence that also included Elon Musk, Ray Kurzweil, Sam Harris, Demis Hassabis and others.

A recent conference on the future of artificial intelligence features a visionary debate among Elon Musk, Ray Kurzweil, Sam Harris, Nick Bostrom, David Chalmers, Jaan Tallinn and others.

“If all that liberals can do in response is continue to lie about the causes of terrorism and lock arms with Islamists, we have some very rough times ahead,” writes Sam Harris.