pattern recognition

Everyone encounters stereotypes. But what you do afterward says something about you.

Artificial intelligence (AI) is not nearly as smart as we want it to be, because we are not nearly as smart as we want to be.

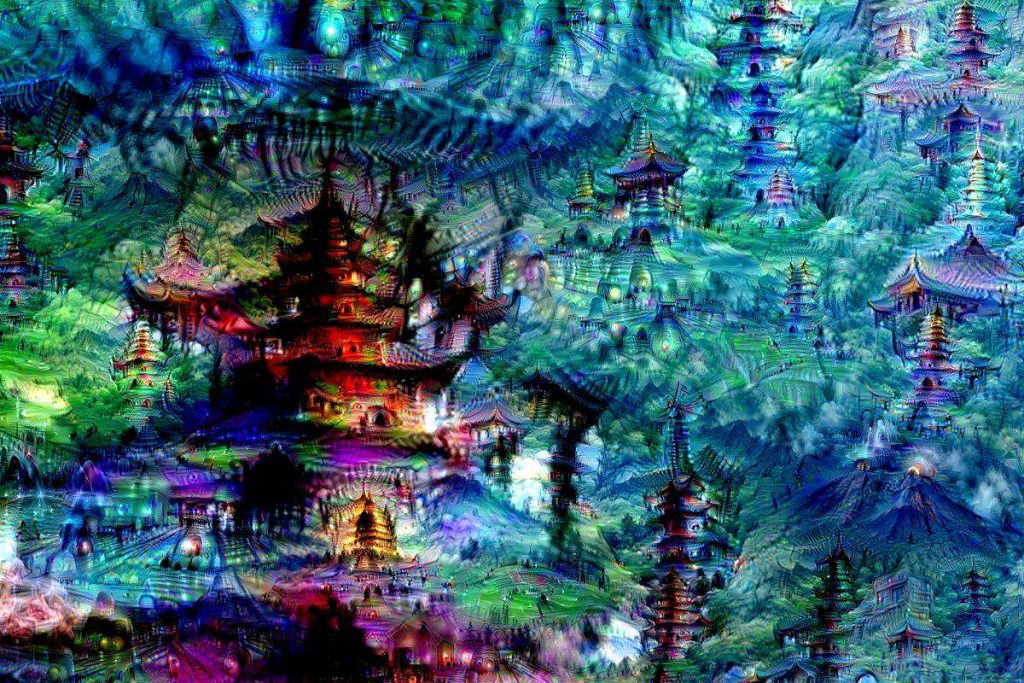

They may look odd, but it’s all part of Google’s plan to solve a huge issue in machine learning: recognizing objects in images.

We’re only seeing a fraction of the world around us. Amy Herman teaches the art of perception. If you’re game to test your visual intelligence, take one of her perception challenges.
