cognitive biases

“Our results show why debates about controversial issues often seem so futile,” the researchers said.

People think that stereotypes are true, but also that admitting this is unacceptable, so they say that stereotypes are false. Moreover, they say this to themselves too, in inner speech.

Your brain stops at the most comforting thought. The truth is somewhere beyond that. Using scientific skepticism as a guide, astrophysicist Lawrence Krauss outlines the questions that critical thinkers ask themselves.

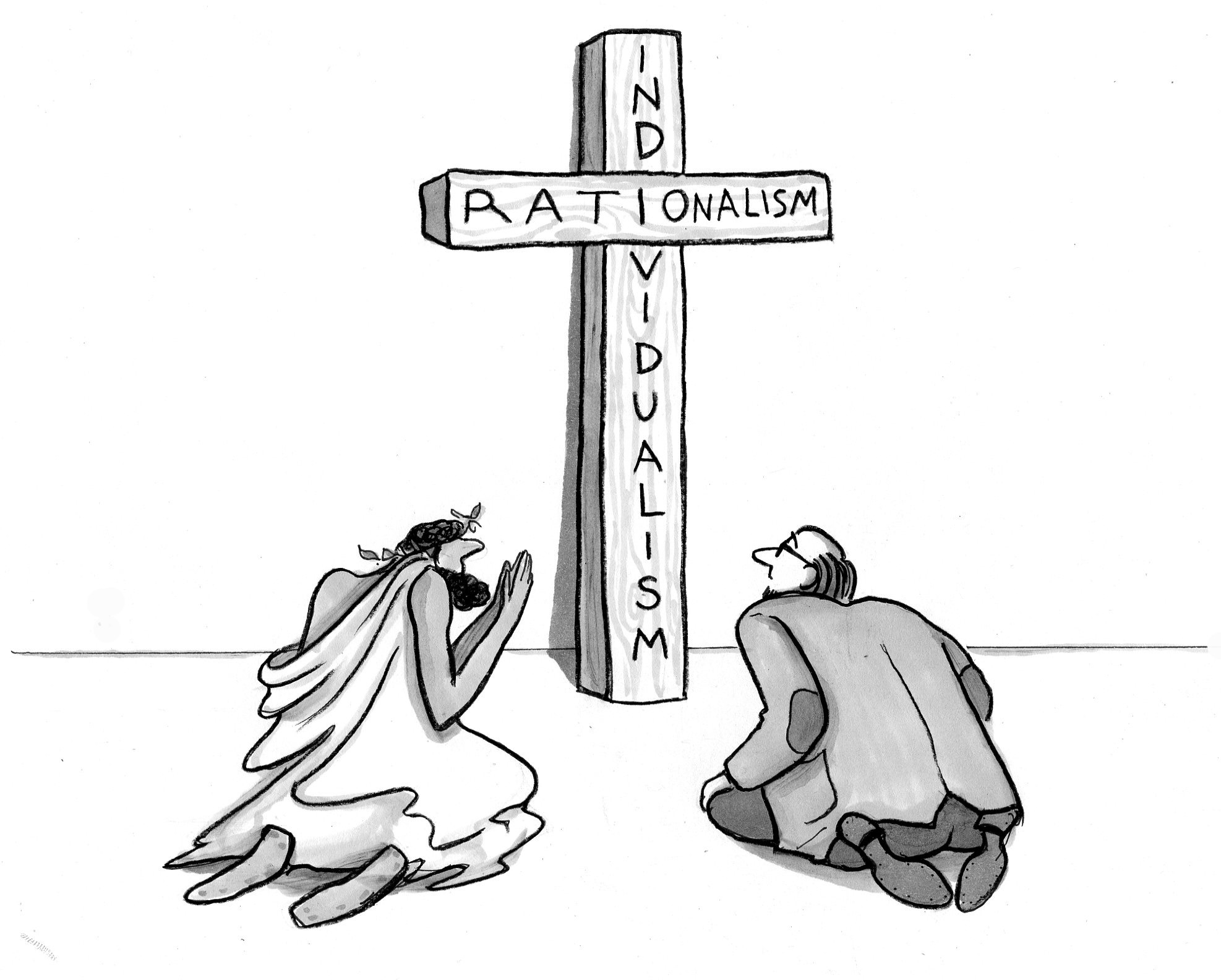

How did our world come to be ruled by a view of human nature that contradicts the testimony of much of history, and the bulk of the arts, and your daily experience? Mathoholics are to blame.