19th-century medicine: Milk was used as a blood substitute for transfusions

Photo credit: Robert Bye on Unsplash

- Prior to the discovery of blood types in 1901, giving people blood transfusions was a risky procedure.

- In order to get around the need to transfuse others with blood, some doctors resorted to using a blood substitute: Milk.

- It went pretty much how you would expect it to.

For the bulk of human history, medical science has been a grim affair. Modern innovations in the scientific process and medical techniques mean that we can determine with a good deal of accuracy what’s going to work and what won’t, and we can test those theories in a relatively safe and scientifically sound way.

That was not true in the past. Take blood transfusions, for instance. Prior to the discovery of blood types by Karl Landsteiner in 1901 and effective methods of avoiding coagulation when transfusing blood, human beings who had lost significant amounts of blood were pretty screwed, and not just because of the loss of blood, but also because of what we used to replace it with.

For a brief and bizarre time in the late 19th century, scientists were convinced that milk was the perfect substitute for lost blood.

An early blood transfusion from a rather unhappy-looking lamb to a man. Image source: Wellcome Collection. CC BY

The first successful transfusion of blood was performed in the 17th century by a physician named Richard Lower. He had developed a technique that enabled him to transfer blood without excess coagulation in the process, which he demonstrated when he bled a dog and then replaced its lost blood with that from a larger mastiff, who died in the process. Aside from being traumatized and abused, the receiving dog recovered with no apparent ill effects. Lower later transfused lamb blood into a mentally ill individual with the hope that the gentle lamb’s temperament would ameliorate the man’s insanity. The man survived; his mental illness persisted.

In 1667, Jean-Baptiste Denys transfused the blood of a sheep into a 15-year-old boy and a laborer, both of whom survived. Denys and his contemporaries chose not to perform human-to-human transfusions since the process often killed the donor. Despite their initial successes, which likely only occurred due to the small quantities of blood involved, the later transfusions made by these physicians did not go so well. Denys, in particular, became responsible for the death of the Swedish Baron Gustaf Bonde and that of a mentally ill man named Antoine Mauroy.

By 1670, these experiments had been condemned by the Royal Society, the French government, and the Vatican. Research into blood transfusion stopped for 150 years. The practice had a brief revival in the early 19th century, but there had been no progress: many of the same problems were still around, like the difficulty of preventing blood from coagulating and the recipients’ annoying habit of dying after their lives had just been saved by a blood transfusion. How best to get around blood’s pesky characteristics? By the mid-19th century, physicians believed they had an answer: Don’t use blood at all; use a blood substitute instead. Milk seemed like the perfect choice.

The first injection of milk into a human was performed in Toronto in 1854 by Drs. James Bovell and Edwin Hodder. They believed that oily and fatty particles in milk would eventually be transformed into “white corpuscles,” or white blood cells. Their first patient was a 40-year-old man whom they injected with 12 ounces of cow’s milk. Amazingly, this patient seemed to respond to the treatment fairly well. They tried again with success. The next five times, however, their patients died.

Despite these poor outcomes, milk transfusion became a popular method of treating the sick, particularly in North America. Most of these patients were sick with tuberculosis and, after receiving their milk transfusions, typically complained of chest pain, nystagmus (repetitive and involuntary movements of the eyes), and headaches. A few survived and, according to the doctors carrying out these procedures, seemed to fare better after the treatment. Most, however, fell comatose and died soon after.

Most medical treatments today are first tested on animals and then on humans, but for milk transfusions, this process was reversed. One doctor, Dr. Joseph Howe, decided to perform an experiment to see whether it was the milk or some other factor causing these poor outcomes. He bled several dogs until they passed out and attempted to resuscitate them using milk. All of the dogs died.

From “Observations on the Transfusion of Blood,” an illustration of James Blundell’s Gravitator. Image source: The Lancet

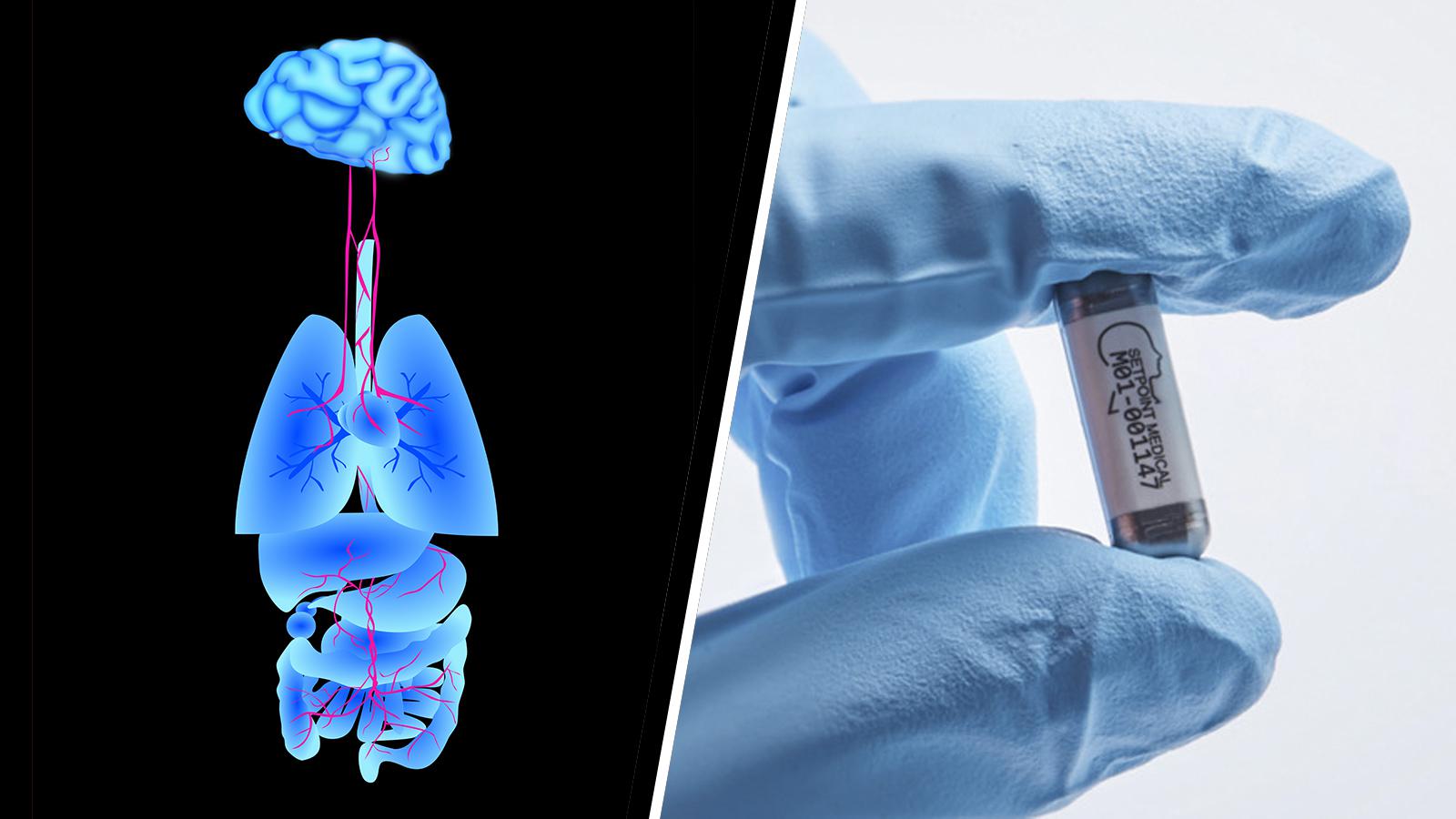

However, Howe would go on to conduct another experiment in milk transfusion, believing that the milk itself wasn’t responsible for the dogs’ deaths, but rather the large quantity of milk he had administered. He also eventually hypothesized that the use of animal milk — he sourced it from goats — in humans was causing the adverse reactions. So, in 1880, Howe gathered three ounces of human milk with the goal of seeing whether using animal milk was somehow incompatible with human blood.

He transfused this into a woman with a lung disease, who stopped breathing very quickly after being injected with milk. Fortunately, Howe resuscitated the woman with artificial respiration and “injections of morphine and whiskey.”

By this time, around 1884, the promise of milk as a perfect blood substitute had been thoroughly disproved. By the turn of the century, we had discovered blood types, and a safe and effective method of transfusing blood was established. Would these discoveries have occurred without the dodgy practice of injecting milk into the bloodstream? It’s difficult to say. At the very least, we can say with confidence that life is much better — less hairy — for sick people in the 21st century than in the 19th.