Is all of modern theoretical physics pointless? If you listen to any one of a number of disillusioned high-energy physicists (or wannabe physicists), you might conclude that it is. After all, the 20th century was a century of theoretical triumphs: we were able, on both subatomic and cosmic scales, to at last make sense of the Universe that surrounded and comprised us. We figured out what the fundamental forces and interactions governing physics were, what the fundamental constituents of matter were, how they assembled to form the world we observe and inhabit, and how to predict what the results of any experiment performed with those quanta would be.

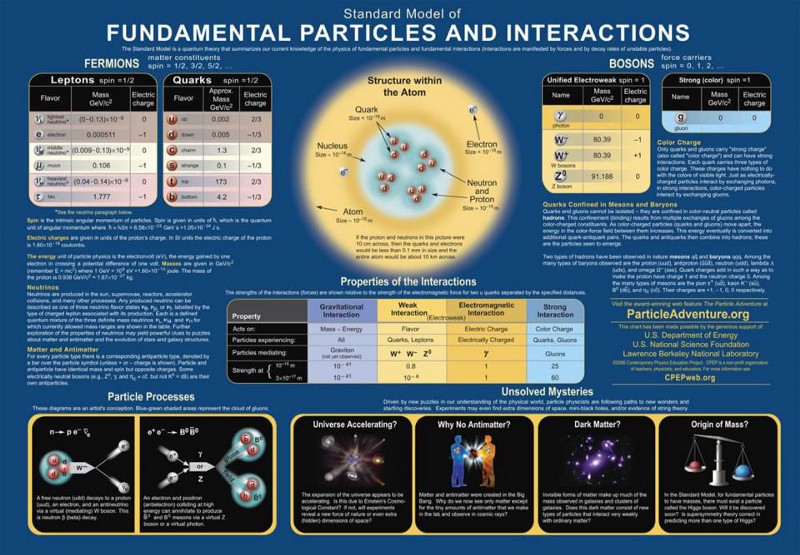

Combined, the Standard Model of elementary particles and the standard model of cosmology represent the culmination of 20th century physics. While experiments and observations have revealed a number of still-unsolved puzzles (dark matter, dark energy, cosmic inflation, baryogenesis, massive neutrinos, the strong CP problem, and numerous others), theorists have failed to make significant progress on any of these issues over the past 25+ years.

Have they all simply been wasting their time?

That’s an unfair accusation. It’s easy to criticize, but most suggestions for what theorists should be doing instead are even worse. If you take a fairer look at the situation, you’ll realize that progress has indeed been occurring, because exploring ideas at the frontiers of what experiment and observation can teach us is still the best tool we have in our arsenal.

It’s true that, in the 20th century, there was a slew of theoretical advances that led to meaningful predictions that were later verified. Some of these include:

- the prediction of positrons: the antimatter counterpart of electrons,

- the prediction of the neutrino: a subatomic, energy-and-momentum-carrying particle participating in nuclear reactions,

- the prediction of quarks as constituents of the proton and neutron,

- the prediction of additional “generations” of both quarks and leptons,

- the structure of the Standard Model, with the strong nuclear force, the weak nuclear force, and the electromagnetic force,

- the prediction of electroweak unification and the Higgs boson,

- the prediction of the Big Bang and the cosmic microwave background,

- the prediction of cosmic inflation and the imperfections in the cosmic microwave background,

- and the prediction of cold dark matter and its implications for large-scale structure formation in the Universe.

These remarkable successes led to our standard picture of the Universe today: a picture which, at its heart, consists of the Standard Model of elementary particles and of general relativity governing the gravitational force.

On the other hand, physics didn’t end with these discoveries or with this picture, which has been in place — more or less — since the early 1980s. Sure, details of cosmic inflation, the massive nature of neutrinos, and the existence of dark energy have been revealed since then: a triumph of perhaps a more modest nature.

But what has recent work in theoretical physics given us atop this standard picture?

- Supersymmetry, whose particles do not appear to exist.

- Extra dimensions, whose predictions do not appear in our experiments or observations.

- Grand unification, which has no evidence supporting its existence.

- String theory, which has not given us a single testable prediction.

- Modifications to gravity, which introduce new parameters but have failed to produce a consistent picture that supersedes general relativity.

- Modifications to cold, collisionless dark matter, which, again, introduce parameters that are wholly unnecessary, failing to supersede the simplest cold dark matter models.

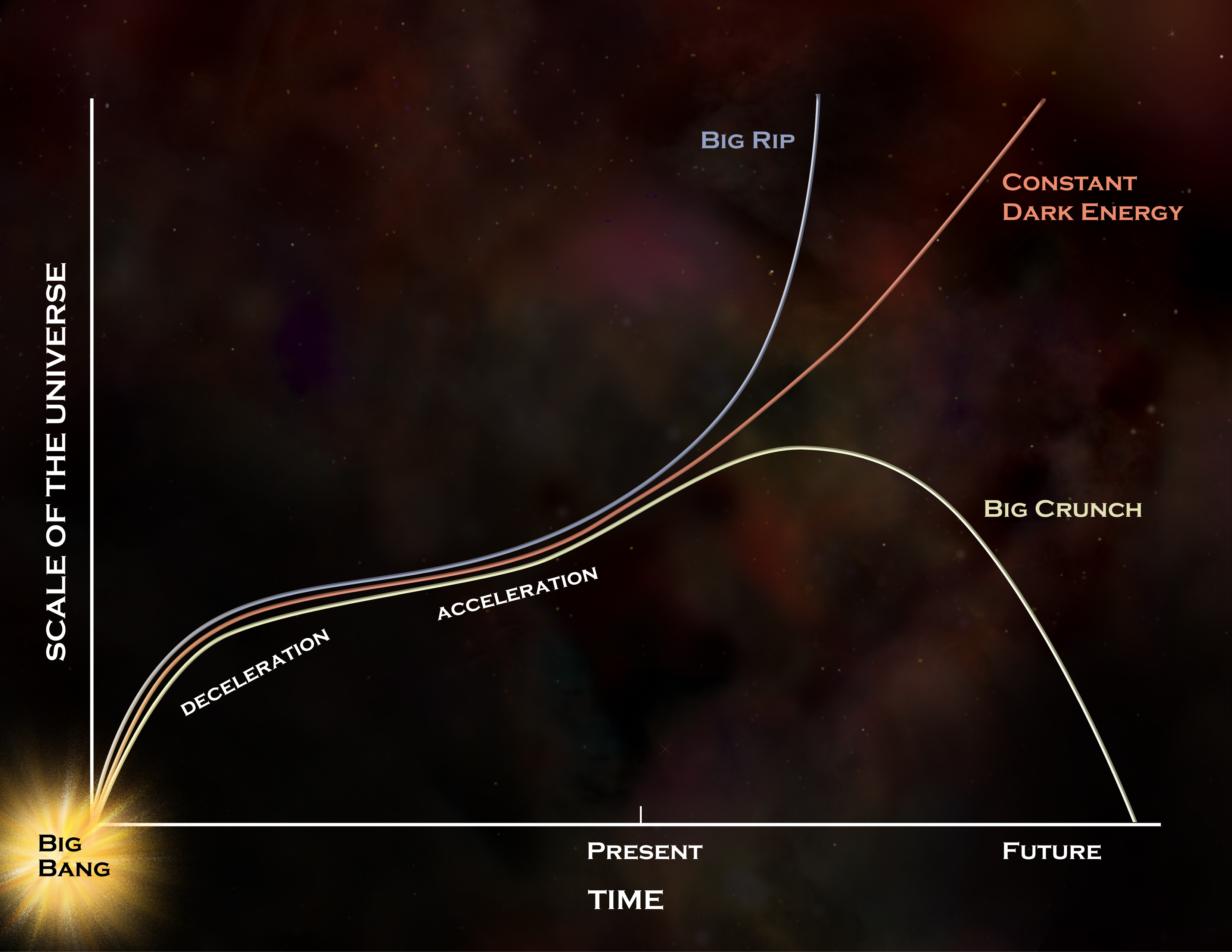

- And modifications to the simplest picture of (constant) dark energy, which yet again introduce extra parameters but offer nothing above and beyond the simplest, constant model of dark energy (except a better fit to a suggestive, but not proven, set of data that supports evolving dark energy).

There are all sorts of ways that people have attempted to bend or break the existing laws of physics over the past few decades, and none of them does a better job of explaining what we observe and measure than the standard picture without any additional modifications.

This is not what “failure” looks like.

This is what theoretical physics looks like — and what at least a portion of theoretical physics has always looked like — when we have insufficient data to point us in the right direction about what lies beyond the currently accepted consensus picture of reality.

It’s easy to go back to the 20th century and point to the successes and say, “Look how good we were at predicting what would come next!” Sure, but one could just as easily go back to the 20th century and pick out any of the much more numerous conjectures that turned out to not describe our reality very well at all. It turns out we all have a selective memory when we look back on our triumphs; we overlook all of the attempts that didn’t pan out.

- We remember the quark model, not the Sakata model.

- We remember general relativity, not the Newcomb and Hall modifications to Newton’s laws.

- We remember quantum chromodynamics, not the “guess the S-matrix” approach.

- We remember the neutron, not the idea that there were proton-electron bound states within the nucleus.

- We remember the Higgs model, not technicolor models.

- We remember the expanding Universe, not the tired light theory.

- We remember the Big Bang, not the Steady State model.

- We remember cosmic inflation, not a variable speed of light.

That’s the first problem with the “theorists are all wrong” take: as we come of age scientifically, we take for granted what was achieved in the past, but we rarely learn how we got there, including the missteps we took and the blind alleys we went down along the way.

The second problem is this: theorists cannot be expected to know what comes next when the experimental and observational data we possess are insufficient to light the way. During the 20th century, revolutionary data came in at a breathtaking rate as new particle physics experiments were performed at higher energies, with better statistics, and in novel environments, such as above Earth’s atmosphere. Similarly, in astronomy, larger apertures, advances in photography and spectroscopy, the development of multi-wavelength astronomy beyond the visible light spectrum, and the first space telescopes all brought in new observational data that upended many pre-existing ideas.

- A heavier “cousin” of the electron, the muon, was first revealed by experiments that detected its presence among the cosmic rays.

- Deep inelastic scattering experiments — i.e., high-energy collisions between particles with precision measurements of the particle shrapnel that comes out — revealed that the proton and neutron were composite particles, but the electron was not.

- Nuclear reactors, where heavy elements were transmuted into lighter ones, released antineutrinos that could be absorbed by atomic nuclei outside of the reactor, leading to their discovery.

In other words, the reason that theoretical physics was so successful in the 20th century is this:

Experiments, measurements, and observations eventually reached the point where the data we were collecting pointed the way forward, where competing ideas for what might come next could be tested against one another, and meaningful, informative conclusions could then be drawn.

If you don’t push your search into unexplored territory (better and cleaner data, greater statistics, higher energies, greater precision, smaller distance scales, etc.), you won’t be able to find anything novel.

- Sometimes, you charge into unexplored territory and don’t find anything novel; this indicates that the currently prevailing theories are valid over a larger range than you previously knew.

- Sometimes, you charge into unexplored territory and you do find something novel: something you anticipated might be there. One new idea (or set of ideas) is suddenly much more interesting than before, as it now has the best kind of support behind it: experimental/observational data.

- Sometimes, you charge into unexplored territory and not only do you find something novel, you find something you hadn’t anticipated. That’s the spirit behind the saying, “The most exciting phrase in science is not ‘Eureka!’ but rather ‘That’s funny.’”

- And sometimes, you want to charge into unexplored territory, but a lack of either funding, imagination, or both prevents you from doing so.

Without novel experiments or observations to guide us, all we can do is pursue ideas of our own concoction that don’t conflict with the data we already possess. This typically involves a conservative approach: we attempt to add a new parameter, a new particle, or a new interaction, to replace a constant with a variable, to (slightly) violate a conservation law, to (slightly) break a symmetry, etc. Exploring the consequences of any of these changes lets us map out the boundary of our theoretical wiggle room: the line between what remains possible and what’s already ruled out.

We cannot alter things too greatly, or the new idea will arrive already ruled out by old data. We also cannot simply throw in too many new parameters without sufficient motivation, or we’ll needlessly overcomplicate matters without gaining any substantial insight into what the data can actually constrain. (The “Why not both?” approach, when considering two speculative theoretical options, always succumbs to this pitfall.) And we cannot put too much weight behind one novel, unconfirmed experimental result of dubious significance: that is a form of ambulance-chasing, and deriding such an approach is completely justified.

Here are some uncomfortable truths for theorists out there, professionals and armchair amateurs alike.

- Most of the ideas that you will have, when it comes to superseding our known and accepted theories, are not new ideas, but already exist in the literature.

- Most of the new ideas that you do have will, upon further inspection, turn out to be fatally flawed for any of a number of reasons: they will turn out to be bad ideas.

- And most of the new, good ideas that you have, interesting though they may be, will turn out to not describe our reality at all, as nature is under no obligation to conform to even the best of our ideas.

- And finally, if you have not done the hard work of quantifying the physical effects that will arise from your new idea, you don’t have a theory at all: you have a half-baked guess.

Coming up with a new, good idea that makes explicit, testable predictions, whose results can then be compared against the alternatives (including the previously prevailing theory), is a very tall order, but it’s a necessary hurdle to clear for any novel idea to be accepted. As Lord Kelvin once put it:

“I often say that when you can measure what you are speaking about, and express it in numbers, you know something about it, when you cannot express it in numbers, your knowledge is of a meager and unsatisfactory kind; it may be the beginning of knowledge, but you have scarcely, in your thoughts advanced to the stage of science, whatever the matter may be.”

That isn’t to say that theorists, in pursuing today’s ideas, are necessarily doing anything more notable than stabbing in the dark. We have pieces of the puzzle that don’t quite fit.

- We see CP-violating decays in the weak interactions in some systems but not others, and we don’t know how to predict the magnitude of that violation.

- We don’t see any CP violation in the strong interactions, even though the Standard Model does not forbid it, and we do not understand what suppresses or prevents it.

- We know that the Higgs field, by coupling to the quarks, the charged leptons, and the W and Z bosons, gives them their rest masses, but we don’t know how to calculate what those masses ought to be.

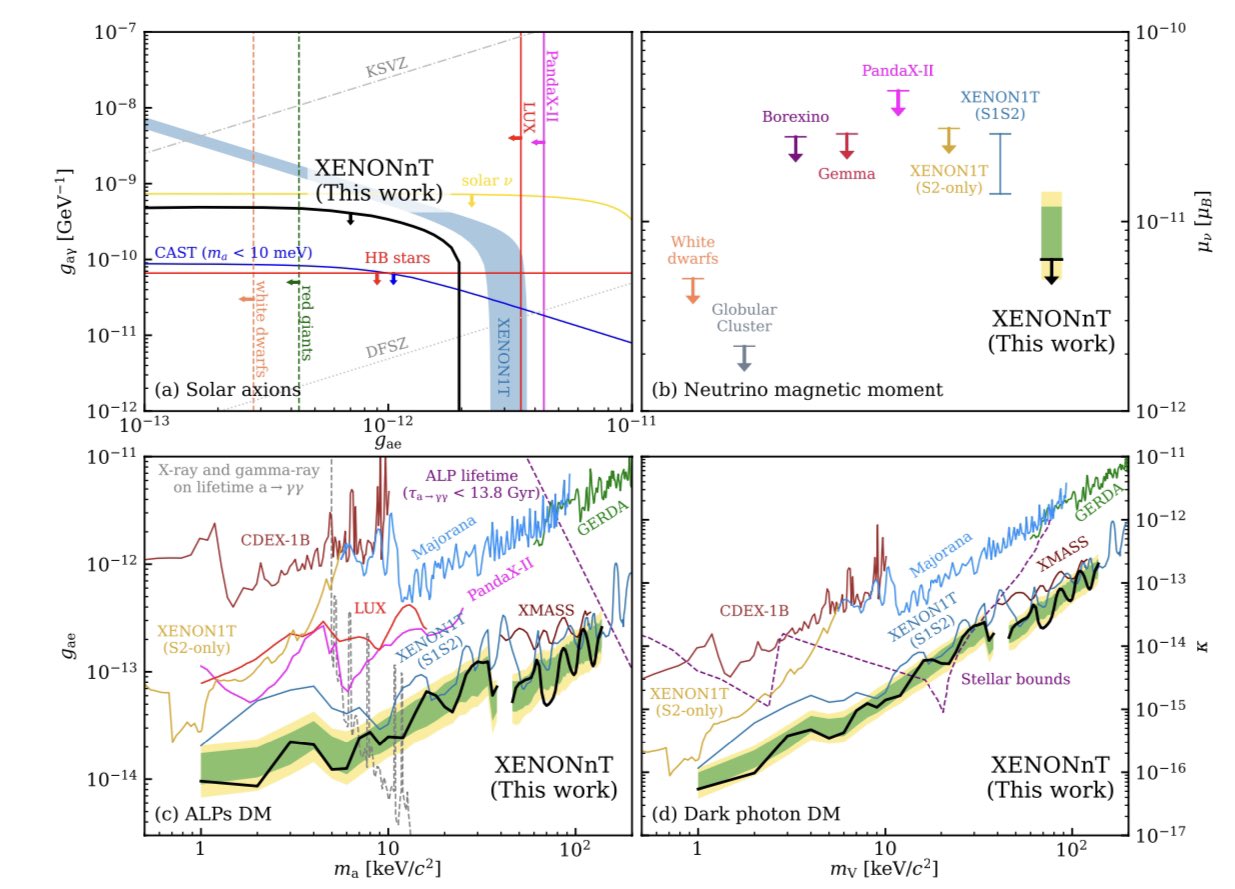

- We know, from astrophysical observations, that some invisible form of energy exists: it behaves as though it has a positive rest mass, yet it has no detectable cross-section with light or normal matter. What we don’t know is its nature.

- We know that there are quantum fields permeating empty space, but we don’t know how to calculate the zero-point energy of those fields. We also know, astrophysically, that the Universe expands as though there’s a positive, non-zero energy inherent to space itself, but we can only measure that energy, not calculate it.

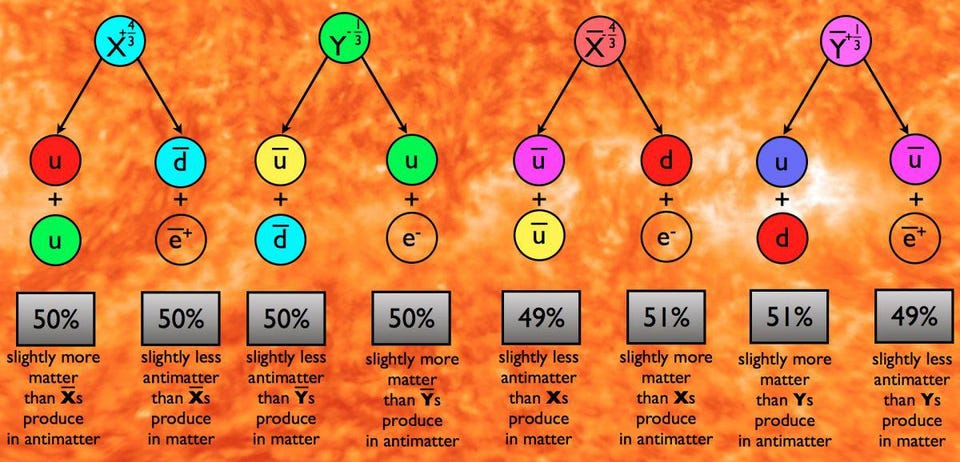

- We know that the Universe has more matter than antimatter in it, but not how that asymmetry was generated.

- We know that neutrinos have non-zero rest masses, but not what gives them those masses.

And yet, these clues have not been sufficient to lead us to answers that experiments or measurements have borne out. We have successfully reverse-engineered a number of possible scenarios, but no definitive cause has yet been identified for any of these effects.

It’s very easy (too easy, in fact) to look at the current state of affairs and assert, “You’re all doing it wrong.” We know. All of us know we’re doing it wrong. But here’s the important thing you have to remember: as theorists, if we knew what doing it right looked like, we’d do that, and we’d put these puzzle pieces together in a way that finally moves the field forward. No one is doing that, precisely because there’s no clear path to doing so successfully.

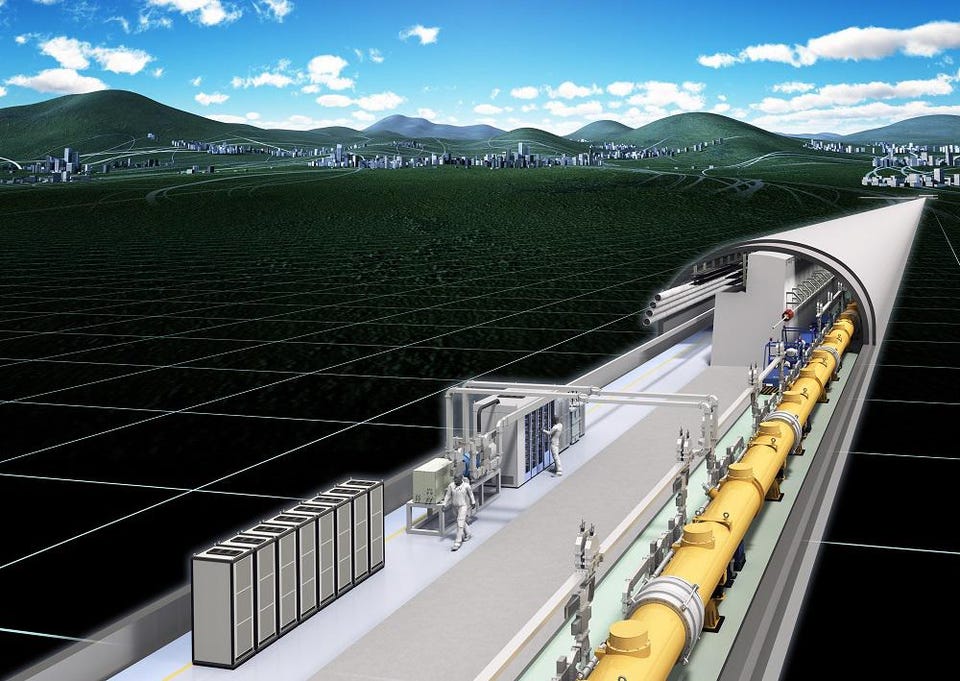

What we do know, however, is that the best hope the field has to move forward beyond our current limitations lies not in more theoretical work, but in experiment and observation. Theory has gone as far as it can go without superior data; if we were to have more clues from the Universe itself, we would improve our chances of making that next critical breakthrough that takes us beyond the Standard Model of particle physics and beyond the inflationary ΛCDM model of our cosmos. That means new observatories, new experiments, and new colliders. If we want to advance, we need better information to guide us.

It’s always easier to criticize than it is to come up with a superior path forward. The best we’ve come up with is this: to let people choose for themselves what they work on. Until there’s a more compelling clue that shows us what the Universe is actually doing, we’ve got nothing better than to simply keep trying our best.

This article was first published in October of 2022. It was updated in August of 2025.