How did we fool ourselves into believing in a new particle that wasn’t there?

The 750 GeV particle that the LHC thought it saw? A sham. And we all should have known.

“The first principle is that you must not fool yourself, and you are the easiest person to fool.” –Richard Feynman

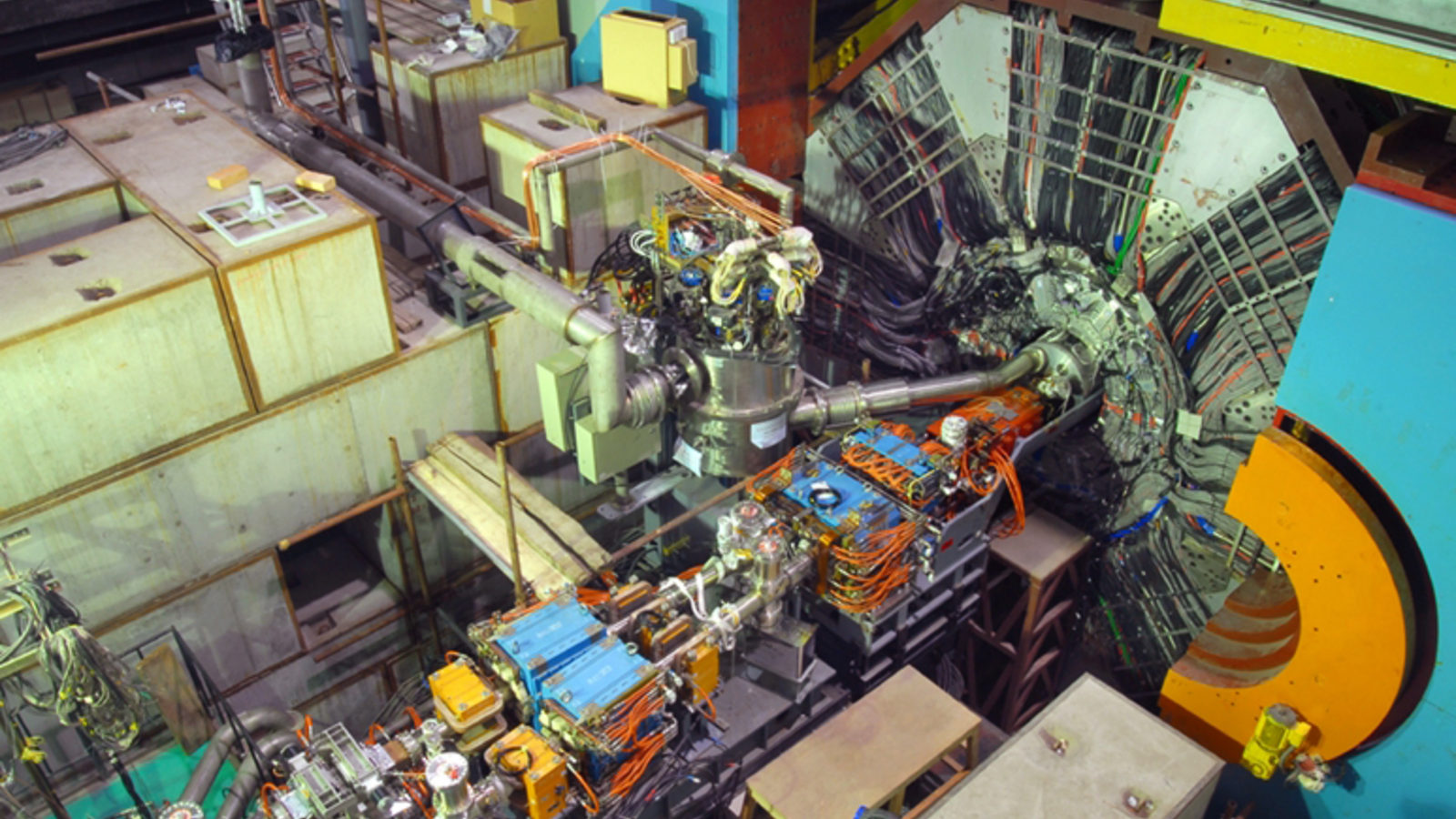

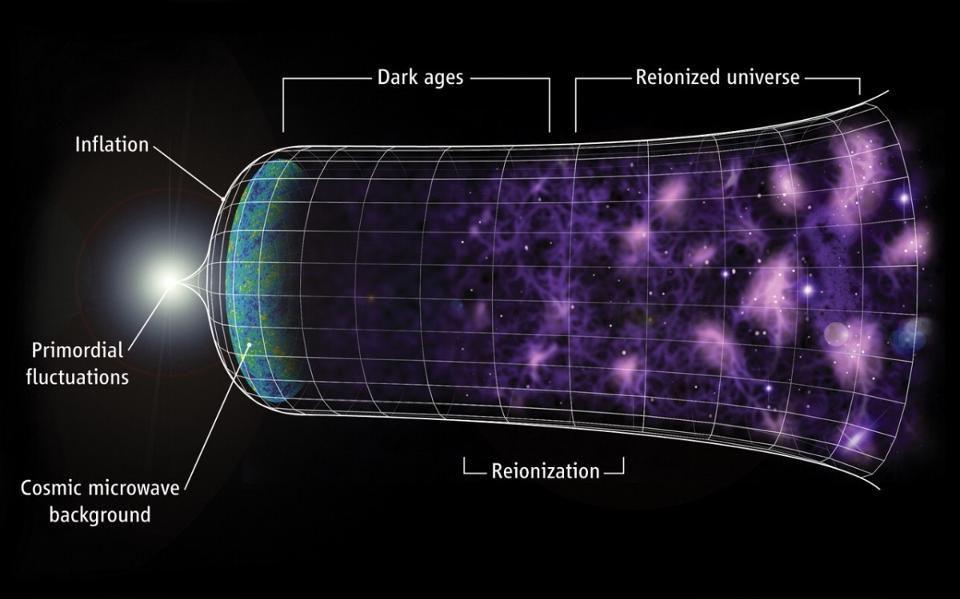

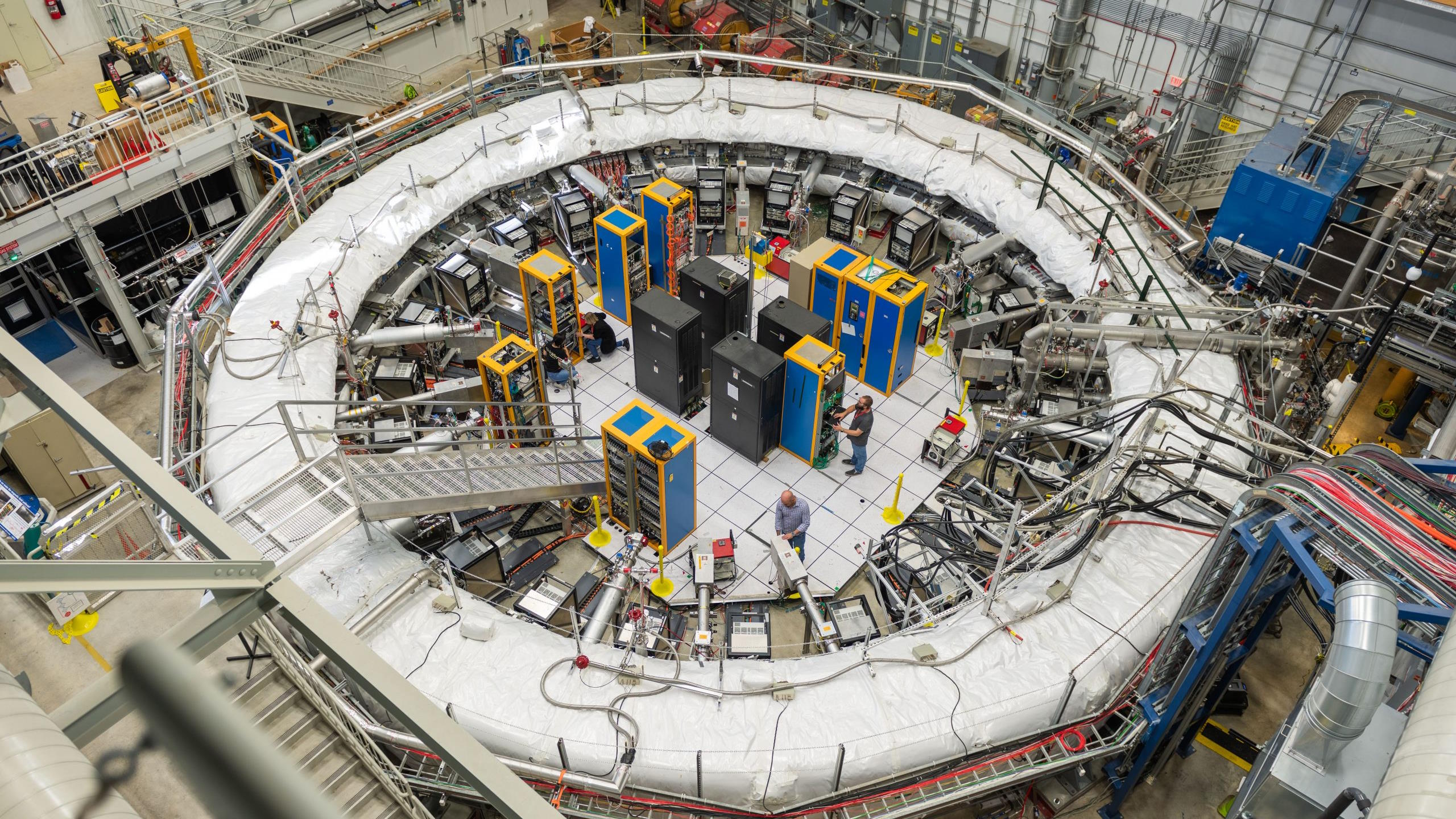

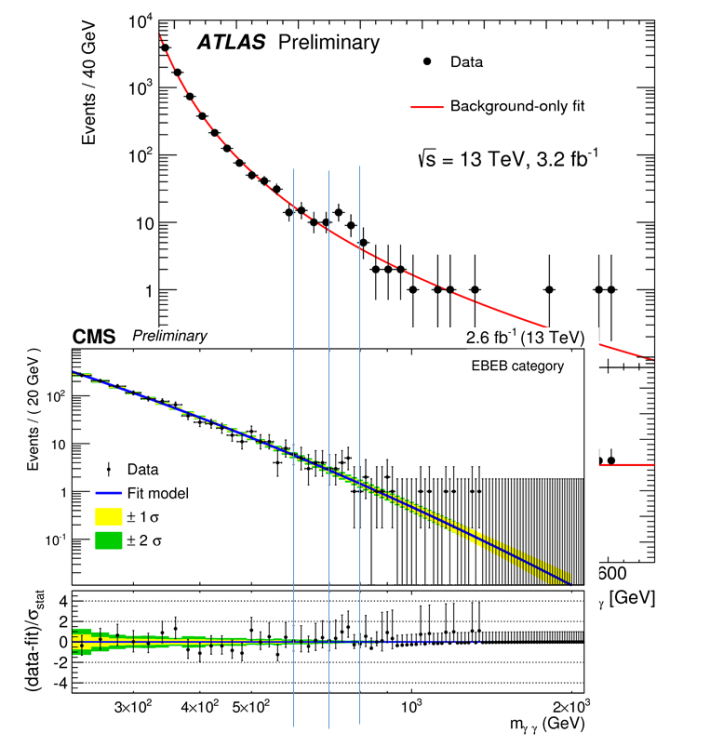

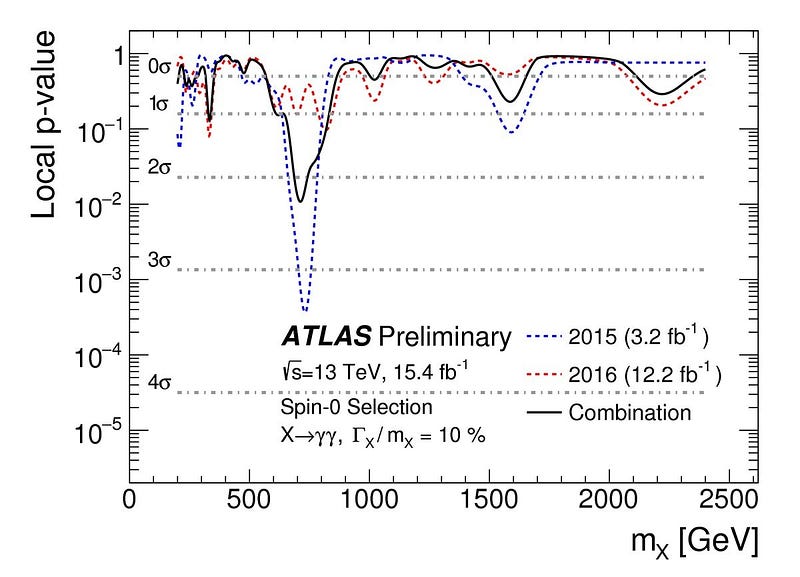

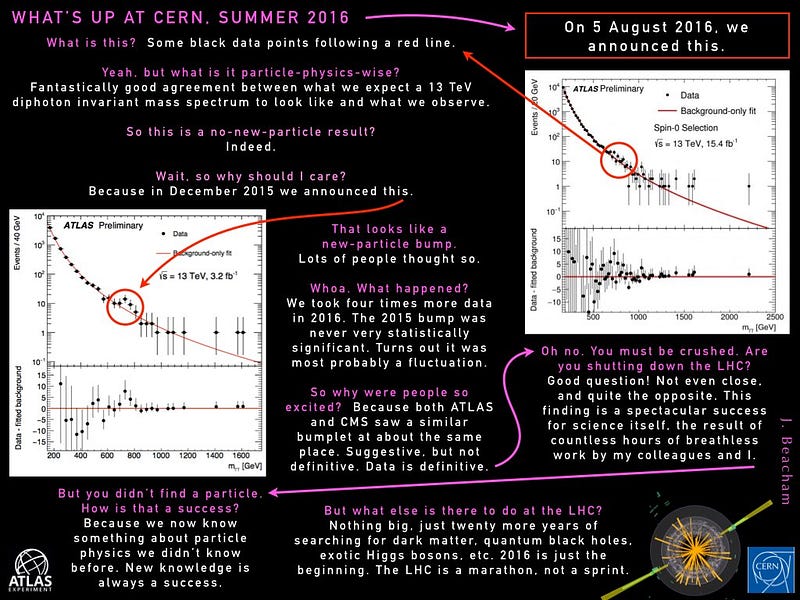

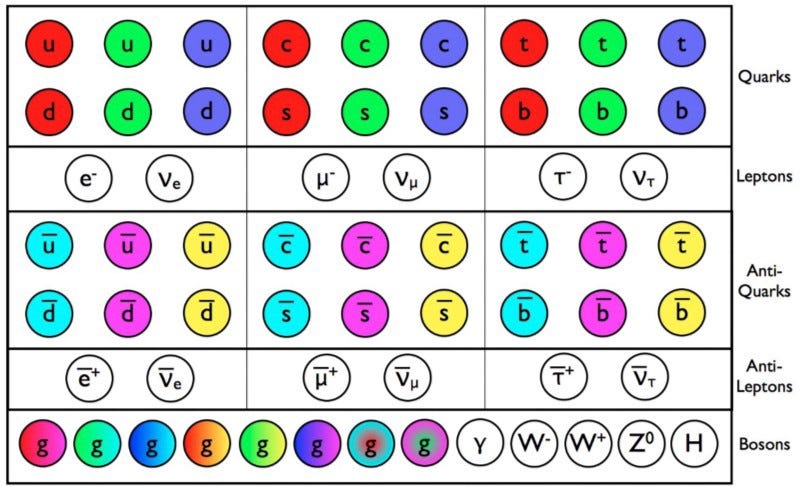

From the end of 2015 until now, the particle physics community had been all abuzz about an incredible new possibility: a new fundamental particle that the LHC showed hints of. It couldn’t have been a quark, a lepton, or any of the predicted bosons. At 750 GeV, it appeared to be more massive than anything else ever discovered, with more than four times the mass of the top quark, the heaviest known particle. And signals of it appeared independently in the data from both detectors, CMS and ATLAS. Many physicists were touting that this was most likely real, excited that the first fundamental particle beyond the Standard Model was about to be discovered. Some were even giving ridiculously long odds in its favor, claiming there was less than a 1-in-1,000 chance this wasn’t real. If you looked at the 2015 data, there was very clearly something going on at that particular energy, and it was the great hope of physicists that more data would elevate this hint into the realm of robust discovery.

Yet the 2016 data, where four times as much information came in as in 2015, had other plans. Instead of confirming this particle, the evidence overwhelmingly pointed to the fact that nothing was there at all. “Statistical fluke” was the general conclusion, and the evidence for this particle, like the evidence for all fundamental, beyond-the-Standard-Model particles ever proposed, has vanished with more and better data. The big question is, how did we wind up in this situation in the first place? How did we fool ourselves into believing there was a particle there at all? And did the data warrant us believing in this particle, or were we so eager to believe in something that we were the fools, and the data was just incidental?

Odds are a funny thing if you’re not accustomed to them. If you have very long odds of something happening (1-in-100, 1-in-1,000, 1-in-1,000,000), then you expect it won’t happen unless you create a large number of opportunities for yourself. (And even then, only if you have a certain amount of luck.) If you flip a fair coin ten times, for example, you don’t expect to get 10 heads in a row: that’s a very rare occurrence. But if you flipped a fair coin a thousand times, you wouldn’t be nearly as surprised if you looked anywhere in your data of the 1,000 flips and found 10 heads in a row. That’s kind of like what we do in particle physics.
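To put rough numbers on the coin-flip comparison, here is a minimal Python sketch. It assumes a perfectly fair coin and independent flips, and the function name `prob_run_of_heads` is purely illustrative. It tracks the probability of each possible current streak of heads, flip by flip, and accumulates the chance that a full run has already shown up.

```python
def prob_run_of_heads(n_flips: int, run_length: int, p_heads: float = 0.5) -> float:
    """Probability that n_flips tosses contain at least one run of run_length heads."""
    # state[j] = probability of currently having j trailing heads,
    # given that no full run has been seen so far
    state = [0.0] * run_length
    state[0] = 1.0
    hit = 0.0  # probability that a full run has already occurred
    for _ in range(n_flips):
        new_state = [0.0] * run_length
        for j, prob in enumerate(state):
            new_state[0] += prob * (1.0 - p_heads)      # tails resets the streak
            if j + 1 == run_length:
                hit += prob * p_heads                   # heads completes the run
            else:
                new_state[j + 1] += prob * p_heads      # heads extends the streak
        state = new_state
    return hit


if __name__ == "__main__":
    print(f"10 heads in 10 flips:            {prob_run_of_heads(10, 10):.6f}")
    print(f"10 heads somewhere in 1,000:     {prob_run_of_heads(1000, 10):.3f}")
```

Ten heads in ten flips is a 1-in-1,024 event, while a run of ten heads somewhere in 1,000 flips turns up about 38% of the time under these assumptions. The rare event did not become any less rare; there were simply far more places for it to happen.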

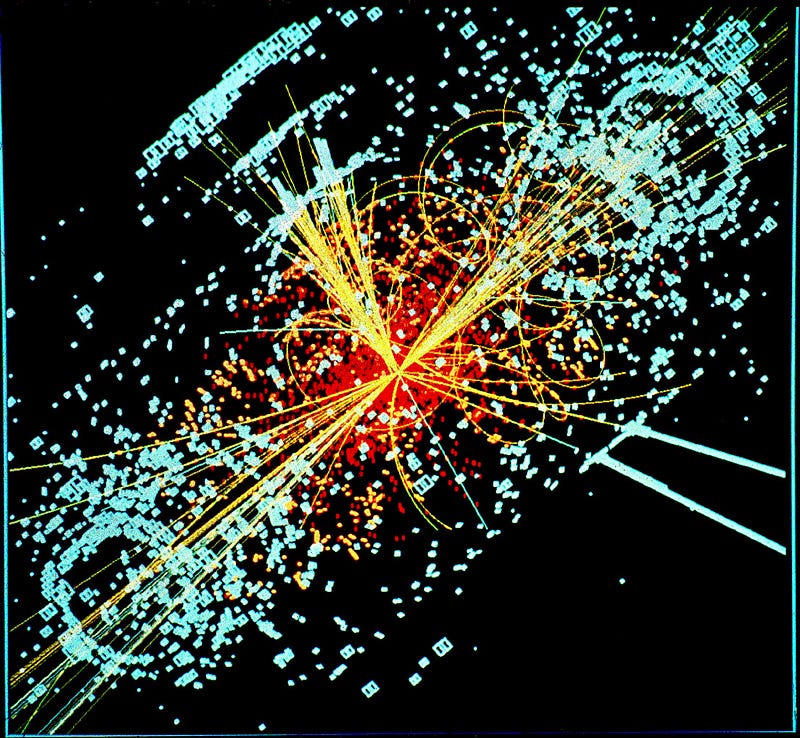

It’s very rare to get one collision so perfect that we can point to that and say, “right there, that’s a new particle!” It’s been a very long time since that definitively happened, and that isn’t how discoveries are generally made. Instead, we take a whole slew of data from billions upon billions of collisions, calculate what we expect out of the Standard Model, and compare our observations with what we predicted. You almost never get an exact match, just like you almost never get exactly 500,000 heads and 500,000 tails if you flip a coin 1,000,000 times, but you get something that’s close within a certain amount of error. Given the amount of statistics we have, we even know how big that error should be.
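The million-flip example can be checked directly. The sketch below assumes a fair coin and uses only Python's standard library; the function names are illustrative. It computes how likely an exact 500,000/500,000 split is, and the size of the typical deviation (one standard deviation of the binomial distribution) you should expect instead.

```python
import math


def exact_heads_probability(n_flips: int, n_heads: int) -> float:
    """Probability of getting exactly n_heads heads in n_flips fair coin flips."""
    # Work with logarithms: the binomial coefficient itself is far too large
    # to hold in a float when n_flips is a million.
    log_prob = (
        math.lgamma(n_flips + 1)
        - math.lgamma(n_heads + 1)
        - math.lgamma(n_flips - n_heads + 1)
        - n_flips * math.log(2.0)
    )
    return math.exp(log_prob)


def heads_standard_deviation(n_flips: int) -> float:
    """Typical (one standard deviation) fluctuation in the number of heads."""
    return math.sqrt(n_flips * 0.5 * 0.5)


if __name__ == "__main__":
    n = 1_000_000
    print(f"P(exactly {n // 2} heads) = {exact_heads_probability(n, n // 2):.6f}")
    print(f"expected fluctuation      = +/- {heads_standard_deviation(n):.0f} heads")
```

An exact split happens less than 0.1% of the time, but the count of heads typically lands within about 500 of 500,000. That predictable spread is the "error" we know how to calculate, and it is the yardstick against which any apparent excess has to be measured.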

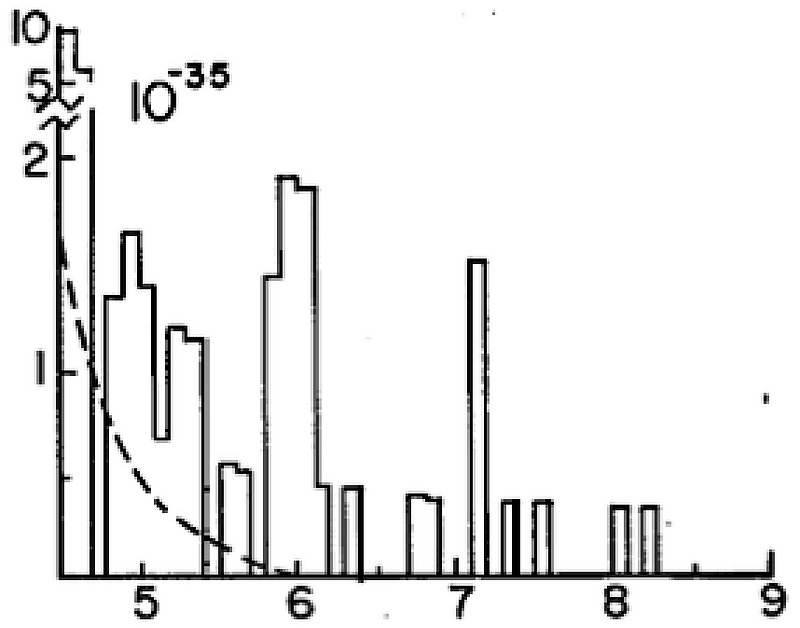

A 1-in-100 or 1-in-1,000 result isn’t all that good. In 1976, physicists were looking for an upsilon particle: a hypothetical particle that would be made up of a bottom quark and a bottom anti-quark. We knew to look for this even before the bottom quark had been found, thanks to the Standard Model. The early data that came in showed a “signal” for this that was somewhat significant close to the expected energy, and so it was published, with a discovery announced. With the next data run, it became clear that the particle didn’t exist, and so it became known as the “oops-Leon” (after Leon Lederman, who announced the discovery), with the actual upsilon particle finally appearing a little over a year later. The mistake? We didn’t have enough statistical significance, and rare fluctuations — like getting 10 heads in a row — are common if you have enough data.

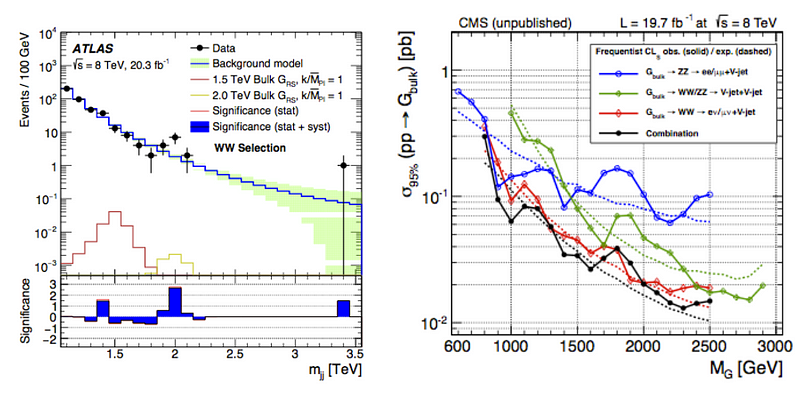

That’s exactly what happened at the LHC, and it’s happened before. There was a ~2 TeV signal for a “diboson excess,” or a potential new particle producing more events in a particular decay channel. It went away with more data. The ~750 GeV signal was a “diphoton excess,” meaning pairs of photons with a combined energy of around 750 GeV were produced more frequently than expected. As more data was taken, though, that signal went away. And that’s the situation we find ourselves in today.

All of this wouldn’t be such a big deal if most particle physicists weren’t desperate to find a new particle beyond the Standard Model, a framework that’s been understood, and whose predictions have held up, for around 50 years now. For all the mysteries of nature we have (why there’s more matter than antimatter, why neutrinos have mass, why there’s no strong CP-violation, why there’s dark matter and dark energy), we haven’t found any new fundamental particles to explain them. They’re just puzzles without a definitive solution. We talked about a new particle because we wanted a new particle, not because we had found one. And when the new data came in, we realized we had been fooling ourselves with a false hope.

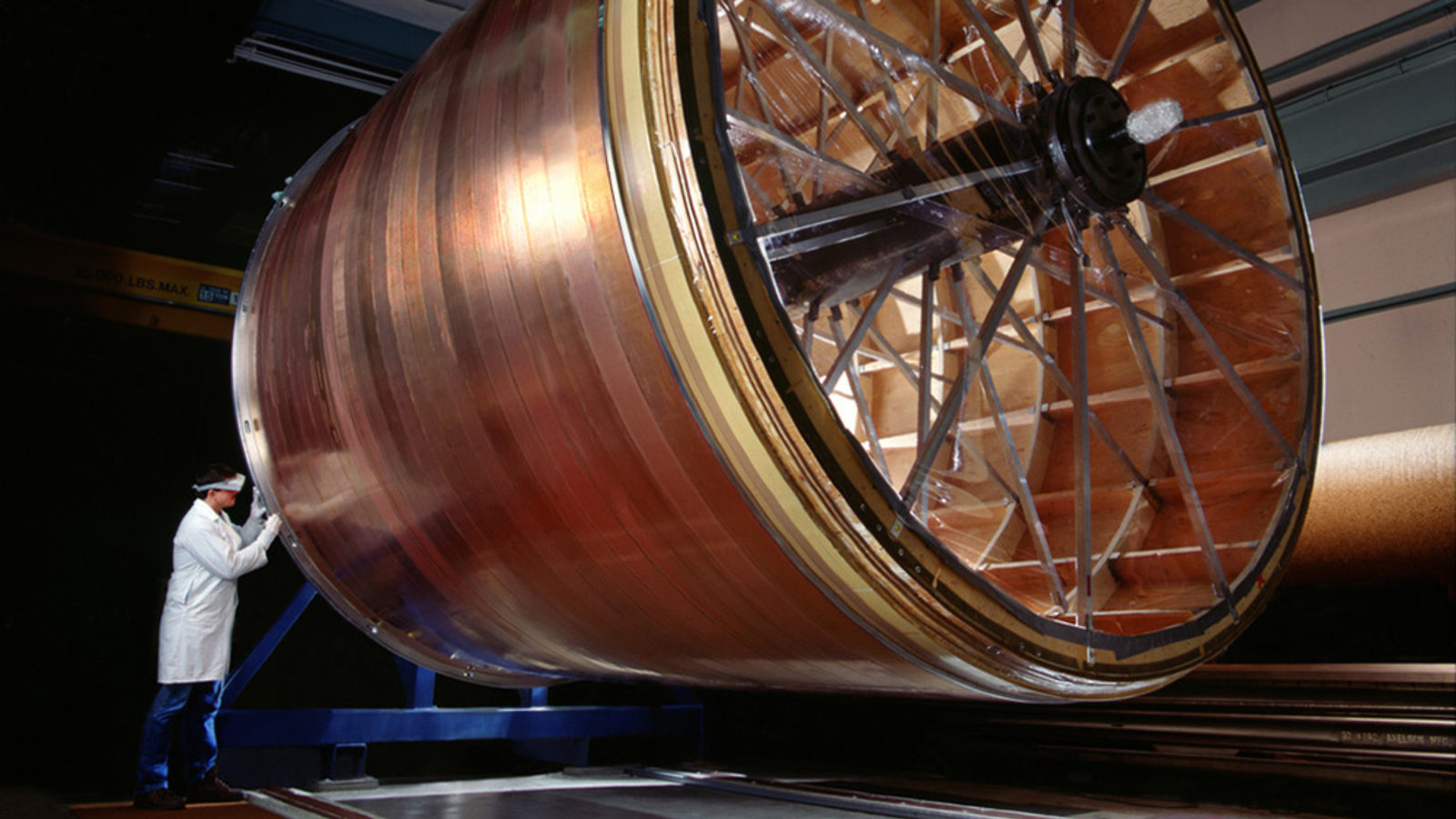

I suppose it’s a very human endeavor, the same way someone in desperate economic straits might buy a lottery ticket: for hope, not because they think they’re going to win. Believing in this signal was quite akin to that. The evidence wasn’t quite there, the odds were against it, and finding an unlikely fluctuation somewhere in the CMS and ATLAS detectors combined was quite likely, given all the data we had compiled. When we announced the discovery of the Higgs boson some 4–5 years ago, we had reached a significance threshold of 5σ, which has “fluke” odds of less than one-in-a-million. That threshold has been the gold standard for discovery ever since the 1970s, mostly due to the “oops-Leon” incident. This ~750 GeV signal? It had about 1-in-3,000 odds of being a fluke, which is a substantial chance, given that we had billions of proverbial coin tosses.
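For a sense of how far apart those two thresholds are, here is a rough Python sketch that converts between "number of sigma" and fluke odds. It assumes a one-sided Gaussian tail, which is the usual convention for quoting significance but is an assumption here, and the function names are illustrative. The last function, with its hypothetical `n_places` parameter, is a crude stand-in for the fact that we look in many places at once; the value of 100 used below is made up for illustration and is not a figure from the LHC analyses.

```python
import math
from statistics import NormalDist


def sigma_to_fluke_probability(n_sigma: float) -> float:
    """One-sided Gaussian tail probability beyond n_sigma standard deviations."""
    return 0.5 * math.erfc(n_sigma / math.sqrt(2.0))


def fluke_probability_to_sigma(p_fluke: float) -> float:
    """Inverse of the above: how many sigma correspond to a given fluke probability."""
    return NormalDist().inv_cdf(1.0 - p_fluke)


def chance_of_fluke_somewhere(p_local: float, n_places: int) -> float:
    """Chance of at least one fluke this rare among n_places independent looks.

    n_places is a hypothetical count of independent places to look.
    """
    return 1.0 - (1.0 - p_local) ** n_places


if __name__ == "__main__":
    p_five_sigma = sigma_to_fluke_probability(5.0)
    print(f"5 sigma fluke odds: about 1 in {1.0 / p_five_sigma:,.0f}")
    print(f"1-in-3,000 odds:    about {fluke_probability_to_sigma(1.0 / 3000.0):.1f} sigma")
    # Illustrative only: 100 independent places to look is an invented number.
    print(f"fluke somewhere in 100 looks: {chance_of_fluke_somewhere(1.0 / 3000.0, 100):.1%}")
```

Under these assumptions, 5σ corresponds to fluke odds of roughly one in a few million, while 1-in-3,000 works out to only about 3.4σ. The more independent places you search, the more likely it becomes that a fluctuation of that size appears somewhere.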

When it comes to new discoveries that usher in a new era of physics, it’s up to all of us not to chase our greatest hopes only to meet with disappointment, but to look at what the evidence says with a critical eye, and with a view to all we have (and haven’t) learned from our past experience with statistics. After all, Richard Feynman’s words about new discoveries in science ring just as true today as when he uttered them: “The first principle is that you must not fool yourself, and you are the easiest person to fool.”

This post first appeared at Forbes.