For all of history, there’s been an underlying but unspoken assumption about the laws that govern the Universe: that if you know enough information about a system, like the positions, momenta, and properties of the particles within it, then you can use that information to predict precisely how that system will evolve and behave in the future. The assumption is, in other words, that the equations governing the Universe are deterministic. The classical equations of motion — Newton’s laws — are completely deterministic. The laws of gravity, both Newton’s and Einstein’s, are deterministic. Even Maxwell’s equations, governing classical electricity and magnetism, are 100% deterministic as well.

But that picture of the Universe got turned on its head with a series of discoveries that began in the late 1800s. Starting with radioactivity and radioactive decay, humanity slowly uncovered aspects of the nature of reality that were fundamentally quantum, casting doubt on the idea that we live in a deterministic Universe. In terms of predictive power, many aspects of reality could only be discussed in a statistical fashion: a set of possible outcomes with appropriately weighted probabilities could be presented, but which one would occur, and when, could not be established in advance of a measurement. The hope of avoiding the necessity of “quantum spookiness” was championed by many, including Einstein, with the most compelling deterministic alternative put forth by Louis de Broglie and David Bohm.

Decades later, Bohmian mechanics was finally put to a compelling experimental test, which — to the chagrin of many — it failed spectacularly. Here’s how the best alternative to the spooky nature of our quantum reality simply fails.

Is nature deterministic? There are all sorts of experiments we can perform that demonstrate that we cannot predictively know the outcomes of our quantum reality in any sort of definitive fashion.

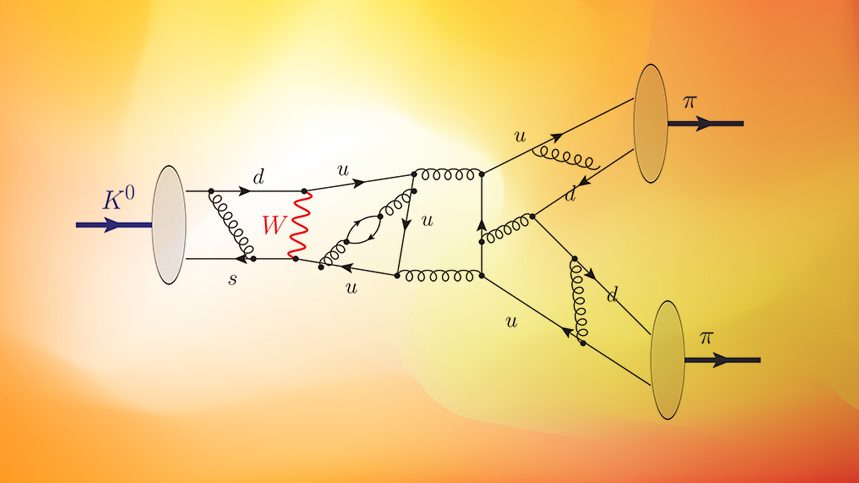

- Place a number of radioactive atoms in a container and wait a specific amount of time. When you observe your container at that later time, you can predict how many atoms remain versus how many have decayed, on average, and what the probability distribution will be of having a certain specific number of undecayed atoms remaining after a certain amount of time has elapsed. However, you cannot predict which atoms will decay versus which ones will remain, nor can you predict the exact number of atoms that will have decayed versus the ones that remain. Those latter aspects must be measured in order to be determined.

- Fire a series of particles through a narrowly spaced double slit and you’ll be able to predict what sort of interference pattern will arise on the screen behind it, including the spacing and distribution of the areas of constructive and destructive interference. However, for each and every particle, even when you’re capable of sending them through the slits one at a time, you cannot predict — other than purely probabilistically — where each one is going to land. You must measure them, individually, in order to determine their outcomes.

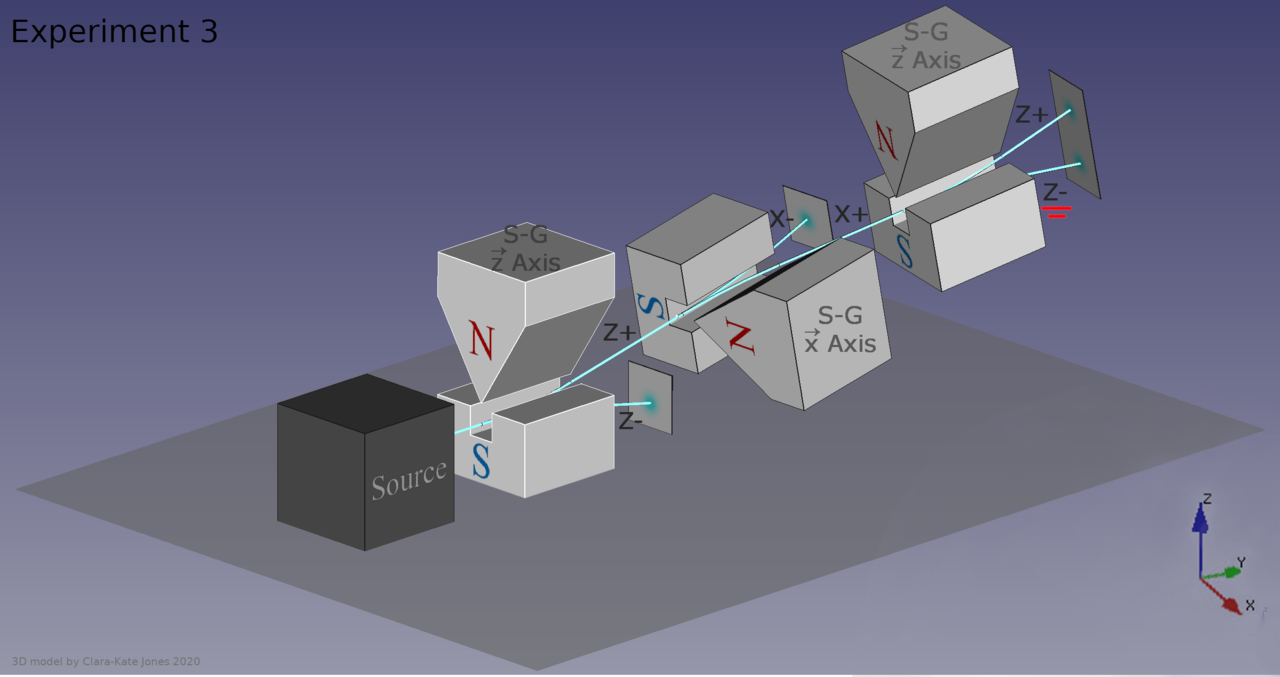

- Pass a series of particles (that possess quantum spin) through a magnetic field and you’ll observe that half of them deflect “up” and half “down,” aligned with or against the direction of the field. If you pass them through another magnet oriented the same way, the ones that went “up” will still go “up” and the ones that went “down” will still go “down,” unless you first pass them through an in-between magnet oriented in one of the two perpendicular directions. If you do, the beam will split again and the particles’ spins in the original direction will be re-randomized, with no way to determine which way they’ll split when you pass them through the final magnet. Again, you must measure each individual particle to determine what its outcome was.

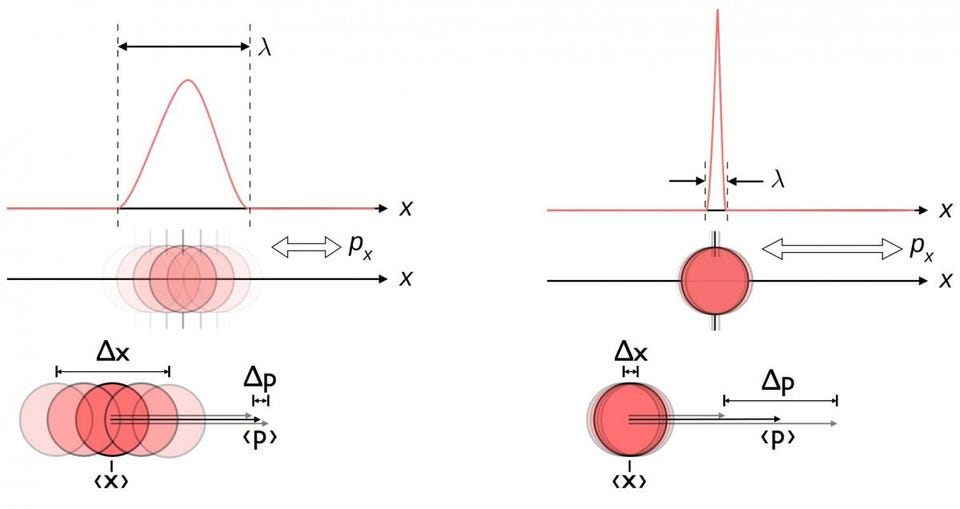
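The statistical statement in the radioactive decay example above can be made concrete with a minimal Python sketch. It assumes every atom decays independently with the same half-life, so the number of survivors follows a binomial distribution. The function names, the atom count, and the half-life are illustrative choices, not values from any experiment.

```python
import math
import random

def survival_probability(elapsed, half_life):
    """Chance that one atom is still undecayed after `elapsed` time."""
    return 0.5 ** (elapsed / half_life)

def undecayed_distribution(n_atoms, elapsed, half_life):
    """P(exactly k atoms remain) for k = 0..n_atoms (binomial, independent atoms)."""
    p = survival_probability(elapsed, half_life)
    return [math.comb(n_atoms, k) * p**k * (1 - p) ** (n_atoms - k)
            for k in range(n_atoms + 1)]

def measure_remaining(n_atoms, elapsed, half_life, rng):
    """Simulate one look inside the container: count the atoms that survived."""
    p = survival_probability(elapsed, half_life)
    return sum(1 for _ in range(n_atoms) if rng.random() < p)

# Illustrative values (assumptions): 100 atoms observed after exactly one half-life.
dist = undecayed_distribution(100, elapsed=1.0, half_life=1.0)
mean_remaining = sum(k * pk for k, pk in enumerate(dist))  # the predictable average: 50

# Each individual run is only known once it is "measured".
rng = random.Random()
samples = [measure_remaining(100, 1.0, 1.0, rng) for _ in range(5)]
print(f"predicted mean: {mean_remaining:.1f}, individual runs: {samples}")
```

The distribution and its mean are fixed by the inputs, while the individual runs scatter around that mean from one execution to the next, which is the distinction the example draws between what can be predicted and what must be measured.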

The list of experiments that display this sort of quantum weirdness or spookiness is long, and examples such as the ones given are far from exhaustive. This inherently quantum behavior shows up in all sorts of physical systems, both for individual particles and for complex multi-particle systems, under a broad variety of conditions. Although physicists have been able to write down the rules and equations that govern these quantum systems, including the Pauli Exclusion Principle, the Heisenberg Uncertainty Principle, the Schrödinger equation and many more, the fact is that only a set of possible conditions and probable outcomes can be predicted in the absence of a definitive measurement.

Somehow, in quantum systems, the act of making a measurement appears to be a very important factor, flying in the face of the idea that we inhabited a sort of “independent reality” that was agnostic about the role of the observer or the one doing the measuring. Properties of a physical system that had previously been treated as intrinsic and immutable — like position, momentum, angular momentum, or even the energy of a particle — were suddenly knowable only up to a certain precision. Moreover, the act of measuring those properties, which requires an interaction with another quantum of some type, fundamentally changes, or perhaps even determines, those values, while simultaneously increasing the indeterminism and/or uncertainties of other measurable parameters.

Although this explanation was and still is counterintuitive, it seems to be a requirement to accurately describe our Universe.

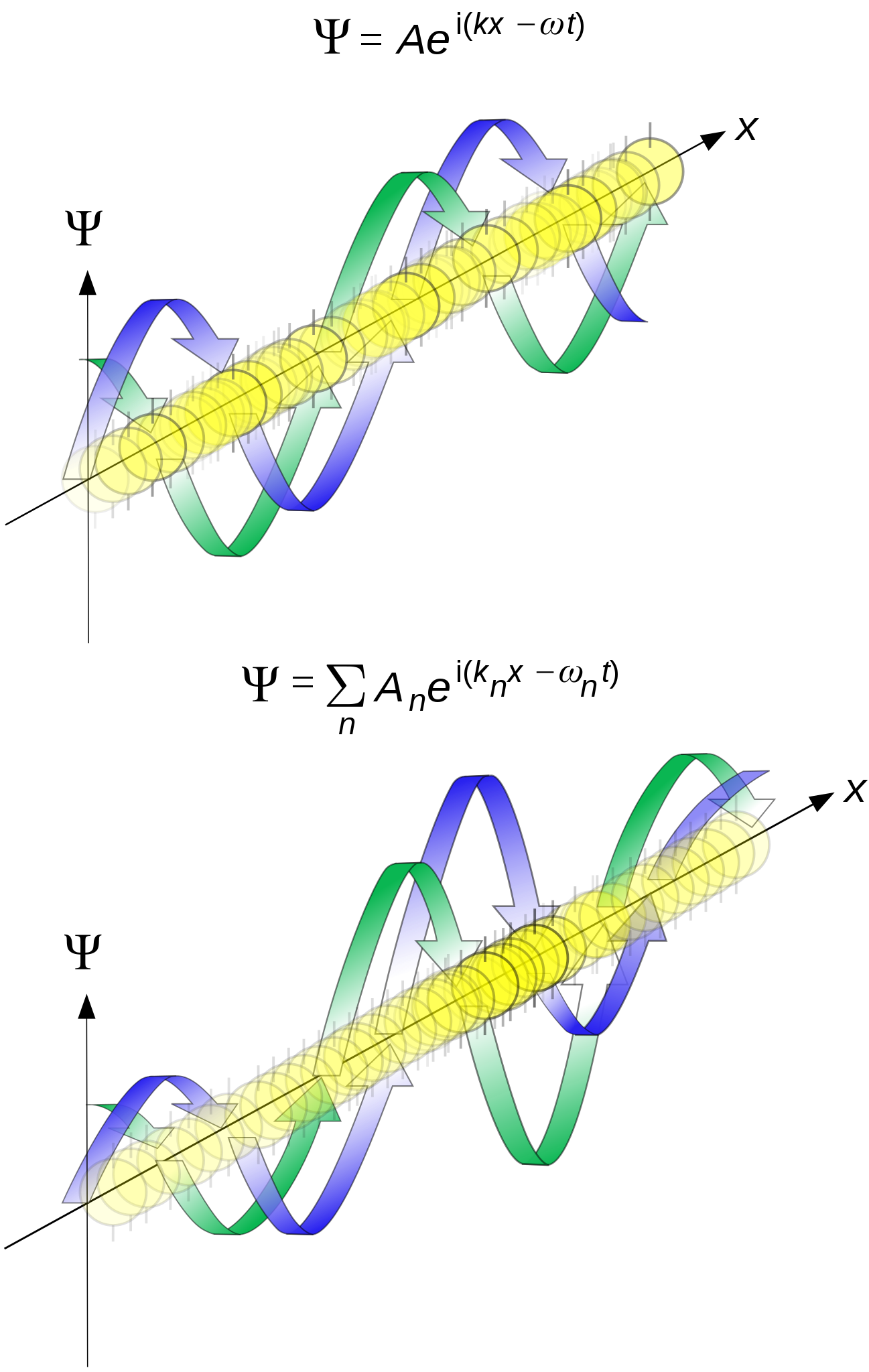

The central idea behind what we now call the Copenhagen interpretation of quantum mechanics, which is the standard way that physics students are taught to conceive of the quantum Universe, is that nothing is certain until that critical moment where an observation occurs. Everything that cannot be exactly calculated from what’s already known is describable by some sort of wavefunction — a wave that encodes a continuum of more likely and less likely possible outcomes — until the critical moment when a measurement is made. At that precise instant, the wavefunction description gets replaced by a single, now-determined reality: what some describe as a collapse of the wavefunction. In other words:

- every quantum in existence behaves as a wave while it propagates through a physical system,

- but behaves as a particle when it interacts, or experiences an event, with an observer or a measurement apparatus.

If this sounds bizarre to you, as though it couldn’t possibly be right, don’t worry: you’re not alone in how you feel. It was this level of weirdness, or “spookiness,” that was so objectionable to many pioneers of the quantum Universe, with Einstein himself being perhaps the most famous among them. Einstein was aghast at the idea that somehow reality was random in nature, and that effects could occur (like one member of a pair of identical atoms decaying while the other did not) without an identifiable cause. In many ways, this position was summed up in a famous remark attributed to Einstein, “God does not play dice with the Universe.” While Einstein himself never came up with a viable alternative to the Copenhagen interpretation, one of his (and Bohr’s) contemporaries had an idea for how reality could work instead: Louis de Broglie.

The idea that quanta could behave like waves began with light: first put forth by Huygens in the 1600s and then revived by Thomas Young’s double slit experiment at the dawn of the 19th century. Max Planck’s work a century later showed that light must indeed be quantized, however, and Einstein’s subsequent work on the photoelectric effect demonstrated light’s particle-like behavior as well. Roughly two decades later, in 1924, Louis de Broglie gained fame for showing that it wasn’t simply light that possessed a dual nature of being simultaneously wave-like and particle-like, but that matter itself, and specifically electrons, must possess a wave-like nature when subjected to the proper quantum conditions. His formula for calculating the wavelength of “matter waves” is still widely used today, and to de Broglie, it implied that the dual nature of quanta, both wave-like and particle-like, must be taken literally.
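De Broglie’s matter-wave formula relates a particle’s wavelength to its momentum, λ = h / p, where h is Planck’s constant. As a rough illustration, the Python sketch below evaluates it for an electron accelerated from rest through a modest voltage. The function names and the 100-volt example are assumptions made for illustration, and the calculation is non-relativistic.

```python
import math

PLANCK_H = 6.62607015e-34            # Planck constant in J*s (exact SI value)
ELECTRON_MASS = 9.1093837015e-31     # electron mass in kg (CODATA 2018)
ELEMENTARY_CHARGE = 1.602176634e-19  # elementary charge in C (exact SI value)

def de_broglie_wavelength(momentum):
    """Matter wavelength in meters for a momentum given in kg*m/s."""
    return PLANCK_H / momentum

def electron_wavelength_from_voltage(volts):
    """Wavelength of an electron accelerated from rest through `volts` (non-relativistic)."""
    kinetic_energy = ELEMENTARY_CHARGE * volts               # energy gained, in joules
    momentum = math.sqrt(2 * ELECTRON_MASS * kinetic_energy)  # from E = p^2 / (2m)
    return de_broglie_wavelength(momentum)

# Illustrative case: a 100-volt electron has a wavelength of about 1.23e-10 m,
# comparable to the spacing between atoms in a crystal.
print(f"{electron_wavelength_from_voltage(100.0):.3e} m")
```

Because the wavelength shrinks as momentum grows, wave-like behavior is easy to see for slow electrons and effectively invisible for everyday objects.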

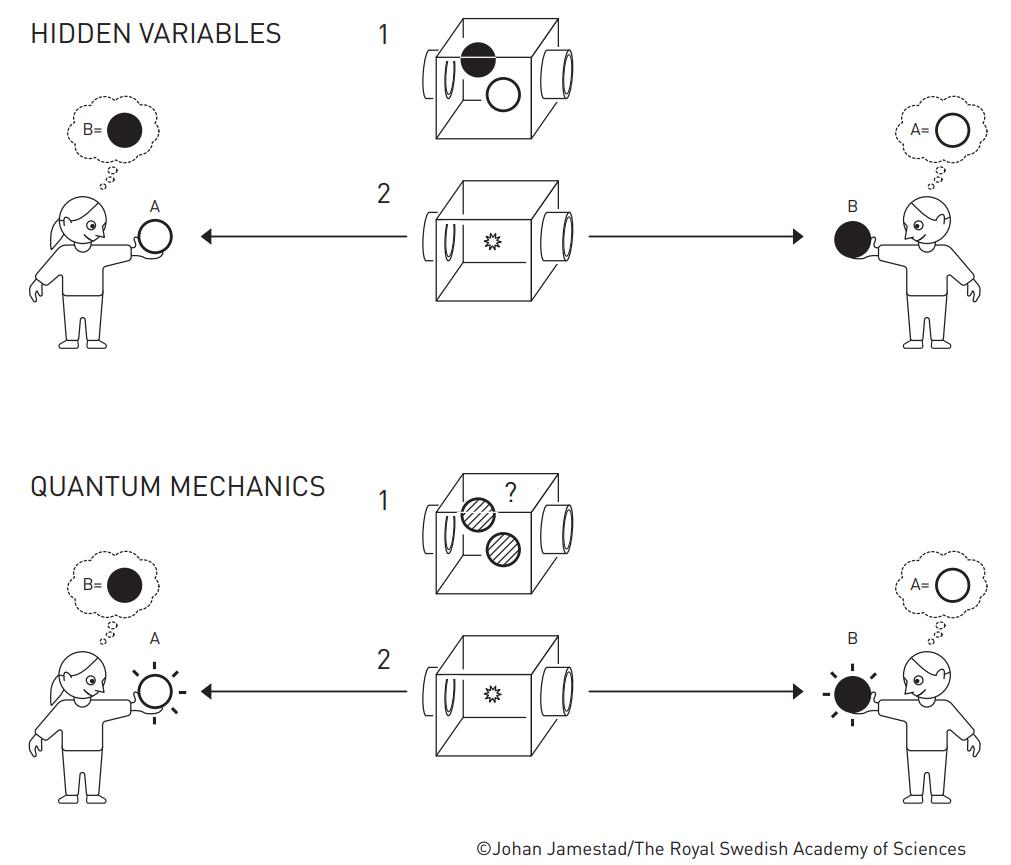

In de Broglie’s version of quantum physics, there were always concrete particles, with definite (but not always well-measured) positions, that were guided through space by these quantum mechanical wavefunctions, which he called “pilot waves.” Although de Broglie’s version of quantum physics couldn’t describe systems with more than one particle, and suffered from the challenge of not being able to measure or identify precisely what was “physical” about the pilot wave, it represented an interesting alternative to the Copenhagen interpretation: one where “pilot waves” guided particles through space, but where those particles always objectively existed as definite particles, whose properties were then revealed by measurement.

Instead of being governed by the weird rules of quantum spookiness, de Broglie envisioned an underlying, hidden reality that was completely deterministic. Many of de Broglie’s ideas were expanded upon by other researchers, who all sought to discover a less “spooky” alternative to the quantum reality that generations of students, with no superior alternative, had been compelled to accept.

Perhaps the most famous extension came courtesy of the physicist David Bohm, working on similar problems a generation later. In the 1950s, Bohm developed his own interpretation of quantum physics that’s still considered today: the de Broglie-Bohm (or pilot wave) theory. The underlying wave equation, in this idea, is the same conventional Schrödinger equation used in the Copenhagen interpretation. However, there’s also a guiding equation that takes the wavefunction as its input and determines the velocity of each particle, so that properties like the position of a particle follow from the relationship of the Schrödinger equation’s wavefunction to that guiding equation. It’s an explicitly causal, deterministic interpretation of quantum mechanics, with the addition of a guiding equation to it.
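For reference, the two equations described in the paragraph above are usually written as follows in standard presentations of the theory, here for a single spinless particle of mass m in a potential V. The symbols are conventional notation rather than anything defined in this article: ψ is the wavefunction and Q(t) is the particle’s actual position.

```latex
% Schrödinger equation: evolves the wavefunction, exactly as in standard quantum mechanics
i\hbar \frac{\partial \psi(\mathbf{x}, t)}{\partial t}
  = -\frac{\hbar^{2}}{2m} \nabla^{2} \psi(\mathbf{x}, t) + V(\mathbf{x})\, \psi(\mathbf{x}, t)

% Guiding equation: the wavefunction sets the particle's velocity at its current position
\frac{d\mathbf{Q}(t)}{dt}
  = \frac{\hbar}{m} \operatorname{Im}\!\left( \frac{\nabla \psi}{\psi} \right)\bigg|_{\mathbf{x} = \mathbf{Q}(t)}
```

The first equation contains no reference to Q, which is why the particle never acts back on the wave. The second is first-order in time, so it fixes a velocity rather than an acceleration.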

Conceptually, however, it gives up something that the Copenhagen interpretation preserves: locality, or the idea that no information-carrying signal can be transmitted from one quantum to another at speeds exceeding the speed of light. Even if you accept non-locality, however, this pilot wave interpretation possesses its own difficulties. For one, you can’t recover classical dynamics using this pilot wave theory; Newton’s F = ma doesn’t describe the dynamics of a particle at all. In fact, the particle itself doesn’t affect the wavefunction in any way; rather, the wavefunction describes the velocity field of each particle or system of particles, and you have to apply the appropriate “guiding equation” to find out just where the particle is and how its motion is affected by whatever is exerting a force on it.

In many ways, pilot wave theory was more of an interesting counterexample to the assertion that “no hidden variable theory could reproduce the success of quantum indeterminism.” It could and in fact did, as Bohm’s pilot wave theory illustrated, although there was a cost: the theory demands both a fundamental non-locality and the difficult notion of having to extract physical properties from a guiding equation, whose results are not extractable in a straightforward fashion.

Consider the following example: a particle, like a ball, floating on top of a flowing river. In Newtonian mechanics, what happens to the ball is simple:

- the ball has a mass,

- which means it has an inertia,

- and that means it follows Newton’s first and second laws.

- This object in motion will remain in motion unless acted on by an outside force.

- If it is acted upon by an outside force, it accelerates via Newton’s famous equation, F = ma.

- As the ball travels downstream, the river’s twists and turns will cause the water to flow downstream, but will quickly drive the ball to one bank of the river or the other.

In Newton’s classical picture, the ball always winds up on one side of the river, and inertia is the guiding principle behind the floating ball’s net motion.

But in Bohmian mechanics, the flow of the river determines the evolution of the wavefunction, which should preferentially stay in the center of the river. This shows the conceptual difficulty with pilot wave theory: if you want your particle to ride on the wavefunction like a surfer — as de Broglie originally envisioned — you have to go through a variety of twisted contortions to get back the basic predictions that we’re all familiar with from classical mechanics. Bohmian mechanics does not lead one to recover Newtonian mechanics, even in the classical limit of the theory.

As the perfectly valid Copenhagen interpretation has long demonstrated, however, just because something is counterintuitive or even illogical doesn’t mean it’s incorrect. Physical behavior is often more bizarre than we’d ever expect, and that is why we must always confront our predictions with the harsh reality of experiments. Perhaps, contrary to what we’d intuitively expect, nature really did follow these Bohmian trajectories.

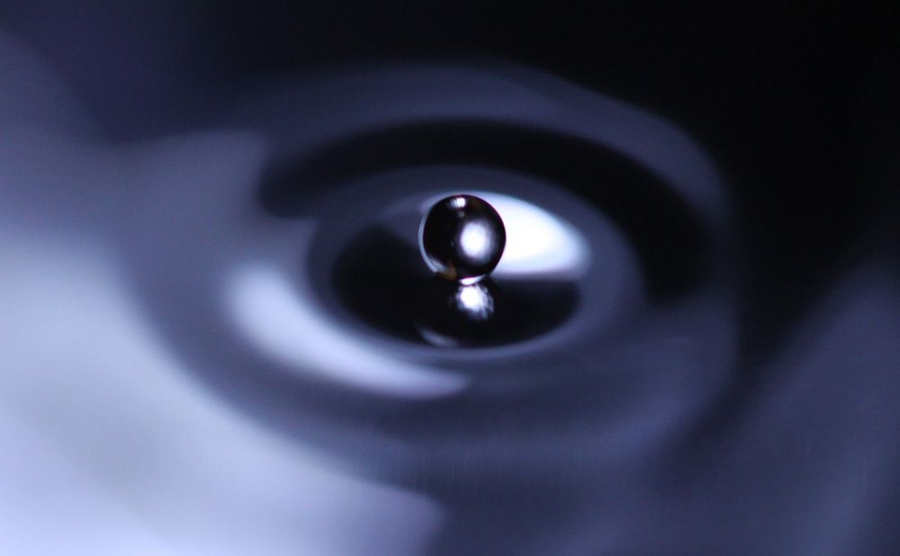

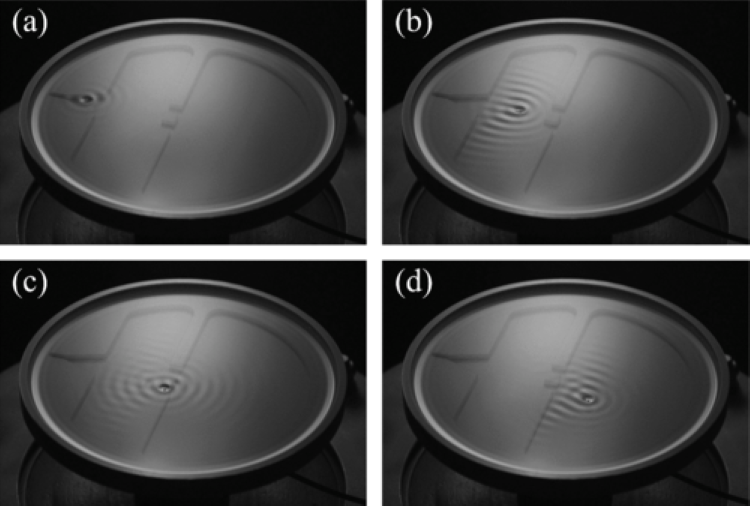

In 2006, physicists Yves Couder and Emmanuel Fort began to bounce an oil droplet atop a vibrating fluid bath made out of that same oil, recreating an analogue of the quantum double-slit experiment. As the wave ripples down the tank and approaches the two slits, the droplet bounces atop the waves and is guided through one slit or the other by the waves. When many droplets were passed through the slits and a statistical pattern emerged, it was found to exactly reproduce the standard predictions of quantum mechanics: the interference pattern that had been known since the time of Thomas Young.

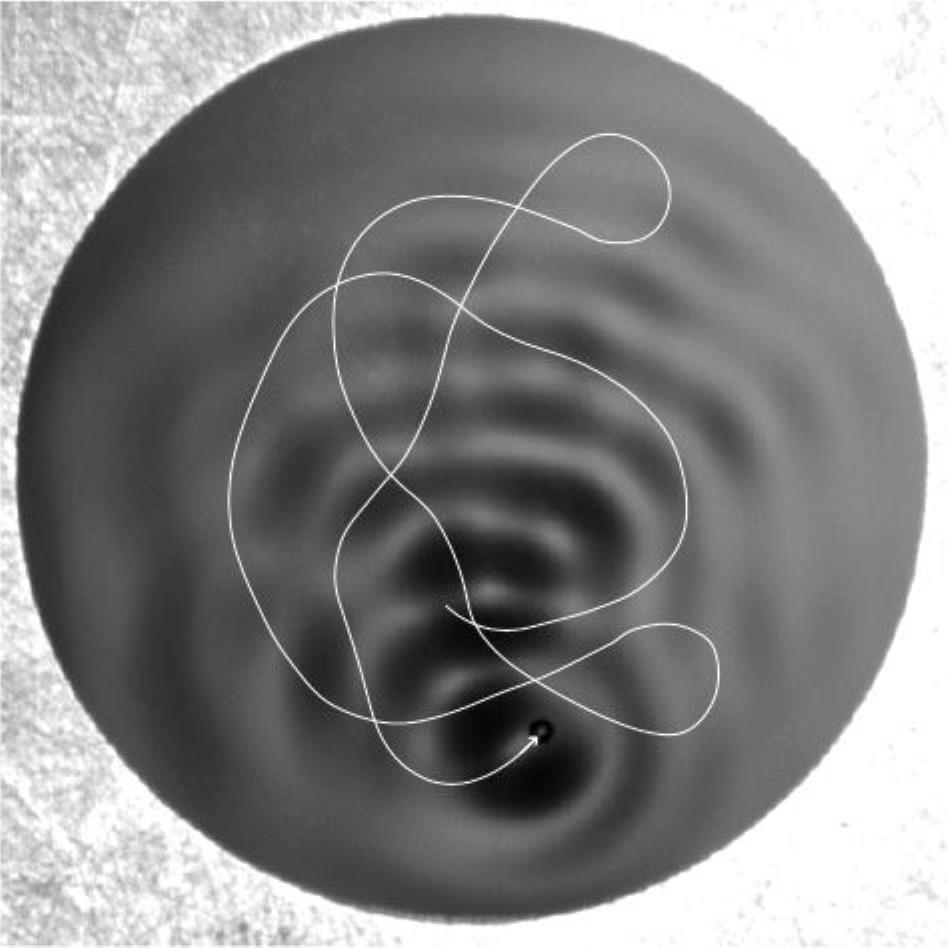

In 2013, an expanded team led by John Bush at MIT leveraged the same technique to test a different quantum system: confining electrons into a circular corral-like area by a ring of ions. To the surprise of many, with an appropriately set up boundary, the underlying wave patterns that are produced are complex, but the trajectories of the bouncing droplets atop them actually follow a pattern determined by the wavelength of the waves, in agreement with the quantum predictions that underlie them.

What appeared to be random, in these experiments, wasn’t truly random at all, but rather provided a thrilling confirmation of the ideas of pilot wave theory.

Or so it seemed. What looked like it could be a shocking revelation suddenly fell apart.

Normally, the double slit experiment only gives you the vaunted interference pattern if you don’t measure which of the two slits the particle passes through. At quantum scales, it’s possible to set up a detector at the site of the slits themselves, measuring which slit each particle goes through. If you do this, your experiment still works, but the interference pattern is destroyed by “forcing” that interaction. You simply wind up with two piles of particles on the other side, with each pile corresponding to particles that simply passed straight through one of the two slits, with no interference effects at all.
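The difference between the two cases comes down to whether amplitudes or probabilities are added. The Python sketch below is a deliberately idealized illustration: it treats each slit as a point source of equal strength and ignores slit width, so the measured case comes out as a flat, fringe-free distribution rather than two distinct piles. The wavelength, slit separation, screen distance, and function names are all assumed for illustration.

```python
import cmath
import math

# Illustrative setup (assumed values): 500 nm wavelength, slits 0.1 mm apart, screen 1 m away.
WAVELENGTH = 500e-9
SLIT_SEPARATION = 1e-4
SCREEN_DISTANCE = 1.0

def slit_amplitudes(x):
    """Unit-magnitude complex amplitudes reaching screen position x from each slit."""
    k = 2 * math.pi / WAVELENGTH
    r1 = math.hypot(SCREEN_DISTANCE, x - SLIT_SEPARATION / 2)  # path length from slit 1
    r2 = math.hypot(SCREEN_DISTANCE, x + SLIT_SEPARATION / 2)  # path length from slit 2
    return cmath.exp(1j * k * r1), cmath.exp(1j * k * r2)

def intensity_unobserved(x):
    """No which-slit measurement: amplitudes add first, then the sum is squared."""
    a1, a2 = slit_amplitudes(x)
    return abs(a1 + a2) ** 2

def intensity_observed(x):
    """Which-slit measurement: the two probabilities add, with no cross term."""
    a1, a2 = slit_amplitudes(x)
    return abs(a1) ** 2 + abs(a2) ** 2

fringe_spacing = WAVELENGTH * SCREEN_DISTANCE / SLIT_SEPARATION  # small-angle estimate
for x in (0.0, fringe_spacing / 2, fringe_spacing):
    print(f"x = {x:.2e} m  unobserved = {intensity_unobserved(x):.3f}  "
          f"observed = {intensity_observed(x):.3f}")
```

In the unobserved case the intensity swings between bright and dark fringes as the two path lengths go in and out of step. In the observed case the cross term is gone and the value is the same at every position.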

In Couder and Fort’s original 2006 experiment, they had sent 75 separate bouncing droplets through the slits where they could watch which slit each droplet passed through, while also recording the pattern of where they landed on the screen, finding the needed interference pattern. If this held up, it would seem to confirm that, perhaps, there really could be these hidden variables underlying what appeared to be an indeterminate quantum reality.

And then the reproduction attempts came. Lo and behold, as soon as each droplet singled out a path through one of the two slits, the paths that the droplets took departed from what quantum mechanics predicts. There was no interference pattern, and it was found that the original work contained a few mistakes that were corrected in the reproduction attempt. As the eight authors of the 2015 study refuting Couder and Fort’s work conclude:

“We show that the ensuing particle-wave dynamics can capture some characteristics of quantum mechanics such as orbital quantization. However, the particle-wave dynamics can not reproduce quantum mechanics in general, and we show that the single-particle statistics for our model in a double-slit experiment with an additional splitter plate differs qualitatively from that of quantum mechanics.”

Of course, arguing over whether reality is:

- truly acausal,

- truly indeterminate,

- or whether it truly lacks any type of hidden variables imaginable,

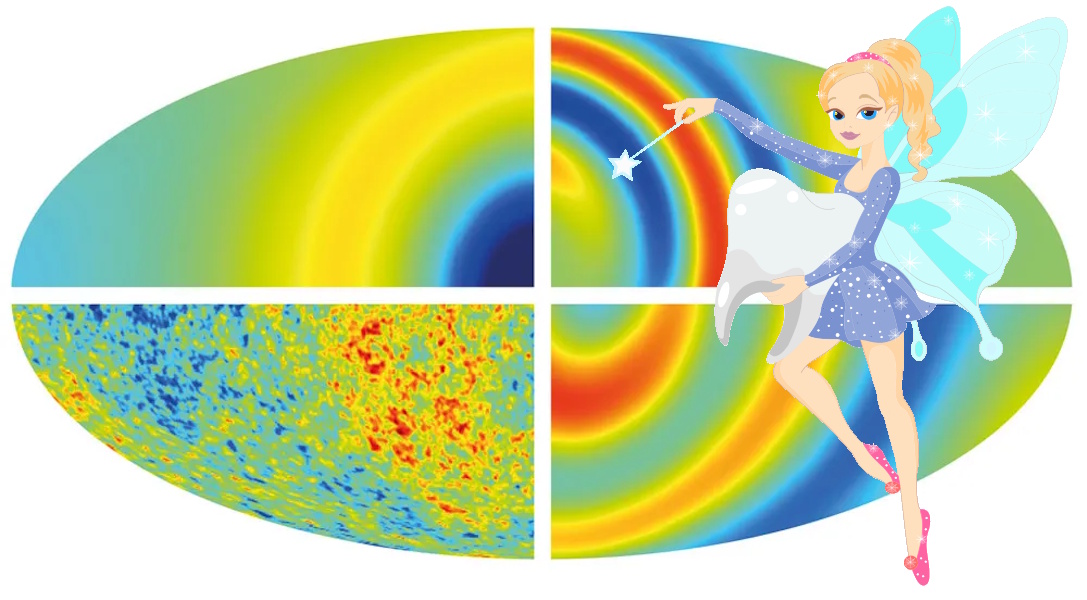

is tantamount to playing a never-ending game of whack-a-mole. Any specific claim that can be tested can always be ruled out, but it can be replaced with a more complex, hitherto untestable claim that still purports to have whatever aspects (or combination of aspects) one desires. Still, when assembling our picture of reality, it’s important to make sure that we don’t ideologically choose one that conflicts with the experiments we can perform, and that we accept reality as it is. It’s for these inescapable reasons that work on quantum entanglement, arguably the “spookiest” component of quantum physics, was awarded the 2022 Nobel Prize in physics.

We may not have the ultimate, complete, full answer to the question of how the Universe works, but we have knocked down a tremendous number of pretenders from the throne. If your predictions disagree with experiments, your theory is wrong, no matter how popular, compelling, or beautiful it happens to be. We have not yet ruled out all possible incarnations of Bohmian mechanics, or of more general pilot wave theories, or of all possible quantum mechanics interpretations that possess hidden variables. In fact, it may not ever be possible to do so. However, every attempt to construct a theory that agrees with experiment requires some level of quantum spookiness that simply cannot be done away with.

The least spooky alternative, as of 2015, has been thoroughly falsified, as a single, concrete, deterministic set of equations simply fails to describe the reality we observe and measure.