The chance to text with the dead via AI is creepy or wonderful

The human mind isn’t good at grasping certain very big things, perhaps chief among them infinity. The idea of infinite space is a head-scratcher, but the idea of infinite time can be truly mind-busting, especially when one is trying to get a grip on death, whether one’s own or someone else’s. What does it mean to be gone forever, as many of us believe will be our fate? When someone dies, it’s this aspect of their passing that seems so unreal, almost impossible. How can someone who was just here be so completely gone? And forever? Reaching enough acceptance to return to life, and even to imagine joy again, is often a painful, protracted process. Different cultures through the ages have developed their own ways of grieving, and now technologists are working on a new one for our time, or for a little ways off in the future: griefbots.

Chatting with the dead

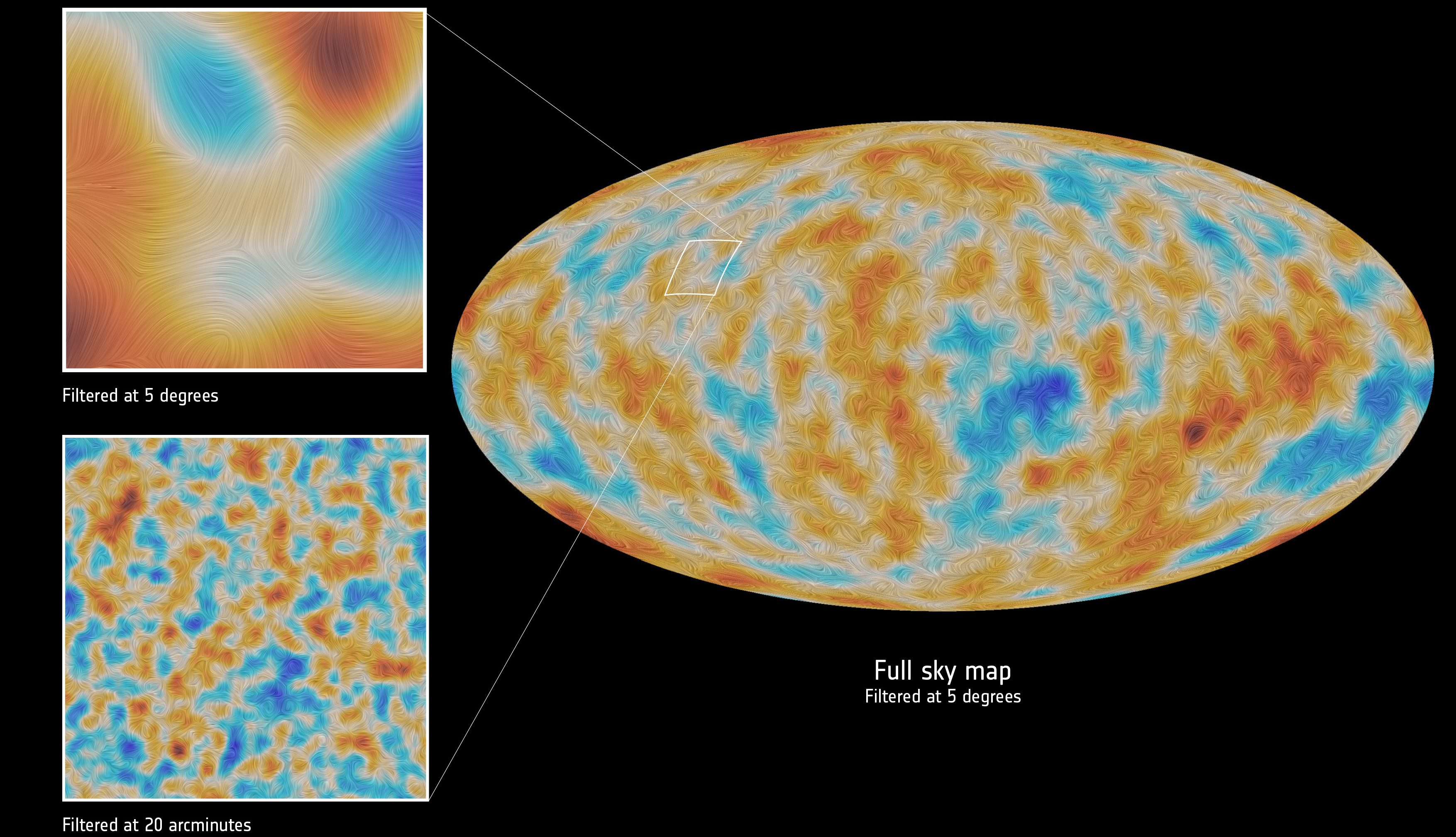

We spend so much of our time feeding social media and each other with our thoughts, photos, favorite memes, and so on, that each of us leaves quite the digital footprint behind. This is especially true of Millennials. It all amounts to a lot of information about us — or at least who we pretend to be on social media. A number of AI experts and programmers think it’s nearly enough info to construct digital replicas of ourselves who can convincingly chat with our friends and loved ones after we’re gone.

Hossein Rahnama of Ryerson University says the sweet spot for having enough data to really pull this off is around a zettabyte, telling Quartz, “Fifty or 60 years from now, Millennials will have reached a point in their lives where they each will have collected zettabytes [1 trillion gigabytes] of data, which is just what is needed to create a digital version of yourself.” How much of yourself such a version should reveal is another question, though, with Rahnama cautioning that a zettabyte is also just about the threshold at which a simulation would be capable of revealing a bit too much: “We have to consider an individual’s privacy when it comes to passing on virtual profiles. You should be able to own your data and only pass it along to people you trust, so allowing people to engage with their own ancestors would be likely.”

Still, there are a number of programmers working on developing believable chatbots, motivated not just by their own desire to continue to interact with the departed, but also to share those lost with others who never got to know them alive, as Muhammad Ahmad is doing for his children by programming a bot of his late father.

The idea is for the bots to do more than merely play back — in text, audio, or synthesized speech — things once written or said by the deceased. Instead, the goal is for machine-learning algorithms to learn, from the data left behind, what the person was like and how they communicated, in order to create a digital avatar that comes as close to the original person as possible. These avatars could even conceivably keep up with current events, allowing the living to have brand-new conversations that they theoretically might have had with the dead. Of course, there are hazards to this. As Pamela Rutledge of the Media Psychology Research Center tells The Daily Beast, “What you don’t want is people taking advice from a bot.”

We’re still a ways off from truly convincing bots, though, and even when such a thing is possible, the AI “person” will not be the original one, no matter how seemingly identical it is. Should a time arrive when we physically merge with machines, of course, things may not be so simple.

Escaping grief?

There’s some disagreement as to whether AI simulacra would make grieving less painful or make it worse by prolonging the recovery process. Certainly, an AI replica of a loved one does nothing to help us answer the big questions mentioned above: the originals are as gone as they’ll ever be.

Some grief experts feel that we’d be better off facing directly the pain and confusion that accompany a death. Psychologist Ernest Becker, the author of The Denial of Death, is concerned that an AI doppelgänger could keep a mourner from turning an important corner in grieving, saying, “People will always continue to mourn, but at a certain point people remember instead of relive.” Getting too attached to what he calls a “projection of memories” could leave one stuck in a sad, irresolvable place.

Others suggest a bot could help a survivor transition gently into acceptance, essentially weaning them from the departed’s presence in their life. But would you be comforted by a little more time with something like the person who’s just died? And would you find a bot texting you “I’m alright” or “I miss you, too” comforting, or a stabbing reminder of your loss? Eugenia Kuyda, a pioneer in this field, likes it when the bot she developed of her late friend Roman Mazurenko reassures her.

Some say that a griefbot could help survivors through their loss by giving them a comforting way to share their pain. Grief counselor Andrea Warnick tells Quartz, “In modern society, many people are hesitant to talk about someone who has died for fear of upsetting those who are grieving — so perhaps the importance of continuing to share stories and advice from someone who has died is something that we humans can learn from chatbots.”

In Season 2 of the Netflix series Black Mirror, a new widow invites first a chatbot simulation of her husband, then a voice bot, and then finally a full-sized — and apparently fully functional, ahem — android into her life. “Be Right Back” is a haunting episode that captures what this experience may indeed someday be like. Rutledge’s concern is pretty much what happens to the widow: “If you have a lot of contact with something… [but] you don’t have this awareness that [para-social relationships] can happen, you might end up with a relationship that actually keeps you from grieving the loss of that person.”

The missing piece of the puzzle

Here’s a problem. As much as their traits, manner, and sense of humor (to name just three attributes), what we miss about the dead is how they made us feel because of how they felt about us. For all our genius at data collection, machine learning, and AI, until AI can truly feel, any such simulated relationship will emanate from an ice-cold core, empty of the most important ingredient in a close relationship: love.