Top 20 greatest inventions of all time

Technology is a core component of the human experience. We have been creating tools to help us tame the physical world since the early days of our species.

Any attempt to count down the most important technological inventions is certainly debatable, but here are some major advancements that should probably be on any such list (in chronological order):

1. FIRE – it can be argued that fire was discovered rather than invented. Certainly, early humans observed incidents of fire, but it wasn’t until they figured out how to control it and produce it themselves that humans could really make use of everything this new tool had to offer. The earliest use of fire may date back as far as two million years, while widespread use of this technology has been dated to about 125,000 years ago. Fire gave us warmth and protection, and it led to a host of other key inventions and skills, like cooking. The ability to cook helped us get the nutrients to support our expanding brains, giving us a major advantage over other primates.

2. WHEEL – the wheel was invented by Mesopotamians around 3500 B.C. for making pottery. About 300 years later, wheels were put on chariots, and the rest is history. Wheels are ubiquitous in our everyday life, facilitating our transportation and commerce.

Circa 2000 B.C.: Oxen drawing an ancient Egyptian two-wheeled chariot. (Photo by Hulton Archive/Getty Images)

3. NAIL – The earliest known use of this very simple but super-useful metal fastener dates back to Ancient Egypt, about 3400 B.C. If you are more partial to screws, they’ve been around since the time of the Ancient Greeks (1st or 2nd century B.C.).

4. OPTICAL LENSES – from glasses to microscopes and telescopes, optical lenses have greatly expanded the possibilities of our vision. They have a long history: they were first developed by the ancient Egyptians and Mesopotamians, with key theories of light and vision contributed by the Ancient Greeks. Optical lenses were also key components of the media technologies behind photography, film and television.

5. COMPASS – this navigational device has been a major force in human exploration. The earliest compasses were made of lodestone in China between 300 and 200 B.C.

Circa 1121 B.C.: An ancient Chinese magnetic chariot. The figure, pointing to the south, moves in accordance with the principle of the magnetic compass. (Photo by Hulton Archive/Getty Images)

6. PAPER – invented in China around 100 B.C., paper has been indispensable in allowing us to write down and share our ideas.

7. GUNPOWDER – this chemical explosive, invented in China in the 9th century, has been a major factor in military technology (and, by extension, in wars that changed the course of human history).

8. PRINTING PRESS – invented in 1439 by the German Johannes Gutenberg, this device in many ways laid the foundation for our modern age. It mechanized the transfer of ink from movable type to paper. This revolutionized the spread of knowledge and religion, because books had previously been copied by hand (often by monks).

1511, Printing Press, from the title page of ‘Hegesippus’ printed by Jodocus Badius Ascensius in Paris. (Photo by Hulton Archive/Getty Images)

9. ELECTRICITY – harnessing electricity is a process to which many bright minds have contributed over thousands of years, going all the way back to Ancient Egypt and to Ancient Greece, where Thales of Miletus conducted the earliest research into the phenomenon. The 18th-century American Renaissance man Benjamin Franklin is generally credited with significantly furthering our understanding of electricity, though not with discovering it. It’s hard to overestimate how important electricity has become to humanity: it runs most of our gadgets and shapes our way of life. The light bulb, a separate invention attributed to Thomas Edison in 1879, was a major extension of our ability to harness electricity. It has profoundly changed the way we live and work, as well as the look and functioning of our cities.

10. STEAM ENGINE – invented between 1763 and 1775 by Scottish inventor James Watt (who built upon the ideas of previous steam engine attempts like the 1712 Newcomen engine), the steam engine powered trains, ships, factories and the Industrial Revolution as a whole.

Circa 1830: An early locomotive hauling freight. (Photo by Hulton Archive/Getty Images)

11. INTERNAL COMBUSTION ENGINE – created by Belgian engineer Etienne Lenoir in 1859 and improved by Germany’s Nikolaus Otto in 1876, this 19th-century engine, which converts chemical energy into mechanical energy, overtook the steam engine and powers modern cars and planes. Elon Musk’s electric car company Tesla, among others, is currently trying to revolutionize technology in this arena once again.

12. TELEPHONE – although he was not the only one working on this kind of tech, Scottish-born inventor Alexander Graham Bell got the first patent for an electric telephone in 1876. Certainly, this instrument has revolutionized our ability to communicate.

13. VACCINATION – while sometimes controversial, the practice of vaccination is responsible for eradicating diseases and extending the human lifespan. The first vaccine (for smallpox) was developed by Edward Jenner in 1796. A rabies vaccine was developed in 1885 by the French chemist and biologist Louis Pasteur, who is credited with making vaccination the major part of medicine that it is today. Pasteur also invented pasteurization, the food safety process that bears his name.

14. CARS – cars completely changed the way we travel, as well as the design of our cities, and thrust the concept of the assembly line into the mainstream. They were invented in their modern form in the late 19th century by a number of individuals, with special credit going to the German Karl Benz for creating what’s considered the first practical motorcar in 1885.

Karl Benz (in light suit) on a trip with his family with one of his first cars, which was built in 1893 and powered by a single cylinder, 3 h.p. engine. His friend Theodor von Liebig is in the Viktoria. (Photo by Hulton Archive/Getty Images)

15. AIRPLANE – invented in 1903 by the American Wright brothers, planes brought the world closer together, allowing us to travel quickly over great distances. This technology has broadened minds through enormous cultural exchange, but it also escalated the reach of the world wars that would soon break out, and the severity of every war thereafter.

16. PENICILLIN – discovered by the Scottish scientist Alexander Fleming in 1928, this drug transformed medicine by its ability to cure infectious bacterial diseases. It began the era of antibiotics.

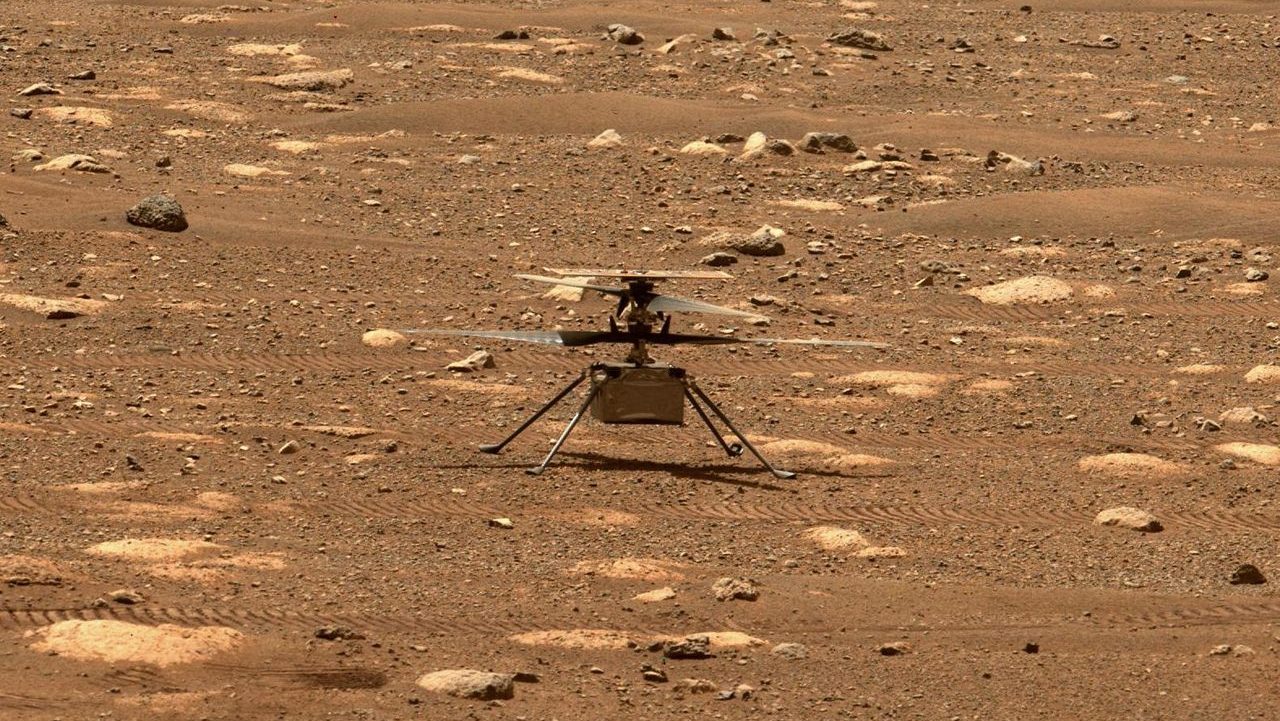

17. ROCKETS – while the invention of early rockets is credited to the Ancient Chinese, the modern rocket is a 20th-century contribution to humanity, responsible for transforming military capabilities and making human space exploration possible.

18. NUCLEAR FISSION – this process of splitting atoms to release a tremendous amount of energy led to the creation of nuclear reactors and atomic bombs. It was the culmination of work by a number of prominent (mostly Nobel Prize-winning) 20th-century scientists, but the discovery of nuclear fission itself is generally credited to the Germans Otto Hahn and Fritz Strassmann, working with the Austrians Lise Meitner and Otto Frisch.

19. SEMICONDUCTORS – they are at the foundation of electronic devices and the modern Digital Age. Semiconductor devices, mostly made of silicon, are behind the nickname “Silicon Valley”, home to many of today’s major U.S. computing companies. The first transistor, the key semiconductor device, was demonstrated in 1947 by America’s John Bardeen, Walter Brattain and William Shockley of Bell Labs.

20. PERSONAL COMPUTER – invented in the 1970s, personal computers greatly expanded human capabilities. Your smartphone is far more powerful, but one of the earliest PCs was introduced in 1974 by Micro Instrumentation and Telemetry Systems (MITS) as a mail-order computer kit called the Altair. From there, companies like Apple, Microsoft and IBM went on to redefine personal computing.

(BONUS) 21. THE INTERNET – the worldwide network of computers (which you used to find this article) has been in development since the 1960s, when it took the shape of the U.S. Defense Department’s ARPANET, but the Internet as we know it today is an even more modern invention. The World Wide Web, created around 1990 by England’s Tim Berners-Lee, is responsible for transforming our communication, commerce, entertainment, politics, you name it.

Cover photo: a drawing by Leonardo da Vinci