Brain-machine interfaces, or BMIs, are fascinating. “Brain machine interfaces are devices that allow a person or animal to control a computer with their mind. In humans, that could be controlling a robotic arm to pick up a cup of water, or moving a cursor on a computer to type a message using the mind,” says Kelly Clancy of the Sainsbury Wellcome Centre for Neural Circuits and Behaviour (SWC). She’s the co-author of a paper recently published in Neuron.

The potential of BMIs as tools for people with conditions that deprive them of the use of their limbs is obvious. Beyond that, BMIs may one day allow us to control and operate all sorts of devices using only our minds. It’s easy to imagine, for example, surgeries performed by doctors interfacing directly with ultra-precise robotic appendages.

While there has already been some success with BMIs, there is a lot that’s not yet known about how they work. Researchers at SWC have developed a BMI for mice that lets them track the manner in which it interacts with the mouse’s brain as the subject moves an onscreen cursor using only its thoughts. It also gives them a peek into how those brains function.

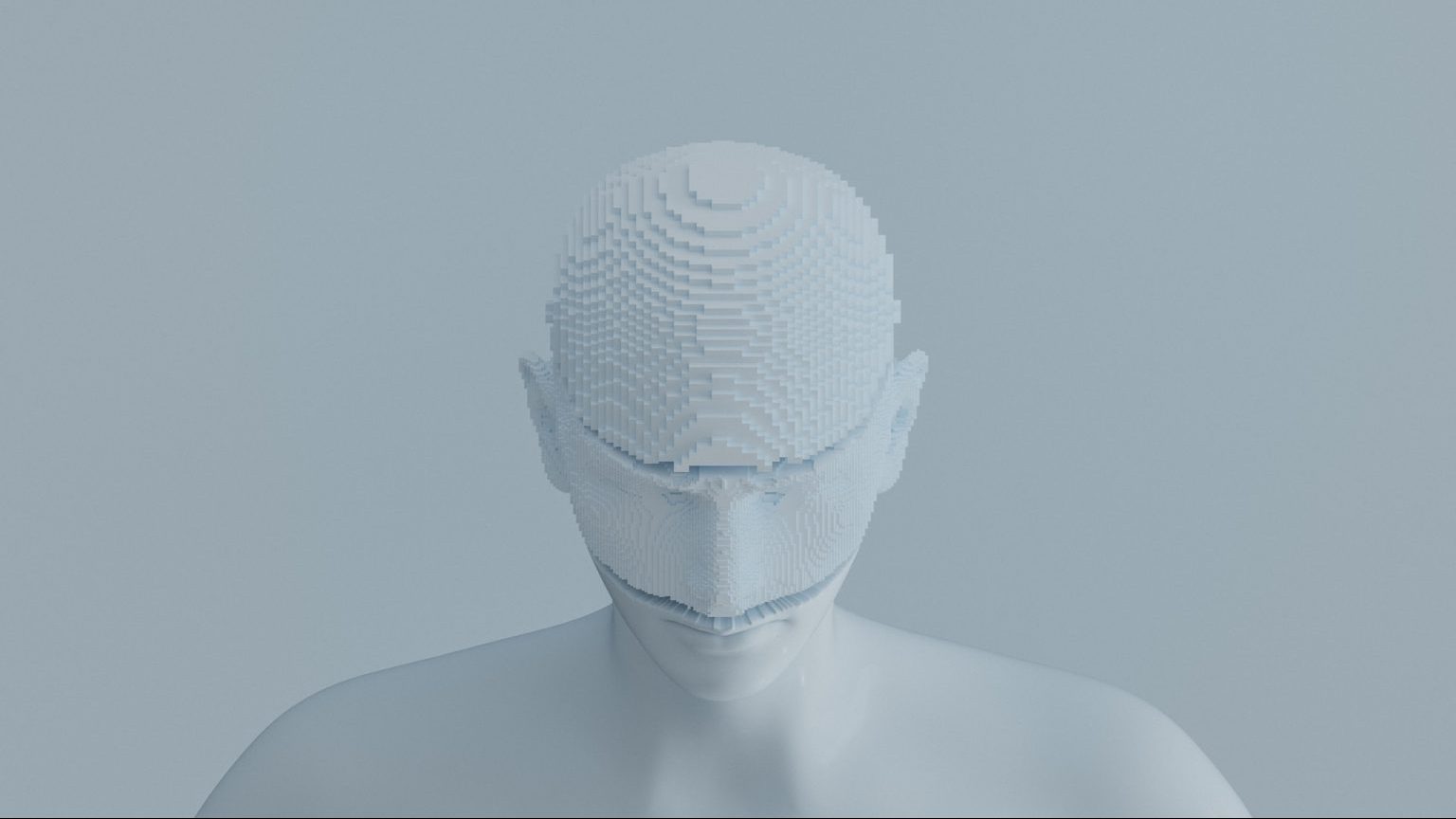

Credit: Kurashova/Adobe Stock

According to co-author Tom Mrsic-Flogel, also of SWC, “Right now, BMIs tend to be difficult for humans to use and it takes a long time to learn how to control a robotic arm for example. Once we understand the neural circuits supporting how intentional control is learned, which this work is starting to elucidate, we will hopefully be able to make it easier for people to use BMIs.”

Beyond improving our understanding of BMIs themselves, their use in research may help scientists unravel at least one mystery of the mind. Working out how objects are represented in the brain has been tricky. When test subjects interact with objects, scans simultaneously show signals representing that interaction, which is a motor process, and thoughts about the object. It’s hard to tell which is which. When interaction with an object is strictly virtual, as when using a BMI, the motor signals are removed.

Credit: Clancy, et al./Neuron

In the study, BMIs were affixed to the skulls of seven female mice, which were subsequently trained to use the interface to move an onscreen cursor to a target location in order to receive a reward. The researchers’ aim was to investigate intentionality.

As the mice moused, the researchers used wide-field brain imaging to observe their entire dorsal cortices. This overview allowed the scientists to see which regions of the brain exhibited activity.

Not surprisingly, visual cortical areas were active. More of a surprise was the involvement of the anteromedial cortex, the rodent equivalent of the human parietal cortex, which is associated with intention.

“Researchers have been studying the parietal cortex in humans for a long time,” says Clancy. “However, we weren’t necessarily expecting this area to pop out in our unbiased screen of the mouse brain. There seems to be something special about parietal cortex as it sits between sensory and motor areas in the brain and may act as a way station between them.”

That “station” may enable a continual back-and-forth between the regions. Says the paper, “Thus, animals learning neuroprosthetic control of external objects must engage in continuous self-monitoring to assess the contingency between their neural activity and its outcome, preventing them from executing a habitual or fixed motor pattern, and encouraging animals to learn arbitrary new sensorimotor mappings on the fly.”

The parietal cortex is an excellent candidate for this job, say the authors: “Parietal activity has been found to be involved in representing task rules, the value of competing actions, and visually guided real-time motor plan updating, both in humans and non-human primates.”