How to Think About the Federal “Nudge Squad”

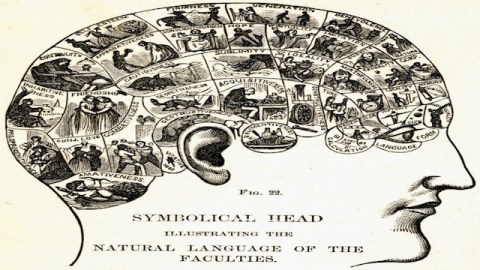

Fox News this week has the not very surprising news that the Obama Administration is looking for social scientists to help form a “Behavioral Insights Team” that, like the group of the same name in Britain, will develop policies that work with, rather than against, the mind’s unconscious and irrational components. The British version proudly cops to the name “nudge squad,” since a lot of what it does to help people “make better choices for themselves” involves “nudging” people to what’s right by subtly shaping their choices, rather than explicitly commanding or persuading them.

I say this news isn’t surprising because the President is known to be interested in the behavioral insights that are relentlessly replacing “Rational Economic Man” as the model for how people think and act. And the “nudge” concept, of course, comes from the 2008 book co-authored by the economist Richard Thaler and the legal scholar Cass Sunstein, who until recently directed the White House Office of Information and Regulatory Affairs, which reviews and modifies all proposed federal regulations before they go into effect.

It’s no surprise that Obama’s government would move to harness behavioral insights to shape policy. While some academics fight a rearguard action against this behavioral turn, governments (and corporations) are buying. The story is just one more reminder that for governments and corporations the Age of Reason—attempts to get people to do things on the basis of explicit arguments (“everyone has to pay their taxes or society can’t function” or “saving for retirement is in your best interests”)—is giving way to the Age of Nudge (“we’d just like to tell you that most of your neighbors pay their taxes on time” or “you’re automatically enrolled in our 401(k) program, now let’s get you a stapler”).

The nudge people by now have developed a pretty smooth patter about how their approach is simultaneously great (fearing nudges, Thaler has said, is like fearing good directions when you’re lost) and no big deal (after all, government already tries to get you to pay your taxes, conserve energy, plan for retirement and so on—these are just improvements to good ol’ familiar policies). Meanwhile some on the right are against it because, you know, live free or die, man. And also because Obama seems to be for it.

Neither pro-nudge boosterism nor fretting about imaginary jackbooted thugs, though, really captures what’s going on as the Nudge Era dawns. The jackboots critique fails because it depends on the notion that people were all rationally, consciously and autonomously making good choices for themselves until the Nudgers came along with a sneaky plan to force the population to drink smaller sodas and buy energy-saving lightbulbs. But companies, too, use nudges: social media marketing, sales techniques that drive people to buy stuff they don’t need, advertising that suggests drinking Pepsi makes life more fun, food chemistry that finds the precise flavors and “mouthfeel” to make potato chips hard to resist. When I see a soda delivery truck here in New York with a sign that says “Don’t Let Bureaucrats Tell You What Size Beverage To Buy,” I always imagine a smooth and well-trained voice adding, “Let Us Do It.” A government that provides a countervailing nudge isn’t acting like a dictatorship. You could argue it’s fulfilling a key mission: to protect people.

That said, it’s also important to note that the usual defenses of “nudgeocracy” rather understate how different it is from what has come before, and how much disruption it will create.

The first problem: Who decides what is the better choice?

The key here is that notion of helping people to make better choices for themselves. The alternatives to the behavioral approach, Thaler told Fox’s Maxim Lott, “are hunches, tradition, and ideology (either left or right).”

That sounds about right. But the thing about traditions and ideologies is that they are explicit in their claims. Their definitions of the “better” choice are proclaimed in their sacred texts and in the words of their true believers. The same, I believe, goes for the “hunches” of policy-makers, as these tend to be based on the rational-man assumptions of classical economics: the assumptions that say you stop a behavior by taxing or fining it, or by telling people why it is bad for them. Such actions may be less effective at instilling change than a well-designed nudge, but at least the people on the receiving end know what beliefs those policies represent.

Nudges, by definition, are indirect, and often are supposed to be unnoticed. Being threatened with a penalty tells you that the government is out to get you to pay your taxes on time. Being told the neighbors paid theirs may get you to comply sooner, but it doesn’t provide a moment of clarity, in which you can say to yourself, oh, those people, over there, in government, want me to do that.

This is not easy to square with the ideal of democratic accountability. When I am threatened or cajoled, I can see who is cajoling and why—which means I know whom to punish at the ballot box if I don’t like the policy. When I am nudged, I don’t know whom to complain to. I might not even know I have been nudged.

The second problem: How do we know one choice is better?

One of the most frequently cited examples of a successful nudge is the rejiggering of 401(k) plan procedures from “opt-in” (fill out all this paperwork to get into the plan) to “opt-out” (you are automatically in the plan unless you make an effort to get out). Participation in a plan goes way up when it moves from opt-in to opt-out, and that’s seen as a good thing, because saving money for retirement is a classic “better choice.”

Yet I find myself wondering about all those people who put money into their 401(k) plans before the Great Recession. They might well have been better off not doing so. How many were nudged into not thinking about it?

The point here is that when behavioral economists say that people aren’t rational, they’re saying people don’t think like economists. There is a mismatch between our innate orientation (use resources now, the future is far) and economic reality (the future is coming, like it or not). But it may be the case that thinking like an economist will turn out not to be “the best choice.”

I don’t mean to suggest here that it’s a bad idea to save for retirement in the abstract. But in the real world, it may turn out in the future, as it has in the past, that our financial system isn’t as robust and reliable as economists think. In which case it may be that our innate instincts (which tell us to spend now, because the future is unknowable) are a better fit with reality than our economists’ assumptions (which tell us to trust the financial system with our savings).

Any policy that is based on science will struggle with how to cope when science changes. It’s a constant problem in public health (eggs are bad! oh, wait, not really; margarine is better than butter! oh, never mind). And often what happens is that even as the science changes, the policy stays in place. Remember, for example, that advice about making sure you drink eight glasses of water a day? It’s nonsense, but it’s still circulating.

When an explicit policy is based on theories that are later proved false, it can be attacked as outmoded and wrong. But if the policy is a nudge—a practically invisible bit of “choice architecture” getting people to prefer one choice without their knowing why—it could well be hard to notice, much less change. And this is especially true in circumstances where the political debate itself is subject to nudges. After all, it is not just high-minded policymakers who are interested in behavioral techniques. Political activists, interested in swaying public opinion, are interested too.

I don’t suggest here that nudgy approaches ought to be abandoned. This blog’s main subject is the inevitability of behavioral approaches to politics, markets, education, medicine and other domains. What I am saying, rather, is that the Nudge Era is a bigger deal than its proponents make out, and that we need to see it—and debate it—for what it is.

Follow me on Twitter: @davidberreby