Kevin Zollman: Game theory can be applied to scientific understanding in a lot of different ways. One of the interesting things about contemporary science is that it's done by these large groups of people who are interacting with one another, so science isn't just the lone scientist in his lab removed from everyone else, but rather it's teams working together, sometimes in competition with other teams who are trying to beat them out to make a big discovery, so it's become much more like a kind of economic interaction. These scientists are striving for credit from their peers, for grants from federal agencies, and so a lot of the decisions that they make are strategic in nature: they're trying to decide what things will get funded, what strategies are most likely to lead to a scientific advance. How can they do things so as to get a leg up on their competition and also get the acclaim of their peers?

Game theory helps us to understand how the incentives that scientists face in trying to get credit, in trying to get grants and trying to get acclaim might affect the decisions that they make. And sometimes there are cases where scientists striving to get acclaim can actually make science worse, because a scientist might commit fraud if he thinks he can get away with it, or a scientist might rush a result out of the door even though she's not completely sure that it's correct in order to beat the competition. So those of us who use game theory in order to try and understand science apply it in order to understand how those incentives that scientists face might eventually impact their ability to produce truths and useful information that we as a society can go on, or how those incentives might encourage them to do things that are harmful to the progress of science by either publishing things that are wrong or fraudulent or even withholding information that would be valuable.

This is one of the big problems that a lot of people have identified with the way that scientific incentives work right now. Scientists get credit for publication, and they're encouraged to publish exciting new findings that demonstrate some new phenomenon that we have never seen before. But when a scientist fails to find something, that's informative too: the fact that I was unable to reproduce a result of another scientist shows that maybe that was an error. But the way that the system is set up right now I wouldn't get credit for publishing what's called a null result, a finding where I didn't discover something that somebody else had claimed to discover. So as a result, when we look at the scientific results that show up in the journals, it turns out that they're skewed towards positive findings and against null results. A lot of different people have suggested that we need to change the way that scientists are incentivized by rewarding scientists more both for publishing null results and for trying to replicate the results of others. In particular, in fields like psychology and medicine, places where there are a lot of findings and lots of things to look at, people really think that we might want to change the incentives a little bit in order to encourage more duplication of effort, to make sure that a kind of exciting but probably wrong result doesn't end up going unchallenged in the literature.

Traditionally, until very recently, scientists were mostly looking for the acclaim of their peers. You succeeded in science when you got the acclaim of other scientists in your field or maybe some scientists outside your field. But now, as science journalism grows, the public is starting to get interested in science, and so scientists are starting to be rewarded for doing things that the public is interested in. This has a good side and a bad side. The good side is it means that scientists are driven to do research that has public impact, that people are going to find useful and interesting. And that helps to encourage scientists not just to pursue some esoteric question that maybe is completely irrelevant to people's everyday life. The bad side is that now scientists are encouraged to do things that will make a big splash, get an article in the New York Times or make them go viral on the Internet, but not necessarily things that are good science. And so one of the things that people are concerned with is that this new incentive, being popular with the public, can make scientists shift towards research that's likely to be exciting but not necessarily true. Because the public doesn't find out when ten years later we decide that the research was wrong, they just remember the research that they read about in the New York Times ten years before. And so the danger is that lots of misinformation can get spread when scientists are rewarded for making a big splash.

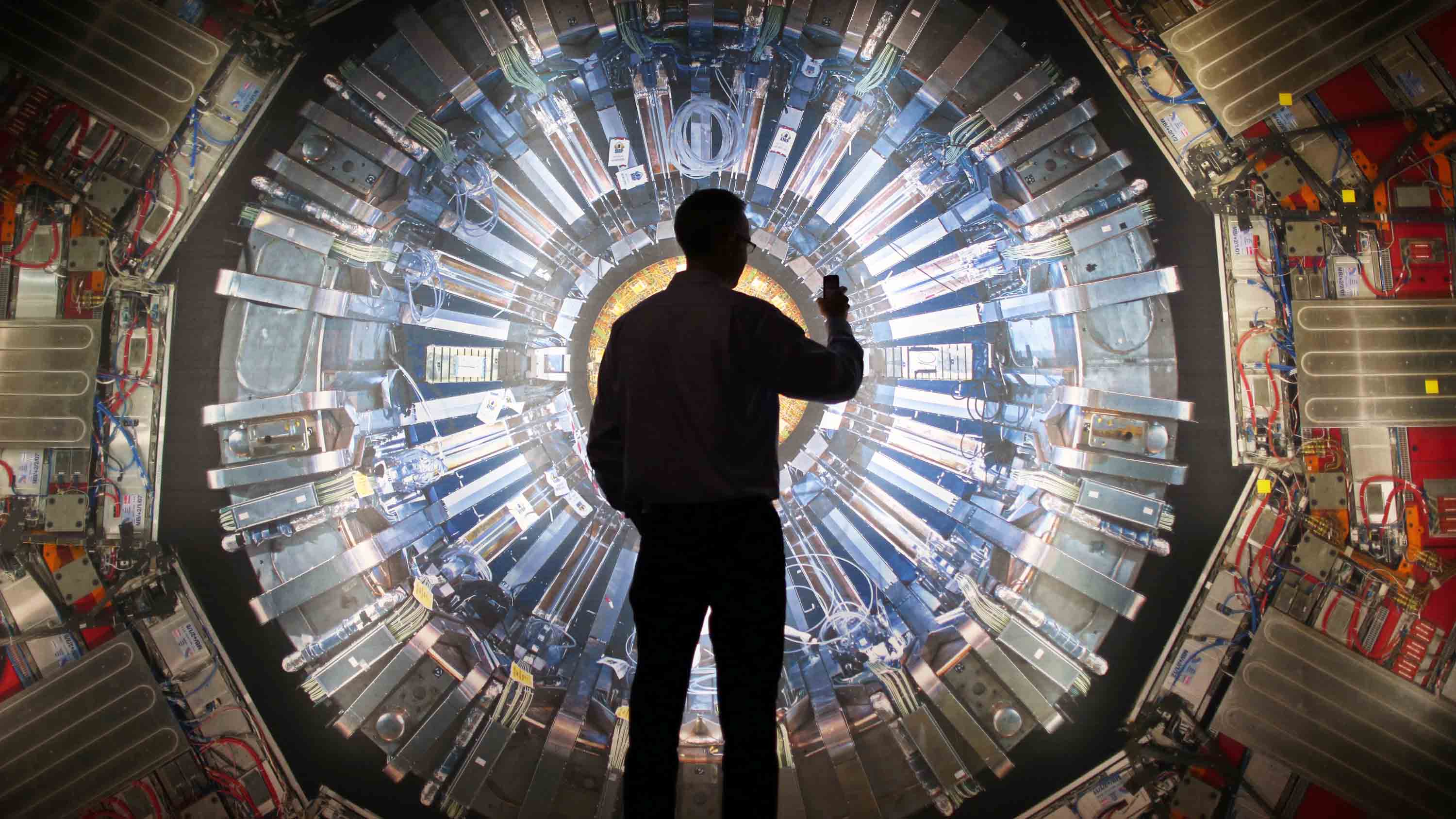

One of the things that we've really discovered through this use of game theory in studying science is that it's really very complicated. Scientists striving to get credit from their peers might initially seem counterproductive; you think, "Well, scientists should care about truth and nothing more." But instead what's actually going on is that sometimes the desire for credit can actually do things that are really good for science. Scientists are encouraged to publish their results quickly rather than sort of keeping them secret until they're absolutely positively sure, and that can actually be beneficial because then other scientists can build on it, can use it in their discoveries. All of that is the good side. There's also a bad side, which is that striving for credit can sometimes encourage scientists to skew towards one project or another and not distribute their labor as a community of scientists over a bunch of different projects. One thing that's really important in science is that scientists work on different projects, because we don't know which project is going to end up working out. There are many different ways to, say, detect gravitational waves, or many different ways to try and discover a subatomic particle, and what we want is scientists to distribute themselves so that we have groups working on each different project. The desire for credit can sometimes encourage them to be too homogeneous, to all jump on board with what looks like the best project and not distribute themselves in a good or useful way across all the different projects.

Nobody really has a good idea yet of how to balance these, because there's a good side and a bad side. We don't want scientists to be completely divorced from the world, but on the other hand the danger is that if we put too much emphasis on public reward and the acclaim of the public, then we have scientists just generating false but exciting results over and over and over again in an attempt to get popular acclaim.