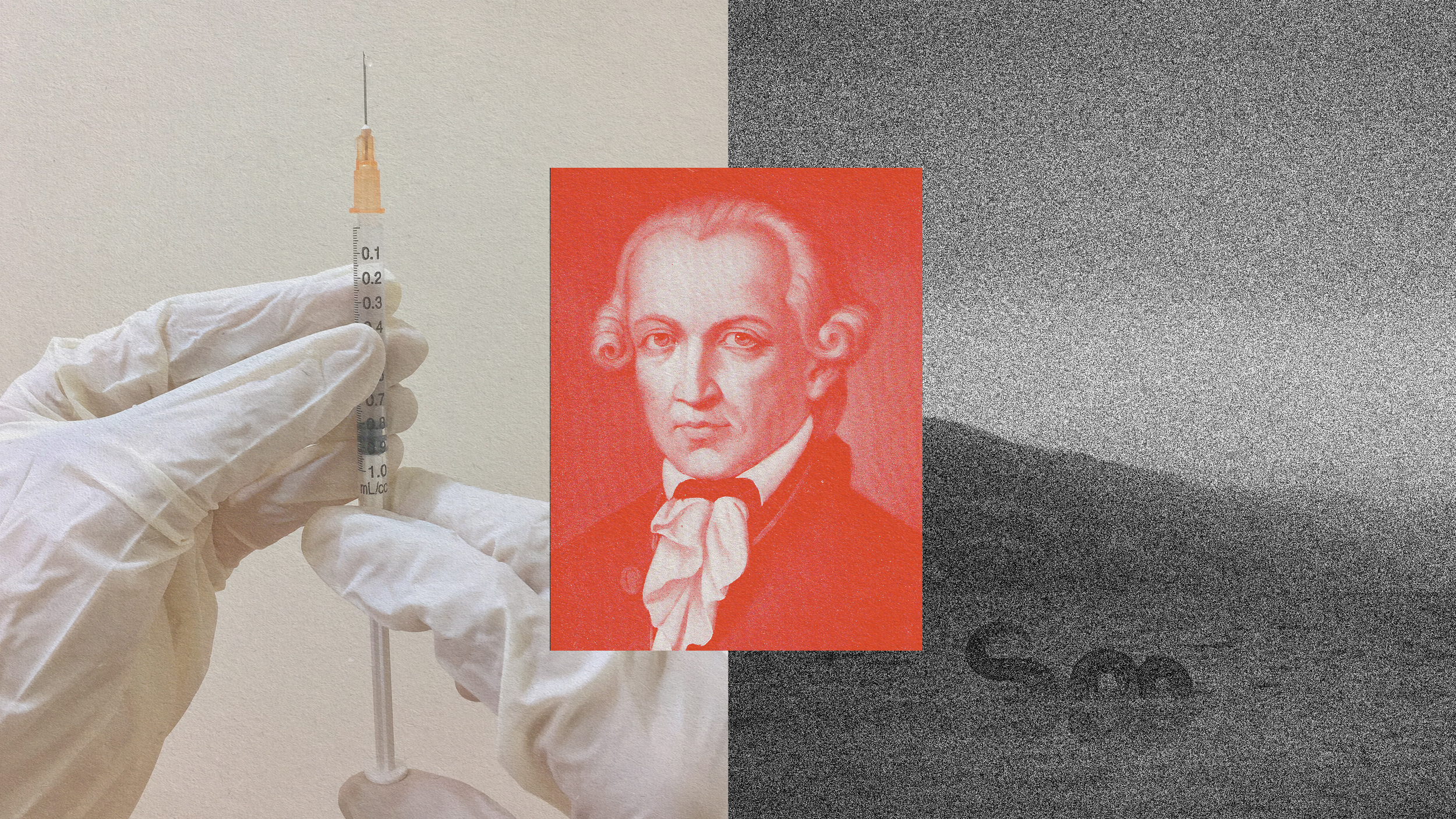

It is really, really fun when Harvard professors play ‘What If…’. This is a regular part of Glenn Cohen’s work as a law professor specializing in health policy, bioethics and biotech. Invoking the Jurassic Park rule of ‘just because you can, doesn’t mean you should’ and imagining all eventualities is how Cohen explores the fascinating and sometimes twisted intricacies driving our ethical dilemmas.

It’s particularly fun on the topic of chimeras, which, broadly defined, are the product of research that mixes human and animal genetic material. Cohen lists some real cases as examples: mixing human brain cells into mouse brains, or humanizing a mouse’s immune system to carry out studies that yield results relevant to humans. Former U.S. senator Jesse Helms had a pig valve placed in his heart many years ago. To make a more imaginative leap, what about a gorilla with a human face? How would we feel about that?

Cohen discusses a few key ethical arguments surrounding our hesitancy to mix interspecies genetic material, then moves on to a hypothetical concerning Sesame Street’s Big Bird, and concludes that in this ethical area, decisions can really only be made on a case-by-case basis. Some changes are simply more unethical or sociologically disturbing to humans than others, and here Cohen cites his friend and colleague at Stanford Law School, Professor Henry T. Greely, who sums it up nicely in three words: brains, balls, and faces. Those are the things that make us most uncomfortable in genetic mixing, perhaps because they are definitive markers of what it is to be human.

The jury might be out on chimeras, but at least you have some insight into how a bioethicist’s mind works.

Glenn Cohen’s book is *Patients with Passports: Medical Tourism, Law, and Ethics*.

Glenn Cohen: So a recent set of controversies has to do with federal funding of research that mixes human and animal genetic material, sometimes called chimeras, though the category is actually broader. Again, the method is to think about a large number of cases, and it's helpful to think about very different cases. So, to use some real cases, imagine you put human brain cells, human brain stem cells at the embryonic stage, into a mouse to create a mouse with a humanized brain. It wouldn't be a human brain; it isn't exactly the same. It's much smaller, for example, but it has humanized elements. Another example: imagine you took a gorilla, left the gorilla exactly as it is, but were able to generate a human-looking face. So, a gorilla with a human face: how would we think about that entity?

Third example: a humanized immune system. We take a mouse (we have these at Harvard, for example) and create a humanized immune system in it in order to test drugs, for HIV, for example. So not the brain, just the immune system, is very humanlike. And the last example is actually valve replacements, heart valve replacements. Jesse Helms, a senator, had a pig valve put in years ago, so there's a piece of an animal in him. So these are four examples of different kinds of mixing, and the question is which are okay and which are not okay. Can we generate some principles? What might be wrong with mixing human and animal parts? One thing that might be wrong is that we think it will confuse the boundaries between humans and animals; right now we have a pretty clear distinction. Many people love their dogs and their cats like members of the family, but they are still able to say, this is not a member of my family; this is not someone who has the same rights as my family members. In a world where we had much more of a continuum between animals and human beings, those distinctions would become difficult. Now, just because they become difficult doesn't mean that it's wrong; it just poses a new problem for us. Maybe it would illustrate a problem we should be thinking about altogether. So I'm not particularly sympathetic to that argument.

A different argument, though, is to say that human beings are particular kinds of things with particular kinds of capacities, and there's a dignity to being a human being. If we were to mix enough animal material into a human being, what we would have would not be something new but a human being that could not flourish as a human being. It would be an undignified human being, a kind of entity that is really unable to experience what it is to be human. Now again, you might push on this and say, well, yes, that's true: they would not be a human being, and they would not necessarily have all the capacities of a human being. So imagine having some of the capacities of a human being but being stuck in a rat's body, for example. Sure, there would be ways in which you would not flourish as a human being, but why not think of yourself as flourishing as a new kind of entity?

And in particular, you might actually think there is an obligation to create some kinds of chimeras. Take, for example, Big Bird from Sesame Street. It sounds like a silly example, but it's a good one, right? Big Bird talks. Big Bird has friends. Big Bird goes to school (he's been at school a long time on Sesame Street, I guess), and he seems to have a pretty good life. Imagine if we could take regular birds and turn them into Big Birds by doing something to them. Would we think of that as improving a little bird's life, or would we think of it as hurting a human being's life through this mixture? Hard questions, but it is at least possible that we would think we're doing animals a favor by doing this.

Another answer might say it depends a lot on the specifics of the case. There are changes we could make to human beings by mixing in animal DNA that might make them better, and there are changes that might make them worse, including worse from a moral perspective. For example, to use an example from the literature, suppose we could give human beings night vision, so they could see at night like some animals, by mixing in a little animal DNA. You might think that would be great: we could do more search and rescue, we'd be better drivers, and there would be fewer fatalities. On the other hand, if the result was to produce human beings with much stronger aggression or violence, or claws or something like that, you might think that's worse because we're going to do more harm. That would suggest that the question of whether we ought to have chimeras, and what kind, can only be answered in a particularistic way, by thinking about each particular case.

I will say, and this is referencing some work by my friend Hank Greely at Stanford, that there are particular kinds of changes which, from a sociological perspective, seem to bother us more. He describes them as brains, balls, and faces. Brains: it turns out we're very disturbed by the idea of human brains or humanized brains in animals, much more disturbed by the humanized brain in mice than by the humanized immune system in mice, for example. The next is balls. We tend to be very nervous when we think about the idea (and this is kind of crazy and out there) that you could create an animal whose gonads, whose reproductive system, was human, so that you'd have animals mating and producing human beings and animals. That's the kind of thing that I think disturbs a lot of people as an idea. And the last is faces. The idea of animals with human faces, for example, I think just disturbs a lot of people. You might say a face is a face, but it's a marker of human beings and of the way we relate to each other, and I think there's just a strong sociological pushback against that.