Vivek Wadhwa: Innovation Optimist vs. Innovation Pessimist

If you’re anything like Vivek Wadhwa, you grew up watching Star Trek and cultivating a positive vision for the future of humanity. It wasn’t just the replicators and transporters that embodied Gene Roddenberry’s future society. What was truly special was that the civilization presented in the show had employed technological innovation to solve humanity’s problems. Gone were racism, classism, sexism, and internal strife. In their place: an insatiable thirst for truth and exploration.

So, as Wadhwa explains in today’s featured Big Think interview, he grew awfully pessimistic when he reached adulthood and found that humanity hadn’t made much progress in vanquishing our universal problems: poverty, hunger, social disorder, war, pollution, etc. Yet today, Wadhwa considers himself among the most optimistic tech academics out there. He explains why in the video below:

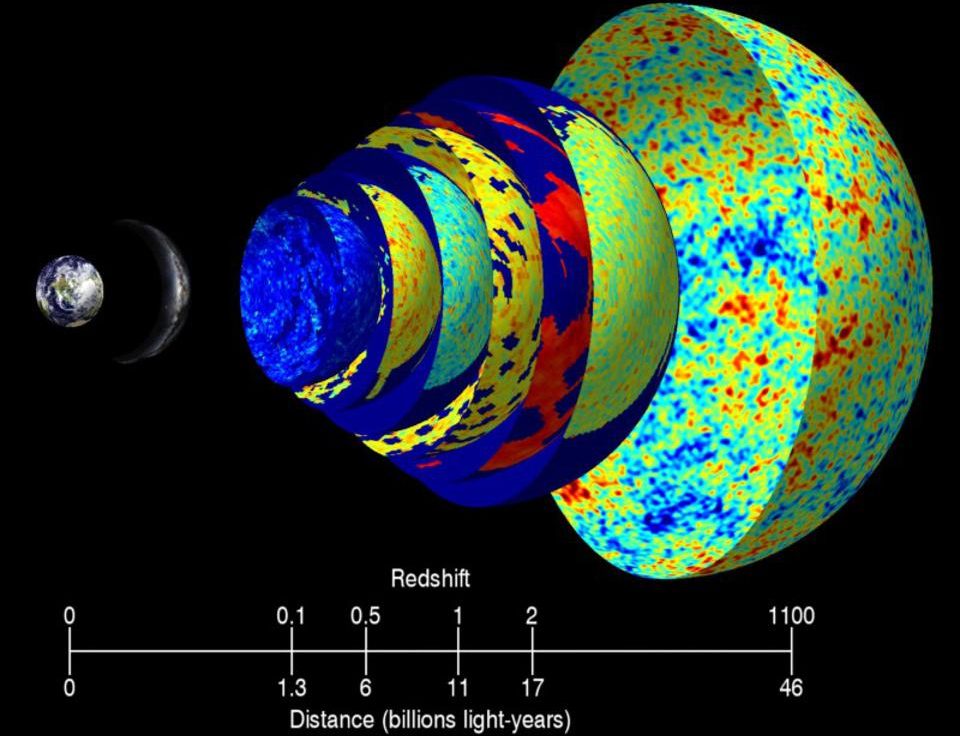

Wadhwa cites as the source of this optimism his time studying with Peter Diamandis and Ray Kurzweil at Singularity University:

"I started learning about the fact that computing is moving exponentially and it's causing many other fields to go exponential. We all know about Moore's Law and we've seen how computers advance in capabilities. Indeed our smartphones are more powerful than the greatest supercomputers of yesteryear. The same advances are now happening in 3-D printing, in artificial intelligence, robotics, synthetic biology, and in medicine."

Wadhwa says that, at the pace we're going, by 2030 we could be living in an age of unlimited energy, unlimited food, 3D-printed meat, major advances in healthcare, and clean electric cars. At the same time, he still holds a number of apprehensions and fears with regard to "the dark side" of technology:

"We could be creating killer viruses; that with all this automation with 3-D printing and robotics we're headed into an era when we won't need human beings doing manufacturing. With AI based physicians we won't need as many doctors. We won't need supermarket clerks. We won't need people doing delivery. We won't need truck drivers. We won't need taxi drivers. We're headed into a jobless future. It's almost certain that automation now takes away more jobs than it creates."

Assuming social structure doesn't undergo a massive change, such a level of unemployment will undoubtedly lead to unrest. Do we have a contingency plan for this byproduct of innovation? Likely not, considering governments and legislators are always several steps behind new technologies:

"The laws can't keep up because laws are based on - laws are essentially codified ethics. That we develop a consensus as a society about what's good and what's bad and then it becomes what's right and what's wrong, and then it becomes what's legal and what's illegal. That's the way the progression goes. On most of those technologies we haven't decided what's good or bad. Is it good to have drones delivering our goods?"

Sure, it's convenient to have robots deliver our stuff. But how do we regulate them? Is it okay for them to have cameras? How about weapons? Are the drones going to be used to make society better, or to help make small numbers of people very rich at the expense of others? The two aren't necessarily mutually exclusive, but a servant cannot serve two masters:

"We can make the world, the Star Trek utopia we dreamed about, or we can make it a Mad Max madhouse and be killing each other and destroy humanity. It's really up to us. So this is why I encourage students to now start learning ethics and values and to focus on bettering the world because it's really up to us what we do with it."

Wadhwa's message here is that our future could be extremely bright, as long as we're cautious and thoughtful about how we implement our innovations.