Why great AI produces lazy humans

- Researchers ran an experiment in which one group of consultants worked with the assistance of AI and another group did work the standard way.

- The results showed that the AI-assisted group outperformed the no-AI group in almost every measure of performance.

- However, the AI-assisted group also tended to over-rely on the computer systems, opening up the possibility of errors slipping into their work.

It is one thing to theoretically analyze the impact of AI on jobs, but another to test it. I have been working on doing that, along with a team of researchers, including the Harvard social scientists Fabrizio Dell’Acqua, Edward McFowland III, and Karim Lakhani, as well as Hila Lifshitz-Assaf from Warwick Business School and Katherine Kellogg of MIT. We had the help of Boston Consulting Group (BCG), one of the world’s top management consulting organizations, which ran the study, and nearly eight hundred consultants who took part in the experiments.

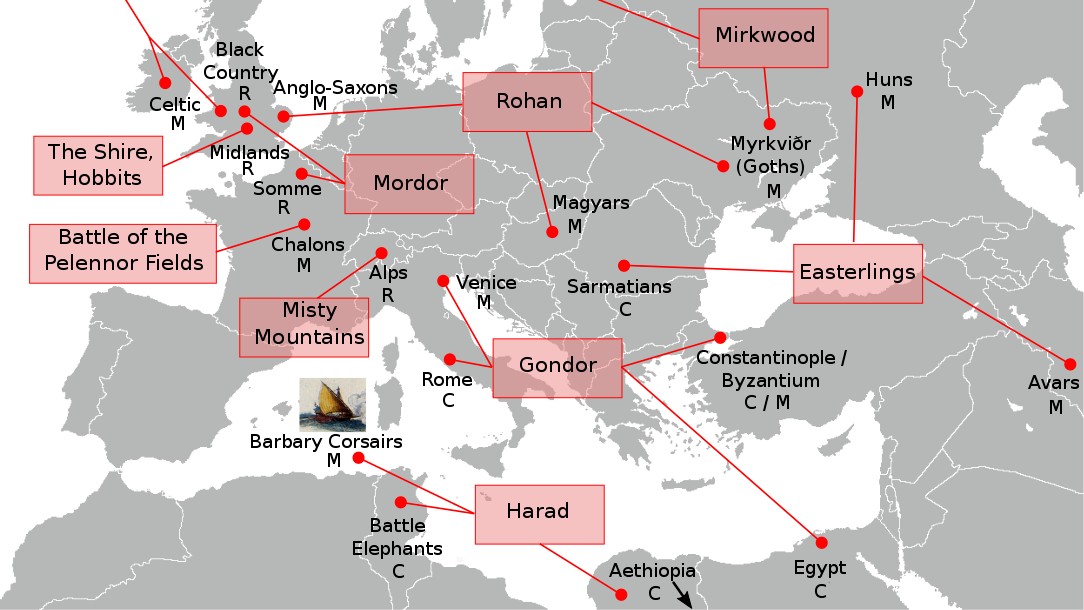

Consultants were randomized into two groups: one that had to do work the standard way and one that got to use GPT-4, the same off-the-shelf vanilla version of the LLM that everyone in 169 countries has access to. We then gave them some AI training and set them loose, with a timer, on eighteen tasks that were designed by BCG to look like the standard job of consultants. There were creative tasks (“Propose at least 10 ideas for a new shoe targeting an underserved market or sport”), analytical tasks (“Segment the footwear industry market based on users”), writing and marketing tasks (“Draft a press release marketing copy for your product”), and persuasiveness tasks (“Pen an inspirational memo to employees detailing why your product would outshine competitors”). We even checked with shoe company executives to ensure that this work was realistic.

The group working with the AI did significantly better than the consultants who were not. We measured the results every way we could — looking at the skill of the consultants or using AI to grade the results as opposed to human graders — but the effect persisted through 118 different analyses. The AI-powered consultants were faster, and their work was considered more creative, better written, and more analytical than that of their peers.

But a more careful look at the data revealed something both more impressive and somewhat worrying. Though the consultants were expected to use AI to help them with their tasks, the AI seemed to be doing much of the work. Most experiment participants were simply pasting in the questions they were asked, and getting very good answers. The same thing happened in the writing experiment done by economists Shakked Noy and Whitney Zhang from MIT: most participants didn’t even bother editing the AI’s output once it was created for them. It is a problem I see repeatedly when people first use AI: They just paste in the exact question they are asked and let the AI answer it. There is danger in working with AIs: danger that we make ourselves redundant, of course, but also danger that we trust AIs too much with our work.

And we saw the danger for ourselves because BCG designed one more task, this one carefully selected to ensure that the AI couldn’t come to a correct answer, one that would be outside the “Jagged Frontier.” This wasn’t easy, because the AI is excellent at a wide range of work, but we identified a task that combined a tricky statistical issue with misleading data. Human consultants got the problem right 84 percent of the time without AI help, but when consultants used the AI, they did worse, getting it right only 60 to 70 percent of the time. What happened?

> “The powerful AI made it likelier that the consultants fell asleep at the wheel and made big errors when it counted.”
>
> Ethan Mollick

In a different paper, Fabrizio Dell’Acqua shows why relying too much on AI can backfire. He found that recruiters who used high-quality AI became lazy, careless, and less skilled in their own judgment. They missed out on some brilliant applicants and made worse decisions than recruiters who used low-quality AI or no AI at all. He hired 181 professional recruiters and gave them a tricky task: to evaluate 44 job applications based on the applicants’ math ability. The data came from an international test of adult skills, so the math scores were not obvious from the résumés. Recruiters were given different levels of AI assistance: some had high-quality AI support, some had low-quality AI support, and some had none. He measured how accurate, how fast, how hardworking, and how confident the recruiters were.

Recruiters with higher-quality AI performed worse than recruiters with lower-quality AI. They spent less time and effort on each résumé, and blindly followed the AI recommendations. They also did not improve over time. On the other hand, recruiters with lower-quality AI were more alert, more critical, and more independent. They improved both their interaction with the AI and their own skills. Dell’Acqua developed a mathematical model to explain the trade-off between AI quality and human effort. When the AI is very good, humans have no reason to work hard and pay attention. They let the AI take over instead of using it as a tool, which can hurt human learning, skill development, and productivity. He called this “falling asleep at the wheel.”

Dell’Acqua’s study points to what happened in our study with the BCG consultants. The powerful AI made it likelier that the consultants fell asleep at the wheel and made big errors when it counted. They misunderstood the shape of the Jagged Frontier.