New AI can identify you by your dancing “fingerprint”

Photo by David Redfern / Staff via Getty Images

- The way we dance to music is so distinctive that a computer can now identify us by our unique dancing “fingerprint” with over 90 percent accuracy.

- The AI had a harder time identifying dancers who were trying to dance to metal and jazz music.

- Researchers say they are interested in what the results of this study reveal about human response to music, rather than potential surveillance uses.

When music comes on, some people are toe-tappers or head-bobbers, others sway their hips, and then there are those who let the rhythm move them to a full-body boogie. Whatever the style, the way we groove to a beat is so distinctive that a computer can now identify us by our unique dancing “fingerprint.”

A recent study has found that the way we move to music is nearly always the same, regardless of genre, so much so that an AI can identify the dancer with over 90 percent accuracy.

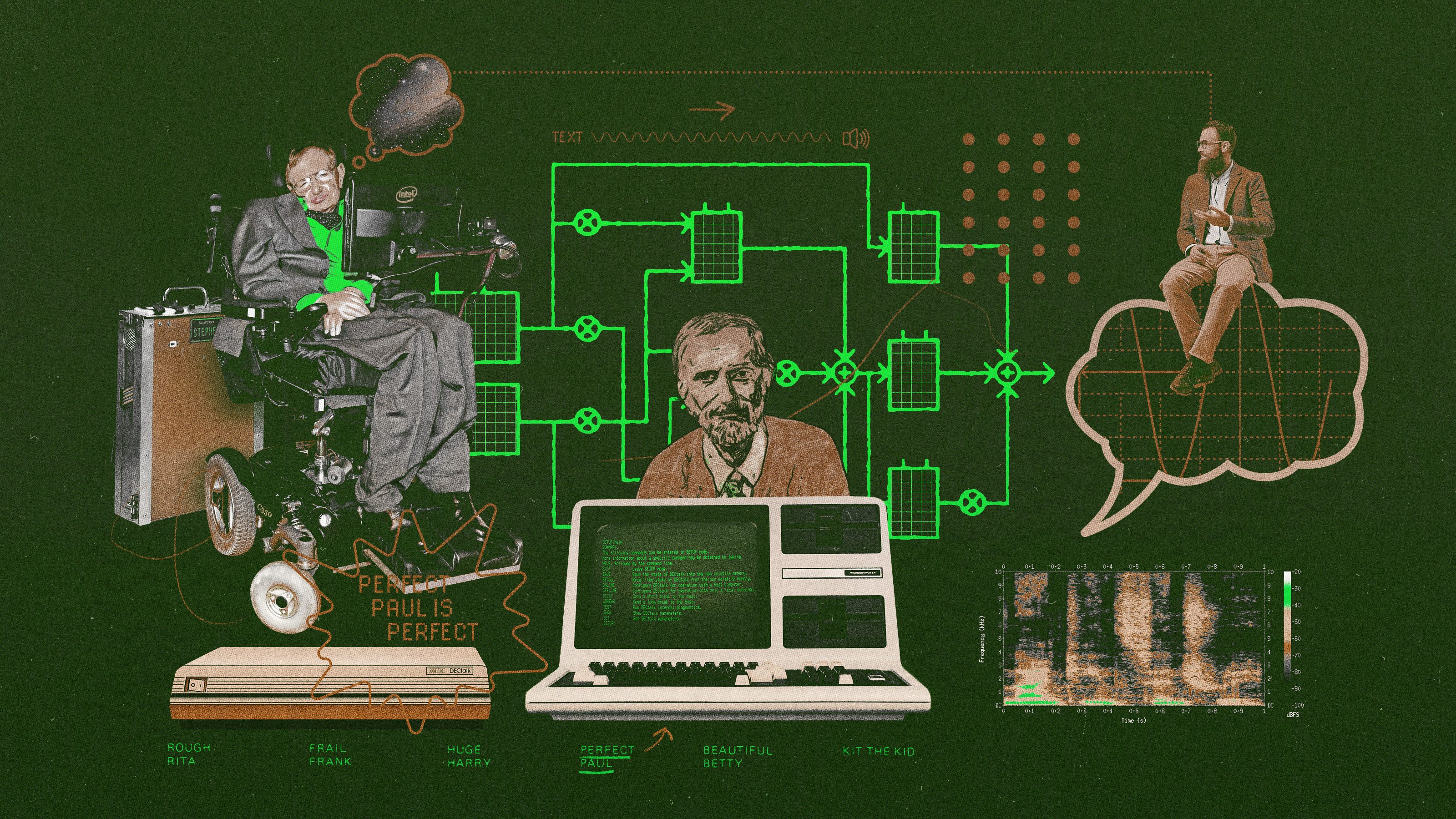

Researchers at the Centre for Interdisciplinary Music Research at Finland’s University of Jyväskylä have been using motion capture technology to study what a person’s dance moves say about his or her mood, personality, and ability to empathize. They recently made a serendipitous discovery while testing whether machine learning, a form of artificial intelligence, could identify which genre of music was playing based on how the study’s participants were dancing.

In their study, published in the Journal of New Music Research, the researchers used motion capture to record 73 participants while they danced to eight different music genres: electronica, jazz, metal, pop, rap, reggae, country, and blues. The only instruction the dancers were given was to move in a way that felt natural. The original objective was a flop: the machine learning algorithm picked the wrong genre over 70 percent of the time.

But what it could do was more surprising. The computer correctly identified which participant was dancing 94 percent of the time, regardless of what kind of music was playing, based on the pattern of the person’s dance style. The movements of participants’ heads, shoulders, and knees were important markers for distinguishing between individuals. Had the computer guessed randomly among the 73 dancers with no other information to go on, its expected accuracy would have been less than 2 percent (1 in 73, or about 1.4 percent).
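To put those numbers side by side, here is a minimal Python sketch of the chance baseline. It is not the researchers' code; the function name `chance_accuracy` is made up for illustration, and the only inputs are the figures quoted in this article (73 dancers, eight genres, 94 percent identification accuracy).

```python
def chance_accuracy(num_classes: int) -> float:
    """Expected accuracy of guessing uniformly at random among num_classes labels."""
    if num_classes <= 0:
        raise ValueError("num_classes must be positive")
    return 1.0 / num_classes


# Figures quoted in the article (not taken from the study's data files).
NUM_DANCERS = 73
NUM_GENRES = 8
REPORTED_DANCER_ACCURACY = 0.94

dancer_baseline = chance_accuracy(NUM_DANCERS)  # 1 in 73
genre_baseline = chance_accuracy(NUM_GENRES)    # 1 in 8

print(f"Chance of naming the right dancer: {dancer_baseline:.2%}")
print(f"Chance of naming the right genre:  {genre_baseline:.2%}")
print(f"Reported dancer accuracy vs. chance: "
      f"{REPORTED_DANCER_ACCURACY / dancer_baseline:.1f}x")
```

Random guessing among 73 dancers is right about 1.4 percent of the time, which is where the "less than 2 percent" figure comes from. By the same arithmetic, random guessing among eight genres would be right 12.5 percent of the time.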

“It seems as though a person’s dance movements are a kind of fingerprint. Each person has a unique movement signature that stays the same no matter what kind of music is playing,” said Pasi Saari, a co-author of the study, in a release.

The researchers noticed that some genres might have more influence on the way an individual dances than others. For instance, the AI had a harder time identifying dancers who were trying to dance to metal and jazz. These aren’t exactly intuitive genres to groove to, so we all tend to go about it using the same types of movements.

“There is a strong cultural association between Metal and certain types of movement, like headbanging,” Emily Carlson, the first author of the study, explained. “It’s probable that Metal caused more dancers to move in similar ways, making it harder to tell them apart.”

It’s possible that dance-recognition software could become something similar to face-recognition software, but it doesn’t seem as practical. For now, researchers say that they are not as interested in possible surveillance uses of this technology, but rather what the results of this study say about how humans respond to music.

“We have a lot of new questions to ask, like whether our movement signatures stay the same across our lifespan, whether we can detect differences between cultures based on these movement signatures, and how well humans are able to recognize individuals from their dance movements compared to computers,” concluded Carlson.

So don’t worry about being identified at a nightclub by an AI via your signature dance moves… yet.