wikipedia

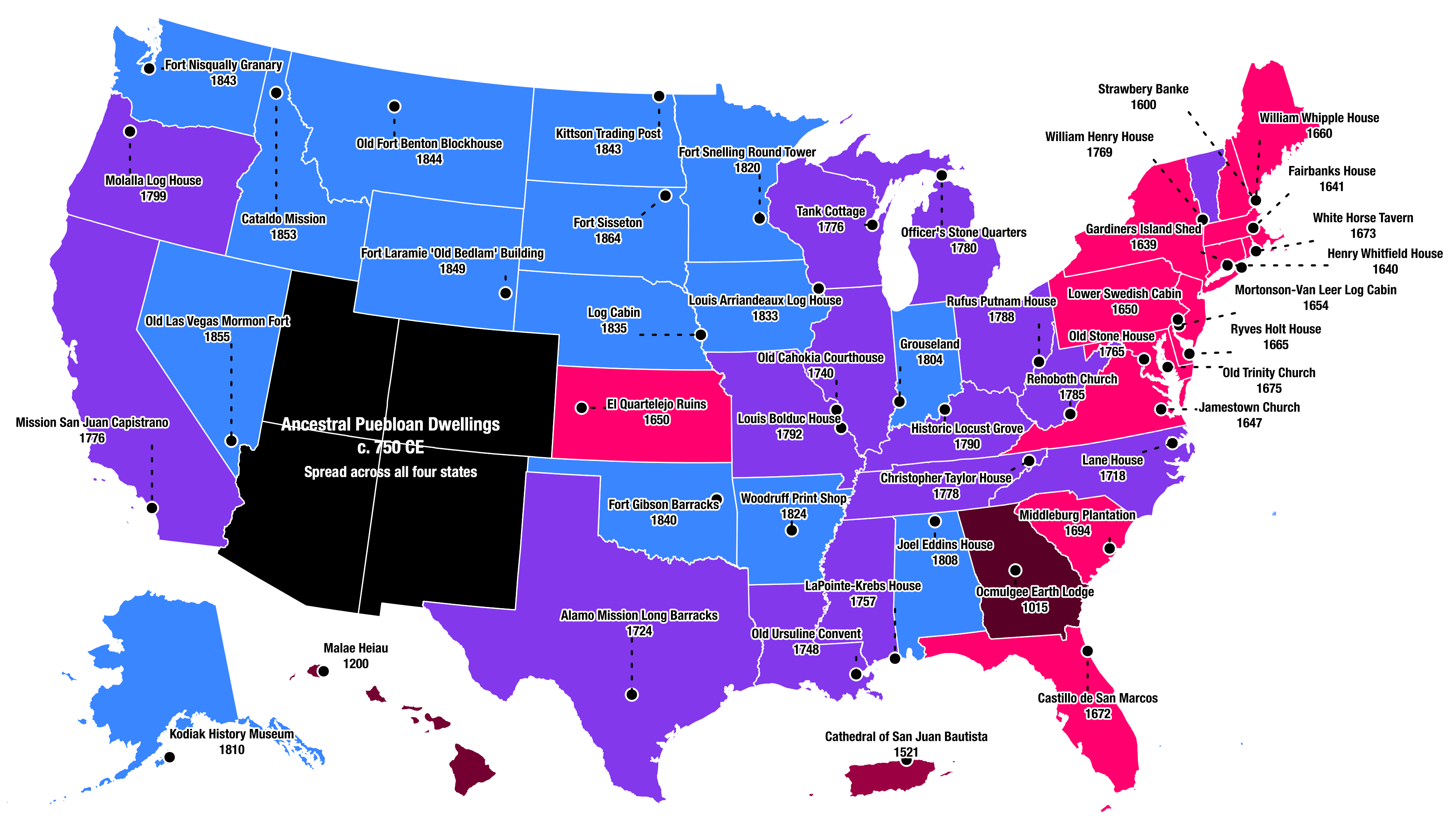

Map shows oldest buildings for each U.S. state – but also hints at what’s missing.

Opinion is more compelling than fact. That’s tearing society apart.

Can algorithms use collective knowledge to make us all internet explorers?

Crowdsourcing as an idea isn’t anything new, says historian and sex researcher Alice Dreger. She tells us about the history of public gathering of information from the medieval era to today.

What information can we trust? Truth isn’t black and white, so here are three requirements every fact should meet.

Users don’t need better media literacy to beat fake news. We need social media to be frank about its commercial interests.
