theory

A thought experiment from 1867 leads scientists to design a groundbreaking information engine.

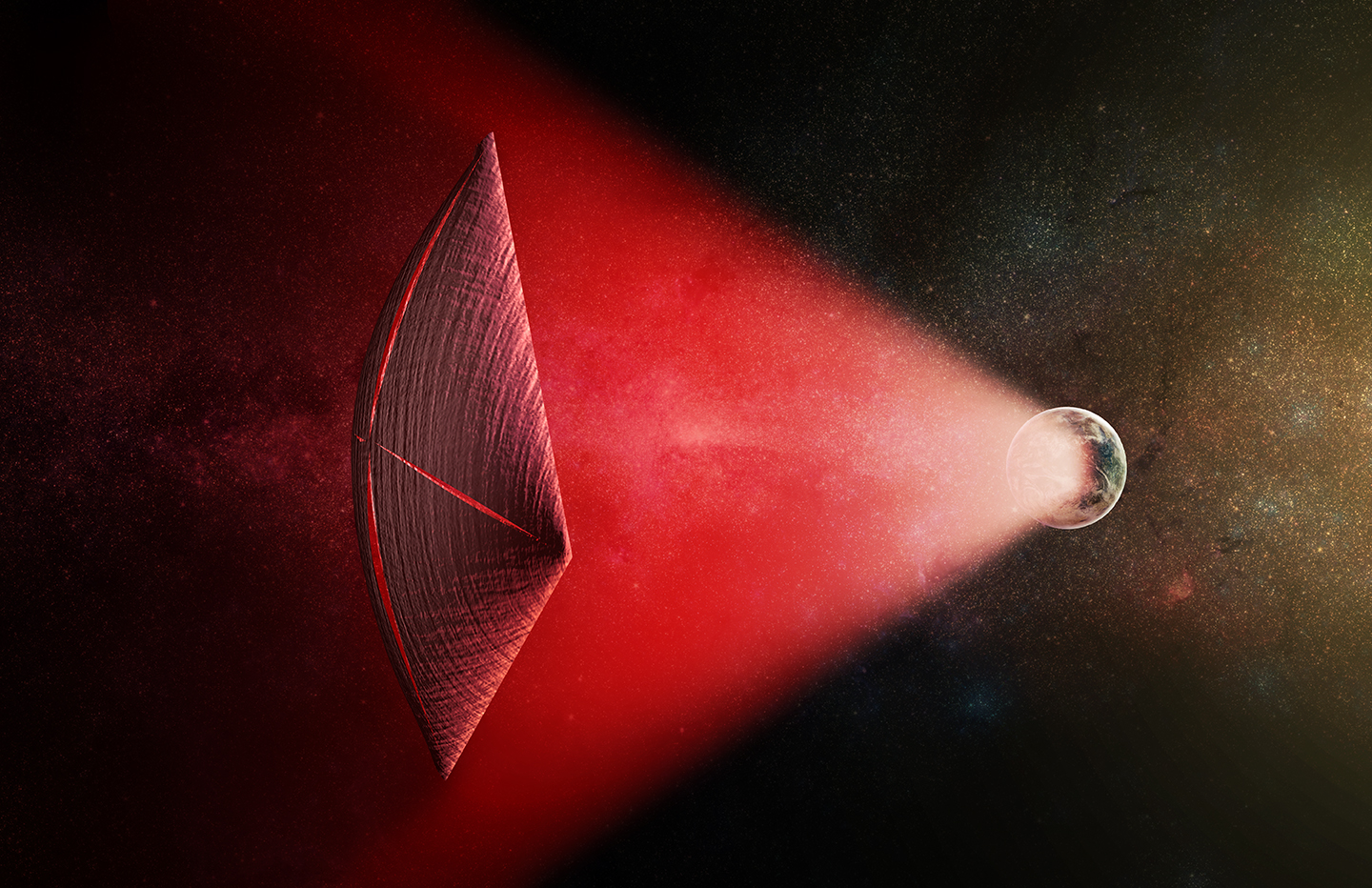

Harvard scientists propose how mysterious Fast Radio Bursts from outer space could actually be powering the spacecraft of an advanced alien civilization.

Physicists find evidence from just after the Big Bang that supports the controversial holographic universe theory.

Physicist Erik Verlinde’s theory successfully predicts the distribution of gravity around 33,000+ galaxies without relying on unobserved “dark matter”.

Climate change is a topic that’s politically charged rather than scientifically charged. Bill Nye offers tips for how those on the side of science can begin to have meaningful conversations with skeptics.
