Social media

For elite climbers, divers, and explorers, mastery can fuel an escalation loop in which identity and danger rise together.

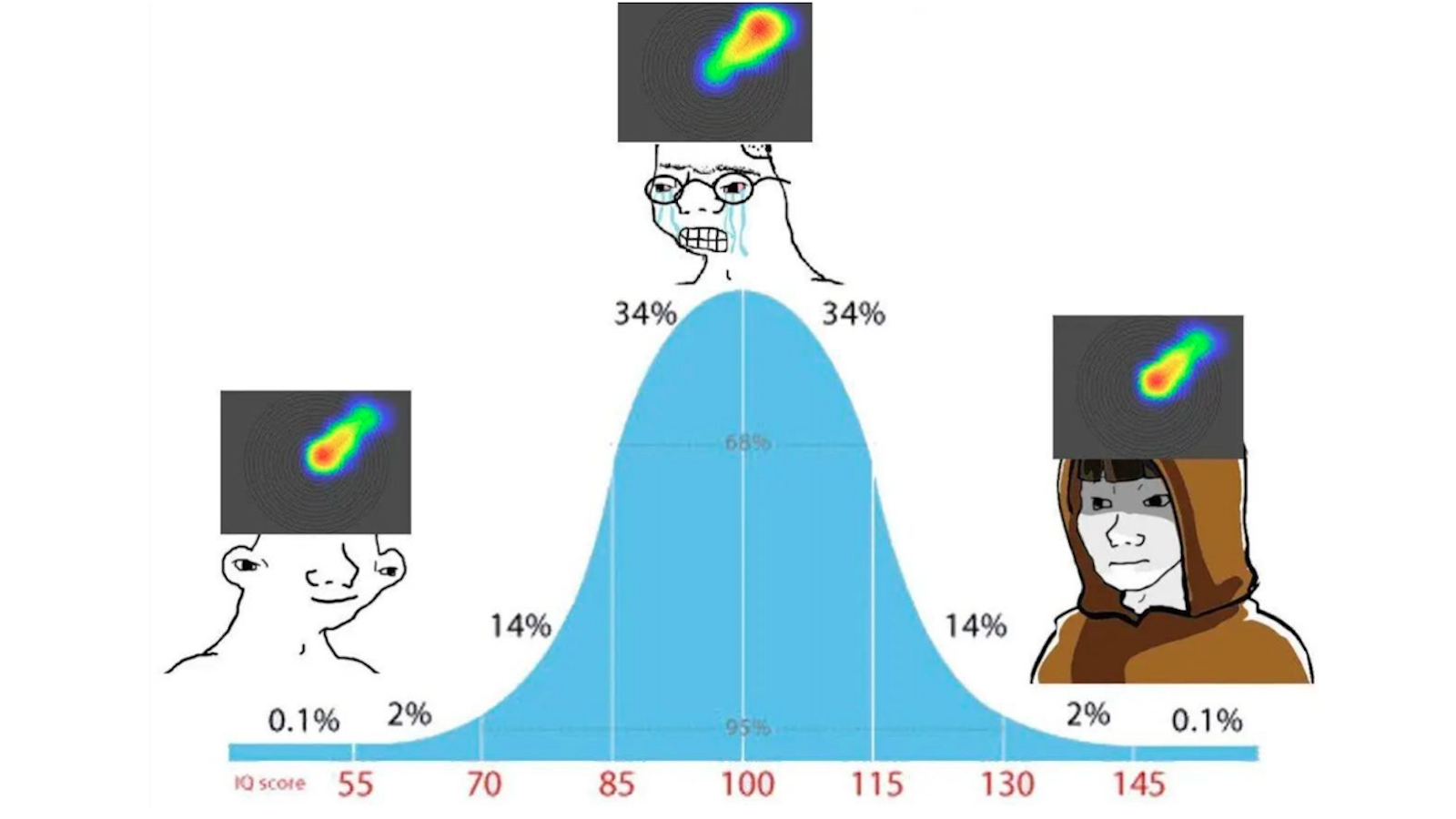

What a 1950s experiment reveals about conformity in the age of the internet.

Before we can build the future, we have to imagine it.

In “That Book Is Dangerous,” author Adam Szetela examines the rise of the “Sensitivity Era” in publishing and how outrage campaigns try to control what books authors can write and readers can read.

Stuck on a hamster wheel of mindless social media scrolling? Neuroscientist Anne-Laure Le Cunff explains how to consciously redirect your reward system.

Leaders may not realize it — they’re not just being watched, they’re being interpreted, filtered, and judged, frame by frame.

You no longer need an army of followers to stand out as a writer — “one great piece is all it takes,” says Perell.

The founder of GenZ Publishing joins Big Think from the infinitely unfurling confluence of print and digital.

Bestselling author Seth Godin urges us to rethink our definition of longevity — and to step back and measure what matters.

A study on the “moral circles” of liberals and conservatives gets drafted into the culture wars — with mixed results.

Mark Weinstein outlines a new path for social media that protects, respects, and empowers regular users.

The digital world will always entail risks for teens, but that doesn’t mean parents are without recourse.

Some news is slow, some news is fast — and there are two simple techniques to help you filter both.

What you can learn about media by parodying it from the print era into the digital age.

TikTok and its allies won’t go down without a legal fight.

Mental health awareness is more widespread than ever. Some professionals think it may have gone overboard — especially on TikTok.

Should social media platforms have the right to decide what speech to allow online? Should the government?

From Hogwarts to hashtags, kids’ reading habits have changed drastically in recent decades — but data suggests cause for hope.

You can learn an awful lot about people, culture, and politics by studying R.

The outrage machine is fueled by toxicity. But there are practical steps that we can take to recapture control over our emotions.

Since 2012, the amount of time that teenagers spend socializing in person has plummeted. Is it a coincidence that depression is more common?

A new online religion is spreading misinformation and phony products.

Self-help gurus for the digital age.

Research shows that spending more time on social media is associated with body image issues in boys and young men.

Telegrams were the “Twitter of the 1850s and 1860s” — and they elicited the exact same overblown fears as Twitter does today.

If you lost your religion, it might be because the internet and social media are having a secularizing effect on American society.

When boredom creeps in, many of us turn to social media. But that may be preventing us from reaching a transformative level of boredom.

People naturally judge fact from fiction in offline social settings, so why is it so hard online?

Brown noise, the better-known white noise, and even pink noise are all sonic hues.