Ray Kurzweil

Elon Musk, Sam Harris, Ray Kurzweil and other visionaries discuss AI superintelligence at a recent conference.

Philosopher and cognitive scientist David Chalmers warns of a future world dominated by AI that lacks consciousness. He spoke at a recent conference on artificial intelligence that also included Elon Musk, Ray Kurzweil, Sam Harris, Demis Hassabis and others.

A recent conference on the future of artificial intelligence features a visionary debate among Elon Musk, Ray Kurzweil, Sam Harris, Nick Bostrom, David Chalmers, Jaan Tallinn and others.

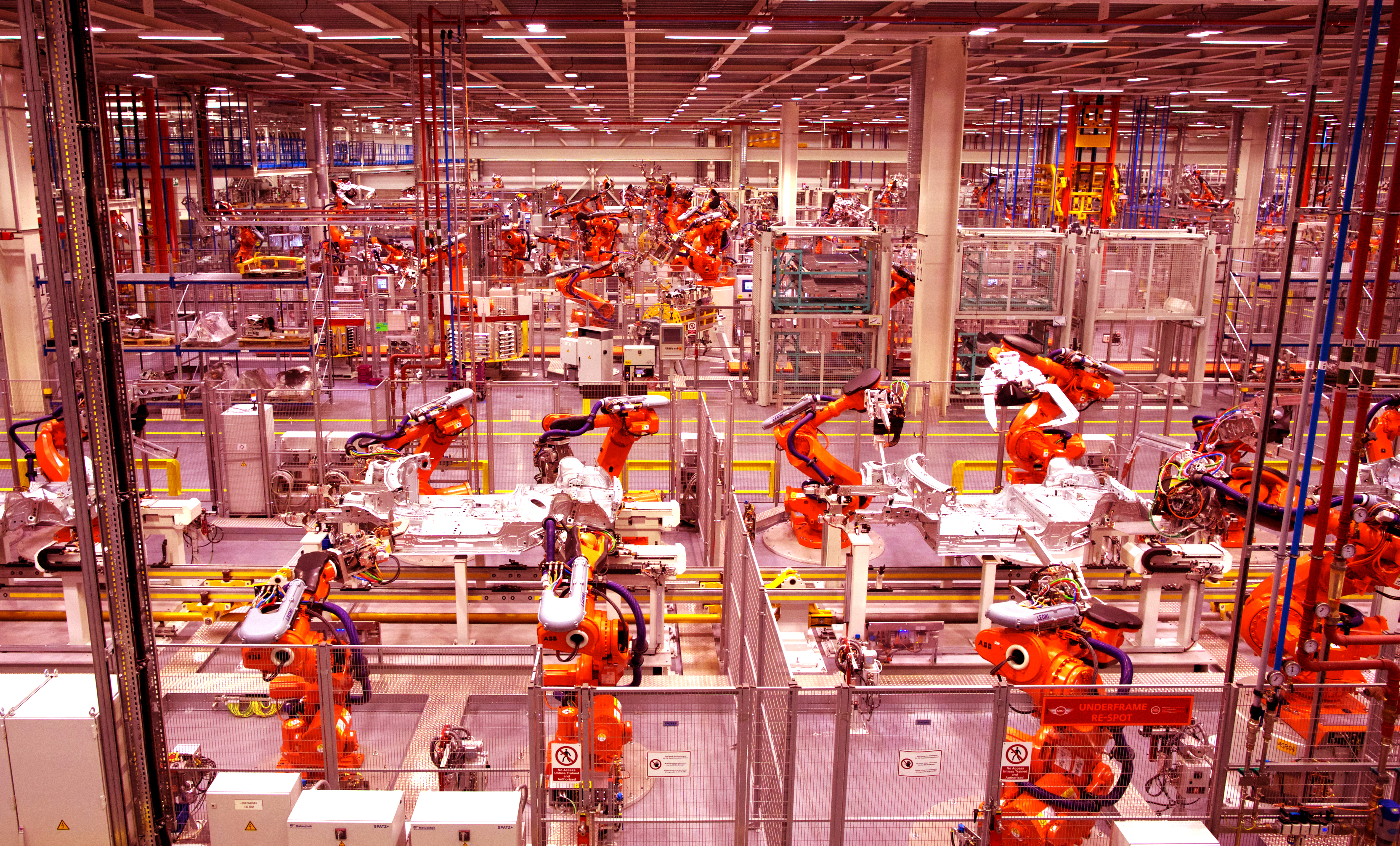

Job automation won’t be as bad as we think, so we need to learn how to stop working and prepare for it, rather than be dragged into the future kicking and screaming.